For years, the ceiling of visual fidelity in modern gaming and professional 3D rendering has been dictated not just by raw processing power, but by the physical limits of video random access memory (VRAM). As textures grow in resolution to accommodate 4K and 8K displays, the amount of memory required to store these assets has scaled aggressively, often leaving users with mid-range hardware struggling to maintain performance.

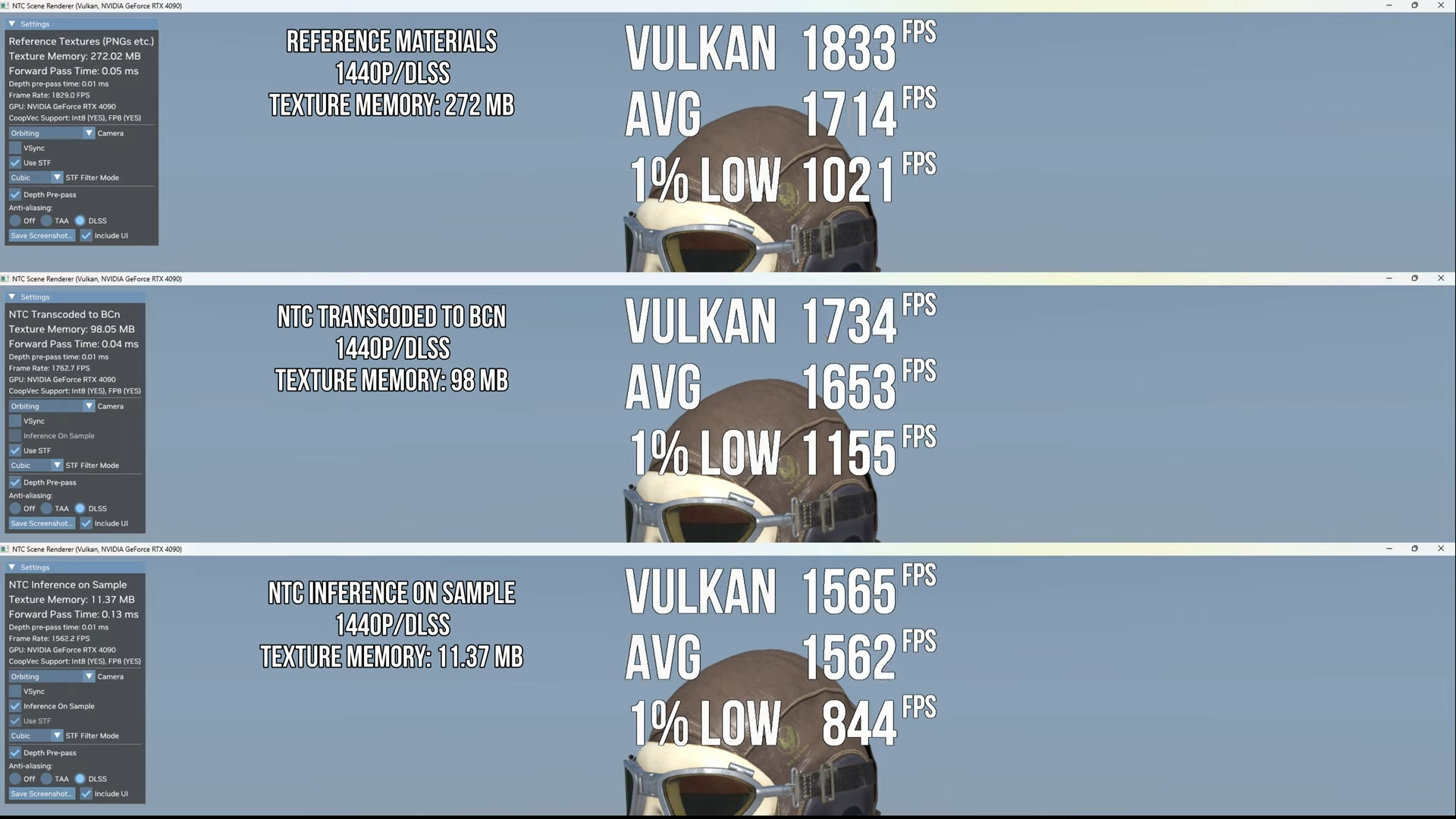

Nvidia is addressing this bottleneck with Neural Texture Compression (NTC), an approach to handling image data that uses artificial intelligence to drastically reduce the VRAM footprint of high-resolution textures. By moving away from traditional, static compression methods and toward a learned, neural-based system, Nvidia aims to let developers build significantly more detailed environments without requiring a matching increase in hardware memory.

The technology represents a fundamental shift in how GPUs handle assets. While traditional compression relies on fixed encoding schemes that often introduce visible artifacts, NTC uses a lightweight neural network to decode textures in real time. This allows for a higher compression ratio while maintaining a level of visual quality that is often indistinguishable from uncompressed source material.

Beyond Block Compression: How NTC Works

To understand the impact of Neural Texture Compression, it is necessary to look at the industry standard: Block Compression (BC). For decades, GPUs have used BC formats to store textures in memory. These methods divide an image into small fixed-size blocks (4×4 texels in the BC family) and store a limited color palette for each block to save space. However, as texture resolutions have climbed, BC has hit a point of diminishing returns, often resulting in “blockiness” or blurring in complex textures.
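To make the fixed-ratio nature of block compression concrete, here is a minimal Python sketch of the memory arithmetic. It relies only on the published block sizes for two common formats (BC1 stores each 4×4 block in 8 bytes, BC7 in 16 bytes) and compares them against plain RGBA8 at 4 bytes per texel. The function names are illustrative, and mip chains are ignored for simplicity.

```python
# Bytes used to store one 4x4 texel block in two common BC formats.
BLOCK_BYTES = {"BC1": 8, "BC7": 16}


def uncompressed_bytes(width, height, bytes_per_texel=4):
    """Size of a single mip level stored as plain RGBA8."""
    return width * height * bytes_per_texel


def bc_bytes(width, height, fmt):
    """Size of a single mip level stored in a block-compressed format."""
    blocks_x = (width + 3) // 4  # round up to whole 4x4 blocks
    blocks_y = (height + 3) // 4
    return blocks_x * blocks_y * BLOCK_BYTES[fmt]


def to_mib(num_bytes):
    return num_bytes / (1024 * 1024)


if __name__ == "__main__":
    width = height = 4096
    raw = uncompressed_bytes(width, height)
    print(f"RGBA8: {to_mib(raw):.0f} MiB")
    for fmt in ("BC1", "BC7"):
        size = bc_bytes(width, height, fmt)
        print(f"{fmt}: {to_mib(size):.0f} MiB ({raw // size}:1)")
```

Because the ratio is baked into the format, a BC texture costs the same amount of memory no matter how simple or complex its content is.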

NTC replaces these rigid blocks with a learned representation. Instead of storing the texture as a series of fixed-size blocks, Nvidia’s system trains a neural network to represent the texture data more efficiently. When the GPU needs to render a surface, it uses the hardware’s Tensor Cores to decode the neural representation back into a high-resolution image on the fly.
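The general idea of decoding a learned representation can be sketched in a few lines of Python. This is a toy illustration of the data flow only, not Nvidia's architecture: the grid size, channel count, and layer width are hypothetical, the weights are random rather than trained, and everything runs on the CPU instead of Tensor Cores. It shows a low-resolution grid of latent vectors plus a tiny network that turns one latent vector into an RGB value.

```python
import math
import random

# All sizes below are hypothetical, chosen only to keep the example small.
LATENT_CHANNELS = 4  # features stored per latent grid cell
HIDDEN_UNITS = 8     # width of the decoder's single hidden layer
GRID_SIZE = 16       # latent grid is GRID_SIZE x GRID_SIZE


def make_layer(n_in, n_out, rng):
    """Random weights stand in for values a real encoder would learn offline."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(inputs, layer, activation):
    """One fully connected layer: activation(W @ inputs + b)."""
    weights, biases = layer
    return [activation(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def relu(value):
    return max(0.0, value)


def sigmoid(value):
    return 1.0 / (1.0 + math.exp(-value))


def build_toy_texture(seed=0):
    """Create the 'compressed' texture: a latent grid plus decoder weights."""
    rng = random.Random(seed)
    latents = [[[rng.uniform(-1.0, 1.0) for _ in range(LATENT_CHANNELS)]
                for _ in range(GRID_SIZE)] for _ in range(GRID_SIZE)]
    layers = [make_layer(LATENT_CHANNELS, HIDDEN_UNITS, rng),
              make_layer(HIDDEN_UNITS, 3, rng)]
    return latents, layers


def decode_texel(latents, layers, u, v):
    """Fetch the nearest latent vector for (u, v) in [0, 1], then run the network."""
    rows, cols = len(latents), len(latents[0])
    row = min(int(v * rows), rows - 1)
    col = min(int(u * cols), cols - 1)
    hidden = dense(latents[row][col], layers[0], relu)
    return dense(hidden, layers[1], sigmoid)  # RGB, each channel in [0, 1]


if __name__ == "__main__":
    latents, layers = build_toy_texture()
    print(decode_texel(latents, layers, 0.25, 0.75))
```

In a real system the latent grid and the weights would be optimized together against the source texture, so that the decoded output matches it as closely as possible. Here they are random, which means the output colors are meaningless and only the decode path is representative.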

Because decompression runs on the Tensor Cores, the same hardware responsible for DLSS (Deep Learning Super Sampling), the process is highly optimized. The GPU can pull a much smaller compressed version of the texture from VRAM and expand it on demand, effectively multiplying the perceived capacity of the available memory.

Comparing Traditional vs. Neural Compression

The primary advantage of NTC is the ability to maintain high-frequency details (the fine grains of sand, the pores of skin, or the weave of fabric) that are typically lost during aggressive traditional compression. By using a neural decoder, the system can reconstruct these details more accurately than a standard block-based algorithm.

| Feature | Traditional Block Compression (BC) | Neural Texture Compression (NTC) |
|---|---|---|
| Compression Method | Fixed mathematical blocks | Learned neural representations |
| Hardware Requirement | Fixed-function texture units (any modern GPU) | Tensor Cores (RTX Architecture) |
| VRAM Efficiency | Moderate; fixed ratio per format | High; significant footprint reduction |
| Visual Fidelity | Prone to blocky artifacts | High fidelity; preserves fine detail |

The Impact on Game Development and Hardware

For developers, the constraints of VRAM are a constant struggle. To ensure a game runs on a wide variety of hardware, artists often have to “down-res” textures or use aggressive mipmapping, which can lead to a noticeable loss of quality on high-end machines. Neural Texture Compression provides a path toward “future-proofing” assets.

With NTC, developers can potentially ship textures with much higher native resolutions, knowing that the neural representation keeps the memory footprint manageable. This reduces the need for multiple versions of the same asset for different hardware tiers, streamlining the production pipeline.

For the end user, this technology is particularly beneficial for those using GPUs with 8GB or 12GB of VRAM. As modern titles increasingly demand 16GB or more for “Ultra” settings, NTC could bridge the gap, allowing mid-range cards to handle high-resolution texture packs that would otherwise cause stuttering or crashes due to memory overflow.
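A rough budget calculation shows what "bridging the gap" would mean in practice. The Python sketch below uses entirely hypothetical figures (an 8 GiB card, 3 GiB reserved for non-texture data, a 10 GiB texture set) and treats the extra compression ratio as a free parameter, since no specific ratio is assumed here. The function names are illustrative.

```python
def min_ratio_to_fit(vram_gib, non_texture_gib, texture_set_gib):
    """Smallest extra compression ratio at which the texture set fits in VRAM."""
    available = vram_gib - non_texture_gib
    if available <= 0:
        raise ValueError("no VRAM left for textures")
    return max(1.0, texture_set_gib / available)


def texture_budget_fits(vram_gib, non_texture_gib, texture_set_gib, extra_ratio):
    """Return (fits, compressed_size_gib) for a given extra compression ratio."""
    compressed = texture_set_gib / extra_ratio
    available = vram_gib - non_texture_gib
    return compressed <= available, compressed


if __name__ == "__main__":
    # Hypothetical scenario: none of these numbers come from a real game or GPU.
    vram, other, textures = 8.0, 3.0, 10.0
    needed = min_ratio_to_fit(vram, other, textures)
    print(f"Extra compression needed: {needed:.1f}x")
    print(texture_budget_fits(vram, other, textures, needed))
```

In this made-up scenario, halving the texture footprint is enough to bring a texture set that overflows the card back within budget.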

Integration Within the Nvidia Ecosystem

NTC does not exist in a vacuum; it is part of a broader strategy by Nvidia to move the entire rendering pipeline toward AI-driven solutions. Just as DLSS uses AI to upscale resolution and Frame Generation uses AI to insert frames, NTC uses AI to manage memory.

This shift suggests that the future of graphics is less about “brute-forcing” pixels and more about “intelligent reconstruction.” By offloading texture decoding to the Tensor Cores, Nvidia is maximizing the utility of the silicon already present in RTX GPUs.

However, some constraints remain. Because NTC relies on Tensor Cores, the technology is exclusive to the RTX line of graphics cards. Users on older GTX hardware or competing architectures will not be able to leverage these specific memory savings, potentially widening the gap between AI-accelerated hardware and traditional GPUs.

Looking Ahead: Implementation and Adoption

While the technical foundation for Neural Texture Compression has been unveiled through Nvidia’s research and developer communications, the widespread adoption of the technology depends on integration into game engines like Unreal Engine 5 and Unity. Once these engines incorporate NTC into their standard pipelines, adoption should require far less custom work from developers.

The next confirmed step for this technology involves further optimization of the decoder to ensure that the computational cost of decompression does not offset the performance gains from reduced VRAM usage. Nvidia is expected to provide more detailed implementation guides and SDK updates for developers in the coming cycles to facilitate the rollout of NTC in upcoming AAA titles.
