The arrival of generative artificial intelligence has created a profound tension within the halls of academia. While students and faculty are integrating large language models into their daily workflows with startling speed, the structural frameworks of universities—curricula, grading rubrics, and ethical guidelines—are struggling to keep pace.

This widening AI gap in higher education is more than a matter of academic integrity or the fear of plagiarism. It represents a systemic lag between the capabilities of available technology and the pedagogical strategies used to prepare students for a workforce where AI proficiency is rapidly becoming a baseline requirement rather than a specialized skill.

For many institutions, the initial reaction was one of containment. From banning ChatGPT to deploying detection software, the instinct was to protect the traditional essay. However, as these tools become embedded in professional software and industry standards, the risk has shifted. The danger is no longer just that students might cheat, but that they may graduate without the critical AI literacy needed to navigate a transformed economy.

As a physician and medical writer, I have seen this pattern before in clinical settings: technology often arrives in the field long before the formal training manuals are rewritten. In medicine, the gap between a new diagnostic tool and its integration into medical school curricula can leave practitioners reliant on intuition rather than evidence-based application. The current crisis in higher education mirrors this, requiring a shift from policing tools to mastering them.

The regulatory lag and the ethical vacuum

The pace of AI development is currently outstripping the ability of governments and institutions to create guardrails. This creates an ethical vacuum in which students and professors are essentially beta-testing the boundaries of academic honesty and intellectual property in real time.

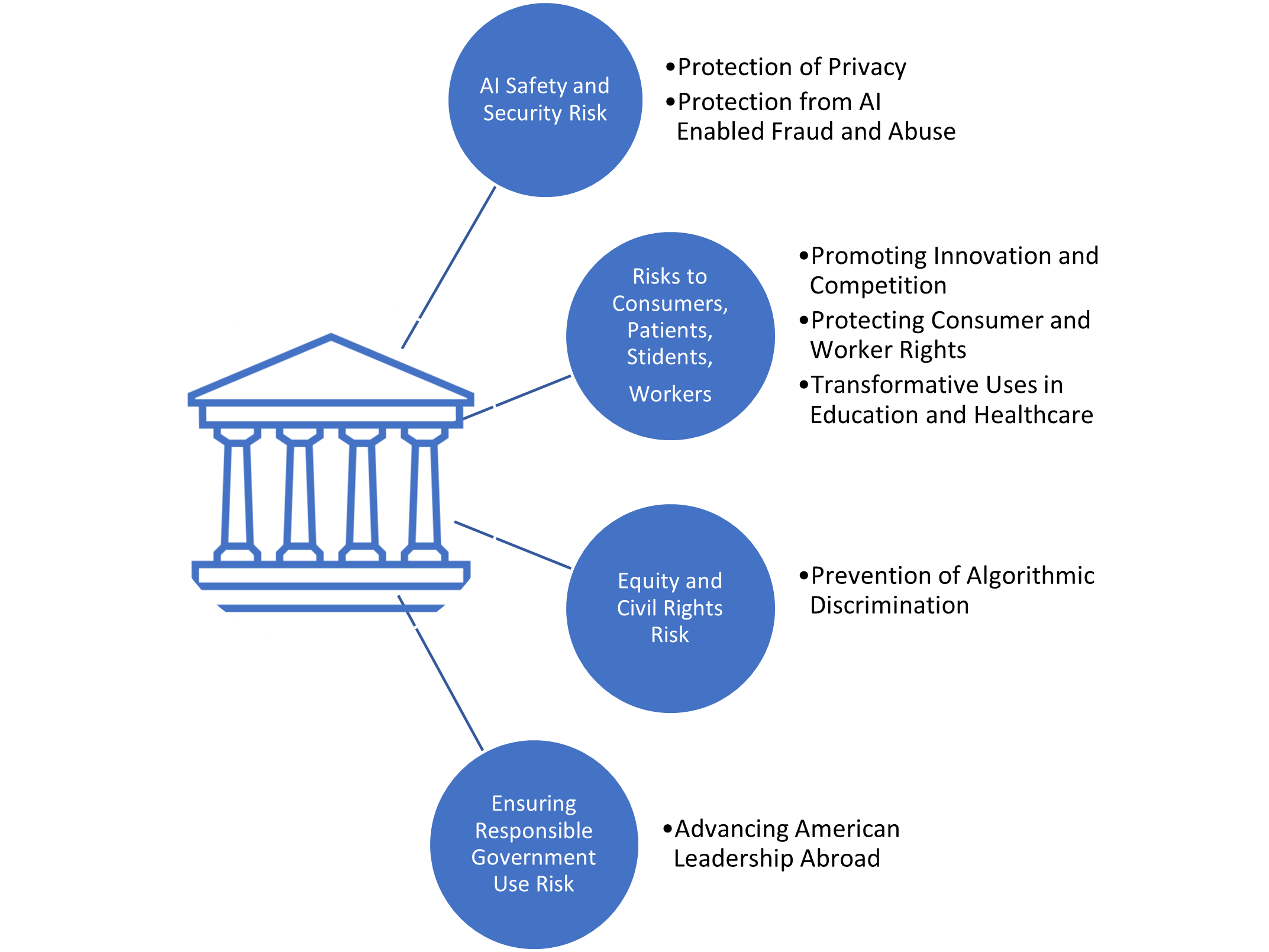

On a global scale, regulatory efforts are attempting to catch up. The European Union AI Act, the world’s first comprehensive AI law, seeks to categorize AI systems by risk level and mandate transparency. Similarly, in the United States, the Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence emphasizes safety and security standards. However, these high-level mandates rarely translate into immediate, actionable policies for a professor managing a 200-person lecture hall.

The challenge for universities is to develop internal ethical frameworks that encourage exploration without sacrificing rigor. This involves moving toward “AI-augmented” assignments where the process of prompting, refining, and fact-checking an AI output is graded more heavily than the final product itself.

Redefining literacy for the modern workforce

Bridging the gap requires a fundamental shift in how we define “literacy.” In the previous decade, digital literacy meant knowing how to use a word processor or conduct a Google search. Today, it encompasses prompt engineering, the ability to identify algorithmic bias, and the skill of checking AI-generated output against primary sources to catch hallucinations.

The World Economic Forum’s Future of Jobs Report 2023 indicates that AI and big data are among the highest priorities for skills training through 2027. When students enter the workforce, they will not be asked if they can write a five-paragraph essay without assistance; they will be asked if they can use AI to synthesize vast amounts of data while maintaining human oversight and ethical judgment.

To meet this demand, institutions are beginning to experiment with curriculum redesign. This includes:

- Interdisciplinary AI modules: Integrating AI ethics and application into non-technical degrees, such as philosophy, history, and nursing.

- Oral examinations and in-person assessments: Returning to traditional methods to verify foundational knowledge before allowing AI assistance in higher-order synthesis.

- Collaborative AI projects: Assigning students to “co-author” papers with AI, requiring them to provide a detailed audit trail of how the AI was used and where it was corrected.

The risk of a new digital divide

While the conversation often focuses on cheating, there is a more insidious risk: the creation of a tiered educational system based on AI access. As the most powerful models move behind expensive paywalls, students with the financial means to afford “Pro” subscriptions gain a significant cognitive advantage over those using free, less capable versions.

This equity gap threatens to exacerbate existing disparities in higher education. If the ability to synthesize research or draft complex code is tied to a monthly subscription fee, the “AI gap” becomes a socioeconomic barrier. Universities must consider providing institutional access to high-tier AI tools, treating them as essential infrastructure similar to library databases or high-speed campus Wi-Fi.

| Approach | Primary Goal | Key Risk | Outcome |
|---|---|---|---|
| Restrictive | Maintain traditional rigor | Student alienation/shadow use | Outdated skill sets |
| Permissive | Rapid adoption | Loss of critical thinking | Superficial learning |
| Integrated | AI literacy | High faculty workload | Workforce readiness |

Moving toward a sustainable model

The path forward is not found in a return to the pre-AI era, nor in an unconditional surrender to automation. Instead, it requires a “human-in-the-loop” philosophy. In medical education, for example, AI can analyze a thousand X-rays in seconds, but the physician must be the one to correlate those findings with the patient’s physical symptoms and personal history. The same logic applies to the humanities and social sciences.

The goal of higher education should be to teach students how to be the “editor-in-chief” of their own work. That means strengthening the teaching of critical thinking and skepticism. When a student can confidently tell an AI “this is wrong” because they possess the foundational knowledge to recognize a hallucination, the gap has been successfully bridged.

The immediate next step for many institutions will be the implementation of formal AI policies ahead of the next academic cycle. Many universities are currently forming task forces to determine whether AI use should be disclosed via a mandatory “AI Statement” on all submitted work, a move that would mirror the citations used for traditional research.

Disclaimer: This article is provided for informational purposes and does not constitute academic or legal advice.

We want to hear from you. How is your institution handling the integration of AI, and where do you see the biggest gaps in current training? Share your thoughts in the comments below.