As generative artificial intelligence continues to permeate the healthcare sector, a growing number of U.S. states are moving to establish guardrails around the use of AI-driven medical tools. The push to regulate healthcare chatbots reflects a widening gap between the rapid deployment of large language models (LLMs) and the slower pace of federal oversight, leaving state legislatures to grapple with the risks of algorithmic “hallucinations” and the potential for unauthorized medical practice.

The core of the tension lies in the distinction between a tool that provides general health information and one that offers a clinical diagnosis. While the U.S. Food and Drug Administration (FDA) regulates software as a medical device (SaMD), many AI chatbots operate in a gray area, offering advice that can feel diagnostic to a patient but lacks the clinical validation required for medical software.

For physicians, the concern is not just accuracy but the erosion of the patient-provider relationship. When a patient arrives at a clinic with a set of symptoms “diagnosed” by a chatbot, it creates a new clinical challenge: the physician must not only treat the patient but also correct any inaccurate information the AI provided, often without knowing which model the patient used or what prompts led to the output.

From a public health perspective, the stakes are high. Inaccurate AI-generated medical advice can lead to delayed care for critical conditions or the misuse of medications. Because these tools are often marketed as “wellness” or “productivity” assistants rather than medical devices, they have historically bypassed the rigorous clinical trials necessary to ensure patient safety.

The Shift Toward State-Level Oversight

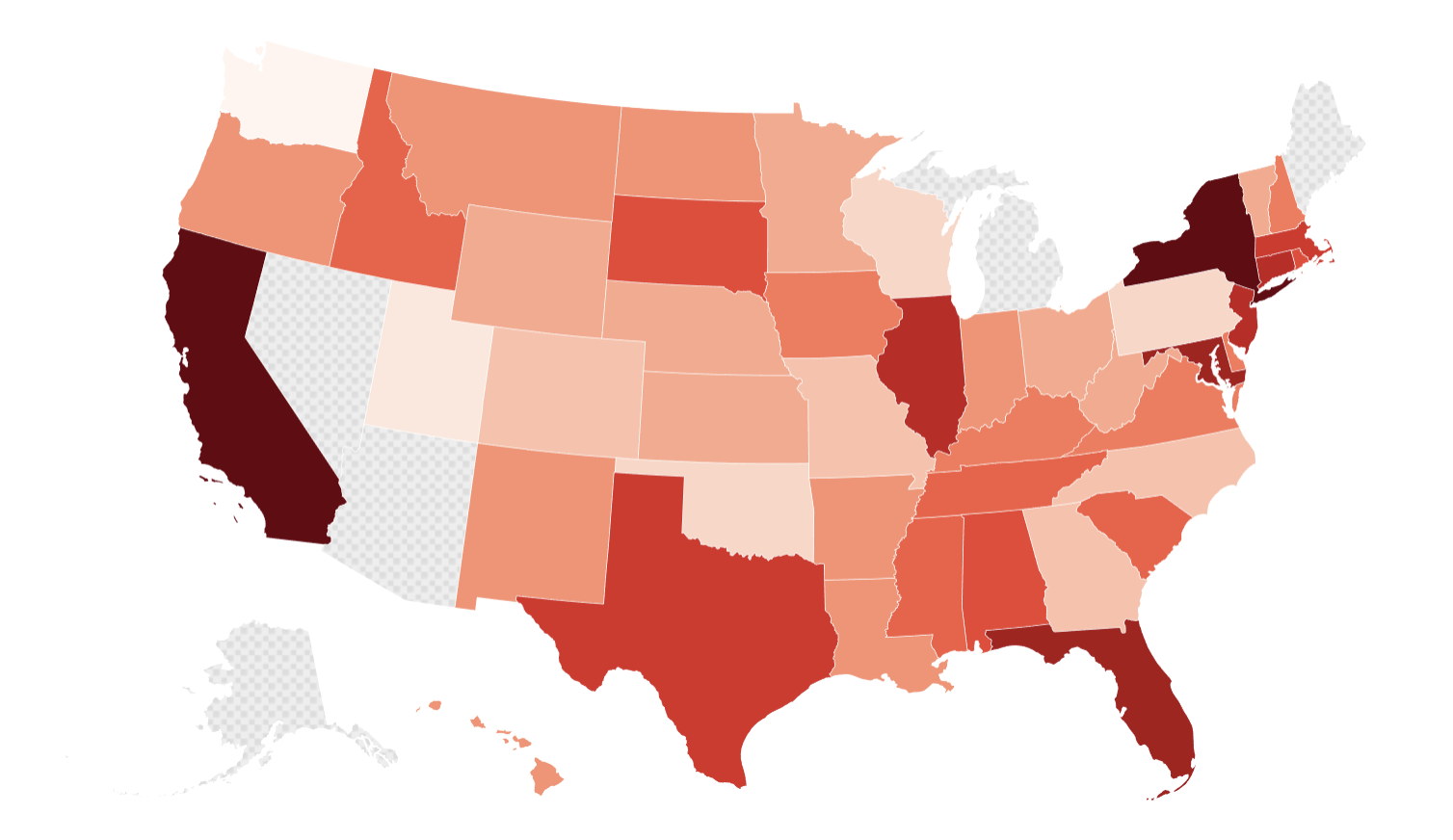

In the absence of a comprehensive federal AI law, states are increasingly treating AI health tools as a matter of consumer protection and professional licensing. Legislators are examining how to redefine “medical advice” in the digital age to ensure that chatbots do not inadvertently practice medicine without a license.

The primary focus for many state regulators is transparency. There is a growing demand for “clear and conspicuous” disclosures, ensuring that users are aware they are interacting with an algorithm and not a licensed professional. States are also exploring liability frameworks to determine who is responsible when an AI chatbot provides harmful advice: the developer who built the model, the healthcare organization that deployed it, or the user who followed the guidance.

This regulatory movement coincides with a broader effort to protect patient privacy. While the Health Insurance Portability and Accountability Act (HIPAA) governs how covered entities handle protected health information, many consumer-facing chatbots fall outside these protections, leading to concerns that sensitive health data is being used to train commercial AI models without explicit patient consent.

Key Regulatory Priorities and Risks

The effort to regulate healthcare chatbots centers on several critical failure points inherent in current AI technology:

- Algorithmic Hallucinations: The tendency of LLMs to generate confident but entirely fabricated medical citations or treatment protocols.

- Bias and Equity: The risk that AI models trained on non-representative datasets may provide less accurate or biased health recommendations for marginalized populations.

- Licensing Violations: The legal ambiguity of whether an AI providing a specific treatment plan constitutes the “unauthorized practice of medicine.”

- Data Sovereignty: The question of whether health data entered into a chatbot remains the property of the patient or becomes part of a corporate training set.

The Impact on Clinical Practice and Innovation

For the medical community, the integration of AI is a double-edged sword. On one hand, AI can drastically reduce the administrative burden on clinicians by automating documentation and summarizing patient histories. On the other, the proliferation of patient-facing chatbots can complicate the triage process.

Medical professionals are advocating for a “human-in-the-loop” requirement, where AI is used to support clinical decision-making rather than replace it. This approach ensures that a board-certified physician remains the final authority on any diagnosis or prescription, maintaining the standard of care and legal accountability.

Industry stakeholders, however, warn that overly restrictive state laws could stifle innovation. They argue that a patchwork of 50 different state regulations would make it nearly impossible to deploy scalable health tech solutions across the country. This has led to calls for a unified federal framework that balances safety with the need for rapid technological evolution.

Comparison of Regulatory Approaches

| Approach | Primary Goal | Mechanism | Key Challenge |
|---|---|---|---|
| Federal (FDA) | Patient Safety | Pre-market clearance/approval | Slow approval cycles |
| State Consumer Law | Fraud Prevention | Disclosure and transparency rules | Inconsistent across states |
| Medical Boards | Professional Integrity | Licensing and ethics enforcement | Hard to track anonymous AI use |

Navigating the Path Forward

The trajectory of AI in healthcare will likely be defined by how regulators handle the “black box” problem—the fact that even the creators of these models cannot always explain why a specific output was generated. For a medical tool to be truly safe, it must be explainable and reproducible, a standard that current generative AI often fails to meet.

As states continue to draft legislation, the focus is shifting toward “algorithmic auditing,” where third-party experts review the training data and performance of a health chatbot before it is allowed to be marketed to the public. This would mirror the clinical trial process, providing a layer of verification that protects the patient without completely halting the development of the technology.

The immediate future of these regulations will likely hinge on upcoming legislative sessions and the potential for a federal “AI Bill of Rights” or similar framework that could preempt conflicting state laws. Until then, the responsibility remains with the consumer to verify AI-generated health information with a licensed provider.

Disclaimer: This article is for informational purposes only and does not constitute medical or legal advice. Always seek the advice of your physician or other qualified health provider with any questions you may have regarding a medical condition.

The next critical checkpoint for these regulations will be the introduction and debate of AI-specific health bills in state legislatures during the 2025 session, where several states are expected to propose formal mandates for AI transparency in clinical settings.
