Intel and SambaNova have jointly announced a production-ready heterogeneous AI inference platform designed to break with the “one-size-fits-all” approach to large language model (LLM) deployment. By splitting AI inference across three different types of silicon, the two companies aim to create a more efficient, scalable alternative to the hardware stacks currently dominated by Nvidia.

In a traditional AI setup, a single type of accelerator typically handles every stage of a request. However, the computational demands of an AI response change drastically between the moment a user hits “enter” and the moment the final word is generated. The new architecture addresses this by assigning each workload to the hardware best suited for it: AI GPUs or accelerators handle the initial “prefill” stage, SambaNova’s SN50 Reconfigurable Dataflow Units (RDUs) manage the “decode” phase, and Intel Xeon 6 processors orchestrate the system and run agentic tools.

This division of labor is a direct attempt to win market share from Nvidia and emerging AI chipmakers by easing the memory-bandwidth and compute-efficiency bottlenecks of single-accelerator designs. The solution is scheduled to be available to enterprises, cloud operators, and sovereign AI programs in the second half of 2026.

Solving the prefill and decode bottleneck

To understand why this partnership matters, it helps to look at how LLMs actually work under the hood. When you send a long prompt to an AI model, the system goes through two primary phases: prefill and decode. These stages have fundamentally different hardware requirements, which is why a heterogeneous inference platform can, in theory, be more efficient than a monolithic one.

The prefill stage is compute-bound. The system must ingest the entire input prompt and build a “key-value (KV) cache,” which stores intermediate attention data for every token so the model can keep track of the conversation’s context without recomputing it. Because all prompt tokens can be processed in parallel, this stage rewards massive raw throughput, a task where high-end AI GPUs and accelerators excel. Once prefill is complete, the system shifts to the decode stage, where it generates tokens (words or word fragments) one by one.
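To put the KV cache in concrete terms, its size grows linearly with prompt length. The sketch below applies the standard sizing formula (two tensors, keys and values, per layer, per KV head, per token). The `ModelShape` dimensions used here are hypothetical and do not describe any model or hardware mentioned in the announcement.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    """Hypothetical transformer dimensions (not any specific production model)."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_elem: int  # e.g. 2 for FP16/BF16


def kv_cache_bytes(shape: ModelShape, seq_len: int, batch: int = 1) -> int:
    """Bytes needed to hold keys and values for every layer and token.

    The leading factor of 2 accounts for storing both a key vector and a
    value vector per KV head, per layer, per token.
    """
    per_token = (2 * shape.num_layers * shape.num_kv_heads
                 * shape.head_dim * shape.bytes_per_elem)
    return per_token * seq_len * batch


if __name__ == "__main__":
    # Illustrative dimensions only.
    shape = ModelShape(num_layers=32, num_kv_heads=8, head_dim=128, bytes_per_elem=2)
    size = kv_cache_bytes(shape, seq_len=32_768)
    print(f"KV cache for one 32k-token prompt: {size / 2**30:.1f} GiB")
```

With these assumed dimensions each token costs 128 KiB, so a 32,768-token prompt needs 4 GiB of cache. All of it is written during prefill and then read again on every decode step.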

Decoding is memory-bandwidth bound. Each decoding step requires reading the model’s weights and the growing KV cache from memory while performing comparatively little arithmetic, so the bottleneck isn’t how fast the chip can calculate but how quickly it can move data from memory to the processor. This is where SambaNova’s SN50 RDUs come in. Unlike traditional GPUs, RDUs are designed to optimize dataflow, allowing for faster token generation and more efficient handling of the KV cache, which reduces the latency users experience during long responses.
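A back-of-the-envelope calculation shows why decode is limited by memory rather than arithmetic. This sketch assumes batch size 1, that every weight and the KV cache are read once per generated token, and roughly 2 FLOPs per parameter per token. The parameter count, bandwidth, and peak-FLOPs figures are illustrative placeholders, not specifications of the SN50 or any GPU.

```python
def decode_step_times(params: float, bytes_per_param: float, kv_bytes: float,
                      mem_bandwidth: float, peak_flops: float) -> tuple[float, float]:
    """Estimate (memory_time_s, compute_time_s) for one decode step at batch size 1.

    Memory: all weights plus the KV cache are streamed from memory once per token.
    Compute: about 2 FLOPs (one multiply, one add) per parameter per token.
    Attention FLOPs and compute/memory overlap are ignored for simplicity.
    """
    memory_time = (params * bytes_per_param + kv_bytes) / mem_bandwidth
    compute_time = (2 * params) / peak_flops
    return memory_time, compute_time


def max_tokens_per_second(memory_time: float, compute_time: float) -> float:
    """The slower of the two limits caps single-stream generation speed."""
    return 1.0 / max(memory_time, compute_time)


if __name__ == "__main__":
    # Illustrative placeholders: 8B parameters in 16-bit precision,
    # 2 TB/s of memory bandwidth, 500 TFLOP/s of peak compute.
    mem_t, cmp_t = decode_step_times(params=8e9, bytes_per_param=2, kv_bytes=0,
                                     mem_bandwidth=2e12, peak_flops=500e12)
    print(f"memory: {mem_t * 1e3:.2f} ms, compute: {cmp_t * 1e3:.3f} ms")
    print(f"upper bound: {max_tokens_per_second(mem_t, cmp_t):.0f} tokens/s")
```

Under these assumptions the memory time per token is about 250 times the compute time. That gap is why decode speed scales with memory bandwidth, and any KV cache bytes (`kv_bytes`) make it wider still.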

Finally, the architecture integrates Intel Xeon 6 processors to handle “agentic” workloads. As AI evolves from simple chatbots to “agents” that can execute code, validate outputs, and interact with other software, the need for general-purpose compute returns. The Xeon 6 CPUs act as the brain of the operation, coordinating the distribution of workloads across the GPUs and RDUs while running the complex logic required for autonomous agent tools.
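The orchestration role can be pictured as a router that sends each phase of a request to a different hardware pool. This is a minimal, hypothetical sketch: the `Stage` enum, the `POOL_FOR_STAGE` mapping, and `plan_agent_request` are illustrative names, not part of any Intel or SambaNova software. It also assumes that a tool’s output is appended to the context and prefilled before decoding resumes, a common pattern in agent loops that the announcement itself does not describe.

```python
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"
    DECODE = "decode"
    TOOL = "tool"


# Hypothetical stage-to-pool mapping mirroring the split described in the article.
POOL_FOR_STAGE = {
    Stage.PREFILL: "gpu",  # compute-bound: ingest prompt, build KV cache
    Stage.DECODE: "rdu",   # bandwidth-bound: generate tokens one at a time
    Stage.TOOL: "cpu",     # general-purpose: run agent tools
}


def plan_agent_request(num_tool_calls: int) -> list[tuple[str, str]]:
    """Return the (stage, pool) sequence for one agentic request.

    Each tool call runs on the CPU pool; its output is assumed to be appended
    to the context, so the new tokens are prefilled before decoding resumes.
    """
    if num_tool_calls < 0:
        raise ValueError("num_tool_calls must be non-negative")
    stages = [Stage.PREFILL, Stage.DECODE]
    for _ in range(num_tool_calls):
        stages += [Stage.TOOL, Stage.PREFILL, Stage.DECODE]
    return [(s.value, POOL_FOR_STAGE[s]) for s in stages]


if __name__ == "__main__":
    for stage, pool in plan_agent_request(num_tool_calls=1):
        print(f"{stage:>8} -> {pool}")
```

Calling `plan_agent_request(1)` yields prefill on the GPU pool, decode on the RDU pool, a tool call on the CPU pool, then another prefill and decode. A real scheduler would also have to move the KV cache from the prefill pool to the decode pool after each prefill, which this sketch omits.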

Performance benchmarks and data center compatibility

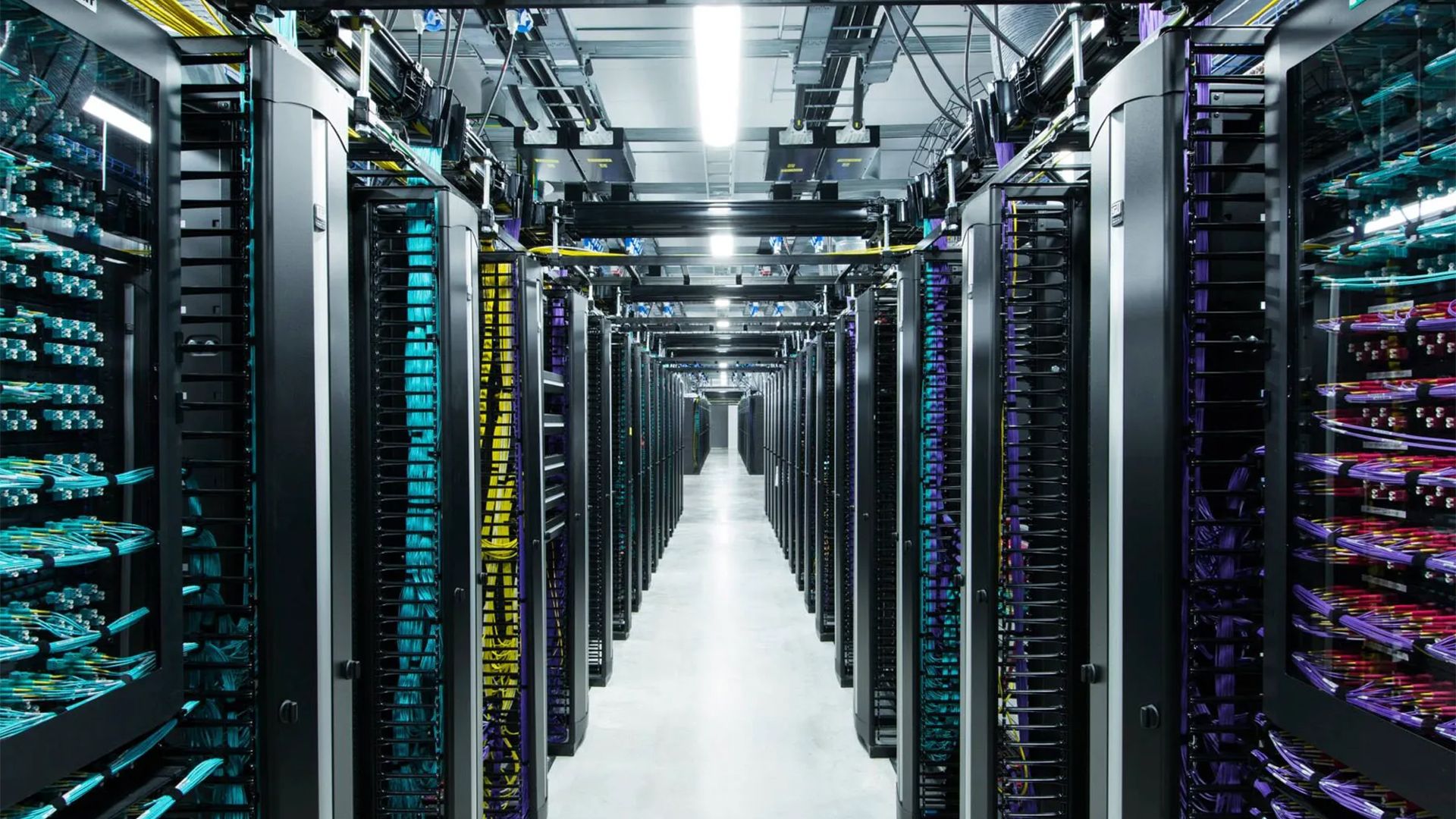

For many enterprises, the barrier to adopting new AI hardware isn’t just performance; it’s power. Many existing data centers are designed for 30kW racks, and the high power draw of the latest AI chips often forces companies into expensive facility retrofits. Intel and SambaNova claim their joint architecture is “drop-in compatible” with these 30kW environments, potentially removing a significant financial hurdle to corporate adoption.

Beyond power, the partnership leans heavily on the performance of the Intel Xeon 6. According to internal data from SambaNova, the Xeon 6 delivers substantial gains in the “glue” workloads that support AI agents:

- LLVM Compilation: Over 50% faster compared to Arm-based server CPUs.

- Vector Database Workloads: Up to 70% higher performance relative to competing x86 processors, specifically the AMD EPYC series.

These improvements are intended to shorten end-to-end development cycles for coding agents: AI tools that can write, test, and deploy software autonomously. By speeding up the compilation and database-retrieval phases, the platform aims to make the entire agentic loop feel closer to instantaneous.

| Inference Stage | Hardware Component | Primary Function |
|---|---|---|
| Prefill | AI GPUs / Accelerators | Prompt ingestion & KV cache building |
| Decode | SambaNova SN50 RDUs | Token generation & output streaming |
| Agentic/Orchestration | Intel Xeon 6 CPUs | Code execution & system coordination |

The broader market strategy

This move reflects a growing industry trend away from monolithic GPU clusters. Even Nvidia has explored similar concepts with its Rubin platform, though reports indicate that certain components, such as the Rubin CPX, may not reach the market as originally planned. Intel’s advantage here is the ubiquity of the x86 architecture.

Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group at Intel, emphasized that the software ecosystem is already deeply rooted in Intel’s architecture. “The data center software ecosystem is built on x86, and it runs on Xeon — providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale,” Kechichian said. He added that future workloads will require a “heterogeneous mix of computing” to remain cost-efficient.

The timing is particularly critical for “sovereign AI” programs—national initiatives where countries build their own AI infrastructure to ensure data privacy and cultural alignment. These programs often seek scalable, in-house platforms that do not rely on a single vendor’s proprietary lock-in.

Ultimately, the platform’s success will depend on how easily developers can migrate their existing models to this split-hardware architecture. The next major milestone is general availability of the production-ready servers in the second half of 2026, which should provide the first real-world benchmarks of how this three-part system handles massive, agent-driven enterprise workloads.

Do you think splitting AI workloads across different hardware is the future of the data center, or will a single “super-chip” eventually win out? Share your thoughts in the comments.