Amazon has revealed for the first time that the artificial intelligence services within its cloud-computing division are now generating annualized revenue of more than $15 billion. The figure, disclosed by CEO Andy Jassy, marks a critical pivot for the company as it moves from the research and development phase of generative AI into a period of tangible, large-scale monetization.
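For readers unfamiliar with the term, an annualized figure (often called a run rate) is usually an extrapolation from a shorter period rather than a trailing twelve-month total. The Python sketch below shows that arithmetic under a simple assumption. The quarterly input is a hypothetical value chosen only to illustrate the calculation, not a number Amazon has reported, and Amazon may compute its figure differently.

```python
def annualized_run_rate(period_revenue: float, periods_per_year: int = 4) -> float:
    """Extrapolate one period's revenue to a full year (simple run rate)."""
    if periods_per_year <= 0:
        raise ValueError("periods_per_year must be positive")
    return period_revenue * periods_per_year


# Hypothetical input: $3.75 billion earned in a single quarter (illustrative only).
hypothetical_quarter = 3.75e9
run_rate = annualized_run_rate(hypothetical_quarter)
print(f"Annualized run rate: ${run_rate / 1e9:.1f} billion")
```

A run rate says nothing about seasonality or about whether the most recent period was unusually strong, which is why analysts tend to read it alongside reported quarterly results.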

For months, investors and industry analysts have scrutinized the “AI spend” of the major cloud providers, questioning when the massive capital expenditures on data centers and GPUs would translate into top-line growth. By putting a number on Amazon’s cloud AI revenue, Jassy offers a concrete answer: demand for enterprise-grade AI infrastructure is not just theoretical but is actively driving billions of dollars in new business.

The disclosure comes at a time when Amazon Web Services (AWS) is fighting to maintain its dominant position in the cloud market. While AWS remains the largest provider by market share, it has faced intense pressure from Microsoft Azure, which leveraged an early and aggressive partnership with OpenAI to capture the initial wave of generative AI excitement.

The architecture of a $15 billion revenue stream

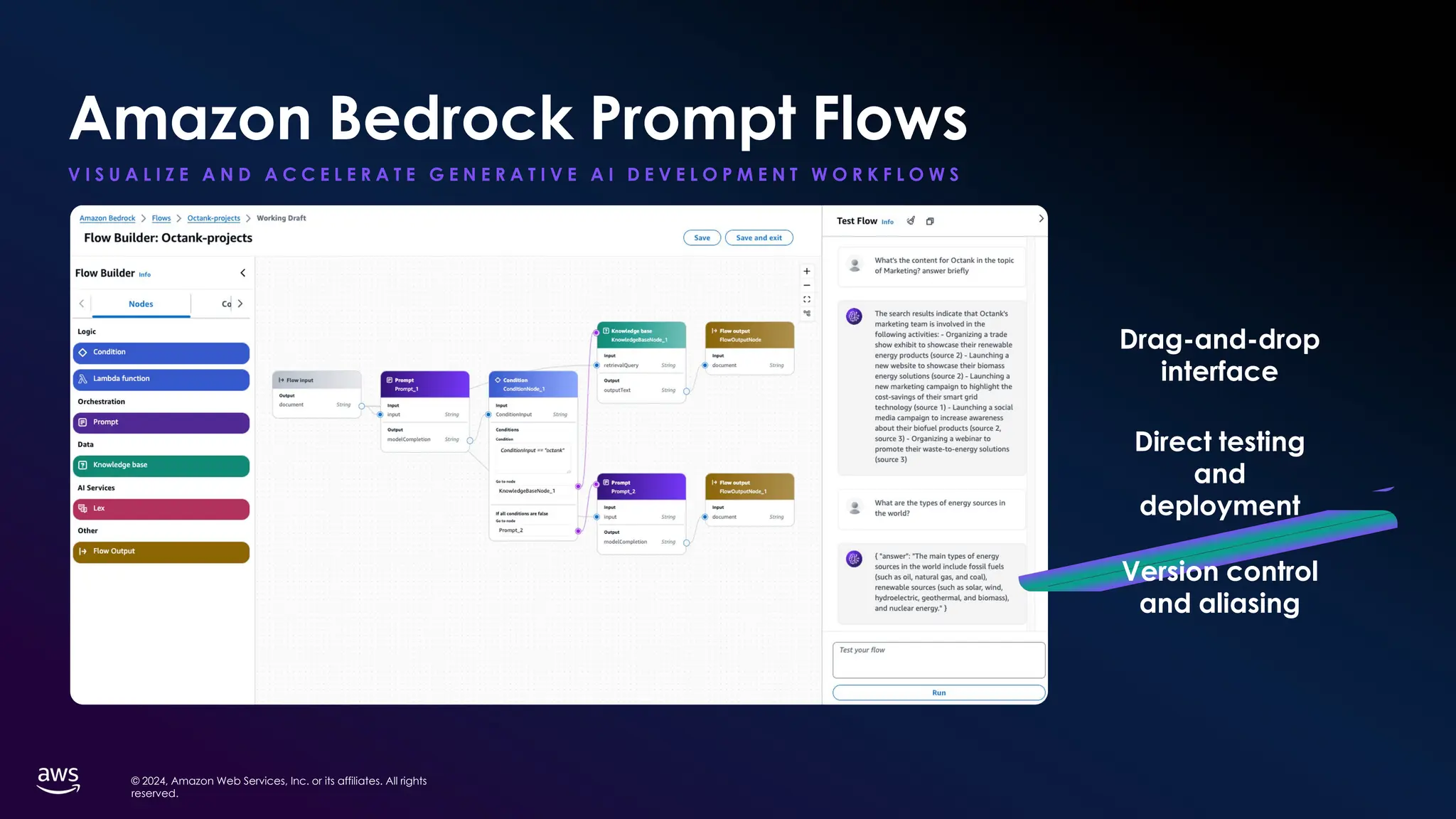

The revenue mentioned by Jassy does not stem from a single product, but rather a tiered ecosystem designed to capture value at every level of the AI stack. At the top is Amazon Bedrock, a fully managed service that allows businesses to build and scale generative AI applications using a variety of foundation models from companies like Anthropic, Meta, and Mistral, as well as Amazon’s own Titan models.

Below the software layer, Amazon is drawing on its background in custom silicon to reduce costs for its customers. The company has invested heavily in its own AI chips, Trainium for training and Inferentia for inference, which are designed to handle large language models (LLMs) more cost-efficiently than general-purpose GPUs. For the enterprise customer, this can mean lower latency and reduced compute costs, making the transition from a “pilot project” to a “production environment” more financially viable.

The revenue growth is further supported by Amazon Q, a generative AI-powered assistant designed specifically for work. By integrating AI directly into the developer workflow and corporate data silos, Amazon is attempting to move AI from a standalone tool to an invisible, integrated part of the cloud operating system.

Breaking down the AI cloud landscape

To understand where Amazon stands, it is helpful to look at how the “Big Three” cloud providers are positioning their AI offerings to capture corporate spending.

| Provider | Primary AI Platform | Hardware Strategy | Key Model Partnership |
|---|---|---|---|
| AWS | Amazon Bedrock | Trainium / Inferentia | Anthropic |
| Azure | Azure AI Studio | Maia / NVIDIA GPUs | OpenAI |
| Google Cloud | Vertex AI | TPU (Tensor Processing Units) | Gemini (First-party) |

From experimentation to production

The $15 billion milestone suggests a fundamental shift in how companies are using AI. Throughout 2023 and early 2024, much of the cloud growth was driven by “experimentation”—companies paying for small-scale tests to observe what generative AI could do. However, the scale of current revenue indicates that a significant number of these experiments have graduated to full-scale deployment.

Enterprise adoption is typically slower than consumer adoption due to concerns over data privacy, security, and “hallucinations.” Amazon’s strategy has focused heavily on these pain points. By ensuring that data used to fine-tune models in Bedrock remains encrypted and is not used to train the base models, AWS has managed to attract highly regulated industries, including healthcare and financial services.

This “production-ready” phase is where the real revenue resides. When a company moves a chatbot from a demo to a customer-facing tool serving millions of users, the compute requirements, and with them the cloud provider’s billings, can increase by orders of magnitude.
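To see why the move to production matters for billing, consider a rough sketch of usage-based pricing. It assumes a flat, hypothetical price per 1,000 tokens and a fixed number of tokens per request. The function name, prices, and traffic numbers are all illustrative and do not reflect AWS’s actual rates.

```python
def monthly_inference_cost(requests_per_day: int,
                           tokens_per_request: int,
                           price_per_1k_tokens: float,
                           days: int = 30) -> float:
    """Estimate a month's bill under simple per-token pricing (hypothetical)."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1000 * price_per_1k_tokens


# Hypothetical traffic: an internal demo versus a customer-facing deployment.
demo = monthly_inference_cost(500, 1000, 0.01)
production = monthly_inference_cost(2_000_000, 1000, 0.01)

print(f"demo:       ${demo:,.2f}/month")
print(f"production: ${production:,.2f}/month")
print(f"ratio:      {production / demo:,.0f}x")
```

Under these assumptions the bill grows by the same factor as traffic. Real deployments add further variables, such as model choice, provisioned capacity, and volume discounts, that this sketch leaves out.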

The capital expenditure challenge

Despite the impressive revenue figures, the growth comes with a steep price tag. Building the infrastructure necessary to support $15 billion in AI revenue requires an immense investment in physical assets. This includes not only the chips themselves but the massive power grids and cooling systems required to keep AI data centers operational.

Industry analysts note that the “AI arms race” has forced cloud providers into a cycle of aggressive capital expenditure. The challenge for Amazon moving forward will be maintaining margins. While the revenue is growing, the cost of building the “factories” for this AI—the data centers—is rising simultaneously.

As a former software engineer, I’ve seen this cycle before. The early days of cloud computing were defined by massive over-provisioning of hardware in the hope that the market would catch up. We are seeing a similar pattern with AI, but at a much larger scale and a much faster pace. The $15 billion figure suggests the market is catching up, but the efficiency of the underlying hardware will determine who wins the long-term margin war.

What remains uncertain

While the revenue figure is a victory for Jassy, several uncertainties remain. First, the reliance on third-party models in Bedrock means Amazon is partially dependent on the success and pricing of partners like Anthropic. If a dominant open-source model emerges that can be run cheaply on commodity hardware, the “platform fee” model of cloud AI could be disrupted.

Second, there is the question of sustainability. AI workloads are significantly more energy-intensive than traditional cloud computing. Amazon’s ability to scale this revenue further will likely depend on its ability to secure green energy sources to power its expanding fleet of data centers without violating its corporate sustainability goals.

The next major checkpoint for the company’s AI trajectory will be the upcoming quarterly earnings reports, where analysts will look for signs that AI revenue is not just growing, but accelerating relative to the cost of the infrastructure being built to support it.

Disclaimer: This article is for informational purposes only and does not constitute financial or investment advice.
