The race to bring high-performance computing into the vacuum of space has long been dominated by theoretical blueprints and ambitious promises. However, the industry is shifting from conceptual white papers to operational hardware. Canada-based Kepler Communications has launched what is currently the largest orbital compute cluster, marking a pivotal step in the transition toward a functional space-based internet and processing layer.

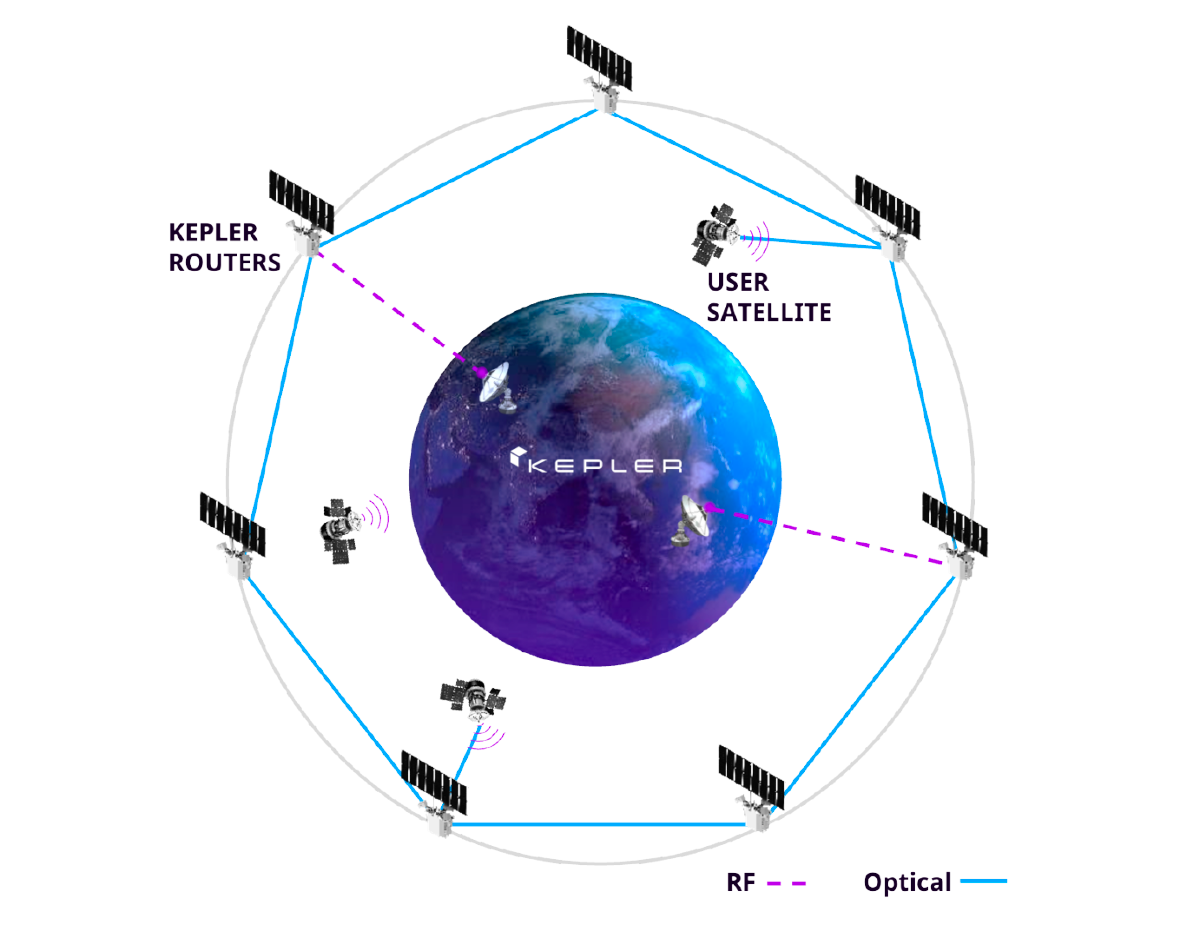

Launched in January, the constellation consists of 10 operational satellites equipped with approximately 40 Nvidia Orin edge processors. These units are interconnected via laser communication links, allowing the cluster to function as a distributed computing environment. While the industry has buzzed with the idea of “data centers in space,” this deployment represents a pragmatic approach to orbital compute: focusing on edge processing rather than massive, centralized server farms.

The network is already seeing commercial traction, with Kepler reporting 18 customers. The latest addition to this roster is Sophia Space, a startup specializing in orbital computing hardware. The partnership aims to move beyond simple data relay, attempting to run complex software configurations across multiple GPUs in orbit—a task that is routine in terrestrial data centers but remains an unproven frontier in space.

Moving the Brain to the Edge

For years, the standard model for satellite operations has been “bent-pipe” architecture: a satellite collects data and beams it back to Earth for processing. This creates significant latency and consumes vast amounts of bandwidth. By placing the compute cluster in orbit, Kepler is enabling “edge processing,” where data is analyzed where it is collected, and only the relevant insights are sent back to the ground.
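To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch comparing the downlink time of a raw capture with that of processed results. Every figure in it (capture size, result size, link rate) is a hypothetical placeholder chosen for illustration, not a number reported by Kepler.

```python
# Rough comparison of bent-pipe vs. edge-processed downlink time.
# All constants below are hypothetical, illustrative values.

def downlink_seconds(data_bits: float, link_bps: float) -> float:
    """Time needed to transmit data_bits over a link running at link_bps."""
    return data_bits / link_bps

RAW_CAPTURE_BITS = 8e9 * 8  # assumed 8 GB raw sensor capture
INSIGHT_BITS = 2e6 * 8      # assumed 2 MB of extracted detections
LINK_BPS = 1e9              # assumed 1 Gbit/s downlink

bent_pipe = downlink_seconds(RAW_CAPTURE_BITS, LINK_BPS)
edge = downlink_seconds(INSIGHT_BITS, LINK_BPS)

print(f"bent-pipe: {bent_pipe:.1f} s, edge: {edge:.3f} s")
print(f"reduction factor: {bent_pipe / edge:.0f}x")
```

Under these assumed numbers the saving comes entirely from the ratio of raw data to results; the real ratio depends on the sensor and the model being run.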

This capability is particularly critical for power-hungry sensors, such as synthetic aperture radar (SAR), which generate massive datasets. Offloading the processing to an orbital cluster allows satellites to react in real time. This has immediate implications for national security: the U.S. military is a primary stakeholder in this technology as it develops missile defense systems that require the rapid detection and tracking of threats. Kepler has already demonstrated a space-to-air laser link in a government test to show that the approach is viable.

Mina Mitry, CEO of Kepler, emphasizes that the company does not view itself as a traditional data center provider. Instead, Kepler is building infrastructure—a network layer that provides processing and connectivity for other satellites, drones, and aircraft. The strategy focuses on inference—the process of applying a trained AI model to new data—rather than the computationally expensive process of training models from scratch.

“Because we have the belief it’s more inference than training, we seek more distributed GPUs that do inference, rather than one superpower GPU that has the training workload capacity,” Mitry said. “If this thing consumes kilowatts of power and you’re only running at 10% of the time, then that’s not super helpful. In our case, our GPUs are running 100% of the time.”

The Thermal Challenge and the Sophia Partnership

The primary obstacle to scaling orbital compute is heat. In the vacuum of space, there is no air to carry heat away via convection, meaning processors can quickly overheat. Traditional solutions involve heavy, expensive active-cooling systems (like liquid loops), which add significant mass and cost to a launch.
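With no convection available, a spacecraft ultimately has to shed heat by radiation. As a rough illustration, the Stefan-Boltzmann law gives the radiator area an ideal surface would need. The sketch below is a simplification: it ignores heat absorbed from the Sun and Earth and assumes a one-sided radiator at uniform temperature. The heat load, emissivity, and temperature are hypothetical values, not figures from Kepler or Sophia Space.

```python
# Ideal radiator sizing from the Stefan-Boltzmann law: P = eps * sigma * A * T^4.
# Ignores absorbed solar/Earth heat; all inputs are hypothetical.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)

def radiator_area_m2(heat_watts: float, emissivity: float, temp_kelvin: float) -> float:
    """Area needed to radiate heat_watts from a surface at temp_kelvin."""
    flux = emissivity * STEFAN_BOLTZMANN * temp_kelvin ** 4  # W per m^2
    return heat_watts / flux

# Assumed: 100 W of processor heat, emissivity 0.85, radiator at 300 K.
area = radiator_area_m2(100.0, 0.85, 300.0)
print(f"required radiator area: {area:.3f} m^2")
```

Because radiated power scales with the fourth power of temperature, a cooler radiator needs far more area, which is one reason thermal design limits how much compute a small satellite can carry.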

Sophia Space is attempting to solve this with passively cooled space computers. As part of their new partnership, Sophia will upload a proprietary operating system to a Kepler satellite and attempt to configure it across six GPUs on two separate spacecraft. This “de-risking” exercise is a critical milestone before Sophia proceeds with its own planned satellite launch in late 2027.

| Approach | Primary Focus | Key Players | Expected Timeline |
|---|---|---|---|
| Distributed Edge Compute | Real-time inference & sensor offloading | Kepler, Sophia Space | Operational Now |
| Large-Scale Data Centers | Heavy workloads & model training | SpaceX, Blue Origin, Starcloud | 2030s |

A New Frontier for Data Sovereignty

The push toward orbital compute is driven not only by technical necessity but also by terrestrial constraints. As AI demand surges, the physical footprint of data centers on Earth has become a point of political and environmental contention. Recent legislative moves, including a ban on new data center construction in Wisconsin and similar discussions in the U.S. Congress, are creating a regulatory environment that may push compute capacity off-planet.

Rob DeMillo, CEO of Sophia Space, suggests that as land-use restrictions and energy constraints make terrestrial expansion more difficult, the economic argument for space-based alternatives becomes more compelling. If the ground is closed to new data centers, orbit becomes the only logical place for expansion.

This vision separates the “edge” players from the “mega-center” players. While startups like Starcloud and Aetherflux are raising significant capital to build massive orbital hubs with data-center-grade processors, Kepler and Sophia are betting on a distributed, lean architecture that provides immediate utility for the current generation of space-based sensors.

The next major milestone for this orbital ecosystem will be the successful deployment and configuration of Sophia Space’s operating system across Kepler’s GPUs, followed by Sophia’s first independent satellite launch scheduled for late 2027.
