The traditional gaming controller, a tactile arrangement of joysticks and triggers, has remained largely unchanged for decades. Yet a growing movement of indie developers is beginning to strip away the plastic hardware in favor of something more organic: the human voice. For some, this is a technical challenge; for others, it is a bonding experience, as in a recent project where a father and son are collaborating to build vocal-controlled games that respond not just to volume, but to the specific pitch and tone of a singer.

This shift toward audio-centric gameplay represents a pivot from simple voice commands—the “Hey Siri” or “Alexa” model of interaction—toward “expressive input.” While most voice-activated software is designed to filter out the nuances of human emotion to find a clear command, the project currently under development by this father-son duo seeks to do the opposite. By reflecting the real range and tones of singing, the developers are attempting to turn the human voice into a precision instrument for navigation and interaction.

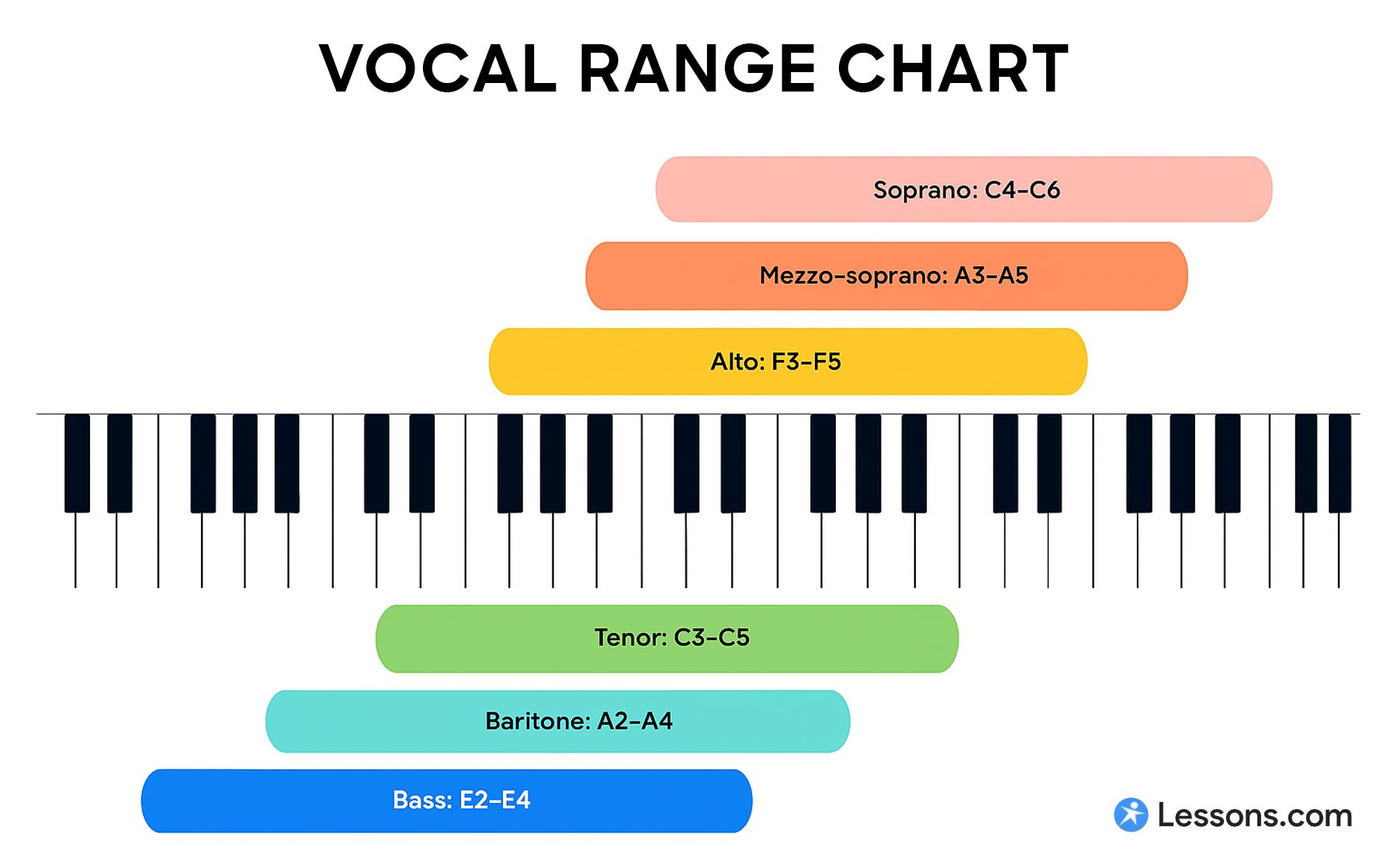

The ambition to capture a “real range” of notes suggests a reliance on pitch detection technology, a complex intersection of mathematics and acoustics. Unlike volume-based games, which simply measure the amplitude of a sound wave, pitch-based games must analyze frequency in real time. This requires the software to identify the fundamental frequency of a voice and map it to a specific action within the game world, effectively turning a melody into a set of coordinates or commands.
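
The mapping step described above can be sketched in a few lines. This is only an illustration, not the developers’ code: the function names and the default 110 to 880 Hz range are assumptions chosen for the example. It uses a logarithmic scale because musical pitch is perceived in octaves, so each octave takes up the same share of the control range.

```python
import math


def hz_to_midi(freq_hz: float) -> float:
    """Convert a frequency to a fractional MIDI note number (A4 = 440 Hz = 69)."""
    return 69.0 + 12.0 * math.log2(freq_hz / 440.0)


def pitch_to_position(freq_hz: float, low_hz: float = 110.0, high_hz: float = 880.0) -> float:
    """Map a sung frequency onto 0.0 (bottom of range) .. 1.0 (top of range).

    low_hz and high_hz are hypothetical defaults (A2 to A5); a real game
    would probably tune them per player.
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    octaves_in_range = math.log2(high_hz / low_hz)
    position = math.log2(freq_hz / low_hz) / octaves_in_range
    # Clamp so notes outside the range pin the control to its limits.
    return min(1.0, max(0.0, position))
```

With these defaults, singing 220 Hz (one octave above the bottom of a three-octave range) yields a position of one third, which a game could use directly as a character’s height on screen.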

The technical divide between command and expression

To understand why this project is distinct, one must look at the current landscape of audio interaction. Most mainstream “voice control” is based on Automatic Speech Recognition (ASR), which converts spoken words into text. In contrast, a game that responds to singing relies on pitch tracking, which often uses the Fast Fourier Transform (FFT), an algorithm that breaks an audio signal down into its component frequencies.
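
As a rough illustration of FFT-based pitch tracking, the sketch below implements a small radix-2 FFT in pure Python and reports the frequency of the strongest bin. The function names are invented for this example, and the approach is deliberately naive: in a real voice the loudest bin can be a harmonic rather than the fundamental, and production pitch trackers often add peak interpolation or use autocorrelation-style methods instead.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a non-zero power of two")
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def strongest_frequency(samples, sample_rate):
    """Return the frequency (Hz) of the largest-magnitude bin, ignoring DC."""
    n = len(samples)
    # A Hann window reduces spectral leakage caused by the frame edges.
    windowed = [
        s * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
        for i, s in enumerate(samples)
    ]
    spectrum = fft(windowed)
    # Only bins 1 .. n/2 - 1 are needed for a real-valued signal.
    magnitudes = [abs(c) for c in spectrum[1 : n // 2]]
    peak_bin = 1 + max(range(len(magnitudes)), key=magnitudes.__getitem__)
    return peak_bin * sample_rate / n
```

The frequency resolution is `sample_rate / n`, so a 2048-sample frame at 8192 Hz gives bins 4 Hz apart. Longer frames resolve pitch more finely but take longer to fill, which is one source of the responsiveness trade-off in this kind of game.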

This distinction is critical for gameplay. In a command-based system, the goal is accuracy and the elimination of variance. In an expressive system, the variance is the gameplay. If a player sings a higher note to make a character jump higher or a lower tone to dive, the game becomes a physical performance. This moves the experience closer to the realm of musical instruments than traditional software.

The challenges for any developer in this space are “latency” (the delay between a sound and the game’s reaction) and “jitter” (unsteady, frame-to-frame variation in the detected pitch). For a game to feel responsive, the time between the player hitting a note and the game reacting must be nearly instantaneous. Any lag can break the immersion, making the player feel disconnected from their own voice. Jitter is the other half of the problem, and it may be part of why the developers are focusing on the “real range” of tones: the software has to distinguish a deliberate note from accidental noise.
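
Both problems can be made concrete with a short sketch. The names below are hypothetical and the numbers are only examples. The first function computes the minimum delay added by collecting one analysis frame of audio; the small class applies a median filter, one common and simple way to suppress single-frame pitch glitches.

```python
from collections import deque
from statistics import median


def frame_latency_ms(frame_size: int, sample_rate: int) -> float:
    """Time needed to collect one analysis frame, in milliseconds."""
    return 1000.0 * frame_size / sample_rate


class PitchSmoother:
    """Median filter over the most recent pitch readings (window is an assumption)."""

    def __init__(self, window: int = 5):
        self._recent = deque(maxlen=window)

    def update(self, freq_hz: float) -> float:
        """Add a new reading and return the smoothed pitch."""
        self._recent.append(freq_hz)
        return median(self._recent)
```

A 1024-sample frame at 44,100 Hz takes about 23 ms to fill before any analysis can start. Smoothing has a cost of its own: a wider median window rejects more glitches but makes the game slower to follow a genuine change of note, so the two goals pull against each other.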

The rise of the collaborative indie project

Beyond the code, the project highlights a burgeoning trend in “co-creative” development. The act of a parent and child building a game together transforms the development process into a pedagogical tool. By working on vocal-controlled games, the pair is engaging with physics, music theory, and software engineering simultaneously.

This type of grassroots development is where some of the most innovative mechanics in the industry are born. While AAA studios often stick to proven control schemes to minimize financial risk, indie developers have the freedom to experiment with “weird” inputs. We have seen this in the past with games that use eye-tracking or heart-rate monitors, but the voice remains one of the most accessible yet underutilized inputs in the medium.

The question of whether a general audience would “actually play” such a game depends largely on the context of the experience. Voice-controlled gaming often faces the “embarrassment factor”—the social friction of singing or shouting in a living room. However, the rise of streaming platforms like Twitch has changed this dynamic, turning the physical exertion and vocal performance of a player into a form of entertainment for the viewer.

Comparing audio input methods in gaming

| Method | Primary Trigger | Common Use Case | Player Effort |
|---|---|---|---|
| Voice Command | Specific Keywords | Menu navigation, NPC interaction | Low (Speech) |
| Amplitude/Volume | Decibel Level | Stealth mechanics, “Scream” games | Medium (Loudness) |
| Pitch/Tone | Frequency (Hz) | Musical puzzles, rhythmic movement | High (Singing/Tuning) |
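
For comparison with the pitch-based row, the amplitude row of the table needs far less computation. The sketch below (a hypothetical helper, assuming samples normalized to the range -1.0 to 1.0) measures loudness as the root-mean-square level in decibels relative to full scale, which is the kind of value a “scream” game could compare against a threshold.

```python
import math


def rms_dbfs(samples) -> float:
    """RMS level of a frame in dB relative to full scale (0 dB = maximum)."""
    if not samples:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    # Digital silence has no finite decibel value.
    return 20.0 * math.log10(rms) if rms > 0 else float("-inf")
```

Halving the signal amplitude lowers the reading by roughly 6 dB, and nothing in the calculation depends on which note is being sung, which is why volume-only games cannot tell a high note from a low one.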

Accessibility and the future of the voice

While the father-son project is born of curiosity and collaboration, the implications of refined vocal control extend into the realm of accessibility. For gamers with limited motor function who cannot use a standard controller, the voice can be one of the few viable interfaces. Organizations such as SpecialEffect have long advocated for adaptive gaming technology that removes physical barriers to entry.

A game that responds to pitch and tone could provide a new way for people with certain physical disabilities to engage with complex gaming environments. Instead of relying on a series of binary “on/off” voice commands, a pitch-based system allows for a spectrum of control, offering a level of nuance and fluidity that was previously reserved for those who could use a joystick.

The integration of more sophisticated audio AI may further this evolution. As machine learning improves, games will be able to better distinguish between a player’s singing and background noise, making these games viable in noisier home environments. This would solve one of the primary hurdles for the developers: ensuring the game remains playable regardless of the user’s recording equipment or room acoustics.

As the project continues to evolve, the next step for the developers will likely be the transition from a closed alpha to a public beta. The true test of a vocal-controlled mechanic is not how it works for the creator, but how it adapts to the diverse vocal ranges of a global audience. The success of the project will depend on whether the “singing” mechanic feels like a chore or a rewarding new way to play.
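
One way a game might adapt to different vocal ranges is a short calibration step before play. The sketch below is an assumption about how that could work, not a description of this project: it takes pitch readings from a warm-up and uses the 10th and 90th percentiles as the player’s usable range, so that a few stray detections do not stretch it.

```python
from statistics import quantiles


def calibrate_range(warmup_hz):
    """Estimate a player's usable (low, high) pitch range in Hz.

    warmup_hz is a hypothetical list of pitch readings gathered while the
    player sings from their lowest to their highest comfortable note.
    """
    if len(warmup_hz) < 2:
        raise ValueError("need at least two pitch readings to calibrate")
    # Nine cut points split the data into ten equal groups (deciles).
    deciles = quantiles(warmup_hz, n=10, method="inclusive")
    return deciles[0], deciles[-1]
```

The resulting pair could then replace fixed range limits in whatever pitch-to-control mapping the game uses, so that a bass and a soprano both reach the top and bottom of the screen with similar effort.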

For those interested in the intersection of music and gaming, the progress of these indie experiments offers a glimpse into a future where the controller is no longer something we hold, but something we are. We expect more updates on the project’s development as it moves toward a shareable prototype.
