Artificial intelligence is spreading through global society at a pace that dwarfs the adoption curves of the personal computer and the internet. Within just three years, AI reached 53 percent of the global population, and deployment is now far outstripping the development of safety frameworks.

This rapid expansion has come with a measurable cost. According to the 2026 AI Index Report from Stanford University’s Institute for Human-Centered Artificial Intelligence (HAI), “Responsible AI is not keeping pace with AI capability, with safety benchmarks lagging and incidents rising sharply.” The data suggests a growing volatility in how these systems interact with the real world, moving from theoretical risks to documented harms.

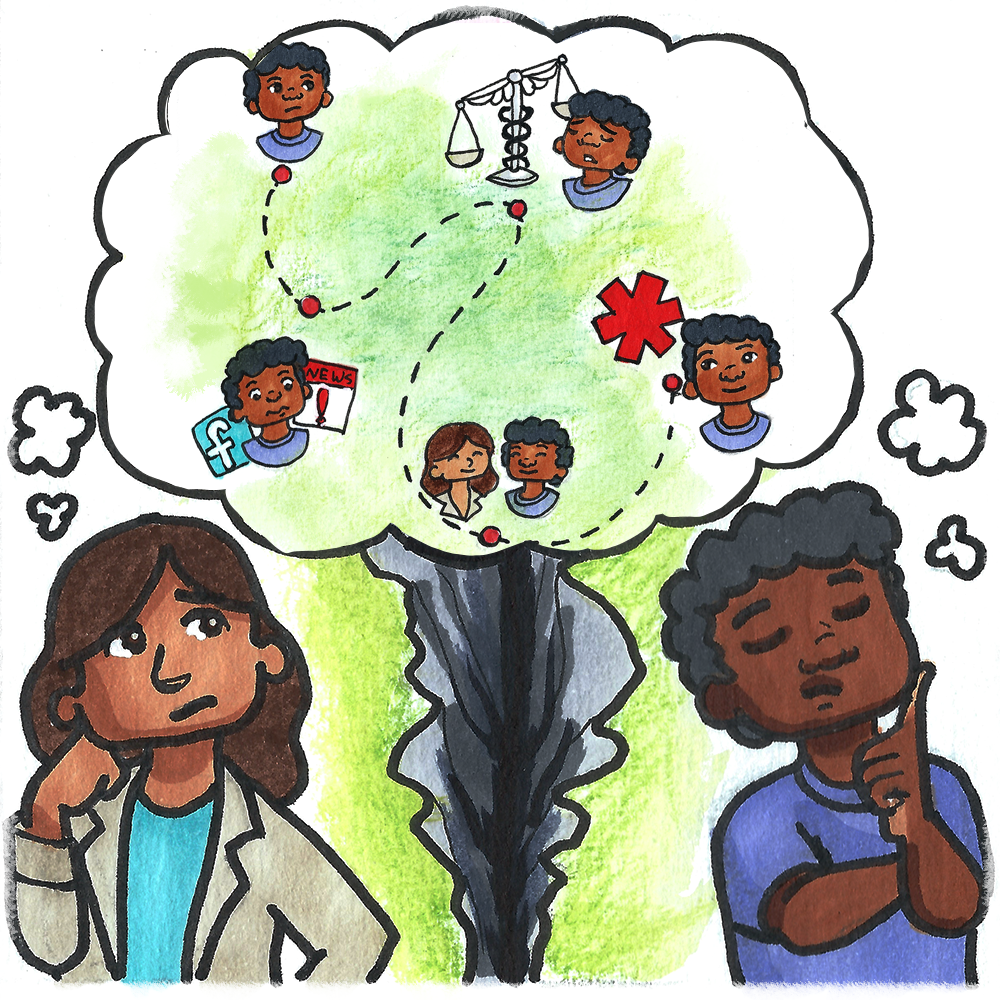

While experts and the general public often clash over the long-term trajectory of the technology, there is a rare, stark consensus on two specific vulnerabilities: the integrity of democratic elections and the stability of human relationships. As AI becomes a primary interface for information and interaction, the potential for systemic disruption in these two pillars of social cohesion has become a primary concern for researchers and laypeople alike.

The human cost is already appearing in legal and professional records. The AI Incident Database documented 362 AI incidents in 2025, a significant jump from the 233 recorded in 2024. These incidents, defined as harms or near-harms realized in the real world, highlight a dangerous trend of over-reliance on systems that can still fail in fundamental ways.
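For readers who want to check the size of that jump, the year-over-year change can be worked out directly from the two counts quoted above. This is a minimal sketch, not part of the report's methodology; the helper name `percent_change` is a hypothetical chosen for illustration.

```python
def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, expressed in percent."""
    return (new - old) / old * 100.0


# Incident counts as quoted in the article (AI Incident Database).
incidents_2024 = 233
incidents_2025 = 362

absolute_increase = incidents_2025 - incidents_2024
relative_increase = percent_change(incidents_2024, incidents_2025)

print(f"Additional incidents: {absolute_increase}")
print(f"Year-over-year increase: {relative_increase:.1f}%")
```

On these figures the count rose by 129 incidents, an increase of roughly 55 percent in one year.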

The Gap Between Capability and Reliability

The paradox of modern AI is that it can solve complex technical problems while failing at basic human tasks. In the realm of software engineering, models have seen a meteoric rise; scores on the SWE-bench test for tackling real-world GitHub issues climbed from 60 percent to nearly 100 percent in a single year. However, this technical proficiency does not translate to reliability or “truthfulness.”

Hallucination remains a critical flaw. The AA-Omniscient Index, which measures whether a model admits uncertainty or simply guesses, found that hallucination rates across 26 different models varied wildly, ranging from 22 percent to 94 percent. This inconsistency has led to high-stakes failures in the judiciary, such as cases where attorneys used AI to generate more than two dozen fake citations and misrepresentations of fact, leading to public reprimands from the U.S. Sixth Circuit Court of Appeals.

Even the concept of “superintelligence” is challenged by the simplest of physical realities. In the ClockBench benchmark, OpenAI’s GPT-5.4 High correctly read analog clocks only 50.6 percent of the time as of March 2026, while “unspecialized humans” maintained a success rate of approximately 90 percent. The gap is even wider in physical robotics, where systems succeeded in only 12 percent of household tasks according to the BEHAVIOR-1K simulation benchmark.

Economic Anxiety and the Trust Deficit

The divide between those building AI and those affected by it is most evident in the labor market. A majority of the American public, 64 percent, expects AI to reduce the number of available jobs over the next two decades. In contrast, only 39 percent of experts share this pessimistic view. This disconnect extends to the scale of impact: experts believe generative AI will contribute to 80 percent of U.S. work hours by 2030, while the public predicts a much smaller footprint of 10 percent.

This economic tension is compounded by a profound lack of trust in oversight. Only 31 percent of U.S. respondents trust their government to regulate AI responsibly, the lowest level of any country surveyed. This skepticism is fueled by perceived industry capture, including OpenAI’s support for an Illinois state bill intended to limit the liability of AI companies in the event of catastrophic harm, and the White House’s pursuit of an industry-friendly policy framework.

| Metric | Value | Context |
|---|---|---|
| Global Population Adoption | 53% | Reached within 3 years |
| Organizational Usage | 88% | Reported by organizations |
| University Student Usage | 80% | Admitted usage in academia |
| Documented AI Incidents | 362 | Total for 2025 (up from 233 in 2024) |
| U.S. Trust in AI Regulation | 31% | Lowest of any country surveyed |

The Global Arms Race for Talent and Capital

While the U.S. remains the dominant force in AI investment, reaching $285.9 billion in 2025, roughly 23 times the $12.4 billion invested in China, the technical gap is closing. The performance difference between top U.S. and Chinese models has narrowed to a sliver. As of March 2026, Claude Opus 4.6 scored 1,503 on the Arena benchmark, just 39 points, or about 2.7 percent, ahead of ByteDance’s Dola-Seed Preview at 1,464.
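The two comparisons in this paragraph are simple ratios, and they can be reproduced from the quoted figures. The sketch below is illustrative only. It assumes the Arena gap is expressed relative to the lower score, which is the basis that yields a figure of about 2.7 percent; the variable names are hypothetical.

```python
# Investment figures as quoted in the article, in billions of U.S. dollars.
us_investment_bn = 285.9
china_investment_bn = 12.4

investment_ratio = us_investment_bn / china_investment_bn

# Arena scores as quoted in the article (March 2026).
top_us_score = 1503
top_china_score = 1464

gap_points = top_us_score - top_china_score
# Assumption: the gap is measured relative to the lower (Chinese) score.
gap_percent = gap_points / top_china_score * 100.0

print(f"U.S. investment is {investment_ratio:.1f}x China's")
print(f"Arena gap: {gap_points} points ({gap_percent:.1f}% of the lower score)")
```

Because Arena scores are ratings and not percentages, the 39-point difference is a relative gap of roughly 2.7 percent, not a gap in percentage points.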

More concerning for U.S. policymakers is the “brain drain” of technical expertise. The number of AI researchers and developers migrating to the U.S. has plummeted 89 percent since 2017, with an 80 percent decline occurring in the last year alone. This loss of human capital suggests that while the U.S. holds the financial lead, the intellectual infrastructure is becoming more distributed.

The overarching concern remains that as these tools become more integrated into the fabric of daily life, the erosion of trust in information—and by extension, in each other—will accelerate. When the tools used to communicate and govern are prone to “hallucinations” and the entities creating them seek to limit their own liability, the risk to elections and personal relationships becomes not just a prediction, but a probability.

The next critical checkpoint for AI governance will be the progress of liability legislation in statehouses and the potential for new federal safety benchmarks to be mandated as part of the upcoming regulatory cycle.

This report is for informational purposes only and does not constitute legal or financial advice.
