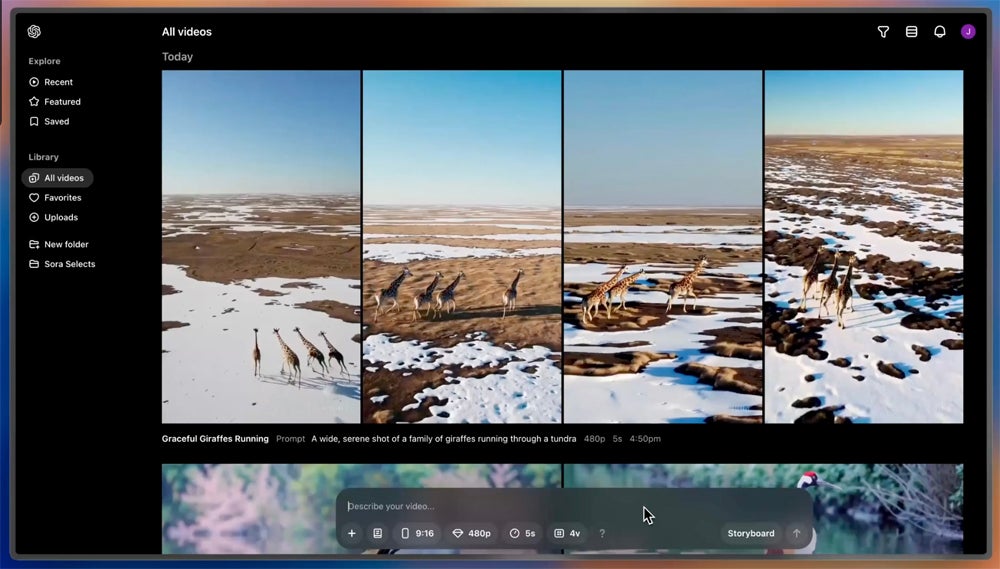

The intersection of artificial intelligence and creative expression is reaching a critical juncture as new generative tools move beyond simple text and images into the realm of high-fidelity video production. The unveiling of Sora, OpenAI’s text-to-video model, represents a significant leap in how machines simulate physical worlds, promising to transform everything from independent filmmaking to corporate advertising.

By translating complex written prompts into scenes that can last up to a minute, the technology aims to maintain visual consistency and adhere to a user’s specific request while simulating the physics of a three-dimensional environment. For those tracking the evolution of AI video generation, the shift is not merely in resolution, but in the model’s ability to understand the relationship between objects and their movements over time.

However, the rollout of such powerful tools comes with substantial caution. OpenAI has not yet released Sora to the general public, instead limiting access to a “red team” of experts in cybersecurity, misinformation, and artist rights to identify potential harms before a wider launch. This controlled release highlights the tension between rapid innovation and the necessity of safety guardrails in the age of synthetic media.

The Mechanics of Motion and Simulation

Earlier video generators often produced visual artifacts, sometimes described as “hallucinations,” in which objects would morph unnaturally or disappear. Sora instead pairs a diffusion model with a transformer architecture similar to those powering GPT-4, applied to visual patches rather than text tokens. This allows the system to treat a video as a sequence of small patches spanning both space and time, much as a language model treats text as a sequence of tokens, which produces a more coherent flow of motion.
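To make the idea of visual patches concrete, here is a minimal sketch of how a clip can be cut into a sequence of spacetime patches. It is an illustration only, not OpenAI's implementation: the function name `video_to_patches`, the patch sizes, and the use of raw grayscale pixels in nested lists are all assumptions chosen for readability, and a production system would typically operate on a compressed representation of the video rather than on pixels.

```python
def video_to_patches(video, pt, ph, pw):
    """Cut a grayscale clip into flattened spacetime patches.

    `video` is a nested list indexed as video[frame][row][column].
    Each patch covers `pt` frames, `ph` rows, and `pw` columns.
    All names and sizes here are hypothetical, for illustration only.
    """
    frames, height, width = len(video), len(video[0]), len(video[0][0])
    if frames % pt or height % ph or width % pw:
        raise ValueError("clip dimensions must be divisible by the patch size")

    patches = []
    # Walk the clip block by block: first in time, then rows, then columns.
    for t0 in range(0, frames, pt):
        for y0 in range(0, height, ph):
            for x0 in range(0, width, pw):
                # Flatten one small block of pixels into a single "token".
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                patches.append(patch)
    return patches


# A toy clip: 4 frames of 4x4 pixels, each pixel holding a unique number.
video = [[[t * 16 + y * 4 + x for x in range(4)] for y in range(4)] for t in range(4)]
patches = video_to_patches(video, pt=2, ph=2, pw=2)
print(len(patches), len(patches[0]))  # 8 8
```

Each entry in `patches` plays the role that a token plays in a language model. Because a patch spans several frames, the sequence carries information about motion as well as appearance.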

The model is designed to handle multiple characters, specific types of motion, and accurate details of the subject and background. While the system still struggles with complex physics—such as the exact way a glass of water might shatter or the precise sequence of a person eating a cookie—the baseline realism is a marked improvement over earlier generative video tools. According to OpenAI’s technical documentation, the model learns to simulate the physical world by training on massive datasets of visual information.

The implications for content creators are vast. A filmmaker can now prototype a scene without a physical set, and a marketer can generate high-quality B-roll without a camera crew. This democratization of visual production lowers the barrier to entry but raises urgent questions about the future of professional cinematography and visual effects (VFX) artistry.

Addressing the Risks of Synthetic Media

The ability to create photorealistic video from a text prompt introduces significant risks regarding disinformation and the creation of non-consensual content. The potential for “deepfakes” to influence public opinion or impersonate individuals has led OpenAI to implement several safety layers. These include classifiers designed to detect Sora-generated content and the integration of metadata to mark videos as AI-produced.

The “red teaming” process is specifically designed to stress-test the model against attempts to generate hate speech, violent content, or deceptive political imagery. By partnering with external specialists, the developers aim to build a robust filter that prevents the tool from being weaponized for large-scale misinformation campaigns.

Beyond safety, there is the ongoing debate over copyright and the training data used to build these models. Many artists and studios have expressed concern that their copyrighted works are being used to train AI that could eventually replace them. While OpenAI maintains that it uses publicly available data and licensed content, the legal landscape regarding “fair use” in AI training remains unsettled in many jurisdictions, including the United States, where the U.S. Copyright Office has been studying the issue.

Comparison of Generative Video Capabilities

| Feature | Early Generative Video | Sora (Current State) |
|---|---|---|
| Clip Length | 2–4 seconds | Up to 60 seconds |
| Consistency | Low (Objects morph) | High (Maintains identity) |
| Physics | Abstract/Random | Simulated (with some errors) |
| Control | Basic Prompts | Complex Scene Direction |

What This Means for the Creative Industry

The arrival of high-fidelity AI video does not necessarily signal the end of human creativity, but it does signal a change in the required skill set. The role of the director may shift toward “prompt engineering” and curation, where the ability to describe a vision precisely becomes as important as the ability to execute it technically.

For the broader economy, the impact will likely be felt first in low-budget commercial production and social media content. Small businesses that cannot afford a full production house may find Sora-like tools a viable way to create professional-grade advertisements. However, the “uncanny valley” (the slight feeling of wrongness in AI-generated humans) still persists, so high-end cinema and prestige television will likely rely on human actors and directors for the foreseeable future.

The timeline for a general release remains undisclosed, but the focus is clearly on stability and safety. As the model continues to be refined, the gap between “synthetic” and “captured” footage will continue to shrink, making the verification of visual evidence more difficult for journalists and investigators alike.

The next significant checkpoint for the technology will be the publication of the red teaming results and any subsequent updates to the model’s safety filters before a limited beta release is announced. Users are encouraged to follow official updates from OpenAI regarding access and usage terms.
