The dream of a truly “app-less” future arrived in a bright orange, pocket-sized box this year, promising to liberate users from the endless cycle of scrolling and tapping. The Rabbit R1, a handheld AI companion designed by Rabbit Inc., was marketed not as another smartphone accessory, but as a fundamental shift in how we interact with technology. Instead of opening an app to book a ride or order food, the R1 promised to do the work for you using a Large Action Model (LAM).

However, as the initial wave of hype settles, the reality of the device suggests a wide gap between the vision of agentic AI and the current state of hardware execution. While the R1 is a bold experiment in AI-first design, it currently functions more as a glimpse into a possible future than a reliable tool for the present. For those of us who have spent years building software, the ambition of the Large Action Model is fascinating, but the implementation reveals the steep climb ahead for dedicated AI hardware.

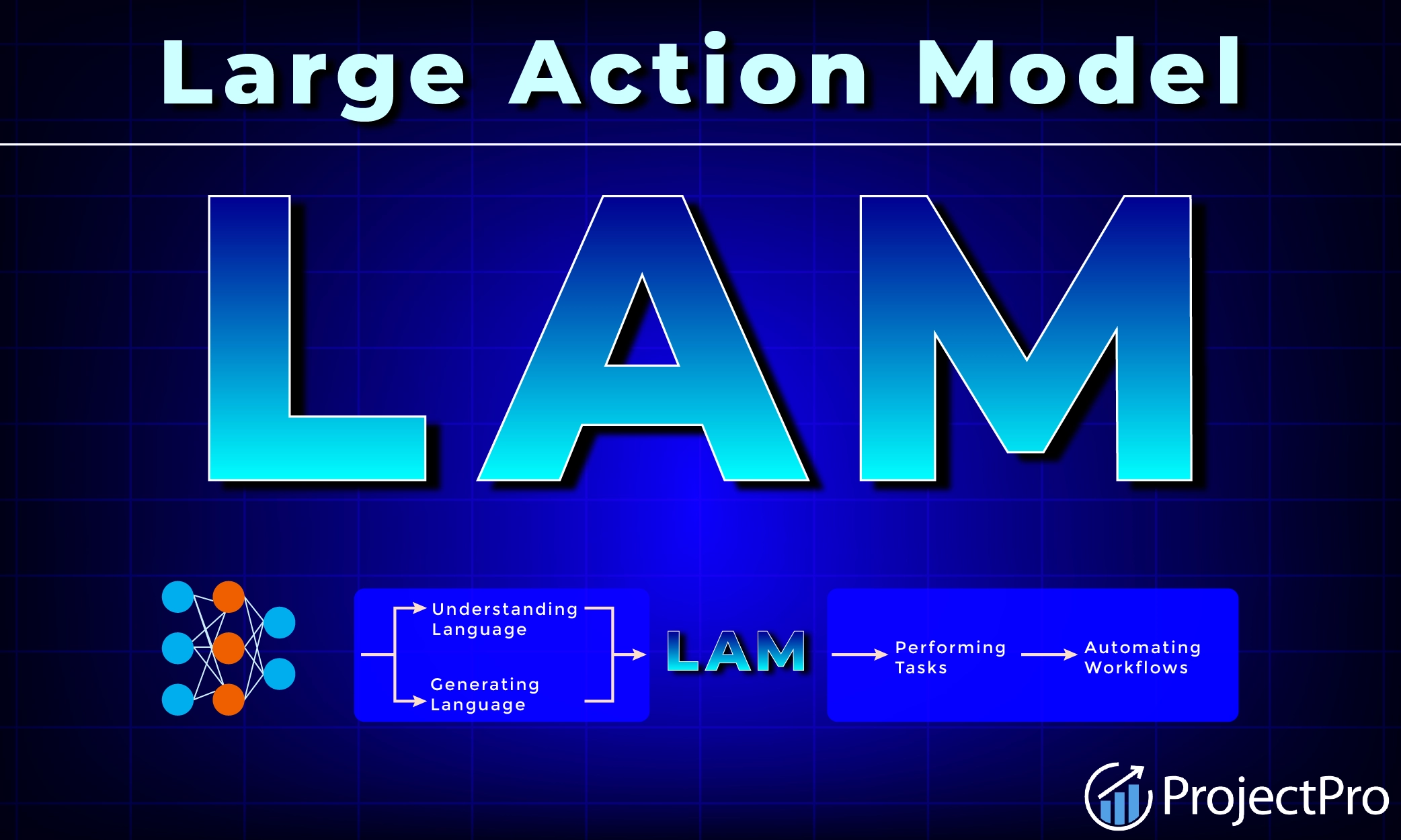

At its core, the Rabbit R1 is an attempt to move beyond the Large Language Models (LLMs) that power ChatGPT or Claude. While an LLM can tell you how to book a flight, a Large Action Model is designed to actually navigate the interface of a travel site and execute the booking. This “agentic” approach aims to treat the internet as a series of actions rather than a series of pages, effectively acting as a digital concierge that lives in your pocket.

The Ambition of the Large Action Model

The technical cornerstone of the device is the Rabbit LAM. Unlike traditional apps that rely on APIs—the digital bridges that allow two pieces of software to talk to each other—the LAM is trained on human interface interactions. It essentially “watches” how humans use apps and learns to mimic those actions. This allows the R1 to potentially interact with services that don’t have an open API, theoretically granting it access to almost any web-based service.

To make this work, Rabbit uses a “Rabbit Hole,” a cloud-based environment where users can log into their accounts (such as Uber, Spotify, or DoorDash). Once authenticated, the R1 can perform tasks on the user’s behalf in the cloud. From a software engineering perspective, this is a clever workaround to the “walled gardens” of the App Store and Google Play, but it introduces significant security and privacy considerations, as users must trust Rabbit Inc. with their primary account credentials.
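For readers who think in code, the difference between calling an API and mimicking interface actions can be illustrated with a toy sketch. Nothing here reflects Rabbit's actual implementation: the `UIAction` schema, the `FakeRideApp` stand-in, and the `replay` function are all hypothetical names invented for illustration. The sketch only shows the general idea of replaying recorded interface steps against a service that exposes fields and buttons but no API.

```python
from dataclasses import dataclass, field


@dataclass
class UIAction:
    """One recorded interface step (hypothetical schema, for illustration only)."""
    kind: str          # "type" or "click"
    target: str        # name of the on-screen element
    value: str = ""    # text to enter, used only by "type" actions


@dataclass
class FakeRideApp:
    """Stand-in for a web interface with no API, only form fields and a button."""
    fields: dict = field(default_factory=dict)
    log: list = field(default_factory=list)

    def type_into(self, target: str, value: str) -> None:
        self.fields[target] = value

    def click(self, target: str) -> None:
        if target != "request_ride":
            raise ValueError(f"unknown button: {target}")
        # The button only works once both fields are filled, as in a real form.
        if "pickup" not in self.fields or "dropoff" not in self.fields:
            raise ValueError("form incomplete")
        self.log.append(
            f"ride from {self.fields['pickup']} to {self.fields['dropoff']}"
        )


def replay(app: FakeRideApp, actions: list[UIAction]) -> None:
    """Perform recorded steps in order, the way a person would on screen."""
    for action in actions:
        if action.kind == "type":
            app.type_into(action.target, action.value)
        elif action.kind == "click":
            app.click(action.target)
        else:
            raise ValueError(f"unsupported action: {action.kind}")


# A recorded demonstration: fill in two fields, then press the button.
recorded = [
    UIAction("type", "pickup", "Home"),
    UIAction("type", "dropoff", "Airport"),
    UIAction("click", "request_ride"),
]

app = FakeRideApp()
replay(app, recorded)
print(app.log)  # ['ride from Home to Airport']
```

The trade-off the sketch hints at is that replaying interface steps needs no cooperation from the service, but it can break whenever a button is renamed or a form changes, which is one plausible reason action-based agents feel brittle in practice.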

The hardware itself, designed in collaboration with Teenage Engineering, is striking. It features a 2.8-inch touchscreen, a rotating “Rabbit Eye” camera for visual recognition, and a push-to-talk button that anchors the user experience. The goal was to create a device that feels tactile and intentional, moving away from the dopamine-loop design of the modern smartphone.

Performance vs. Promise

In practice, the Rabbit R1 often struggles to meet the high expectations set during its launch. While simple queries and basic AI chat functions work reasonably well, the “action” part of the Large Action Model is frequently inconsistent. Tasks that were demonstrated as seamless in promotional materials—like ordering a pizza or checking a ride-share price—often suffer from high latency or outright failure during real-world testing.

Many users have found that the R1 essentially functions as a dedicated interface for a series of LLMs, with the “action” capabilities feeling more like a beta product than a finished feature. The dependency on a cloud-based login system also means that any hiccup on Rabbit’s servers renders the device nearly useless. Battery life has been another recurring point of criticism, with some units struggling to last a full day of moderate use.

The following table summarizes the core specifications of the device as provided by the manufacturer:

| Feature | Specification |
|---|---|
| Operating System | Android-based (Custom) |
| Camera | 720p “Rabbit Eye” (Rotating) |
| Interaction | Push-to-Talk / Touchscreen |
| Core Tech | Large Action Model (LAM) |

The AI Hardware Race

The Rabbit R1 is not alone in this pursuit. It exists alongside other attempts to decouple AI from the smartphone, such as the Humane AI Pin. Both devices share a common struggle: the “smartphone gravity” problem. It is incredibly difficult to convince users to carry a second, less-capable device when their phone already possesses a camera, a screen, and an internet connection—and is now integrating its own AI agents via Apple Intelligence and Google Gemini.

For the R1 to succeed, it must prove that its agentic capabilities provide a “10x improvement” over simply using a voice assistant on a phone. Currently, the convenience of the Rabbit R1’s physical form is offset by the friction of its software reliability. However, the project serves as a critical case study in the transition from generative AI (which creates content) to agentic AI (which performs tasks).

The broader implication for the tech industry is the shift toward “invisible interfaces.” If the R1’s vision eventually succeeds, the concept of an “app” becomes an implementation detail rather than a user experience. We would stop thinking about *which* app to use and instead focus on *what* we want to achieve, leaving the AI to handle the navigation of the digital plumbing.

As Rabbit Inc. continues to push over-the-air software updates, the focus remains on refining the LAM’s accuracy and expanding the list of supported services. The next critical milestone for the company will be the release of more robust integration tools that allow the R1 to interact with a wider array of enterprise and personal software without requiring manual cloud logins for every single service.

We invite you to share your thoughts on the future of AI hardware in the comments below. Do you see a world without apps, or is the smartphone’s grip too strong to break?