Frankfurt is hosting a film screening and discussion centered on the critical issue of algorithmic bias, specifically as it relates to surveillance and decision-making systems. The event, focused on the documentary “Coded Bias,” aims to raise awareness about how artificial intelligence (AI) can perpetuate and even amplify existing societal inequalities. This conversation is increasingly vital as AI systems become more integrated into everyday life, affecting areas from loan applications to criminal justice.

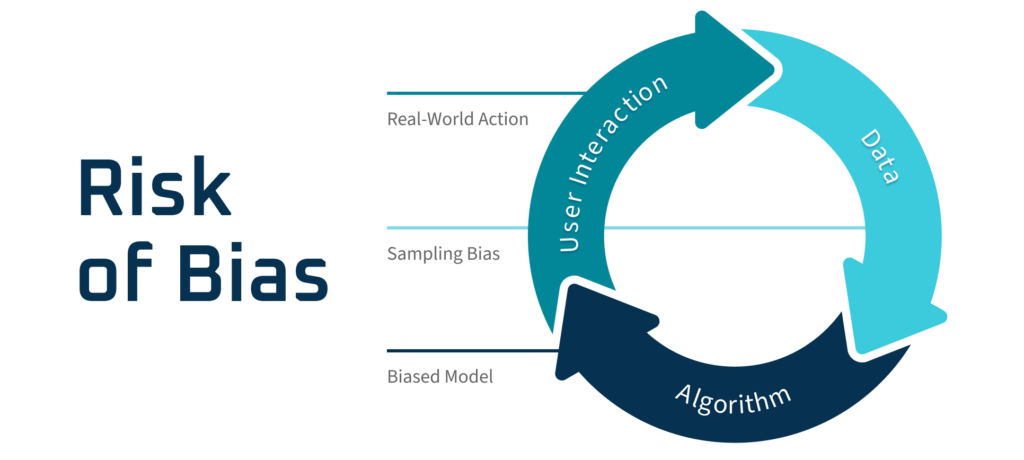

The core concern highlighted by “Coded Bias” – and now being brought to the forefront in Frankfurt – is that AI isn’t neutral. These systems are built by humans and trained on data that often reflects historical biases, so they can produce discriminatory outcomes. This isn’t a hypothetical problem; the film presents real-world examples of facial recognition technology misidentifying people of color at disproportionately high rates, and of hiring algorithms favoring certain demographics over others. Understanding algorithmic bias is crucial for ensuring fairness and equity in an increasingly automated world.

The event in Frankfurt isn’t simply a screening; it’s designed to be a catalyst for dialogue. Organizers intend to foster a discussion about the ethical implications of AI, the need for greater transparency in algorithmic design, and potential solutions to mitigate bias. This is particularly relevant in the context of growing surveillance technologies and their potential impact on civil liberties. The city’s involvement signals a growing recognition of the need to proactively address these challenges at a local level.

The Roots of Bias in Artificial Intelligence

The issue of bias in AI stems from several interconnected factors. One primary source is the data used to train these systems. If the training data is skewed – for example, if it predominantly features images of one race or gender – the AI will likely perform poorly, or even unfairly, when encountering data outside of that representation. Joy Buolamwini, founder of the Algorithmic Justice League and featured in “Coded Bias,” demonstrated this vividly in her research, showing how facial recognition systems struggled to accurately identify darker-skinned faces. The Algorithmic Justice League continues to advocate for equitable and accountable AI.

Beyond biased data, the algorithms themselves can be inherently biased due to the choices made by their creators. The selection of features, the weighting of different variables, and the overall design of the algorithm can all introduce bias, even unintentionally. The lack of diversity within the tech industry itself contributes to the problem. A more diverse team of developers is more likely to identify and address potential biases in their work.

Surveillance and the Amplification of Inequality

The film’s focus on surveillance systems is particularly pertinent. Facial recognition technology, increasingly deployed by law enforcement agencies and in public spaces, has been shown to be less accurate when identifying individuals with darker skin tones. This can lead to wrongful arrests, misidentification, and increased scrutiny of marginalized communities. A 2019 study by the National Institute of Standards and Technology (NIST) confirmed significant disparities in the accuracy of facial recognition algorithms across different demographic groups.

The use of AI in predictive policing also raises concerns. These systems analyze crime data to predict where future crimes are likely to occur, and then deploy police resources accordingly. However, if the underlying crime data reflects existing biases in policing practices – for example, if certain neighborhoods are disproportionately targeted – the AI will simply reinforce those biases, leading to a self-fulfilling prophecy of increased surveillance and arrests in those areas.
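The feedback loop described above can be made concrete with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real predictive policing product: the function name, the two hypothetical neighborhoods, and all numbers are invented for illustration. Both neighborhoods are given the same underlying crime rate, patrols are allocated in proportion to crimes recorded so far, and new records depend only on how many patrols were present.

```python
def simulate_patrol_feedback(initial_records, true_rate, patrols_per_round, rounds):
    """Toy model: patrols follow recorded crime, and records follow patrols.

    All parameters are hypothetical. `true_rate` is the same for every
    neighborhood, so any difference in the records comes from where
    patrols were sent, not from differences in actual crime.
    """
    records = list(initial_records)
    for _ in range(rounds):
        total = sum(records)
        # Allocate patrols in proportion to crimes recorded so far.
        patrols = [patrols_per_round * r / total for r in records]
        # Each patrol records crime at the same rate everywhere.
        for i, patrol_count in enumerate(patrols):
            records[i] += patrol_count * true_rate
    return records


# Neighborhood A starts with more recorded crime only because of past policing.
records = simulate_patrol_feedback(
    initial_records=[60.0, 40.0], true_rate=0.5, patrols_per_round=100, rounds=20
)
share_a = records[0] / sum(records)
gap = records[0] - records[1]
print(f"Share of records in A: {share_a:.2f}")  # 0.60
print(f"Gap in recorded crimes: {gap:.0f}")     # 220
```

In this sketch the initial 60/40 skew never corrects itself, even though the underlying rates are identical, and the absolute gap in recorded crimes grows from 20 to 220. More aggressive allocation rules, such as always sending extra patrols to the highest-ranked area, would be expected to widen the skew further.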

Frankfurt’s Response and the Path Forward

The event in Frankfurt represents a proactive step towards addressing these challenges. By bringing “Coded Bias” to a wider audience and facilitating a public discussion, the city is encouraging critical thinking about the ethical implications of AI. The discussion will likely cover potential regulatory frameworks, the need for greater transparency in algorithmic decision-making, and the importance of investing in research to develop more equitable AI systems.

Several key areas are emerging as crucial for mitigating algorithmic bias. These include:

- Data Diversity: Ensuring that training data is representative of the population it will be used to serve.

- Algorithmic Auditing: Regularly evaluating AI systems for bias and discrimination.

- Transparency and Explainability: Making the decision-making processes of AI systems more understandable.

- Accountability: Establishing clear lines of responsibility for the outcomes of AI-driven decisions.
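As a rough illustration of what the algorithmic auditing item above could involve, the sketch below compares how often a system selects people from each group and how often it wrongly flags them. The function names, the record format, and the toy data are assumptions made for this example; a real audit would use many more records, more metrics, and legally informed thresholds.

```python
from collections import defaultdict


def audit_by_group(records):
    """Compute per-group selection rate and false positive rate.

    `records` is an iterable of (group, predicted_positive, actual_positive)
    tuples. This format is a hypothetical choice for the example.
    """
    counts = defaultdict(
        lambda: {"n": 0, "selected": 0, "negatives": 0, "false_pos": 0}
    )
    for group, predicted, actual in records:
        c = counts[group]
        c["n"] += 1
        c["selected"] += int(predicted)
        if not actual:
            c["negatives"] += 1
            c["false_pos"] += int(predicted)

    report = {}
    for group, c in counts.items():
        report[group] = {
            "selection_rate": c["selected"] / c["n"],
            # Undefined when a group has no true negatives in the sample.
            "false_positive_rate": (
                c["false_pos"] / c["negatives"] if c["negatives"] else None
            ),
        }
    return report


def selection_rate_ratio(report):
    """Ratio of the lowest to the highest group selection rate (1.0 = parity)."""
    rates = [metrics["selection_rate"] for metrics in report.values()]
    return min(rates) / max(rates) if max(rates) > 0 else None


# Toy data: (group, system said "positive", ground truth was positive).
toy_records = [
    ("A", True, True), ("A", True, False), ("A", False, False), ("A", False, False),
    ("B", True, True), ("B", False, True), ("B", False, False), ("B", False, False),
]
report = audit_by_group(toy_records)
ratio = selection_rate_ratio(report)
print(report)
print(f"Selection rate ratio: {ratio:.2f}")  # 0.50
```

Here group B is selected half as often as group A, while group A has the higher false positive rate. Which of these gaps matters most, and how large a gap is acceptable, is a policy question rather than something the code can decide.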

The conversation extends beyond technical solutions. Addressing the root causes of societal inequality is essential for creating a more just and equitable AI landscape. This requires a multi-faceted approach involving policymakers, researchers, developers, and community stakeholders. The event in Frankfurt is a valuable opportunity to begin that conversation and explore potential pathways forward. Awareness of AI ethics and of the need for responsible innovation is growing worldwide, and Frankfurt’s initiative aligns with that movement.

The next step following the film screening and discussion in Frankfurt will be a report summarizing the key takeaways and recommendations from the event, to be published by the Stadt Frankfurt’s technology and innovation department in early November. This report will inform future policy decisions related to the use of AI within the city.
