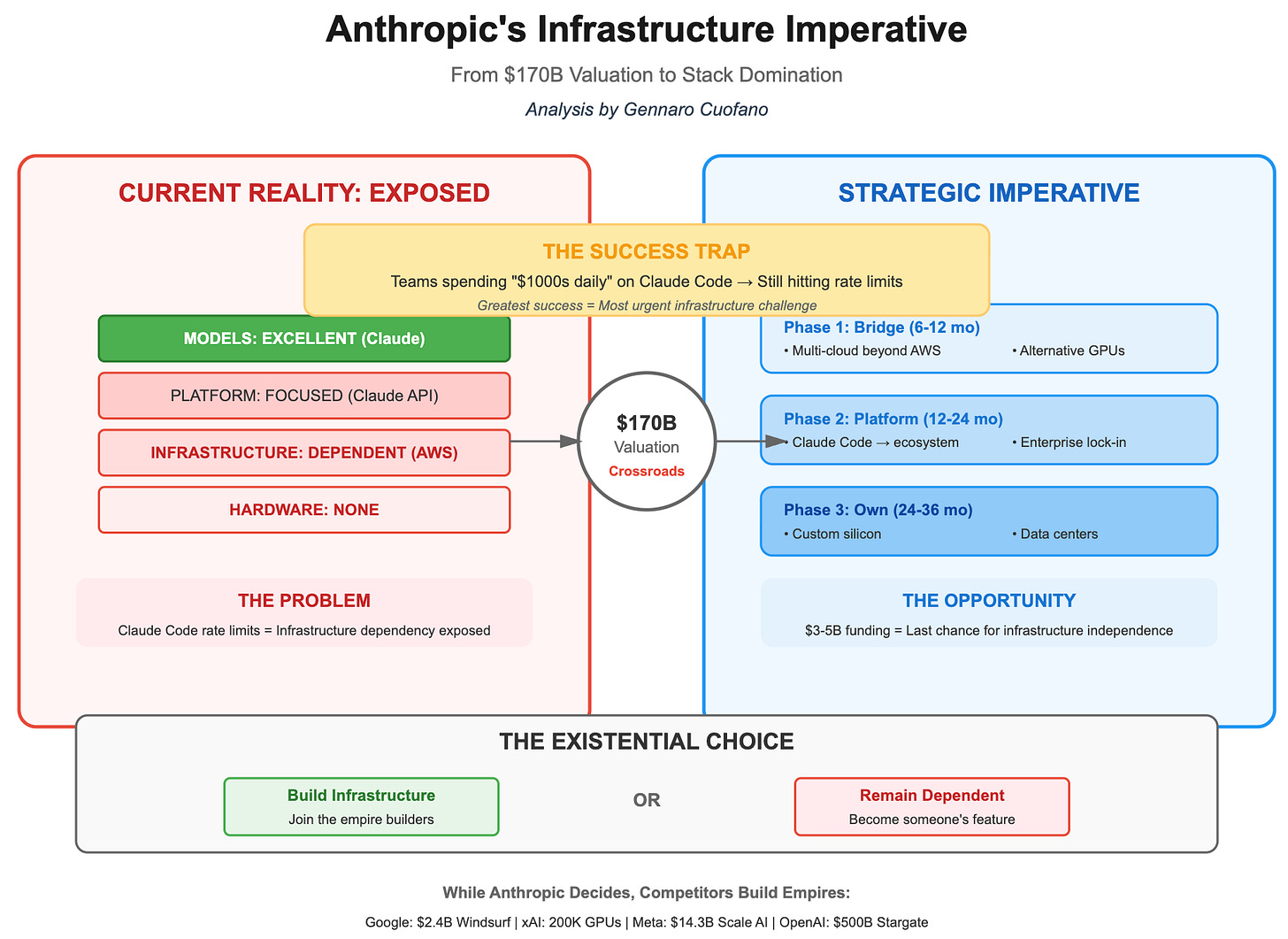

Anthropic is exploring the possibility of designing its own AI chips, according to a report from Reuters. The move signals a strategic pivot for the San Francisco-based AI lab as it seeks to reduce its reliance on third-party hardware and optimize the massive compute requirements of its Claude model family.

The initiative is in its early stages. Sources familiar with the matter indicate that the company has not yet committed to a specific hardware design, nor has it assembled a dedicated team to lead the project. While the exploration is active, Anthropic may still decide to continue purchasing silicon from external vendors rather than venturing into the high-risk world of custom chip development.

A spokesperson for Anthropic declined to comment on the reports. However, the timing of this exploration coincides with a period of explosive financial growth for the company, which recently disclosed that its annualized revenue run rate has surpassed $30 billion—a sharp increase from approximately $9 billion at the end of 2025.

The Economics of Custom Silicon

For a company of Anthropic’s scale, the decision to build proprietary hardware is primarily a matter of economics and efficiency. Currently, the company uses a diversified “mix and match” approach to compute, deploying workloads across Nvidia GPUs, Amazon’s custom chips, and Tensor Processing Units (TPUs) developed by Google in partnership with Broadcom.

By designing its own silicon, Anthropic could theoretically tailor the hardware to the specific mathematical requirements of its large language models, potentially increasing performance while lowering the astronomical costs of energy and hardware procurement. This trajectory mirrors a broader trend among “hyperscalers” and AI labs. Meta and OpenAI are both pursuing custom silicon to escape the “Nvidia tax” and the supply chain bottlenecks associated with general-purpose GPUs.

However, the barrier to entry is steep. Industry sources estimate the development cost of a single advanced AI chip at roughly $500 million. This figure covers the recruitment of specialized silicon engineers and the rigorous process of validating manufacturing runs. While this is a significant capital outlay for a company that remains unprofitable, it becomes a more manageable investment when weighed against a revenue base that has more than tripled in just four months.

The Google and Broadcom Connection

The exploration of internal chip design comes immediately after Anthropic solidified its existing infrastructure partnerships. The company recently signed a long-term agreement with Google and Broadcom to secure approximately 3.5 gigawatts of TPU-based compute capacity starting in 2027. This represents a massive scale-up from the roughly one gigawatt the company was consuming in early 2026.

According to a Broadcom SEC filing, this expanded deployment is contingent on Anthropic’s continued commercial success—a notable hedge for a regulatory document that underscores the volatility and high stakes of the AI arms race. This deal further integrates Anthropic into the Broadcom ecosystem, as the firm also serves as a chip design partner for OpenAI.

This complex relationship creates an interesting tension: Anthropic is simultaneously doubling down on external TPU capacity while investigating how to build its own alternative. This “hedging” strategy ensures that the company has the compute it needs in the near term while preparing for a scenario in which it owns the entire stack, from the weights of the model down to the transistors of the chip.

Compute Infrastructure Roadmap

| Timeline | Event/Milestone | Scale/Value |
|---|---|---|
| Nov 2025 | US Computing Infrastructure Commitment | $50 Billion |
| Early 2026 | TPU Compute Consumption | ~1 Gigawatt |
| 2027 (Planned) | Google/Broadcom TPU Capacity | 3.5 Gigawatts |
| Current | Annualized Revenue Run Rate | $30+ Billion |
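
To put the reported figures side by side, the short Python sketch below works through the ratios implied by the numbers in this article. It is a back-of-the-envelope illustration only: the constant and function names are made up for this example, the $30 billion figure is treated as a lower bound, and comparing a one-time development cost to an annualized run rate is a rough yardstick, not an accounting method.

```python
# Figures as reported in the article (billions of USD, gigawatts).
REVENUE_RUN_RATE_END_2025_B = 9.0   # "approximately $9 billion"
REVENUE_RUN_RATE_NOW_B = 30.0       # "surpassed $30 billion" (lower bound)
TPU_CAPACITY_EARLY_2026_GW = 1.0    # "roughly one gigawatt"
TPU_CAPACITY_2027_GW = 3.5          # "approximately 3.5 gigawatts"
CHIP_DEV_COST_B = 0.5               # "roughly $500 million" per chip


def growth_multiple(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


revenue_multiple = growth_multiple(REVENUE_RUN_RATE_END_2025_B, REVENUE_RUN_RATE_NOW_B)
compute_multiple = growth_multiple(TPU_CAPACITY_EARLY_2026_GW, TPU_CAPACITY_2027_GW)
# One-time chip development cost relative to the annualized run rate.
chip_cost_share = CHIP_DEV_COST_B / REVENUE_RUN_RATE_NOW_B

print(f"Revenue run rate multiple: {revenue_multiple:.2f}x")            # 3.33x
print(f"TPU capacity multiple: {compute_multiple:.2f}x")                # 3.50x
print(f"One chip program as share of run rate: {chip_cost_share:.1%}")  # 1.7%
```

On these numbers, the planned TPU capacity grows by roughly the same multiple as the revenue run rate, and a single chip program would cost under 2% of a year of revenue at the current run rate.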

Strategic Implications for the AI Market

If Anthropic successfully transitions to its own silicon, it would join an elite group of companies capable of vertical integration. Vertical integration, meaning control of both the hardware and the software, allows for optimizations that are impossible when relying on general-purpose hardware. For AI, this means faster inference times, lower latency for users of Claude, and a significant reduction in the power-per-token cost.

The move also places Broadcom in a pivotal position. As the bridge between the AI labs and the fabrication plants (foundries), Broadcom is effectively the “arms dealer” for the custom AI silicon market. Whether Anthropic decides to build its own chips from scratch or partners with a firm like Broadcom to design them, the shift away from general-purpose GPUs toward specialized XPUs is accelerating.

For the broader industry, this signals that the “compute moat” is evolving. It is no longer just about who has the most chips, but who has the most efficient architecture. As the cost of training the next generation of models climbs into the billions, the ability to shave even a small percentage off the energy cost through custom silicon can result in savings of hundreds of millions of dollars.
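
The savings claim is simple arithmetic, and a minimal Python sketch makes the relationship explicit. Only the $500 million development cost comes from the reporting above. The annual energy cost and the efficiency gain are hypothetical placeholders chosen to illustrate the calculation, not figures disclosed by Anthropic or any vendor, and the function names are invented for this example.

```python
# Reported estimate from the article for developing one advanced AI chip.
CHIP_DEV_COST_USD = 500e6

# HYPOTHETICAL inputs, chosen only to illustrate the arithmetic.
HYPOTHETICAL_ANNUAL_ENERGY_COST_USD = 5e9  # assumed yearly energy bill
HYPOTHETICAL_EFFICIENCY_GAIN = 0.05        # assumed 5% reduction


def annual_savings(annual_energy_cost_usd: float, efficiency_gain: float) -> float:
    """Dollars saved per year if energy cost falls by `efficiency_gain` (0 to 1)."""
    if not 0.0 <= efficiency_gain <= 1.0:
        raise ValueError("efficiency_gain must be between 0 and 1")
    return annual_energy_cost_usd * efficiency_gain


def payback_years(dev_cost_usd: float, annual_energy_cost_usd: float,
                  efficiency_gain: float) -> float:
    """Years of savings needed to recover the development cost."""
    savings_per_year = annual_savings(annual_energy_cost_usd, efficiency_gain)
    if savings_per_year <= 0:
        return float("inf")  # no savings means the cost is never recovered
    return dev_cost_usd / savings_per_year


savings = annual_savings(HYPOTHETICAL_ANNUAL_ENERGY_COST_USD,
                         HYPOTHETICAL_EFFICIENCY_GAIN)
years = payback_years(CHIP_DEV_COST_USD,
                      HYPOTHETICAL_ANNUAL_ENERGY_COST_USD,
                      HYPOTHETICAL_EFFICIENCY_GAIN)

print(f"Annual savings: ${savings:,.0f}")
print(f"Payback period: {years:.1f} years")
```

Changing the two hypothetical inputs shows how sensitive the case for custom silicon is to scale: the same percentage gain on a smaller energy bill stretches the payback period proportionally.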

The next critical checkpoint for Anthropic’s hardware ambitions will likely be the formal assembly of a dedicated silicon engineering team or the announcement of a partnership with a foundry. Until then, the company remains in a state of exploration, balancing the immediate need for massive external compute with the long-term goal of hardware independence.
