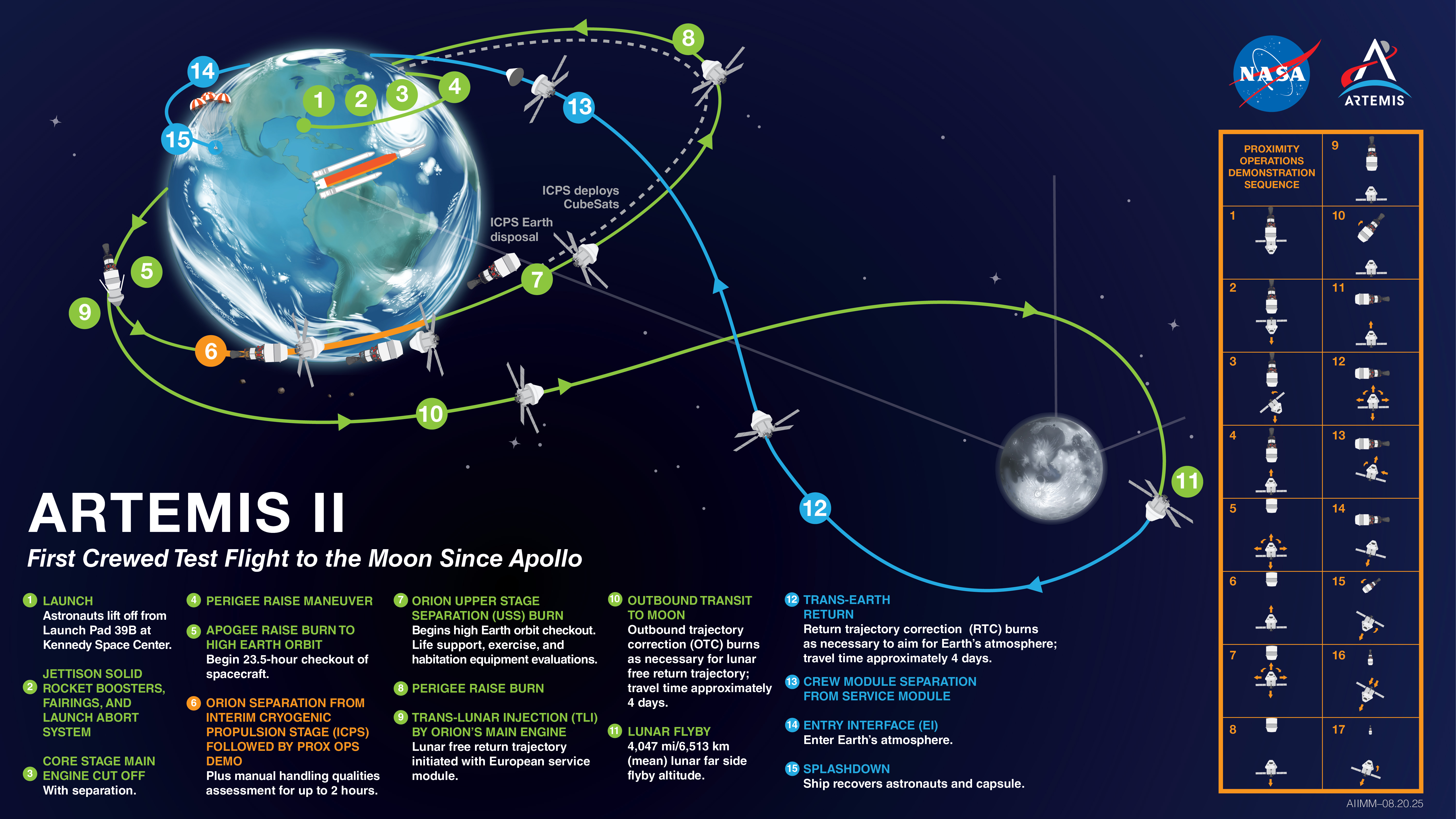

For decades, the imagery returning from deep space was the product of massive, specialized hardware—cameras designed by engineers to survive the vacuum of space and the crushing forces of launch. But as NASA prepares for the Artemis II mission, the tools used to capture the cosmos are becoming surprisingly familiar. The inclusion of high-end consumer smartphones on the journey around the moon marks a pivot in how space agencies approach documentation and data collection.

The integration of smartphones in space is no longer just about astronaut convenience or social media updates; it is a calculated test of modern computational photography and hardware resilience. By bringing commercial-off-the-shelf (COTS) technology into the lunar environment, NASA is exploring whether the sophisticated image processing found in a pocket-sized device can supplement or even replace some of the bulkier equipment traditionally required for mission documentation.

As a former software engineer, I find the technical shift particularly compelling. We are moving away from the era of “radiation-hardened” everything and toward a hybrid model where the sheer power of modern mobile chipsets—which can perform trillions of operations per second—offers a level of flexibility that legacy space hardware simply cannot match.

The shift toward consumer optics in deep space

The decision to utilize iPhones on the Artemis II mission reflects a broader trend in aerospace engineering: the realization that the pace of consumer electronics innovation far outstrips the development cycle of government-funded space hardware. A smartphone released today possesses more computing power and better optical stabilization than the dedicated cameras used on many previous orbital missions.

For the Artemis II crew (Commander Reid Wiseman, Pilot Victor Glover, and Mission Specialists Christina Koch and Jeremy Hansen), these devices serve as more than just cameras. They let the crew capture, review, and share imagery quickly. High-resolution 4K video and advanced HDR (High Dynamic Range) processing allow the crew to document the lunar flyby with a level of visual fidelity that is immediately accessible and shareable.

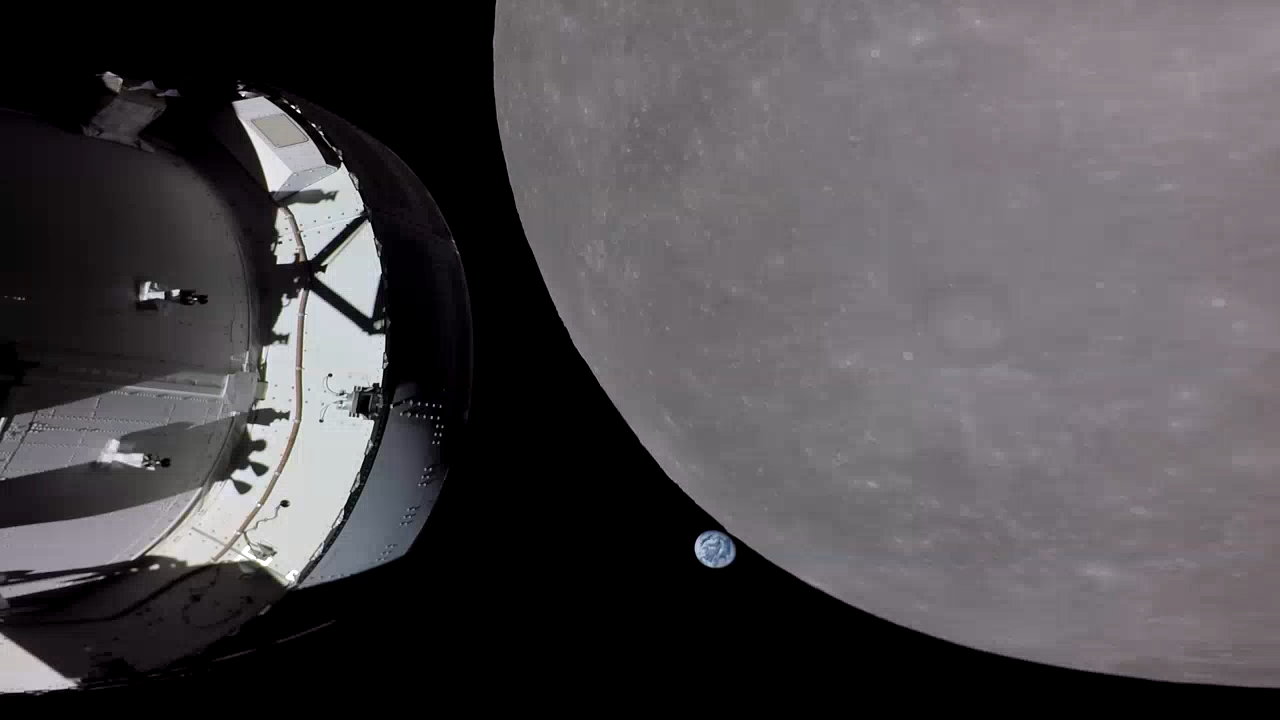

This “mainstreaming” of mobile technology allows NASA to conduct camera tests that would be prohibitively expensive to develop from scratch. By using devices that millions of people already own, the agency can gather data on how consumer-grade sensors handle the extreme lighting contrasts of the lunar environment—where the blinding brightness of the sun meets the absolute black of the void.

Technical challenges of mobile hardware in orbit

Despite their power, consumer smartphones are not built for the lunar environment. Bringing a mobile device beyond Low Earth Orbit (LEO) introduces several critical engineering hurdles that the mission team must manage.

- Radiation Exposure: Outside the protection of Earth’s magnetic field, cosmic rays and solar particles can cause “bit flips” in memory or permanently damage the silicon in a smartphone’s SoC (System on a Chip).

- Thermal Regulation: Without an atmosphere to dissipate heat, devices can overheat rapidly during intense processing tasks, such as recording long 4K sequences, or freeze during periods of shadow.

- Battery Chemistry: Lithium-ion batteries behave differently in microgravity and extreme temperature swings, requiring strict monitoring to prevent swelling or failure.
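To make the “bit flip” risk from the list above concrete, here is a minimal Python sketch. It is an illustration only, not a description of how NASA or any phone vendor protects memory: the `flip_bit` and `is_intact` helpers are hypothetical names, and the CRC-32 checksum stands in for whatever integrity checks a real system might use.

```python
import zlib


def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Return a copy of data with one bit inverted, mimicking a single-event upset."""
    corrupted = bytearray(data)
    byte_index, offset = divmod(bit_index, 8)
    corrupted[byte_index] ^= 1 << offset
    return bytes(corrupted)


def is_intact(data: bytes, expected_crc: int) -> bool:
    """Compare the data's CRC-32 against a checksum recorded before exposure."""
    return zlib.crc32(data) == expected_crc


original = b"ARTEMIS"                  # stand-in for a block of stored data
checksum = zlib.crc32(original)        # recorded while the data is known to be good

damaged = flip_bit(original, 10)       # hypothetical upset in a single bit

print(is_intact(original, checksum))   # the untouched copy still matches
print(is_intact(damaged, checksum))    # the flipped bit is detected
```

A checksum like this only detects the damage. Correcting it requires redundancy, such as error-correcting memory or keeping multiple copies, which is one reason non-hardened devices are kept away from critical tasks.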

Because these devices are not radiation-hardened, they are typically used for non-critical mission tasks. However, the data they produce provides an invaluable benchmark for future “space-native” consumer electronics.

Why the iPhone specifically?

The choice of the iPhone for these tests likely stems from the maturity of its ecosystem and the consistency of its hardware. For NASA, the appeal lies in the intersection of hardware and software. Modern iPhones utilize a “computational photography” pipeline that automatically adjusts exposure and noise reduction in real-time—a feature that is incredibly useful when photographing the Earth from a distance where lighting is erratic.
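As a rough illustration of what blending exposures means, the Python sketch below merges a short and a long exposure of the same scene, trusting each pixel more the further it is from being crushed or clipped. This is a toy version of the general exposure-fusion idea, not Apple's actual pipeline; the function names, the weighting formula, and the sample pixel values are all assumptions made for the example.

```python
from typing import List, Sequence


def well_exposedness(value: float) -> float:
    """Weight a pixel (0.0 to 1.0) by its distance from clipping; peaks at mid-gray."""
    return 1.0 - abs(value - 0.5) * 2.0


def fuse(exposures: Sequence[Sequence[float]]) -> List[float]:
    """Blend aligned exposures pixel by pixel using well-exposedness weights."""
    fused = []
    for pixels in zip(*exposures):
        # A small epsilon avoids division by zero when every sample is clipped.
        weights = [well_exposedness(p) + 1e-6 for p in pixels]
        total = sum(weights)
        fused.append(sum(w * p for w, p in zip(weights, pixels)) / total)
    return fused


# Hypothetical three-pixel scene: deep shadow, mid-tone, sunlit surface.
short_exposure = [0.02, 0.45, 0.60]   # shadows crushed, highlights preserved
long_exposure = [0.40, 0.95, 1.00]    # shadows lifted, highlights clipped

result = fuse([short_exposure, long_exposure])
print([round(v, 3) for v in result])
```

In the shadow pixel the long exposure dominates, and in the sunlit pixel the short exposure does, so the fused frame keeps detail at both ends of the brightness range. Real pipelines also align frames and reduce noise, which this sketch leaves out.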

| Feature | Specialized Space Hardware | Consumer Smartphones |
|---|---|---|
| Development Cycle | Years/Decades | Annual Updates |
| Processing | Dedicated, Radiation-Hardened | High-Speed SoC (ARM-based) |
| Weight/Bulk | Heavy, Modular | Ultra-Light, Integrated |
| Data Handling | Proprietary Formats | Universal (JPEG, HEVC) |

By leveraging these devices, the crew can capture “Earth-rise” style imagery that is optimized for public consumption without requiring hours of post-processing by ground teams. This immediacy is key to the Artemis program’s goal of inspiring a new generation by making the mission feel tangible and modern.

The broader implication for future exploration

The move toward smartphones in space is a precursor to a more decentralized approach to space exploration. As commercial companies like SpaceX and Axiom Space take a larger role in lunar and orbital logistics, the reliance on bespoke, government-made hardware is decreasing. We are entering an era of “plug-and-play” spaceflight.

If consumer devices can prove their reliability during the Artemis II flyby, we may soon see the development of “space-grade” consumer electronics—phones and tablets specifically designed with shielded components but maintaining the user interface and power of a standard smartphone. This would fundamentally change how astronauts interact with their environment, moving from clunky laptops and tablets to seamless, integrated mobile workflows.

The presence of a smartphone on a trip to the moon is a symbol of how the boundary between “Earth tech” and “Space tech” is dissolving. The tools we use to navigate our daily lives are now capable of documenting the furthest reaches of human exploration.

The next confirmed milestone for the program is the continued testing of the Orion spacecraft and the final crew preparations for the Artemis II launch, currently targeted for late 2025. As the mission approaches, NASA is expected to release more details regarding the specific payloads and experimental tools the crew will carry.
