The concept of moving the cloud into the cosmos is no longer confined to the realm of science fiction. As terrestrial data centers face mounting pressure from energy shortages and land constraints, the prospect of hosting data centers in space has become a serious point of discussion for aerospace engineers and tech giants alike. The logic is tempting: abundant solar energy, a natural heat sink in the vacuum of space, and the potential for global low-latency connectivity.

However, moving from terrestrial server farms to orbital infrastructure requires more than just a larger rocket. The leap involves solving a complex puzzle of orbital mechanics, environmental sustainability, and autonomous engineering. To make this vision viable, industry experts suggest that four primary technical and economic hurdles must be overcome.

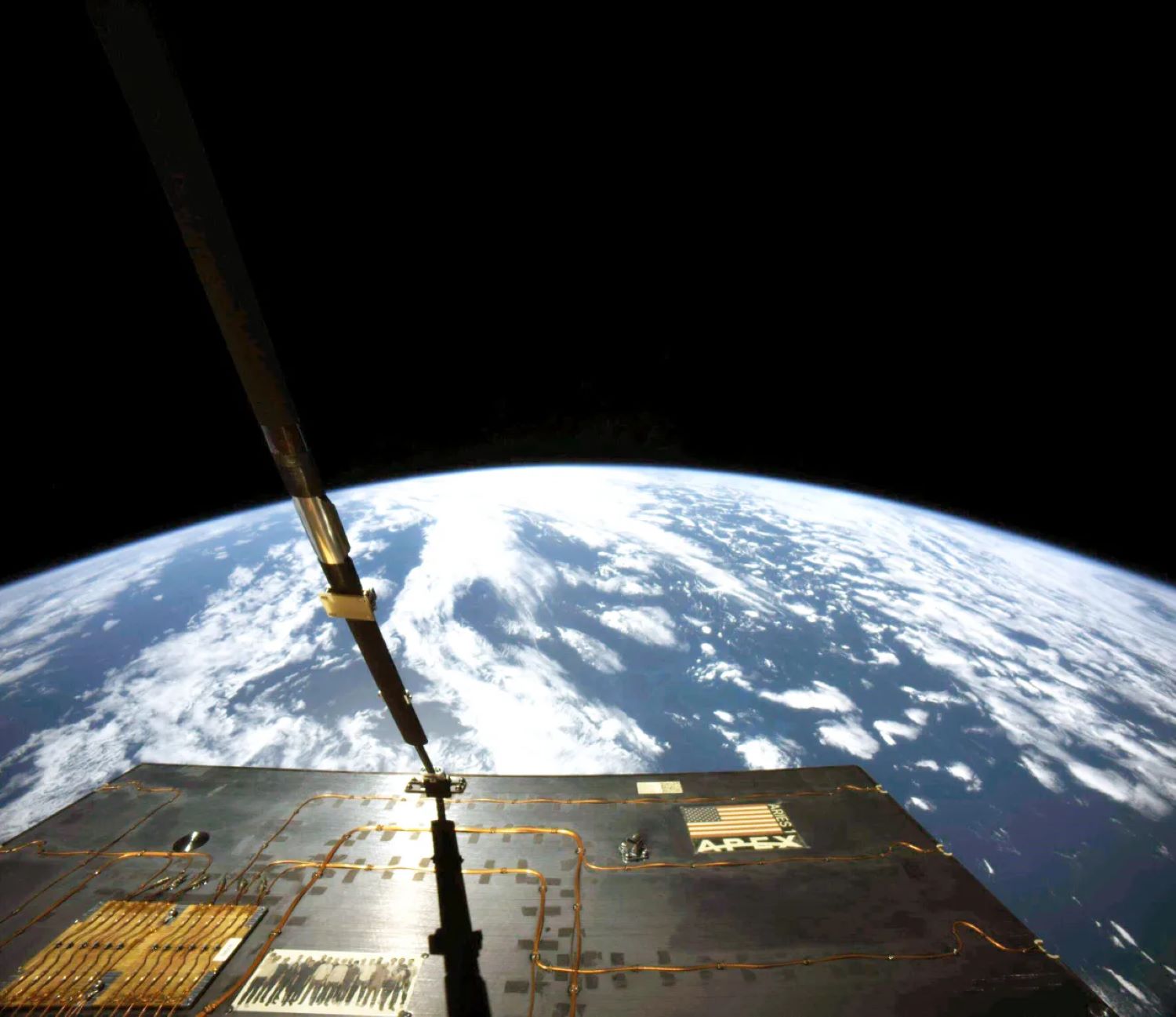

Among the most pressing concerns is the sheer volatility of the environment. Low Earth orbit (LEO)—the region extending up to 2,000 kilometers above the surface—is becoming increasingly crowded. For a large-scale data infrastructure to survive, it must navigate a minefield of debris while operating within the strict physical limits of orbital “shells.”

The bottleneck of orbital traffic

The first challenge is a matter of real estate. Space is vast, but the specific altitudes required for stable, low-latency communication are limited. Greg Vialle, founder of the orbital recycling startup Lunexus Space, notes that the number of satellites that can safely occupy LEO is far lower than many imagine. According to Vialle, a single orbital shell can accommodate roughly four to five thousand satellites.

When accounting for all available shells in LEO, the theoretical maximum capacity is approximately 240,000 satellites. This creates a significant problem for companies envisioning “mega-constellations” of a million units to support orbital computing. For a network of that size to function, it would likely need to be managed by a single entity to ensure satellites can communicate and maneuver around one another effectively.
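The gap between those figures is easy to check with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the variable names are illustrative, and the implied shell count is an inference from dividing the total by the per-shell range, not a figure given by Vialle.

```python
# Figures quoted in the article; the names are illustrative.
LEO_CAPACITY = 240_000             # theoretical maximum across all LEO shells
PER_SHELL_RANGE = (4_000, 5_000)   # "roughly four to five thousand" per shell
PROPOSED_FLEET = 1_000_000         # a million-unit mega-constellation

# Inferred, not stated in the article: how many shells the total implies.
shells_min = LEO_CAPACITY / PER_SHELL_RANGE[1]
shells_max = LEO_CAPACITY / PER_SHELL_RANGE[0]

# How many times over the proposed fleet would exceed the theoretical ceiling.
oversubscription = PROPOSED_FLEET / LEO_CAPACITY

print(f"Implied shells: {shells_min:.0f} to {shells_max:.0f}")
print(f"A million-unit fleet is {oversubscription:.1f}x the stated LEO capacity")
```

On these numbers, a million-satellite constellation would need roughly four times the room that LEO is estimated to offer, before any competing operator launches a single spacecraft.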

Safety margins are also a critical constraint. To allow spacecraft to move between orbits or safely de-orbit at the end of their life cycles, gaps of at least 10 kilometers must be maintained between satellites. While tightly packed constellations like SpaceX’s Starlink can manage this through inter-satellite communication, a fragmented ecosystem of competing orbital data centers could lead to a chaotic and dangerous traffic environment.

Mitigating the debris dilemma

Even if the traffic is managed, the physical environment remains hostile. Large-scale data centers would require massive solar arrays, hundreds of square meters in size, to power high-performance computing clusters. These arrays are highly susceptible to damage from micrometeoroids and small pieces of space debris, which can degrade performance over time and create further fragments of junk in orbit.

The environmental impact extends beyond the vacuum of space. Hardware in orbit eventually fails or becomes obsolete, necessitating regular upgrades. If a company were to replace a million satellites every five years, the volume of reentry debris would skyrocket. Astronomers have warned that this could increase the rate of debris falling back into the atmosphere from a few pieces per day to roughly one every three minutes.

This constant rain of incinerating metal is not just a tracking nuisance. Some scientists express concern that the resulting atmospheric pollution could damage the ozone layer and disrupt Earth’s thermal balance, effectively trading a terrestrial environmental crisis for an atmospheric one.
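The reentry rate cited by astronomers is consistent with simple arithmetic on the replacement scenario. The sketch below is only a sanity check, not the astronomers' method, which the article does not describe. It assumes each retired satellite counts as one reentering object and that replacements are spread evenly across the five-year cycle; both assumptions are ours.

```python
# Scenario from the article: a million satellites replaced every five years.
FLEET_SIZE = 1_000_000
REPLACEMENT_CYCLE_YEARS = 5

# Assumptions for this sketch: one reentering object per satellite,
# retirements spread evenly over the cycle.
DAYS_PER_YEAR = 365.25
MINUTES_PER_DAY = 24 * 60

reentries_per_year = FLEET_SIZE / REPLACEMENT_CYCLE_YEARS
reentries_per_day = reentries_per_year / DAYS_PER_YEAR
minutes_between_reentries = MINUTES_PER_DAY / reentries_per_day

print(f"Reentries per day: {reentries_per_day:.0f}")
print(f"Average gap: {minutes_between_reentries:.1f} minutes")
```

Under those assumptions the fleet would shed several hundred objects a day, an average gap of a little under three minutes, which lines up with the "one every three minutes" warning.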

The economics of the launch

For orbital data centers to be financially sustainable, the cost of getting hardware into space must plummet. The return on investment depends entirely on the lifespan of the hardware versus the cost of the launch. Currently, the industry is looking toward “mega-rockets” to bridge this gap.

SpaceX is banking on the Starship system, which is designed to carry up to six times the payload of the Falcon 9, the current industry workhorse. This increase in capacity is essential because the sheer mass of server racks, cooling systems, and power shielding would make launches on smaller rockets prohibitively expensive. The need for heavy-lift capability is a global trend; a study by Thales Alenia Space concluded that for Europe to compete in the orbital data center market, it would need to develop a launcher of comparable capability.

| Requirement | Current Standard (Falcon 9) | Future Target (Starship/Mega-Rockets) |
|---|---|---|
| Payload Capacity | Moderate | Up to 6x increase |
| Infrastructure Goal | Small Satellite Deployment | Large-Scale Data Center Components |
| Deployment Style | Single-Launch Satellites | Multi-Launch Orbital Assembly |

The necessity of orbital assembly

The final hurdle is a physical one: a fully functional orbital data center is simply too large to fit inside any existing rocket fairing. Even the largest mega-rockets cannot launch a completed data center in one piece.

This means the industry must move toward in-orbit assembly. The shift requires advanced autonomous robotic systems capable of precision docking, welding, and wiring in microgravity, a technology that does not yet exist for commercial use. While several companies have conducted Earth-based tests with precursor robotic systems, these remain prototypes.

The transition from “launch and leave” to “assemble and maintain” represents a fundamental shift in how we view space infrastructure. Until robots can reliably build and repair server arrays in the void, the dream of a space-based cloud remains a blueprint rather than a reality.

The next major checkpoint for this technology will be the continued flight testing of the Starship system and the results of subsequent orbital robotics trials. As these platforms prove their reliability, the industry will move closer to determining whether the cost of assembly outweighs the benefits of an orbital cloud.
