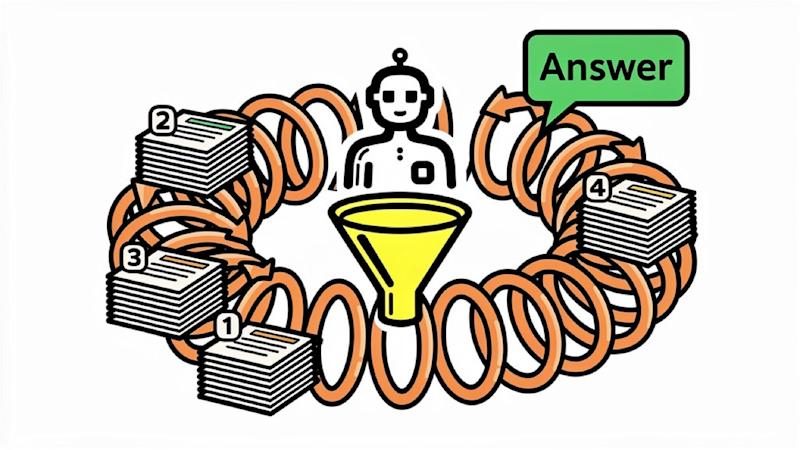

The search for information within large organizations is often a surprisingly clumsy process. Existing Retrieval-Augmented Generation (RAG) systems, designed to enhance large language models with real-time data, frequently stumble when faced with the diverse and nuanced ways employees actually seek knowledge. Databricks aims to address this challenge with KARL, short for Knowledge Agents via Reinforcement Learning, a new agent the company claims can handle a wider range of enterprise search tasks than existing solutions, and at a lower cost.

KARL represents a shift in how companies might approach internal knowledge management. Traditional RAG pipelines are typically optimized for a single type of search, often failing when confronted with tasks outside their narrow focus. Databricks argues that a single model capable of generalizing across multiple search behaviors is a significant step forward, potentially unlocking greater efficiency and accuracy in accessing critical business information. The company asserts that KARL matches the performance of the Claude Opus 4.6 model on a purpose-built benchmark, but at 33% lower cost per query and 47% lower latency, according to a report from VentureBeat.

The core innovation behind KARL lies in its training methodology. Unlike many existing LLMs, KARL was trained across six distinct enterprise search behaviors simultaneously using a new reinforcement learning algorithm. These behaviors include synthesizing intelligence from meeting notes, reconstructing deal outcomes from fragmented records, answering questions about account history, and generating competitive analyses. This multi-task approach, Databricks researchers believe, allows the model to generalize more effectively than systems trained on a single task. The training process leveraged synthetic data, eliminating the need for extensive human labeling, a significant cost and time saver.

The Challenge of Generalization in Enterprise Search

Standard RAG systems often struggle with the ambiguity and complexity inherent in real-world enterprise data. They excel at simple lookups but falter when faced with multi-step reasoning or fragmented information. As Jonathan Frankle, Chief AI Scientist at Databricks, explained in an interview with VentureBeat, “A model trained to synthesize cross-document reports handles constraint-driven entity search poorly. A model tuned for simple lookup tasks falls apart on multi-step reasoning over internal notes.” This limitation highlights the need for a more versatile approach to enterprise search.

To address this, Databricks developed KARLBench, a benchmark designed to evaluate performance across those six enterprise search behaviors. The benchmark includes PMBench, built from the company’s own product manager meeting notes—data intentionally fragmented and unstructured to mimic the challenges of real-world internal documentation. The results showed that multi-task reinforcement learning significantly improved generalization, with KARL performing well on tasks it hadn’t been specifically trained on.

OAPL: The Engine Behind KARL’s Efficiency

A key component of KARL’s success is OAPL, or Optimal Advantage-based Policy Optimization with Lagged Inference policy. Developed jointly by researchers from Cornell, Databricks, and Harvard, OAPL addresses a common challenge in reinforcement learning: in distributed training environments, the policy that generates the training data tends to lag behind the policy that is being updated. A paper detailing OAPL was published on arXiv in February 2026. Rather than trying to eliminate that gap, the new approach embraces the off-policy nature of distributed training, resulting in greater stability and sample efficiency.
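To make the off-policy gap concrete, the toy simulation below counts how many updates stale a rollout is when the worker that generates it only receives fresh weights every few trainer steps. It is a minimal sketch of the general lag problem in distributed training, not of OAPL itself; the function name `simulate_policy_lag` and the `sync_every` parameter are hypothetical and do not come from the paper.

```python
from typing import List


def simulate_policy_lag(num_steps: int, sync_every: int) -> List[int]:
    """Return how many trainer updates stale each rollout is when generated.

    Assumption for illustration: one rollout is generated per trainer update,
    and the inference worker copies the trainer weights every `sync_every`
    updates.
    """
    if sync_every < 1:
        raise ValueError("sync_every must be at least 1")

    trainer_version = 0  # version of the policy being updated
    worker_version = 0   # version of the policy generating rollouts
    staleness: List[int] = []

    for _ in range(num_steps):
        # The rollout is produced by the (possibly outdated) worker policy.
        staleness.append(trainer_version - worker_version)
        # The trainer applies one update.
        trainer_version += 1
        # The worker periodically syncs to the latest trainer weights.
        if trainer_version % sync_every == 0:
            worker_version = trainer_version

    return staleness


lags = simulate_policy_lag(num_steps=8, sync_every=4)
print(lags)
```

Any nonzero entry is a rollout generated by an older policy than the one being trained, which is the kind of data an off-policy method has to tolerate.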

This efficiency is crucial for practical implementation. OAPL’s ability to reuse previously collected data significantly reduced the computational resources required for training, keeping the total runtime within a few thousand GPU hours—a scale achievable for many enterprise teams. This contrasts with research projects that often require substantially more resources.

RAG, Agents, and the Future of Context

The development of KARL also sheds light on the evolving relationship between RAG and agentic memory. Frankle views these concepts not as mutually exclusive, but as layers in a larger system. A vector database provides a vast store of information, while the LLM’s context window offers a limited space for immediate processing. Between these layers, compression and caching mechanisms are emerging to manage the flow of information. KARL itself demonstrates this principle, learning to compress its own context to handle complex queries that exceed the LLM’s initial capacity. Removing this learned compression, Databricks found, significantly reduced accuracy.
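The layering described above can be sketched as a small context manager that compresses its working notes whenever they exceed a token budget. This is only an illustration under stated assumptions: KARL learns its compression through reinforcement learning, whereas the `truncate_summary` function here is a crude hand-written stand-in, and `AgentContext`, `count_tokens`, and the whitespace-based token count are all hypothetical names and simplifications.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Crude whitespace count; a stand-in for a real tokenizer.
    return len(text.split())


def truncate_summary(notes: List[str], max_tokens: int) -> str:
    # Stand-in for learned compression: keep the first few words of each note.
    per_note = max(1, max_tokens // max(1, len(notes)))
    return "\n".join(" ".join(note.split()[:per_note]) for note in notes)


@dataclass
class AgentContext:
    budget: int  # maximum tokens the working context may hold
    compress: Callable[[List[str], int], str] = truncate_summary
    notes: List[str] = field(default_factory=list)

    def used(self) -> int:
        return sum(count_tokens(note) for note in self.notes)

    def add(self, retrieved: str) -> None:
        """Append retrieved text, compressing everything if over budget."""
        self.notes.append(retrieved)
        if self.used() > self.budget:
            # Replace all notes with one summary targeting half the budget.
            summary = self.compress(self.notes, self.budget // 2)
            self.notes = [summary]


ctx = AgentContext(budget=10)
ctx.add("a b c d e f")   # 6 tokens, fits within the budget
ctx.add("g h i j k l")   # 12 tokens total, triggers compression
print(ctx.notes, ctx.used())
```

Passing a different `compress` callable, for example one that calls a summarization model, changes the compression strategy without touching the retrieval loop.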

However, KARL is not without its limitations. Frankle acknowledged that the model struggles with questions that are genuinely ambiguous, where multiple valid answers exist. Determining whether a question is open-ended or simply difficult remains a challenge. Currently, KARL is also limited to vector search and does not yet support tasks requiring SQL queries, file searches, or Python-based calculations, though these capabilities are planned for future development.

Implications for Enterprise Data Strategies

The emergence of KARL suggests several key considerations for organizations evaluating their retrieval infrastructure. First, a narrow focus on a single search behavior is likely insufficient. Multi-task training and generalization are critical for handling the diverse range of information needs within an enterprise. Second, reinforcement learning offers a significant advantage over traditional supervised fine-tuning, particularly when dealing with unpredictable query types. Finally, efficiency—in terms of both cost and computational resources—is paramount. Building purpose-built search agents, rather than relying solely on general-purpose APIs, can lead to more effective and sustainable solutions.

Databricks plans to keep expanding KARL’s capabilities by integrating additional data sources and search modalities, starting with SQL queries, file search, and Python-based calculation. The company will also monitor the model’s performance in real-world deployments and use that feedback to refine its algorithms and address its limitations.

The development of KARL marks a significant step toward more intelligent and versatile enterprise search. As organizations grapple with ever-increasing volumes of data, the ability to quickly and accurately access relevant information will grow increasingly critical.