The internet, as many have observed, remembers everything. But sometimes, what it remembers is a glitch. A video circulating widely online, featuring what appears to be Taylor Swift endorsing President Joe Biden, is prompting a lot of discussion and a lot of scrutiny. The clip, which quickly gained traction across platforms like X (formerly Twitter) and TikTok, shows a woman resembling the pop superstar urging her fans to register to vote and support the incumbent president. The video, however, is not authentic. It’s a deepfake, and a remarkably convincing one at that, raising questions about the increasing sophistication of artificial intelligence and its potential impact on the 2024 presidential election.

The video, initially posted on TikTok by an account called @deeptakeswift, amassed millions of views before being widely debunked. The account itself appears to be dedicated to creating AI-generated content featuring Swift. While the creator has acknowledged the video’s artificial nature, labeling it a “parody,” the speed at which it spread underscores the challenge of identifying and countering misinformation in the digital age. The incident highlights growing concern about the use of deepfake technology to influence public opinion, particularly during a highly charged political season. Understanding deepfakes and their potential for manipulation is becoming increasingly crucial for voters.

The clip itself is technically impressive. It features a digitally altered version of Taylor Swift speaking directly to the camera, using language consistent with endorsements seen from other celebrities. The audio and visual elements are seamlessly integrated, making it hard for the casual observer to discern its fabricated nature. This level of realism is a significant departure from earlier, more rudimentary deepfakes, which were often easily identifiable due to glitches or unnatural movements. The sophistication of this particular deepfake demonstrates how quickly the technology is evolving and how readily it could be put to more malicious use.

The Technology Behind the Illusion

Deepfakes are often created using a class of artificial intelligence models called generative adversarial networks (GANs). GANs involve two neural networks: a generator and a discriminator. The generator creates new data instances, while the discriminator evaluates them, attempting to distinguish between the generated data and real data. Through a process of continuous feedback and refinement, the generator learns to create increasingly realistic outputs that can fool the discriminator. In the case of deepfakes, this process is used to swap faces, alter speech, or even create entirely fabricated videos. According to a report by the Brookings Institution, the cost of creating a convincing deepfake has decreased dramatically in recent years, making the technology more accessible to a wider range of actors.
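The generator-versus-discriminator loop can be sketched in a few lines of Python. This is a toy illustration only, not the method used to produce the Swift clip: single numbers stand in for video frames, each "network" has just two parameters, and every name and value here (Generator, Discriminator, train, the target mean of 4.0, the learning rate) is an assumption chosen for the example.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


class Discriminator:
    """Scores a number: close to 1 means 'looks real', close to 0 means 'looks fake'."""

    def __init__(self):
        self.w = 0.0
        self.c = 0.0

    def prob_real(self, x):
        return sigmoid(self.w * x + self.c)

    def update(self, real_x, fake_x, lr):
        # One gradient-descent step on -log D(real) - log(1 - D(fake)).
        p_real = self.prob_real(real_x)
        p_fake = self.prob_real(fake_x)
        self.w -= lr * (-(1.0 - p_real) * real_x + p_fake * fake_x)
        self.c -= lr * (-(1.0 - p_real) + p_fake)


class Generator:
    """Turns random noise z into a fake sample a*z + b."""

    def __init__(self):
        self.a = 1.0
        self.b = 0.0

    def sample(self, z):
        return self.a * z + self.b

    def update(self, z, disc, lr):
        # One gradient-descent step on -log D(G(z)): nudge the fake
        # sample in whichever direction the discriminator rates as more real.
        p = disc.prob_real(self.sample(z))
        grad_x = -(1.0 - p) * disc.w
        self.a -= lr * grad_x * z
        self.b -= lr * grad_x


def train(steps=200, lr=0.05, seed=0):
    """Alternate discriminator and generator updates (toy settings, assumed)."""
    rng = random.Random(seed)
    gen, disc = Generator(), Discriminator()
    for _ in range(steps):
        real_x = rng.gauss(4.0, 0.5)   # stand-in for "real footage"
        z = rng.gauss(0.0, 1.0)        # random noise fed to the generator
        disc.update(real_x, gen.sample(z), lr)
        gen.update(z, disc, lr)
    return gen, disc
```

Calling train() returns the two toy models after the alternating updates. Production deepfake systems replace these two-parameter models with deep neural networks operating on images and audio, and a toy setup like this one may oscillate rather than settle, so it should be read as a picture of the feedback loop and nothing more.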

The Swift deepfake specifically leverages readily available footage and audio of the singer, feeding it into an AI model trained to replicate her likeness and voice. The model then generates new content, seamlessly blending the fabricated elements with the original source material. Experts in digital forensics have pointed out that subtle inconsistencies, such as unnatural blinking patterns and slight distortions in facial features, can sometimes reveal a deepfake. However, these clues are becoming increasingly difficult to detect as the technology improves.
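As a rough illustration of the blinking cue, the sketch below counts blinks in a per-frame "eye openness" series and flags blink rates far outside a typical range. It assumes some upstream tool (not shown, and hypothetical here) has already produced one openness score per frame; the 0.2 threshold, the two-frame minimum, and the 5 to 40 blinks-per-minute band are illustrative assumptions, not forensic standards.

```python
def count_blinks(openness, closed_below=0.2, min_frames=2):
    """Count runs of at least min_frames consecutive frames with eyes closed."""
    blinks = 0
    run = 0
    for value in openness:
        if value < closed_below:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # series ended while the eyes were closed
        blinks += 1
    return blinks


def blinks_per_minute(openness, fps, closed_below=0.2, min_frames=2):
    """Convert a blink count into a per-minute rate for the clip."""
    if not openness or fps <= 0:
        raise ValueError("need a non-empty series and a positive frame rate")
    minutes = len(openness) / fps / 60.0
    return count_blinks(openness, closed_below, min_frames) / minutes


def looks_unusual(rate, low=5.0, high=40.0):
    """Flag rates outside an assumed 'typical' band; a hint, not a verdict."""
    return rate < low or rate > high
```

A flag from a check like this would only be a reason to look more closely. As the paragraph above notes, newer deepfakes increasingly reproduce natural blinking, so a normal-looking rate proves nothing about authenticity.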

Political Implications and the 2024 Election

The timing of the Swift deepfake is particularly noteworthy, coming just months before the 2024 presidential election. Taylor Swift, with her massive and highly engaged fanbase, is a significant cultural figure, and her potential endorsement of a candidate could have a substantial impact on voter turnout. The fact that this deepfake specifically targeted her and linked her to President Biden raises concerns about deliberate attempts to influence the election. While the creator has claimed it was a parody, the potential for such content to be misinterpreted or intentionally spread as genuine information is undeniable.

This incident is not isolated. Throughout the 2024 election cycle, there have been increasing reports of AI-generated misinformation targeting candidates and voters. The Federal Election Commission (FEC) is currently grappling with how to regulate the use of AI in political advertising, but the legal landscape remains unclear. The FEC website provides information on current regulations and ongoing discussions regarding AI and elections. Experts warn that the proliferation of deepfakes could erode public trust in the electoral process and make it more difficult for voters to discern fact from fiction.

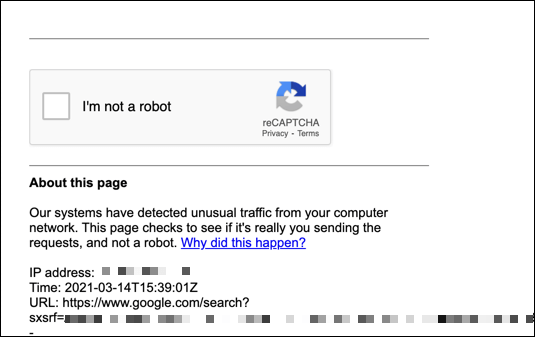

The spread of the Taylor Swift deepfake also highlights the role of social media platforms in combating misinformation. TikTok, where the video initially gained traction, has since removed the content and stated that it violates its policies on misleading information. However, the speed at which the video spread before its removal demonstrates the challenges of content moderation in the age of AI. Platforms are investing in AI-powered tools to detect and remove deepfakes, but these tools are not yet foolproof.

What Can Be Done?

Combating the threat of deepfakes requires a multi-faceted approach. This includes developing more sophisticated detection tools, educating the public about the risks of misinformation, and holding social media platforms accountable for the content they host. Organizations like the Coalition for Responsible AI are working to develop ethical guidelines for the development and deployment of AI technologies.

Individuals can also play a role in combating deepfakes by being critical consumers of information. Before sharing a video or article online, it’s important to verify its authenticity by checking multiple sources and looking for signs of manipulation. Tools like reverse image search, run on a still frame, can help trace where a video first appeared and whether it comes from a credible source. A healthy skepticism and a commitment to fact-checking are essential in navigating the increasingly complex information landscape.

The case of the Taylor Swift deepfake serves as a stark reminder of the potential for AI to be used to deceive and manipulate. As the technology continues to evolve, it’s crucial that we develop the tools and strategies necessary to protect ourselves from its harmful effects. The next key events to watch are the upcoming debates between President Biden and former President Trump, where experts anticipate a surge in AI-generated misinformation. Staying informed and vigilant will be more important than ever.
