The internet, for all its promise of open access, is often punctuated by frustrating roadblocks. A common one, and the subject of increasing scrutiny, is the CAPTCHA: those distorted letters and images designed to prove you’re human. But increasingly, these tests are failing, not because bots are getting smarter, but because they’re being circumvented by a surprisingly low-tech solution: human farms. A recent video, circulating widely and prompting discussion about the ethics and economics of online security, details this very phenomenon. The core issue isn’t just about bypassing security measures; it’s about the exploitation inherent in a system that relies on cheap labor to validate our digital lives.

The video, posted on YouTube, focuses on a facility in Vietnam where workers are paid to solve CAPTCHAs for a fraction of a penny each. While the practice isn’t new, the scale and apparent organization depicted in the footage are raising concerns. The video shows rows of individuals diligently working on computers, their sole task being to decipher the increasingly complex challenges thrown at them by websites attempting to filter out automated traffic. This isn’t about sophisticated hacking; it’s about a business model built on extremely low wages and a global demand for CAPTCHA solving. The demand for these services stems from a variety of sources, including bots used for scraping data, creating fake accounts, and launching denial-of-service attacks.

The Economics of Human-Powered Bots

The economics at play are stark. CAPTCHA solving services advertise rates as low as $0.50 for 1,000 CAPTCHAs solved. Workers in these facilities, according to the video and subsequent reporting, earn significantly less than that per thousand, often fractions of a cent per solved challenge. This creates a highly exploitative labor situation, particularly in countries with lower minimum wage standards. The profitability for the service providers, however, is substantial, fueled by the constant need for businesses to protect themselves from automated abuse. The demand for CAPTCHA services, and the circumvention of them, is a direct consequence of the ongoing arms race between security providers and malicious actors.
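
To make the arithmetic concrete, here is a minimal Python sketch of the per-CAPTCHA numbers implied by the advertised rate. Only the $0.50 per 1,000 figure comes from the text above; the function names and the `worker_share` parameter are hypothetical, since the actual split between service and worker is not stated.

```python
# Advertised rate quoted in the article: $0.50 per 1,000 CAPTCHAs solved.
ADVERTISED_PRICE_PER_THOUSAND = 0.50  # USD


def price_per_captcha(price_per_thousand: float) -> float:
    """Price the customer pays for a single solved CAPTCHA, in USD."""
    return price_per_thousand / 1000


def revenue_for(solved: int, price_per_thousand: float) -> float:
    """Gross revenue for a given number of solved CAPTCHAs, in USD."""
    return solved * price_per_thousand / 1000


def worker_pay_per_captcha(price_per_thousand: float, worker_share: float) -> float:
    """Worker pay per CAPTCHA for an ASSUMED share of the price (0.0 to 1.0).

    The real share is not given in the article; pass your own estimate.
    """
    if not 0.0 <= worker_share <= 1.0:
        raise ValueError("worker_share must be between 0.0 and 1.0")
    return price_per_captcha(price_per_thousand) * worker_share


if __name__ == "__main__":
    unit = price_per_captcha(ADVERTISED_PRICE_PER_THOUSAND)
    print(f"Customer price per CAPTCHA: ${unit:.4f}")
    print(f"Revenue for 1,000,000 solves: ${revenue_for(1_000_000, ADVERTISED_PRICE_PER_THOUSAND):.2f}")
```

At the advertised rate, a single solve is billed at a twentieth of a cent, so whatever share reaches the worker is necessarily smaller than that.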

The video highlights the work of 2Captcha, a prominent CAPTCHA solving service. While 2Captcha doesn’t directly employ the workers shown in the video, it acts as a marketplace connecting those who need CAPTCHAs solved with individuals willing to do so. The company claims to offer a legitimate service, allowing users to automate tasks that require human verification. However, the ethical implications of profiting from such a labor model are increasingly under scrutiny. 2Captcha’s website states they “pay for every CAPTCHA solved,” but the video suggests a significant disparity between the price paid to workers and the revenue generated by the service.

Beyond CAPTCHAs: The Wider Implications

The issue extends beyond simply bypassing CAPTCHAs. These human farms are also used to train artificial intelligence models. Machine learning algorithms require vast amounts of labeled data to function effectively. CAPTCHA solving provides a readily available source of this data, allowing AI developers to improve the accuracy of their systems. This creates a feedback loop: AI gets better at solving CAPTCHAs, which leads to more complex CAPTCHAs, which in turn requires more human labor to solve, and so on. This dynamic raises questions about the sustainability and ethical implications of relying on human labor to fuel AI development.

The use of human farms also raises concerns about the integrity of online systems. Fake accounts created using CAPTCHA solving services can be used to spread misinformation, manipulate social media trends, and engage in fraudulent activities. The ability to bypass security measures also poses a threat to online voting systems and other critical infrastructure. The potential for abuse is significant, and the lack of transparency surrounding these operations makes it difficult to assess the full extent of the problem.

The Search for Alternatives

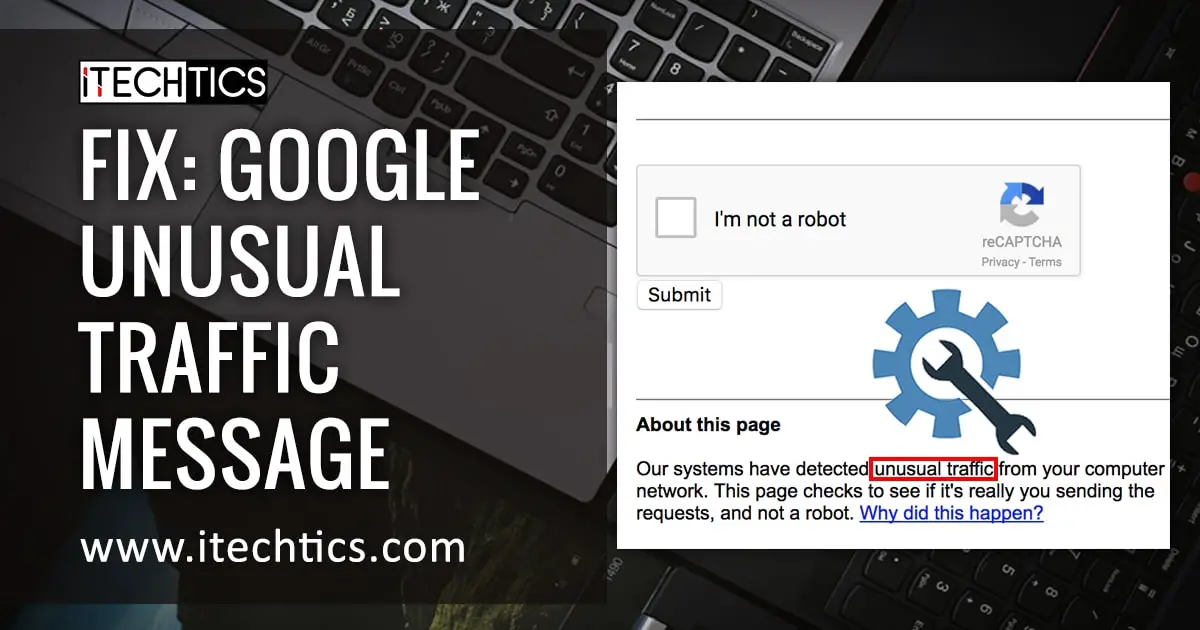

The limitations of CAPTCHAs have prompted the development of alternative security measures. Google’s reCAPTCHA v3, for example, uses risk analysis to determine whether a user is human or a bot, without requiring them to solve a challenge. This approach relies on analyzing user behavior and other contextual factors to assess risk. Other alternatives include biometric authentication, such as fingerprint scanning and facial recognition, and device fingerprinting, which identifies users based on their device’s unique characteristics. However, each of these alternatives also has its own drawbacks, including privacy concerns and the potential for bias.
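
As a rough illustration of what score-based risk analysis looks like on the server side, the Python sketch below interprets a reCAPTCHA v3 verification result. It assumes the verification response has already been fetched and parsed into a dictionary with the `success`, `score`, and `action` fields. The `evaluate_recaptcha_v3` function, the `Verdict` type, and the 0.5 threshold are illustrative choices, not part of Google’s API, and each site would need to tune the threshold for itself.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    allowed: bool
    reason: str


def evaluate_recaptcha_v3(response: dict, expected_action: str,
                          threshold: float = 0.5) -> Verdict:
    """Decide whether to allow a request based on a parsed verification response.

    `response` is assumed to be the JSON body returned by the verification
    endpoint, already decoded into a dict. The threshold is an assumption.
    """
    # A token that failed verification is rejected outright.
    if not response.get("success", False):
        return Verdict(False, "token rejected")

    # The action recorded in the token should match the one the page declared.
    if response.get("action") != expected_action:
        return Verdict(False, "action mismatch")

    # Scores range from 0.0 (likely a bot) to 1.0 (likely a human).
    score = float(response.get("score", 0.0))
    if score < threshold:
        return Verdict(False, "score below threshold")

    return Verdict(True, "ok")


if __name__ == "__main__":
    # Hand-written sample response for illustration, not real data.
    sample = {"success": True, "score": 0.9, "action": "login"}
    print(evaluate_recaptcha_v3(sample, expected_action="login"))
```

Because the decision rests on a score rather than a solved puzzle, a legitimate user with an unusual browser setup can land below the threshold, which is why sites often fall back to a secondary check instead of blocking outright.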

Invisible CAPTCHA alternatives, like reCAPTCHA v3, are gaining traction, but they aren’t foolproof. They rely on complex algorithms and can sometimes misidentify legitimate users as bots. This can lead to frustration and inconvenience for users, and it can also disproportionately affect certain groups, such as those with disabilities or those who use privacy-focused browsers. The challenge is to find a balance between security and usability, while also protecting user privacy and ensuring fairness.

The revelation of these human farms underscores a fundamental tension in the digital world: the desire for seamless online experiences versus the need for robust security. As long as there is economic incentive to bypass security measures, and as long as there is a readily available supply of cheap labor, the problem is likely to persist. Addressing this issue requires a multi-faceted approach, including stricter regulation of CAPTCHA solving services, increased investment in alternative security measures, and a broader conversation about the ethical implications of relying on human labor to validate our digital lives.

Looking ahead, the focus will likely shift towards more sophisticated and adaptive security measures. The development of AI-powered security systems that can detect and respond to evolving threats is crucial. However, technology alone is not the answer. Addressing the underlying economic and social factors that drive the exploitation of human labor is essential to creating a more secure and equitable digital future. For more information on online security threats, resources are available from the Cybersecurity and Infrastructure Security Agency (CISA).
