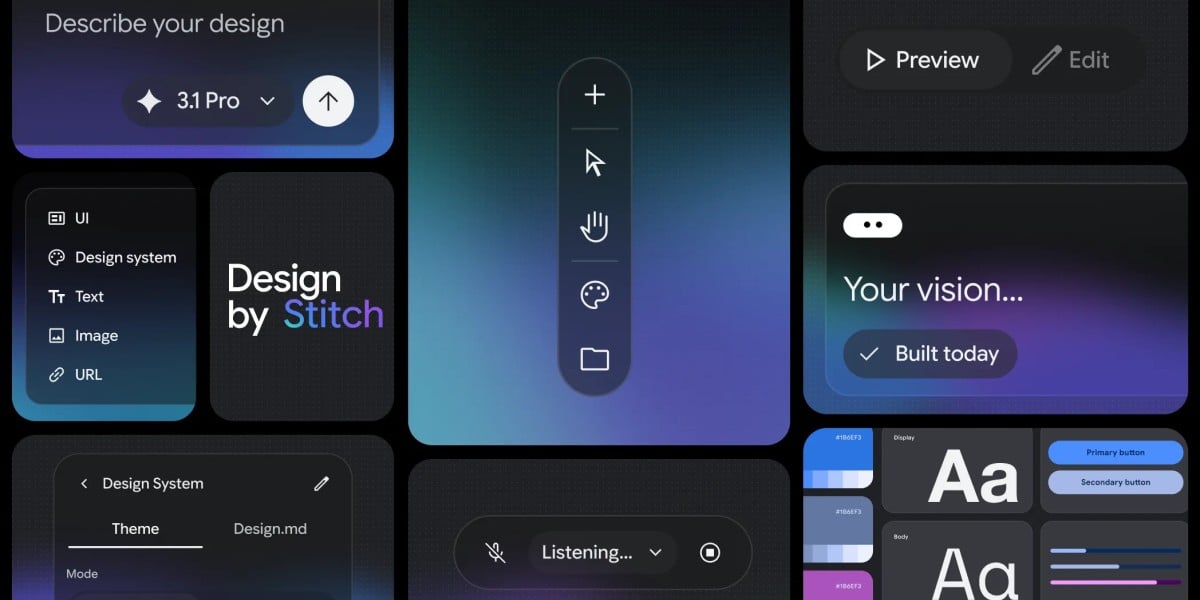

The line between describing a design and simply *telling* a computer to build it is blurring. Google this week unveiled a significant update to its Stitch design tool, now built around what the company calls “vibe design.” The concept, an offshoot of the broader “vibe coding” trend in software development, lets users create user interfaces through natural language – and even spoken commands.

Google Labs product manager Rustin Banks announced the changes in a blog post on Wednesday, observing that “Over the last year, AI has fundamentally changed how we build, turning simple descriptions into functional software.” Stitch, Google’s tool for UI design, has been completely redesigned with this shift in mind.

Instead of starting with traditional wireframes, designers can now describe the goals of their project and the user experience they want, or point to existing designs for inspiration. Google describes the tool’s new AI-native canvas as “infinite,” leaving room for ideas to evolve from initial concepts into fully functional prototypes. In a move that feels distinctly futuristic, users can also speak directly to the canvas and receive real-time feedback and adjustments from the design agent.

“You can speak directly to your canvas,” Banks explained. “The agent can give you real-time design critiques, design a new landing page by interviewing you, and make real-time updates – like ‘give me three different menu options,’ or ‘show me this screen in different color palettes’ – as you speak.”

Blending Design and Code with an AI Agent

The core of the update is Stitch’s new “design agent,” which can reason across a project’s entire history. Because the agent understands the context of earlier decisions, it can suggest changes that fit them, making the design process more cohesive and iterative. Google has also introduced an “Agent manager” that lets users work on multiple ideas in parallel while keeping them organized.

But “vibe design” isn’t meant to be a solo endeavor. Google has released a software development kit (SDK) and a Model Context Protocol (MCP) server for Stitch, allowing it to connect with AI coding assistants such as Antigravity, Gemini CLI, Claude Code, and Cursor. The aim is to narrow the gap between design and development, so that a concept created in Stitch can be handed to a coding agent and turned into code.

From Concept to Prototype, Faster

Google is pitching the tool particularly at smaller teams and individual founders. Banks suggests Stitch can help “a professional designer looking to explore dozens of variations or a founder manifesting your first software idea,” enabling them to achieve results “in minutes rather than days.”

The concept of “vibe coding” itself has gained traction as AI coding assistants have grown more sophisticated, even though their initial output often needs significant refinement. As The Register noted, the term acknowledges that these tools tend to produce “ropey” code that needs further work.

What’s Next for Stitch and AI-Powered Design?

Google’s move reflects a broader trend toward more intuitive and accessible design tools. Generating functional software from simple descriptions is still an immature capability, but the Stitch update is a notable step in that direction. Beyond saying the updated features are “coming soon,” the company has not announced a specific release date, though it is encouraging users to explore AI-assisted design.

The success of Stitch will likely depend on how well the AI agent understands and responds to nuanced design requests. Whether the tool can truly deliver on its promise of rapid prototyping and streamlined workflows remains to be seen, but the initial response suggests a growing appetite for this new approach to UI development.
