For a social media platform like Snapchat, the distance between a trending concept and a live feature is measured in days, not months. To maintain this pace, Snap has shifted its underlying data architecture to integrate open libraries for accelerated data processing, leveraging NVIDIA’s GPU-accelerated software on Google Cloud infrastructure to drastically reduce the time it takes to validate new app updates.

The move addresses a massive computational hurdle: the A/B testing cycle. Before any feature reaches Snapchat’s more than 940 million monthly active users, it undergoes rigorous controlled experiments. These tests analyze nearly 6,000 different metrics—ranging from user engagement and app performance to monetization—to ensure a feature improves the experience rather than degrading it.

The scale of this operation is immense. Snap runs thousands of experiments every month, which requires processing over 10 petabytes of data within a tight three-hour window each morning. By migrating these workloads from traditional CPUs to GPUs using Apache Spark accelerated by NVIDIA cuDF, the company has achieved 4x speedups in runtime while utilizing the same number of machines.
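To put those figures in perspective, the short calculation below works out the average throughput implied by 10 petabytes in a three-hour window. It is a back-of-the-envelope sketch only: it assumes decimal petabytes (the article does not say which unit is meant), and the two-hour job used to illustrate the 4x speedup is a hypothetical figure, not one reported by Snap.

```python
# Back-of-the-envelope throughput for the figures quoted above.
# Assumption: "petabyte" means the decimal unit (10**15 bytes).
PETABYTE = 10**15


def required_throughput_gb_per_s(data_petabytes: float, window_hours: float) -> float:
    """Average sustained throughput, in GB/s, needed to finish inside the window."""
    total_bytes = data_petabytes * PETABYTE
    window_seconds = window_hours * 3600
    return total_bytes / window_seconds / 10**9


def runtime_after_speedup(baseline_hours: float, speedup: float) -> float:
    """Runtime of a job once it runs `speedup` times faster."""
    return baseline_hours / speedup


if __name__ == "__main__":
    # 10 PB in a 3-hour window, as stated in the article.
    print(f"{required_throughput_gb_per_s(10, 3):.0f} GB/s")  # 926 GB/s

    # Hypothetical 2-hour CPU job with the reported 4x speedup.
    print(f"{runtime_after_speedup(2.0, 4.0)} hours")  # 0.5 hours
```

In other words, the morning window requires the cluster as a whole to sustain roughly 0.9 terabytes per second on average.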

This transition represents more than just a speed boost. It is a strategic pivot to prevent computing costs from scaling linearly with the company’s ambitions. By pairing NVIDIA CUDA-X libraries with Google Kubernetes Engine (GKE), Snap has built a full-stack platform capable of handling data processing at a global scale.

Flattening the Scaling Curve

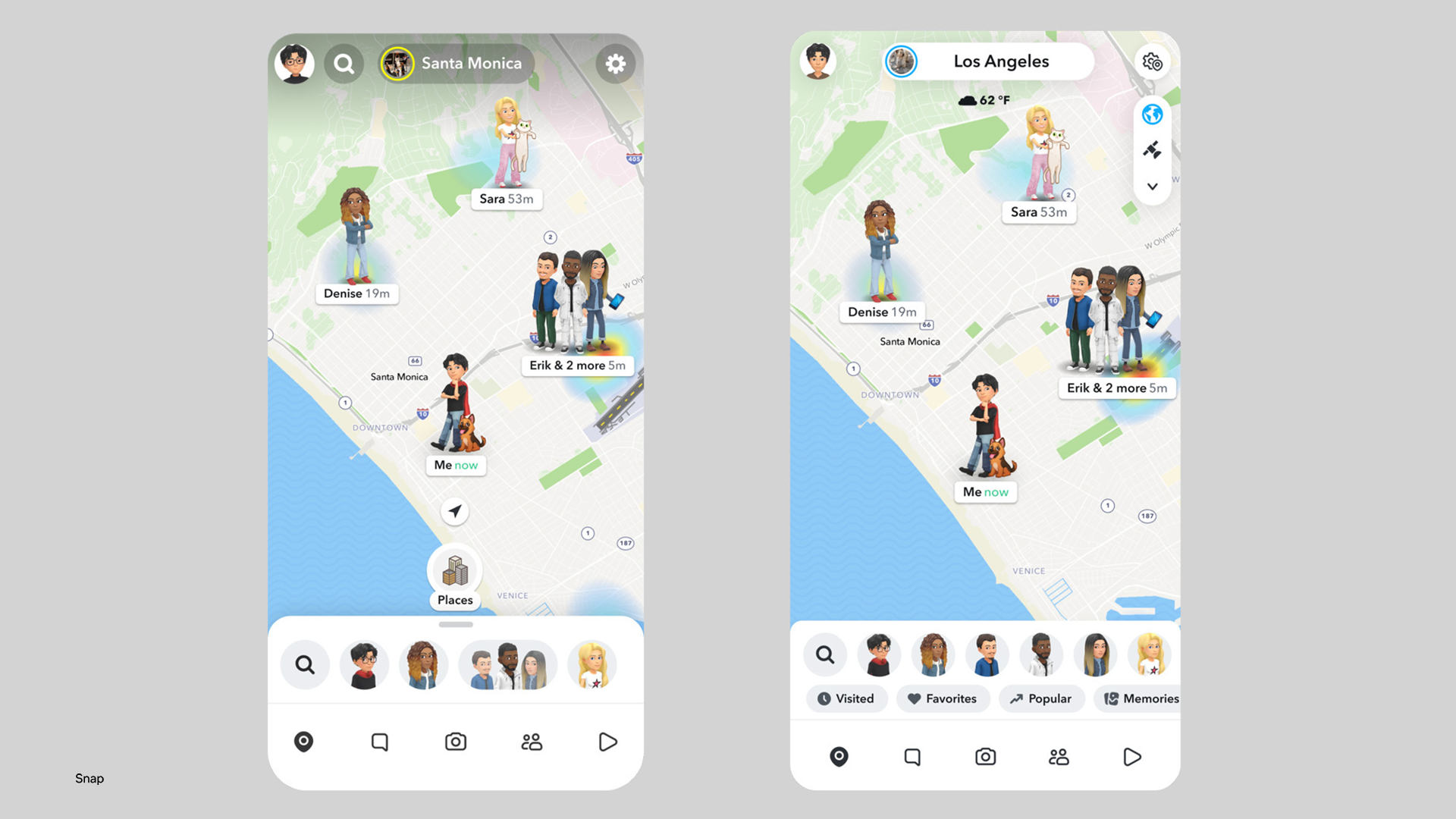

The necessity for this migration became clear as Snap’s roadmap for experimentation grew. The company continuously rolls out a mix of high-visibility features, such as AI-generated stickers and arrival notifications, alongside invisible performance optimizations and OS compatibility updates. Under the previous CPU-only workflow, the cost of expanding these tests to more users and metrics was projected to become unsustainable.

“We were projecting an ambitious roadmap to scale up experimentation that would have blown up our computing costs based on our existing infrastructure,” said Prudhvi Vatala, senior engineering manager at Snap. “Switching to GPU-accelerated pipelines with cuDF gave us a way to flatten the scaling curve, and the results were tremendous.”

The adoption of cuDF allowed Snap’s developers to deploy existing Apache Spark applications on GPUs without requiring extensive code changes. This seamless integration meant the team could migrate their most critical pipelines without a complete rewrite of their data science workflows.
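For readers wondering what "without extensive code changes" can look like in practice, here is a minimal sketch. It assumes the Spark accelerator in question is the RAPIDS Accelerator for Apache Spark, which is enabled through Spark configuration rather than application changes. The article does not show Snap's actual settings, so the jar path, the application name and the resource amounts below are hypothetical placeholders.

```python
from typing import Dict, List


def gpu_spark_conf(gpus_per_executor: int = 1, tasks_per_gpu: int = 4) -> Dict[str, str]:
    """Spark settings that turn on the RAPIDS Accelerator plugin.

    The resource amounts are illustrative defaults, not Snap's values.
    """
    return {
        # Load the accelerator as a Spark plugin; the job code is unchanged.
        "spark.plugins": "com.nvidia.spark.SQLPlugin",
        "spark.rapids.sql.enabled": "true",
        # Standard Spark resource scheduling: GPUs per executor and the
        # fraction of a GPU each task claims.
        "spark.executor.resource.gpu.amount": str(gpus_per_executor),
        "spark.task.resource.gpu.amount": str(gpus_per_executor / tasks_per_gpu),
        # Log the operators that fall back to the CPU.
        "spark.rapids.sql.explain": "NOT_ON_GPU",
    }


def spark_submit_args(app: str, plugin_jar: str, conf: Dict[str, str]) -> List[str]:
    """Build a spark-submit command line for an existing application."""
    args = ["spark-submit", "--jars", plugin_jar]
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    args.append(app)
    return args


if __name__ == "__main__":
    # Hypothetical jar path and application name, for illustration only.
    cmd = spark_submit_args(
        app="ab_metrics_job.py",
        plugin_jar="/opt/spark/jars/rapids-4-spark.jar",
        conf=gpu_spark_conf(),
    )
    print(" ".join(cmd))
```

The point of the sketch is that the existing job is passed through untouched; only the submit-time configuration changes.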

The financial impact of the shift was immediate. Based on internal data collected between January 1 and February 28, Snap realized 76% daily cost savings using NVIDIA GPUs on GKE compared to their previous CPU-only workflows.

Optimizing Infrastructure and Resource Allocation

To ensure the migration was efficient, Snap didn’t simply swap hardware; they optimized the entire pipeline. The team utilized a suite of cuDF microservices to automatically qualify, test, and configure Spark workloads for GPU acceleration. This automated approach removed much of the manual overhead typically associated with hardware migration.

Working alongside NVIDIA experts, Snap optimized its pipelines on Google Cloud’s G2 virtual machines, which are powered by NVIDIA L4 GPUs. This optimization led to a significant reduction in the required hardware footprint. While initial projections suggested the need for approximately 5,500 concurrent GPUs, data collected between January 1 and March 13 showed that the system only required 2,100 GPUs to handle the workload.

| Metric | CPU-Only Workflow | GPU-Accelerated (cuDF) |
|---|---|---|
| Runtime Speed | Baseline | 4x Speedup |
| Daily Operating Cost | Baseline | 76% Reduction |
| Concurrent GPUs Required | N/A | 2,100 (vs. 5,500 projected) |
| Data Volume (Daily Window) | 10+ Petabytes | 10+ Petabytes |
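The footprint and cost figures above can be restated with a few lines of arithmetic. This is a quick sketch, not an analysis from Snap: the GPU counts and the 76% figure come from the article, while the 100-unit daily baseline is a hypothetical number chosen to make the percentage concrete.

```python
def reduction_pct(projected: float, actual: float) -> float:
    """Percentage by which `actual` falls short of `projected`."""
    return (projected - actual) / projected * 100


def cost_after_savings(baseline_cost: float, savings_pct: float) -> float:
    """Cost remaining after a percentage saving is applied."""
    return baseline_cost * (1 - savings_pct / 100)


if __name__ == "__main__":
    # 5,500 GPUs projected vs. 2,100 actually required.
    print(f"{reduction_pct(5500, 2100):.1f}% fewer GPUs")  # 61.8% fewer GPUs

    # Hypothetical 100-unit daily CPU bill with the reported 76% savings.
    print(f"{cost_after_savings(100, 76):.0f} units per day")  # 24 units per day
```

Put differently, the optimized pipelines ran on a little over a third of the GPUs first projected, at roughly a quarter of the previous daily cost.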

Joshua Sambasivam, a backend engineer on the A/B testing team, described the results as “pretty crazy,” noting that the cost savings far exceeded the team’s initial expectations. He characterized the Spark accelerator as a “perfect match” for the specific nature of Snap’s data workloads.

Broadening the Scope of GPU Acceleration

While the primary focus of the migration was the A/B testing framework, Snap views this as the beginning of a larger infrastructure overhaul. The company has already migrated its two largest data pipelines, but the success of the cuDF integration has revealed further opportunities to optimize other production workloads.

“Experimentation is at the core of our company,” Vatala said. “Changing our data infrastructure from CPUs to GPUs allows us to efficiently scale this experimentation to more features, more metrics and more users over time. The more experiments we’re able to run, the more innovative experiences we can deliver for Snapchat users.”

The shift toward open libraries for accelerated data processing highlights a broader trend in the industry: the move away from general-purpose CPU computing for big data tasks in favor of specialized GPU acceleration. For companies operating at the scale of nearly a billion users, the ability to process petabytes of data in a three-hour window is no longer just a technical advantage—it is a financial necessity.

The next milestone for this project involves the broader integration of the Spark accelerator across various production teams beyond the A/B testing group. Further technical details regarding this implementation are scheduled to be discussed during Prudhvi Vatala’s session at NVIDIA GTC on Tuesday, March 17, at 1 p.m. PT.

We want to hear from you. Do you think GPU acceleration will become the standard for all big data processing, or will CPUs maintain a foothold in specific enterprise workloads? Share your thoughts in the comments below.