The intersection of artificial intelligence and creative expression has reached a new inflection point with the release of Sora, OpenAI’s text-to-video model. By transforming simple written prompts into complex, high-fidelity cinematic scenes, the technology is shifting the conversation from whether AI can generate video to how it will fundamentally alter the economics of visual storytelling and digital content creation.

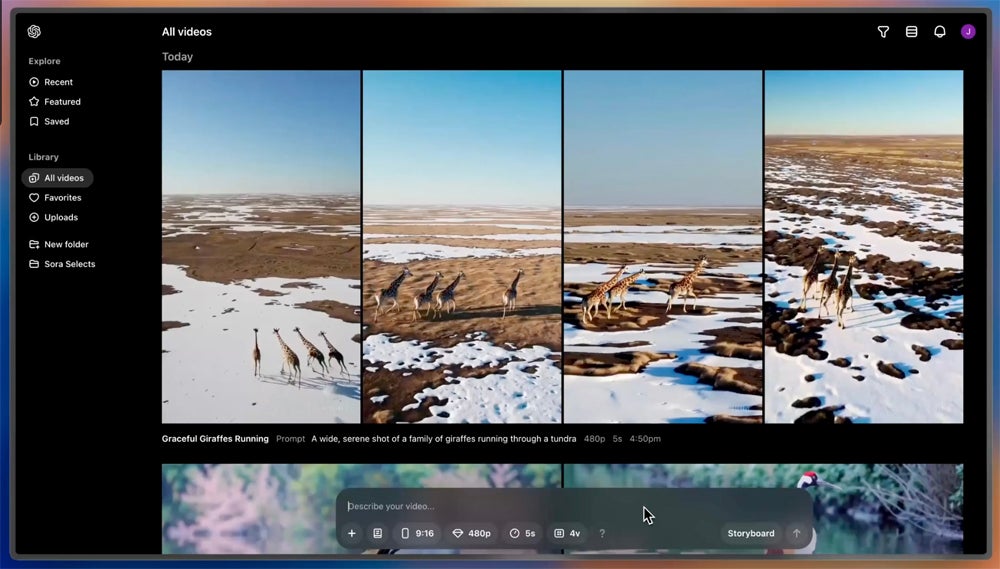

Sora represents a significant leap in temporal consistency and physical simulation. Unlike previous iterations of generative video, which often suffered from “hallucinations”—objects disappearing or morphing unnaturally—Sora demonstrates an advanced ability to maintain the identity of characters and the layout of environments across shots. This capability allows for the creation of videos up to a minute long, a duration that was previously a major technical barrier for diffusion-based models.

The implications for the creative industry are immediate. From rapid prototyping in film pre-visualization to the generation of high-quality B-roll for journalists and marketers, the tool reduces the barrier to entry for high-end visual production. However, the rollout has been cautious, with OpenAI limiting access to a “red team” of experts to identify potential harms before a wider public release.

The Mechanics of Text-to-Video Generation

At its core, Sora uses a transformer architecture, similar to the one powering GPT-4, but treats video as a sequence of “patches.” By breaking video data into these small, manageable units, the model can process visual information in much the same way that a large language model processes tokens of text. This approach allows the system to learn to approximate the underlying physics of a scene, such as how light reflects off a surface or how a person moves through a crowded street.
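To make the “patches” idea concrete, here is a minimal sketch of how a video could be cut into a sequence of token-like units. It is an illustration only, not OpenAI's implementation: the function name `patchify`, the patch sizes, and the single-channel toy video are all assumptions made for this example, and it works on raw pixel values, whereas OpenAI's technical report describes compressing video into a latent representation before extracting patches.

```python
from typing import List

# Hypothetical toy representation: video[frame][row][col], one channel.
Video = List[List[List[float]]]


def patchify(video: Video, pt: int, ph: int, pw: int) -> List[List[float]]:
    """Split a T x H x W video into non-overlapping spacetime patches.

    Each patch spans pt frames, ph rows and pw columns and is flattened
    into a single vector, so the video becomes a sequence of "tokens".
    """
    T, H, W = len(video), len(video[0]), len(video[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("video dimensions must be divisible by the patch size")

    tokens = []
    for t0 in range(0, T, pt):          # step through time
        for y0 in range(0, H, ph):      # step down the frame
            for x0 in range(0, W, pw):  # step across the frame
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                tokens.append(patch)
    return tokens


# Toy input: 4 frames of 4x4 pixels; each value encodes its own position
# as frame*100 + row*10 + col so the patch contents are easy to read.
video = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)]
         for t in range(4)]

tokens = patchify(video, pt=2, ph=2, pw=2)
print(len(tokens), len(tokens[0]))  # 8 patches, 8 values per patch
print(tokens[0])                    # [0, 1, 10, 11, 100, 101, 110, 111]
```

Each flattened patch plays the role that a text token plays in a language model: the transformer sees an ordered sequence of such vectors rather than whole frames. Because a patch spans several frames as well as a region of the image, the sequence carries information about motion as well as appearance.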

The result is a level of photorealism that challenges the human eye’s ability to distinguish between captured and generated footage. The model handles complex camera movements, such as sweeping pans and drone’s-eye views, with a stability that suggests a deep understanding of 3D space. This is not merely a “collage” of existing clips, but a generative process that creates new pixels based on learned patterns of the physical world.

Despite these advances, the technology is not without flaws. The model occasionally struggles with complex cause-and-effect physics: a person may take a bite of a cookie, for example, while the cookie remains whole. These “edge cases” are the primary focus of current safety testing and iterative refinement.

Industry Impact and the Displacement Debate

The introduction of high-fidelity AI video is triggering a wave of anxiety across the global production landscape. Visual effects (VFX) artists, stock footage libraries and traditional animators are facing a future where the cost of generating a scene may drop toward zero. The primary concern is not just the loss of jobs, but the devaluation of the technical skill required to execute a vision.

Conversely, many creators view Sora as a “force multiplier.” By automating the tedious aspects of production—such as lighting a scene or rendering a background—directors can focus more on narrative and conceptualization. The ability to iterate on a visual concept in seconds rather than days could lead to a surge in independent filmmaking and experimental digital art.

The ethical considerations are equally pressing. The potential for “deepfakes” and misinformation is amplified when the quality of generated video becomes indistinguishable from reality. To combat this, OpenAI has committed to implementing C2PA metadata—a digital “watermark” that identifies the content as AI-generated—to ensure transparency for the end user.

Comparing AI Video Generations

| Feature | Early AI Video (2022–23) | Sora (Current State) |
|---|---|---|
| Duration | 3–10 seconds | Up to 60 seconds |
| Consistency | Low (morphing objects) | High (stable characters/scenes) |
| Physics | Abstract/Fluid | Approximate Real-world Physics |
| Resolution | Low/Blurry | High-Definition/Cinematic |

Navigating the Safety and Regulatory Landscape

The rollout of Sora is being conducted under a strict safety framework. OpenAI has partnered with a diverse group of “red teamers”—including artists, domain experts, and policymakers—to stress-test the model against the creation of harmful content, hate speech, or deceptive imagery. This process is designed to build “guardrails” that prevent the model from generating likenesses of real people or explicit violence.

Regulators in the European Union and the United States are closely monitoring these developments. A central question is copyright law: whether using existing works to train these models constitutes “fair use” or infringes the intellectual property of the original creators. As the legal system catches up to the technology, the outcome of court cases on this question will likely dictate the financial models for future AI tools.

For those affected by these shifts, the path forward involves a transition toward “AI-augmented” workflows, which means learning to prompt and curate AI outputs rather than executing every frame by hand. The shift is comparable to the move from hand-drawn cels to CGI in the 1990s; the tools changed, but the need for a human eye and a creative vision remained.

What Comes Next for Generative Media

The immediate future of Sora involves a transition from a closed research preview to a more accessible tool for a broader set of creators. As the model is refined, we can expect improvements in “prompt adherence”—the ability of the AI to follow complex, multi-step instructions without omitting details.

The next developments to watch are the ongoing legal challenges regarding training data and the potential release of a public beta or API, which would allow third-party developers to integrate Sora’s capabilities into existing software suites. Until then, the world remains in a period of observation, watching as the line between the captured and the created continues to blur.

We want to hear from you. How do you see AI video affecting your industry or your consumption of media? Share your thoughts in the comments below.