The intersection of generative AI and professional creativity has reached a pivotal moment with the release of Sora, OpenAI’s text-to-video model. By transforming simple written prompts into complex, high-fidelity cinematic scenes, the tool is shifting the conversation from whether AI can create video to how it will fundamentally alter the economics of visual storytelling and digital content production.

For those of us who spent years in software engineering before moving into reporting, the technical leap here is striking. Sora isn’t just stitching together existing clips; it is a diffusion transformer that treats video as a series of patches, effectively learning the physics and geometry of a 3D world from a 2D dataset. This allows for a level of temporal consistency (the ability of an object to remain the same as the camera moves) that has eluded previous iterations of AI video.

While the capabilities are impressive, the rollout has been cautious. OpenAI has not yet released Sora to the general public, instead granting access to a “red team” of safety experts and a select group of visual artists, designers, and filmmakers to stress-test the system and identify potential harms. This controlled release highlights the tension between rapid innovation and the ethical imperatives of safety and copyright.

The Mechanics of Text-to-Video Generation

To understand why Sora represents a leap forward, one must look at its architecture. Unlike earlier models that struggled with “hallucinations”—where a person might suddenly grow an extra limb or a building might melt into the background—Sora utilizes a transformer architecture similar to the one powering ChatGPT, but applied to visual patches.
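To make the idea of “visual patches” concrete, here is a minimal Python sketch of how a clip can be cut into spacetime patches, the video equivalent of the text tokens a language model reads. OpenAI has not published Sora’s code, so this is only an illustration: the function name `patchify`, the patch sizes, and the single-channel toy clip are all assumptions, and a production system would likely work on a compressed representation rather than raw pixels.

```python
from typing import List

# Assumption: a video is a nested list indexed as video[t][y][x],
# with a single channel per pixel to keep the example small.
Video = List[List[List[float]]]


def patchify(video: Video, pt: int, ph: int, pw: int) -> List[List[float]]:
    """Split a video into non-overlapping spacetime patches.

    Each patch spans pt frames, ph rows, and pw columns, and is
    flattened into a single vector (one "token" per patch).
    """
    frames, height, width = len(video), len(video[0]), len(video[0][0])
    if frames % pt or height % ph or width % pw:
        raise ValueError("video dimensions must be divisible by the patch size")

    patches = []
    for t0 in range(0, frames, pt):
        for y0 in range(0, height, ph):
            for x0 in range(0, width, pw):
                # Collect every pixel inside this block of space and time.
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                patches.append(patch)
    return patches


# Toy clip: 4 frames of 4x4 pixels; each value encodes its (t, y, x) position.
video = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)] for t in range(4)]
tokens = patchify(video, pt=2, ph=2, pw=2)
print(len(tokens), len(tokens[0]))  # number of patches, values per patch
```

Because each patch covers several frames as well as a region of the image, a transformer attending over these tokens sees motion and appearance together, which is one plausible reason objects stay consistent from frame to frame.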

The model is trained on a massive dataset of videos and images, allowing it to simulate a rudimentary understanding of the physical world. But this “understanding” is statistical, not conscious. The model knows that water typically ripples when a stone hits it because it has seen thousands of examples of that event, not because it understands fluid dynamics. This leads to occasional “physics failures,” such as a character eating a piece of cookie but the cookie remaining whole, or a person walking backward through a scene.

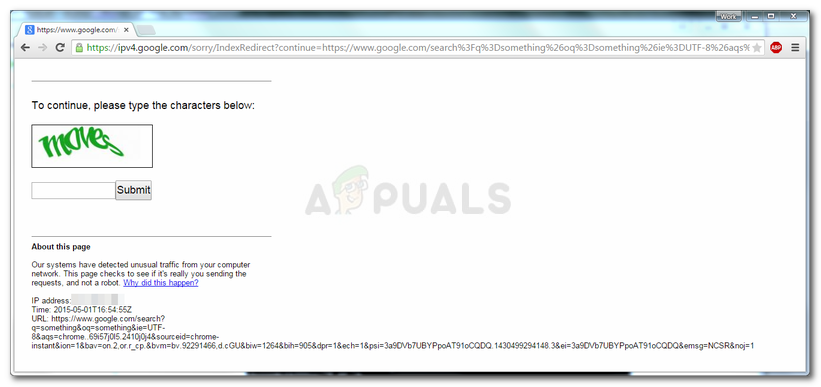

These glitches are precisely why the red-teaming process is critical. OpenAI is working to mitigate the creation of deepfakes and misinformation by implementing C2PA metadata, a provenance standard that attaches a record identifying the content as AI-generated, and by training the model to refuse requests that violate safety policies, such as generating depictions of public figures or graphic violence.

Impact on the Creative Economy and Labor

The introduction of high-fidelity AI video raises urgent questions about the future of the creative workforce. From storyboard artists to VFX houses, the ability to generate a photorealistic scene from a prompt threatens to automate stages of production that previously required hundreds of man-hours and significant budgets.

Industry stakeholders are currently divided into two camps. Some see Sora as a “force multiplier” that allows independent creators to produce cinema-quality visuals without a Hollywood budget. Others view it as an existential threat to entry-level roles in the industry, such as rotoscoping or basic animation, where the “grunt work” is often how new artists learn their craft.

The legal landscape remains equally volatile. The training of these models often involves scraping vast amounts of data from the internet, leading to ongoing debates and lawsuits regarding fair use and intellectual property. The U.S. Copyright Office has historically maintained that works created entirely by AI without human creative control cannot be copyrighted, a position that could significantly impact the commercial viability of AI-generated films.

Comparing Current AI Video Capabilities

| Feature | Traditional CGI/VFX | Early AI Video (Gen-1/2) | Sora (Diffusion Transformer) |
|---|---|---|---|
| Production Time | Weeks/Months | Minutes | Seconds/Minutes |
| Consistency | Perfect | Low (Flickering) | High (Temporal Stability) |
| Control | Frame-by-frame | Prompt-based | Prompt + Scene Simulation |
| Cost | High Capital | Low/Subscription | TBD (Enterprise/Pro) |

The Road to Public Access and Safety

The primary hurdle for Sora’s general release is not the technology, but the safety framework. The potential for the tool to be used in the creation of non-consensual intimate imagery or political disinformation is a significant concern for regulators and the company itself. OpenAI’s current strategy involves a tiered rollout, focusing on feedback from the creative community to refine the model’s guardrails.

Beyond safety, the computational cost of running such a model is immense. Generating a 60-second high-definition clip requires substantial GPU power, suggesting that when Sora does launch, it may initially be available as a high-cost subscription or through an API for enterprise partners rather than a free consumer tool.

For the average user, the immediate impact will likely be seen in the “AI-augmented” content on social media. We are moving toward a hybrid era where the line between captured footage and generated imagery becomes nearly invisible, necessitating a new form of digital literacy for the general public.

The next critical milestone will be the publication of the red-teaming reports and the eventual announcement of a public beta or API access. As the industry awaits these updates, the focus remains on whether the tool will be integrated into existing creative suites or exist as a standalone disruptor.
