The intersection of artificial intelligence and creative expression is undergoing a fundamental shift as generative video tools move from experimental curiosities to professional-grade production assets. This evolution is best exemplified by the recent unveiling of Sora, OpenAI’s text-to-video model, which has sparked a global conversation about the future of cinematography, digital storytelling, and the authenticity of visual media.

By transforming simple text prompts into complex, high-fidelity scenes, Sora is challenging the traditional boundaries of the film industry. The tool does not merely animate static images but simulates physical worlds with a level of consistency and detail that was previously reserved for high-budget CGI studios. This leap in generative video technology represents more than just a technical milestone; it is a disruption of the creative workflow that has remained largely unchanged since the advent of digital editing.

While the technology promises to democratize high-end visual effects, it also introduces significant challenges regarding safety, misinformation, and the displacement of human artists. OpenAI has acknowledged these risks, opting for a phased rollout that includes “red teaming” by experts in misinformation and artist feedback to refine the model’s output and safety guardrails.

The Mechanics of Motion and Consistency

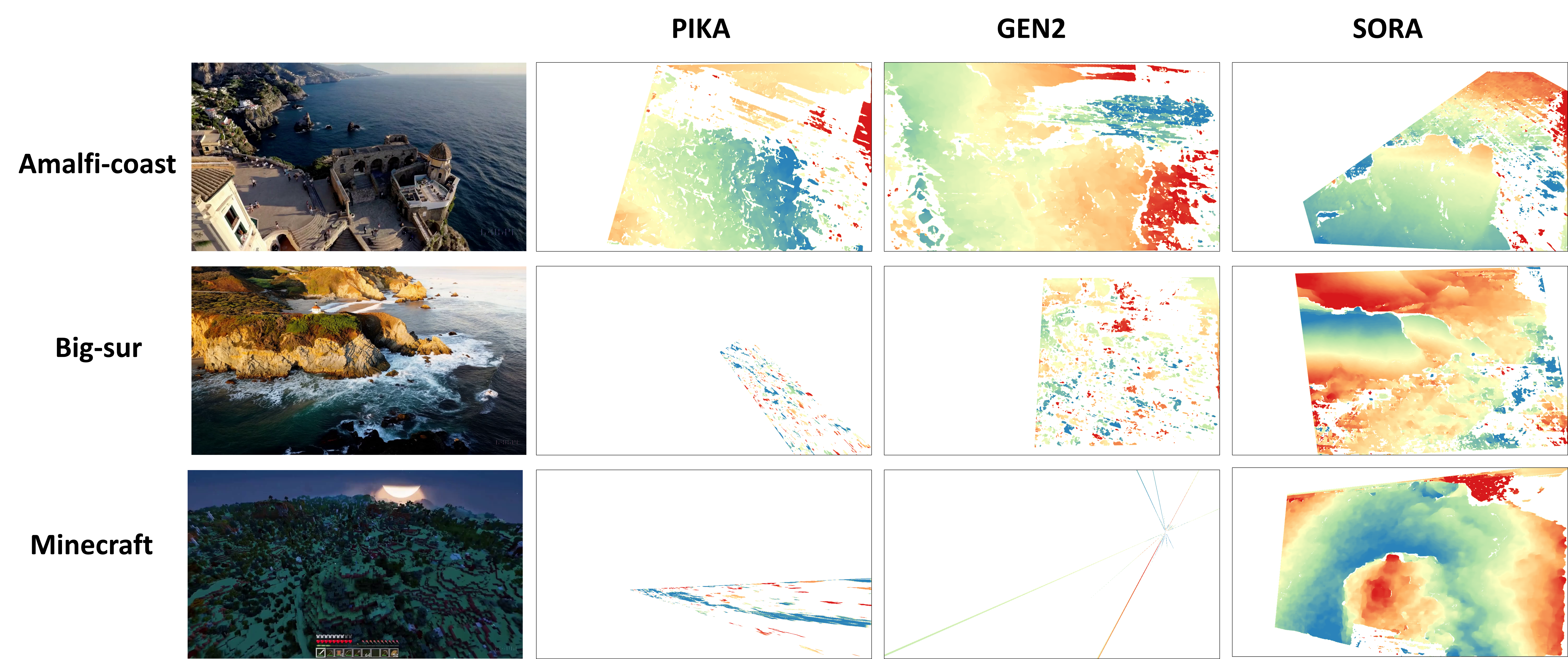

Unlike previous iterations of AI video, which often suffered from “morphing” or erratic movements, Sora is a diffusion model built on a transformer architecture, which helps it maintain temporal consistency. This means that if a character walks behind a tree and reappears, the model keeps the character’s appearance and position consistent, creating a coherent narrative flow across a single shot.

The model’s ability to handle complex camera movements, such as sweeping pans and drone’s-eye views, suggests a deep understanding of 3D space, even though it is trained on 2D data. This capability allows creators to generate cinematic sequences that mimic real-world physics, although the system still occasionally struggles with “causal” physics, such as the way a cookie might crumble when bitten or the precise direction of a liquid pour.

For professional creators, this means the barrier to entry for producing a visual concept or a “mood reel” has vanished. What once required a team of storyboard artists and 3D modelers can now be prototyped in minutes, shifting the role of the director from one of technical execution to one of curated iteration.

Navigating the Ethical and Professional Fallout

The rapid ascent of generative video has sent ripples through labor unions and creative guilds. The primary concern is not just the replacement of entry-level jobs, but the potential for “synthetic media” to be used without consent or compensation for the artists whose work may have informed the training data.

To combat the potential for deepfakes and political manipulation, OpenAI is integrating C2PA metadata into Sora-generated videos. This provenance metadata, which is attached to the file rather than embedded in the pixels, lets viewers and platforms check whether a clip was AI-generated, a critical step as the world enters an era where seeing is no longer believing. However, critics argue that metadata can be stripped, and the sheer volume of synthetic content could overwhelm existing verification systems.
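The critics' point about stripped metadata can be illustrated with a minimal Python sketch. This is a toy model, not the C2PA format: real C2PA manifests are signed with certificate chains, whereas the shared `SIGNING_KEY`, the dictionary layout, and the function names `attach_manifest`, `verify`, and `strip_manifest` below are all hypothetical and chosen only for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical shared key for this demo only; real C2PA signing uses certificates.
SIGNING_KEY = b"demo-key-not-a-real-credential"


def attach_manifest(video_bytes: bytes, generator: str) -> dict:
    """Bundle video bytes with a signed provenance claim (toy format, not C2PA)."""
    claim = {
        "generator": generator,
        "sha256": hashlib.sha256(video_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"video": video_bytes, "manifest": {"claim": claim, "signature": signature}}


def verify(asset: dict) -> str:
    """Return 'verified', 'tampered', or 'unknown' for an asset."""
    manifest = asset.get("manifest")
    if manifest is None:
        # No provenance data: this proves nothing about where the clip came from.
        return "unknown"
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return "tampered"
    if hashlib.sha256(asset["video"]).hexdigest() != manifest["claim"]["sha256"]:
        return "tampered"
    return "verified"


def strip_manifest(asset: dict) -> dict:
    """Simulate a re-upload or re-encode that discards the metadata."""
    return {"video": asset["video"]}


if __name__ == "__main__":
    asset = attach_manifest(b"fake-frames", "hypothetical-video-model")
    print(verify(asset))                  # verified
    print(verify(strip_manifest(asset)))  # unknown
```

In this sketch, editing the video bytes is detectable because the hash no longer matches, but a stripped clip simply comes back as "unknown", which is indistinguishable from a clip that never carried provenance data in the first place.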

The impact on the industry can be broken down into several key areas of disruption:

- Pre-visualization: Rapid prototyping of scenes before physical filming begins.

- Stock Footage: The potential obsolescence of generic B-roll libraries in favor of custom-generated clips.

- Indie Filmmaking: Lowering the financial threshold for creators to achieve “Hollywood-level” visuals.

- Advertising: The ability to create hyper-personalized video ads tailored to specific demographics in real time.

Comparing the Generative Landscape

Sora does not exist in a vacuum. It enters a competitive field where other players are racing to solve the same problems of consistency and resolution. While OpenAI focuses on high-fidelity simulation, other models emphasize speed or open-source accessibility.

| Feature | Sora (OpenAI) | Runway Gen-2 | Pika Labs |
|---|---|---|---|
| Primary Strength | Temporal Consistency | Control & Editing Tools | Animation & Stylization |
| Max Duration | Up to 60 seconds | Shorter segments | Short loops/clips |
| Access Model | Controlled/Red-Teaming | Public/Subscription | Public/Discord-based |
| Physics Simulation | High (Simulated) | Moderate | Moderate |

The Road Toward Public Release

The transition from a research preview to a public tool is fraught with tension. OpenAI’s decision to keep Sora in a limited release phase highlights the volatility of the technology. The company is currently working with a small group of visual artists, designers, and filmmakers to understand how the tool integrates into professional pipelines and where it fails most spectacularly.

Beyond the technical refinements, the legal landscape remains unsettled. U.S. courts are currently weighing whether the use of copyrighted material for training generative AI constitutes “fair use” under U.S. copyright law. The outcome of these cases will likely determine the financial model for future AI video tools and whether artists will receive royalties for their contributions to the training sets.

As the technology matures, the focus is shifting toward “hybrid workflows,” where AI handles the background and environment while human actors and directors maintain control over performance and narrative nuance. This synergy aims to preserve the “human soul” of cinema while leveraging the efficiency of the machine.

The next confirmed milestone for the technology involves the continued expansion of the red-teaming phase and the potential integration of Sora into other OpenAI ecosystem tools, though a general public release date has not yet been officially announced.
