For anyone managing a mountain of digital information, the friction of switching between tools is often the biggest hurdle to actual productivity. I have spent years refining my research workflow, moving from traditional folders to sophisticated tagging systems, but the real breakthrough happened when I stopped treating my AI tools as separate destinations. Pairing NotebookLM with Gemini has been the biggest upgrade I’ve made to my research, transforming a fragmented process into a cohesive intellectual workspace.

Coming from a background in software engineering, I tend to view productivity through the lens of “latency”—not just technical latency, but cognitive latency. The mental cost of jumping from a primary LLM interface to a specialized research notebook is a tax on focus. For a long time, I used NotebookLM as a standalone vault: a place to dump sources, generate briefs, and cross-reference documents that would otherwise vanish into a forgotten folder. It served its purpose, but it existed in a silo.

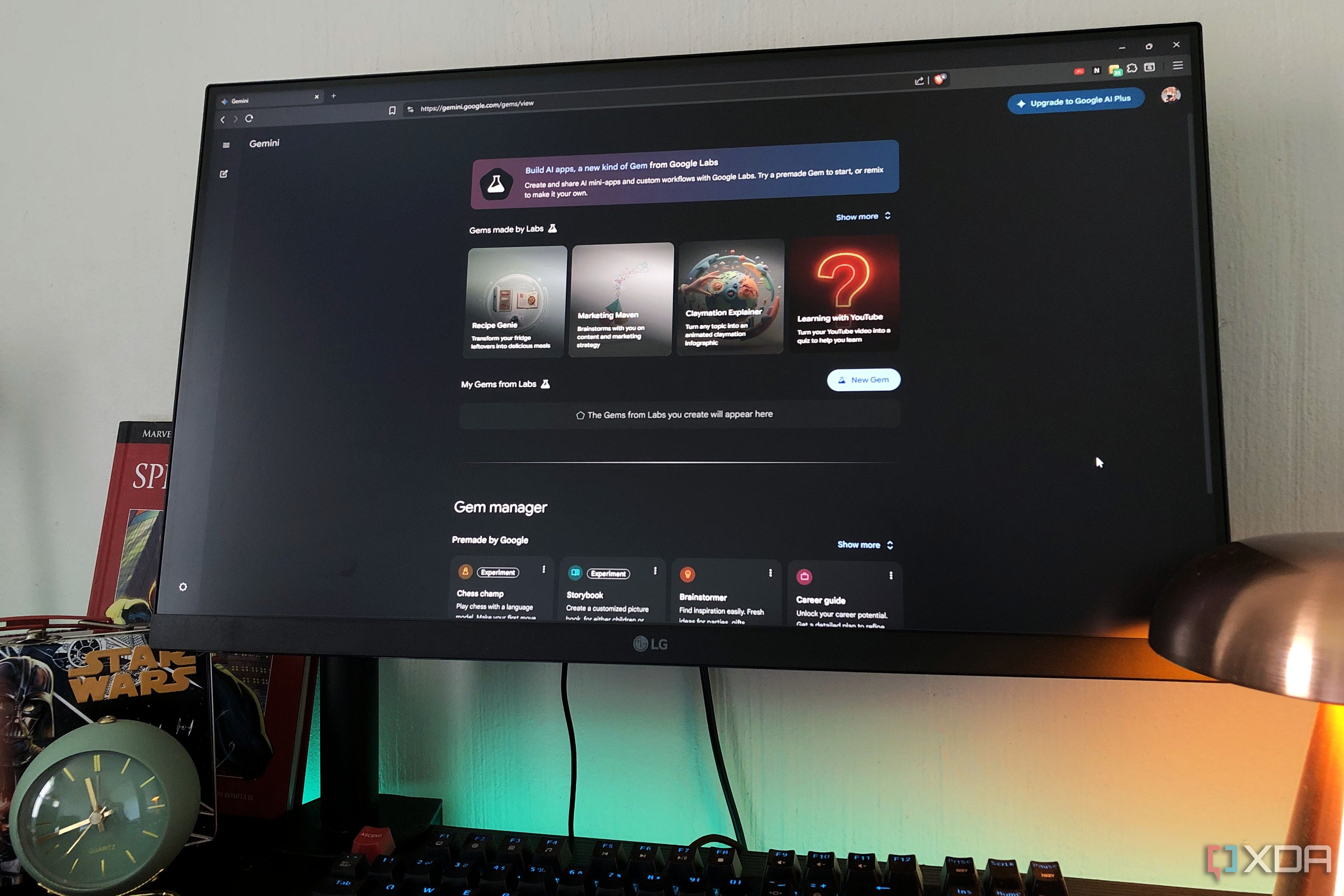

The shift occurred when I integrated these two environments. Rather than treating the notebook as a separate destination to visit and leave, using NotebookLM through the Gemini ecosystem allows the research to live where the drafting and brainstorming happen. This integration removes the “context switch” that often breaks a writer’s flow, allowing the AI to pull from a grounded set of personal sources while maintaining the conversational flexibility of a general-purpose assistant.

Moving Beyond the Standalone Research Vault

To understand why this pairing matters, it helps to look at what NotebookLM does differently from a standard chatbot. Most AI assistants rely on their general training data, which can lead to “hallucinations” or vague generalizations. NotebookLM uses a technique called grounding: the AI’s responses are tethered to the documents you upload and cite the passages they draw from. This makes it an indispensable tool for journalists and researchers who cannot afford to be “approximately correct.”
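To make the idea of grounding concrete, here is a toy Python sketch. It is not how NotebookLM works internally (that is not described here); it only illustrates the principle of answering from supplied sources, citing where the answer came from, and declining when the sources say nothing relevant. The function name `grounded_answer`, the stopword list, the `min_overlap` threshold, and the sample file names are all assumptions made up for illustration.

```python
from __future__ import annotations

import re
from typing import Optional

# Small, hypothetical stopword list so common words don't count as evidence.
STOPWORDS = {"a", "an", "the", "is", "are", "be", "of", "for", "to",
             "what", "how", "does", "do", "in", "on"}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounded_answer(question: str,
                    sources: dict[str, str],
                    min_overlap: int = 2) -> Optional[tuple[str, str]]:
    """Return (source_name, sentence) for the best-matching sentence,
    or None when no sentence shares enough words with the question."""
    query = tokenize(question) - STOPWORDS
    best: Optional[tuple[str, str]] = None
    best_score = 0
    for name, text in sources.items():
        # Naive sentence split on ., ! or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            score = len(query & tokenize(sentence))
            if score > best_score:
                best, best_score = (name, sentence.strip()), score
    # Refuse to answer rather than guess from outside the sources.
    if best_score < min_overlap:
        return None
    return best


if __name__ == "__main__":
    notebook = {
        "framework_spec.txt": (
            "The framework requires multi-factor authentication for all "
            "admin accounts. Logs must be retained for ninety days."
        ),
        "press_release.txt": "The company announced a new funding round on Monday.",
    }
    print(grounded_answer("How long must logs be retained?", notebook))
    print(grounded_answer("What is the capital of France?", notebook))
```

The second question returns `None` because nothing in the sample notebook mentions it. That refusal, rather than a confident guess drawn from general knowledge, is the behavior that separates a grounded answer from an ungrounded one.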

In my previous workflow, the process looked like this: I would conduct research in Gemini, find a key document, upload it to NotebookLM, go to the NotebookLM tab to analyze it, and then copy those insights back into a separate document for drafting. It was a functional loop, but it was inefficient. By bringing the power of the notebook into the Gemini interface, the boundary between “gathering information” and “synthesizing information” disappears.

This integration is particularly effective for complex projects that require cross-referencing multiple long-form documents. Whether it is a 50-page white paper on cybersecurity trends or a series of technical specifications for a new gadget, being able to query a specific corpus without leaving the main chat interface speeds up synthesis.

The Impact on Cognitive Load and Workflow

The primary benefit of this pairing is the reduction of “tool fatigue.” In the modern tech stack, we are often encouraged to use the “best-in-class” tool for every micro-task, but this leads to a fragmented experience. When your research tool is an extension of your primary AI assistant, you can move seamlessly from a high-level query to a deep-dive citation.

For those wondering how this changes the actual output, the difference is most visible in the accuracy of the briefs. When the AI is grounded in a specific notebook, it doesn’t just tell you what is generally true about a topic; it tells you what your sources say about that topic. This distinction is critical for professional reporting and technical analysis.

| Feature | Standalone NotebookLM | Gemini + NotebookLM Integration |
|---|---|---|
| Context Switching | High (Multiple tabs/apps) | Low (Unified interface) |
| Information Retrieval | Manual search within notebook | Conversational retrieval via Gemini |
| Drafting Speed | Slower (Copy/Paste loop) | Faster (Direct synthesis) |
| Source Grounding | Strong (Tethered to docs) | Strong (Tethered via integration) |

Practical Applications for Tech Reporting

In the context of covering start-up culture and AI developments, the volume of information is overwhelming. I often deal with a mix of press releases, investor decks, and technical documentation. Pairing these tools allows me to create a “living knowledge base” for every story I cover.

For example, when analyzing a new cybersecurity framework, I can upload the official documentation to a notebook. Using Gemini, I can then ask, “Based on the uploaded specs, what are the three biggest vulnerabilities this framework addresses?” The AI retrieves the answer from the grounded source, but I can immediately follow up with a general query like, “How does this compare to the industry standards used by other major firms?” without switching windows.

This hybrid approach combines the precision of grounded research with the breadth of a large language model. It allows for a level of nuance that is difficult to achieve when using either tool in isolation.

Who Benefits Most from This Setup?

While this represents a massive upgrade for journalists, the utility extends to several other professional cohorts:

- Software Engineers: Pairing technical documentation and API references with a coding assistant to reduce time spent searching through manuals.

- Students and Academics: Managing vast libraries of PDFs and lecture notes while drafting thesis chapters.

- Legal Professionals: Cross-referencing case law and discovery documents while preparing legal briefs.

- Product Managers: Synthesizing user feedback and market research into product requirement documents (PRDs).

The Future of Grounded Intelligence

The evolution of Gemini and its integration with specialized tools like NotebookLM suggests a move toward “agentic” workflows. We are moving away from a world where we “prompt” an AI to get an answer, and toward a world where we provide the AI with a curated environment—a digital library—and ask it to operate within those boundaries.

The next step in this evolution will likely involve deeper integration with real-time data streams and a more seamless way to organize these “notebooks” across different projects. As Google continues to refine the interplay between its general-purpose models and its specialized research tools, the gap between raw data and finished insight will continue to shrink.

For now, the most immediate improvement for any researcher is to stop treating these tools as separate apps. The power isn’t in the individual tool, but in the pipeline you build between them. By centering your research around a grounded notebook and accessing it through a flexible interface, you eliminate the friction that kills creativity.

As Google continues to roll out updates to the Gemini ecosystem, users should keep an eye on The Keyword, Google’s official blog, for new integration features and expanded context window capabilities.

Have you changed your research workflow with AI? I’d love to hear how you’re organizing your sources—share your thoughts in the comments below.