Nvidia is facing a complex set of logistical and technical hurdles that may hinder the rollout of its next-generation AI hardware. According to the latest data from industry analysis firm TrendForce, supply chain challenges risk delaying Nvidia’s Rubin GPUs, potentially leading to later shipping dates and lower initial volumes than the company and investors had originally anticipated.

The Rubin architecture represents the next leap in Nvidia’s aggressive roadmap to maintain its dominance in the AI accelerator market. However, the transition to this new generation is proving technically demanding. TrendForce now projects that Rubin will account for 22 percent of Nvidia’s high-end GPU shipments in 2026, a notable decrease from its previous forecast of 29 percent.

As a former software engineer, I’ve seen how the “last mile” of hardware validation can derail even the most precise timelines. In this case, the delays aren’t just about shipping containers or chip yields but about the fundamental physics of power and heat. The Rubin systems are pushing the limits of current data center infrastructure, requiring a level of integration that is proving difficult to scale quickly.

The shift in shipment mix suggests that while the demand for AI compute remains insatiable, the physical reality of deploying these chips is creating a bottleneck. This creates a ripple effect across the AI ecosystem, from the cloud service providers waiting to upgrade their clusters to the developers whose next-generation models rely on the specific memory bandwidth Rubin promises.

The Technical Bottlenecks: Memory, Power, and Cooling

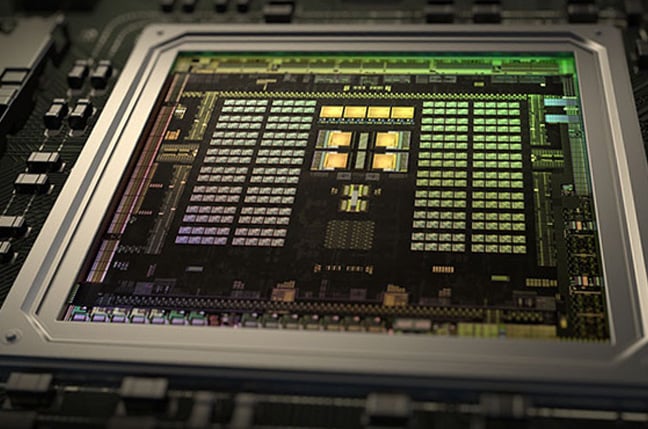

The delays facing the Rubin lineup are rooted in several critical technical dependencies. The most significant is the validation of HBM4 (High Bandwidth Memory 4). The new memory standard is essential for the data throughput that the largest LLMs demand, but verifying its stability and performance at scale is taking longer than planned.

Beyond memory, Nvidia is navigating a complex migration to its faster ConnectX-9 network interface cards (NICs). In a massive AI cluster, the chip is only as fast as the network connecting it to its peers; any friction in the adoption of these NICs directly impacts the overall system availability.

Then there is thermal management. Rubin GPUs draw more power overall, which has pushed them toward more demanding liquid-cooling requirements. Traditional air-cooled data centers are simply not equipped to handle the heat density of these accelerators, so the “delay” is partly a matter of waiting for the world’s data center infrastructure to catch up to the silicon.

A Shift in Product Mix: Blackwell and Hopper

While Rubin faces headwinds, Nvidia’s current-generation Blackwell GPUs are stepping up to fill the gap. TrendForce now expects Blackwell, including the GB300 and B300 models, to account for 71 percent of Nvidia’s high-end GPU shipments this year.

Conversely, the older Hopper architecture is seeing a decline in its share of the mix. TrendForce expects Hopper accelerators to make up about 7 percent of shipments this year, down from a previous estimate of 10 percent. This decline is compounded by geopolitical friction, specifically regarding the H200 GPUs intended for the Chinese market.

The situation in China has been a volatile mix of regulatory bans and strategic reversals. In December, the Trump administration indicated it would allow exceptions to export rules for high-end AI accelerators, with formal approval following in January. In principle, this allowed Nvidia to sell H200s to Chinese customers, provided the company paid a quarter of the revenue from those sales to the U.S. government.

Despite the U.S. green light, the deal faced months of hesitation from Beijing. At a recent GTC event, CEO Jensen Huang said Nvidia was restarting H200 production to fill existing purchase orders from China, but geopolitical uncertainty continues to weigh on total shipment volumes for the Hopper line.

Nvidia GPU Shipment Forecast Comparison

| GPU architecture | Previous forecast (share of shipments) | Current forecast (share of shipments) | Primary driver |
|---|---|---|---|
| Rubin (2026) | 29% | 22% | HBM4 and cooling delays |
| Blackwell | Lower (figure not given) | 71% | Filling the volume gap |
| Hopper | 10% | 7% | China export volatility |
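The current figures sum to exactly 100 percent, which suggests these three architectures make up TrendForce’s entire high-end mix. Under that assumption (TrendForce does not state it explicitly), the unstated previous Blackwell share can be backed out with simple arithmetic, as this small sketch shows:

```python
# Shares of Nvidia high-end GPU shipments (percent), as reported by TrendForce.
current = {"Rubin": 22, "Blackwell": 71, "Hopper": 7}
previous_known = {"Rubin": 29, "Hopper": 10}

# Assumption: Rubin, Blackwell and Hopper together account for 100% of shipments.
current_total = sum(current.values())
implied_prev_blackwell = 100 - sum(previous_known.values())
blackwell_gain = current["Blackwell"] - implied_prev_blackwell

print(f"Current forecast total: {current_total}%")
print(f"Implied previous Blackwell share: {implied_prev_blackwell}%")
print(f"Blackwell gain: {blackwell_gain} percentage points")
```

If the assumption holds, Blackwell’s roughly 10-point gain exactly absorbs Rubin’s 7-point and Hopper’s 3-point reductions.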

The Rise of LPUs and the Memory Price Crisis

Amid the GPU turbulence, TrendForce is bullish on a different piece of silicon: Nvidia’s newly announced Groq LPUs (Language Processing Units). Unlike traditional GPUs, these chips do not rely on external DRAM. Instead, they are designed to work alongside GPUs like Rubin to accelerate the “decode phase” of the inference pipeline, the stage in which a model generates its output tokens one at a time.
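To see why the decode phase is a distinct bottleneck, it helps to compare it with prefill. The prompt is known up front and can be processed in parallel, but generation is strictly sequential: each new token depends on every token before it, and each step generally has to read the model’s weights again, which makes decode speed largely a function of memory bandwidth. The toy sketch below illustrates that loop; `toy_model` is a hypothetical stand-in for a real network, not any Nvidia or Groq API.

```python
from typing import Callable, List


def generate(prompt: List[int], next_token: Callable[[List[int]], int],
             max_new_tokens: int, eos: int) -> List[int]:
    # Prefill: the whole prompt is available at once. Real systems process it
    # in one parallel pass; here we simply copy it into the context.
    context = list(prompt)
    output: List[int] = []
    # Decode: one token per step, and each step depends on all previous tokens,
    # so the steps cannot be parallelized across the output sequence.
    for _ in range(max_new_tokens):
        tok = next_token(context)
        if tok == eos:
            break
        context.append(tok)
        output.append(tok)
    return output


def toy_model(context: List[int]) -> int:
    # Hypothetical "model": predicts the previous token plus one.
    return context[-1] + 1


print(generate([1, 2], toy_model, max_new_tokens=10, eos=5))  # [3, 4]
```

Hardware that shortens each iteration of that loop, for example by keeping weights in fast on-chip memory, raises tokens per second even when the prefill stage stays on the GPU.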

Because these LPUs rely on limited on-chip SRAM, they must be deployed in vast quantities to be effective. TrendForce expects demand for these units to reach several hundred thousand this year, potentially doubling by 2027. This represents a strategic pivot toward specialized inference hardware to complement the general-purpose power of GPUs.
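A rough back-of-the-envelope calculation shows why limited on-chip SRAM translates into large deployment counts: if a model’s weights must fit entirely in SRAM, the number of chips scales with model size divided by per-chip capacity. The inputs below (a 70-billion-parameter model stored at one byte per parameter and 230 MB of SRAM per chip) are hypothetical illustration values, not figures from TrendForce or Nvidia.

```python
import math


def chips_needed(params_billion: float, bytes_per_param: float,
                 sram_mb_per_chip: float) -> int:
    # Weight footprint in MB: 1 billion parameters at 1 byte each is ~1,000 MB.
    weights_mb = params_billion * 1_000 * bytes_per_param
    # Round up: a partially filled chip still counts as a whole chip.
    return math.ceil(weights_mb / sram_mb_per_chip)


# Hypothetical inputs: 70B parameters, 8-bit weights, 230 MB SRAM per chip.
print(chips_needed(70, 1, 230))
```

This is a lower bound, since it ignores the KV cache, activations and any redundancy, so real deployments would need more chips per model replica, and more replicas to serve many users at once.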

However, this appetite for AI infrastructure is driving a crisis in the broader memory market. Consumer DRAM prices are surging, with TrendForce warning of another 45-50 percent increase in the second quarter. This follows a massive 75-80 percent jump in the first quarter.
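Because successive quarterly increases compound rather than add, the two TrendForce ranges imply a steeper cumulative rise than their simple sum suggests. The sketch below assumes both percentages are quarter-over-quarter changes applied to the same price level; that interpretation is an assumption, not something TrendForce spells out here.

```python
def cumulative_multiplier(*quarterly_increases_pct: float) -> float:
    # Each quarter multiplies the price level reached at the end of the prior quarter.
    multiplier = 1.0
    for pct in quarterly_increases_pct:
        multiplier *= 1 + pct / 100
    return multiplier


low = cumulative_multiplier(75, 45)   # low end of both ranges
high = cumulative_multiplier(80, 50)  # high end of both ranges
print(f"{low:.2f}x to {high:.2f}x")  # 2.54x to 2.70x
```

Simply adding the percentages (120 to 130 percent) would understate the combined effect, which under this assumption leaves prices roughly 2.5 to 2.7 times their pre-Q1 level by the end of the second quarter.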

For the average consumer, this means DDR5 memory and SSDs are now selling for more than triple their retail prices from a year ago. The cyclical nature of the memory market, combined with the unprecedented demand for AI-grade HBM, is effectively starving the consumer market of affordable capacity.

Nvidia has not yet officially commented on the specific delays regarding the Rubin lineup. The company’s ability to resolve the HBM4 validation and liquid cooling hurdles will be the primary indicator of whether these projections hold or if the timeline slips further.

The next critical checkpoint for the industry will be the upcoming quarterly earnings reports and official product availability updates, where Nvidia is expected to provide more clarity on the Blackwell ramp-up and the Rubin production schedule.

Do you think the shift toward liquid cooling will fundamentally change how data centers are built, or is this a temporary hurdle for the AI boom? Share your thoughts in the comments.