For years, the narrative surrounding the explosion of generative AI has focused on the “power wall”—the looming moment when the insatiable energy demands of massive data centers outstrip the capacity of the electrical grid. To many, AI has been viewed as a static, heavy load: a digital monolith that draws immense amounts of electricity regardless of whether the grid is struggling or surging.

That paradigm shifted last week at CERAWeek, the premier gathering of energy policymakers and technologists. NVIDIA and Emerald AI unveiled a strategic framework to transform these facilities into power-flexible AI factories, treating them not as liabilities to the grid, but as intelligent assets capable of responding to real-time energy conditions.

By integrating accelerated computing with real-time energy orchestration, this new approach allows AI deployments to connect to the grid faster and operate more efficiently. Rather than requiring utilities to overbuild infrastructure to handle peak demand, these factories can “flex” their power consumption, supporting overall system reliability while continuing to generate the high-value AI tokens that drive the modern economy.

This initiative represents a fundamental rethink of the “power-to-rack” pipeline. It moves the industry away from simply building larger power plants and toward a model of “extreme codesign,” where the software, the chip, and the electrical grid are engineered as a single, cohesive system.

Turning the Power Drain into a Grid Asset

At the heart of this shift is the combination of the NVIDIA Vera Rubin DSX AI Factory reference design and Emerald AI’s Conductor platform. Together, they create a unified architecture that merges compute power, networking, and energy control. This allows a data center to dynamically adjust its workload based on the health and availability of the surrounding grid.

For those of us who have spent time in the trenches of software engineering, this is essentially the application of “load balancing” to the physical world. Instead of just balancing traffic across servers, the system balances the factory’s energy draw against the grid’s capacity.
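
To make the load-balancing analogy concrete, here is a minimal Python sketch of one way a facility might shed deferrable work when the grid tightens. It is not Emerald AI’s Conductor API or NVIDIA’s reference design; the `Job` class, the `deferrable` flag, the `plan_power` function, and every number below are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float   # assumed steady power draw of the job (hypothetical)
    deferrable: bool  # True if the job can pause, e.g. batch training


def plan_power(jobs, grid_limit_kw):
    """Split jobs into (running, paused) for a given grid power limit.

    Non-deferrable jobs always run, even if they alone exceed the limit.
    Deferrable jobs are admitted in list order while headroom remains.
    """
    running = [j for j in jobs if not j.deferrable]
    used_kw = sum(j.power_kw for j in running)
    paused = []
    for job in jobs:
        if not job.deferrable:
            continue
        if used_kw + job.power_kw <= grid_limit_kw:
            running.append(job)
            used_kw += job.power_kw
        else:
            paused.append(job)
    return running, paused


if __name__ == "__main__":
    jobs = [
        Job("inference", 400.0, deferrable=False),
        Job("training-a", 300.0, deferrable=True),
        Job("training-b", 300.0, deferrable=True),
    ]
    # Compare a normal grid limit with a constrained one (made-up values).
    for limit_kw in (1000.0, 750.0):
        running, paused = plan_power(jobs, limit_kw)
        print(limit_kw, [j.name for j in running], [j.name for j in paused])
```

A real orchestrator would presumably weigh job priority, checkpointing cost, and forecasts of grid conditions rather than admitting jobs greedily in list order; the sketch only shows the basic idea of treating the grid limit as a capacity to balance against.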

This efficiency is measured by a new, defining metric: tokens per second per watt. As NVIDIA CEO Jensen Huang noted in a recent conversation with Lex Fridman, power is a primary concern, but not the only one. The goal is to improve this performance-per-watt ratio by orders of magnitude every year. According to NVIDIA, the progress has been staggering; from the Kepler GPU in 2012 to the Vera Rubin platform today, the number of tokens generated within the same power budget has increased by more than one million times.
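
For readers who want to see how the metric is computed, the sketch below works through tokens per second per watt in Python. Because a watt is one joule per second, the metric is equivalent to tokens per joule. The function names and the sample figures are assumptions made up for this example, not NVIDIA measurements.

```python
def tokens_per_second_per_watt(tokens, seconds, avg_power_watts):
    """Throughput (tokens/s) divided by average power draw (W).

    Since 1 W = 1 J/s, the result is also tokens per joule.
    """
    if seconds <= 0 or avg_power_watts <= 0:
        raise ValueError("seconds and avg_power_watts must be positive")
    return tokens / seconds / avg_power_watts


def efficiency_gain(old_value, new_value):
    """How many times more tokens the new system yields in the same power budget."""
    if old_value <= 0:
        raise ValueError("old_value must be positive")
    return new_value / old_value


if __name__ == "__main__":
    # Hypothetical systems: same 60 s window and 10 kW budget, different output.
    old = tokens_per_second_per_watt(tokens=1_200_000, seconds=60, avg_power_watts=10_000)
    new = tokens_per_second_per_watt(tokens=4_800_000, seconds=60, avg_power_watts=10_000)
    print(old, new, efficiency_gain(old, new))
```

Holding the power budget fixed, as in the example, is what makes the comparison meaningful: the gain then reflects how many more tokens the same watts produce.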

To implement this at scale, a coalition of energy giants is stepping in. Companies including AES, Constellation, Invenergy, NextEra Energy, Nscale Energy & Power, and Vistra are collaborating on optimized generation strategies. These include hybrid projects with co-located power sources, which shorten the time it takes for a factory to come online while providing stability to the broader electrical ecosystem.

Accelerating Infrastructure with Digital Twins and Robotics

Building the physical infrastructure for the intelligence era cannot happen at the pace of traditional construction. To bridge this gap, NVIDIA’s ecosystem partners are deploying AI and simulation to compress timelines that previously took years.

In the solar sector, Maximo—a robotics company incubated at AES—recently completed a 100-megawatt robotic solar installation at the Bellefield site. By using AI-driven robotics and the NVIDIA Isaac Sim framework, Maximo demonstrated that autonomous installations can operate at utility scale, increasing speed and safety while reducing the labor bottleneck.

Nuclear energy is seeing a similar digital transformation. TerraPower, in collaboration with SoftServe, is using an NVIDIA Omniverse-powered digital twin platform to streamline the siting and design of advanced nuclear plants. By simulating early-stage engineering, the company aims to reduce design cycles from years to months for its Natrium energy plants, ensuring faster grid integration.

Recognizing that hardware is only as good as the people operating it, Adaptive Construction Solutions has launched a national registered apprenticeship initiative with NVIDIA. The program is designed to train a skilled workforce in the critical trades necessary to build and maintain these complex AI-driven power systems.

Solving the ‘Power-to-Rack’ Challenge

The final piece of the puzzle is the physical integration of energy and compute. GE Vernova, Schneider Electric, and Vertiv are focusing on the “power-to-rack” challenge, ensuring that AI infrastructure is designed as an integrated energy system from day one.

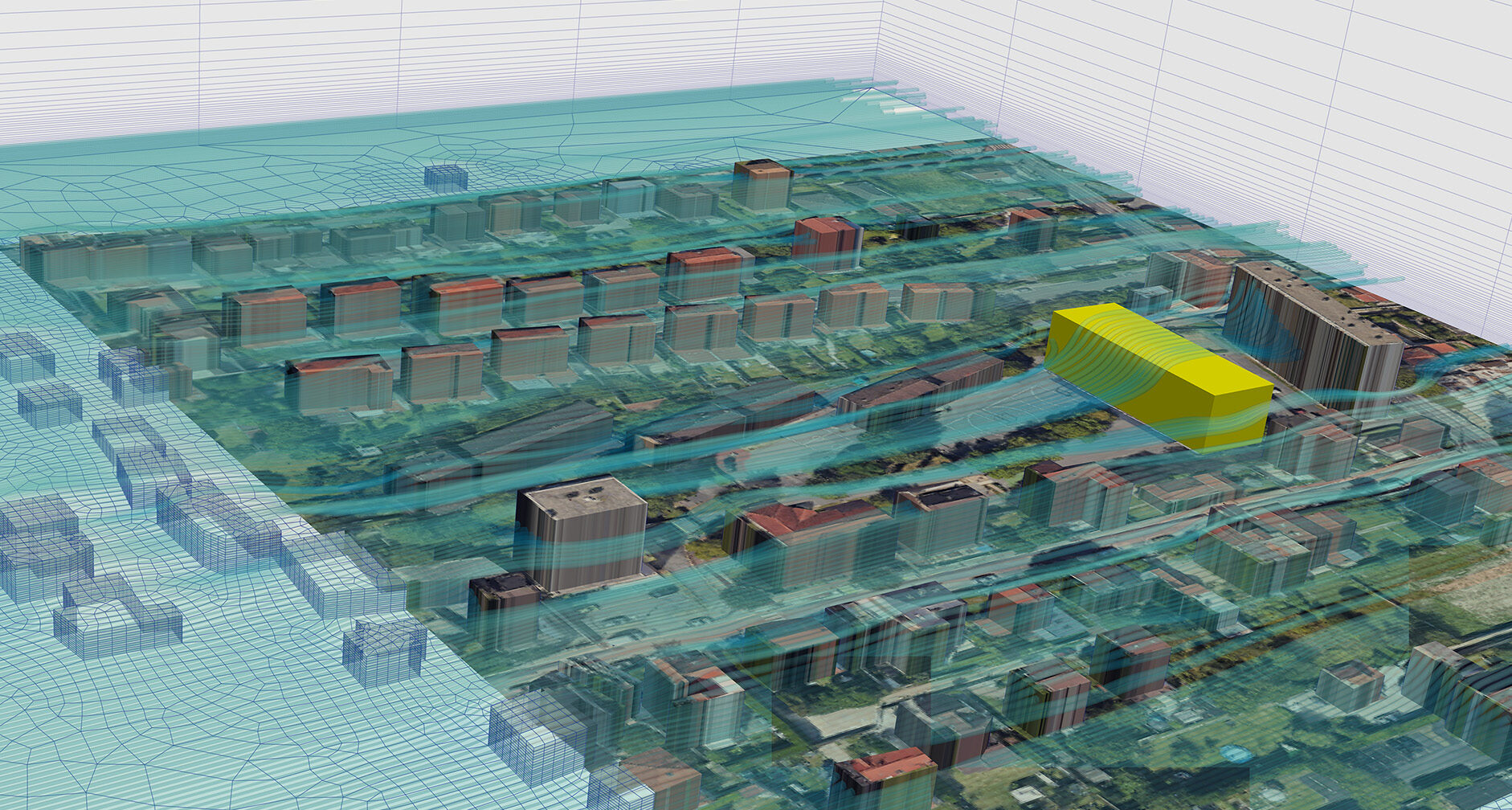

GE Vernova is utilizing high-fidelity digital twins aligned with the NVIDIA Omniverse DSX Blueprint. This allows developers to simulate how an AI factory’s load will interact with substations and grid behavior before a single piece of equipment is installed, reducing the risk of interconnection failures.

Schneider Electric has introduced new validated reference designs and lifecycle digital twin architectures developed with AVEVA. By simulating cooling and power controls within Omniverse, operators can optimize the performance per watt and validate their designs before the buildout begins.

Complementing this is Vertiv’s approach to converged, simulation-ready physical infrastructure. By using repeatable power and cooling building blocks integrated with the Vera Rubin DSX design, Vertiv aims to reduce the complexity of deployment, allowing AI factories to scale with greater confidence and speed.

The AI Factory Ecosystem: Key Contributions

| Partner | Focus Area | Primary Technology/Goal |
|---|---|---|
| Emerald AI | Energy Orchestration | Conductor platform for grid-responsive compute |
| TerraPower | Nuclear Energy | Digital twins to shorten Natrium plant design |
| Maximo | Solar Robotics | Autonomous utility-scale solar installation |
| GE Vernova | Grid Simulation | System-level modeling of factory loads |
| Schneider Electric | Power & Cooling | Validated reference designs for performance per watt |
| Vertiv | Physical Infrastructure | Repeatable, simulation-ready power blocks |

This collective effort supports what Jensen Huang describes as the “five-layer AI cake,” a computing paradigm where energy serves as the foundational layer. Without a resilient, flexible power base, the layers above—chips, infrastructure, models, and applications—cannot scale.

The next phase of this rollout will likely focus on the deployment of the first wave of hybrid, co-located power projects and the expansion of the national apprenticeship programs to meet the immediate demand for skilled labor. As these power-flexible AI factories move from reference designs to operational reality, the industry will have a clearer picture of how much “flex” the grid can actually handle.

Do you think the energy grid can keep up with the AI boom, or are we just delaying the inevitable? Share your thoughts in the comments.