Amazon Web Services is evolving one of its most fundamental building blocks to meet the specific demands of the generative AI era. The company is transforming its S3 storage service into a file system for AI agents, a move designed to bridge the gap between massive-scale object storage and the precise, nimble file operations required by autonomous AI systems.

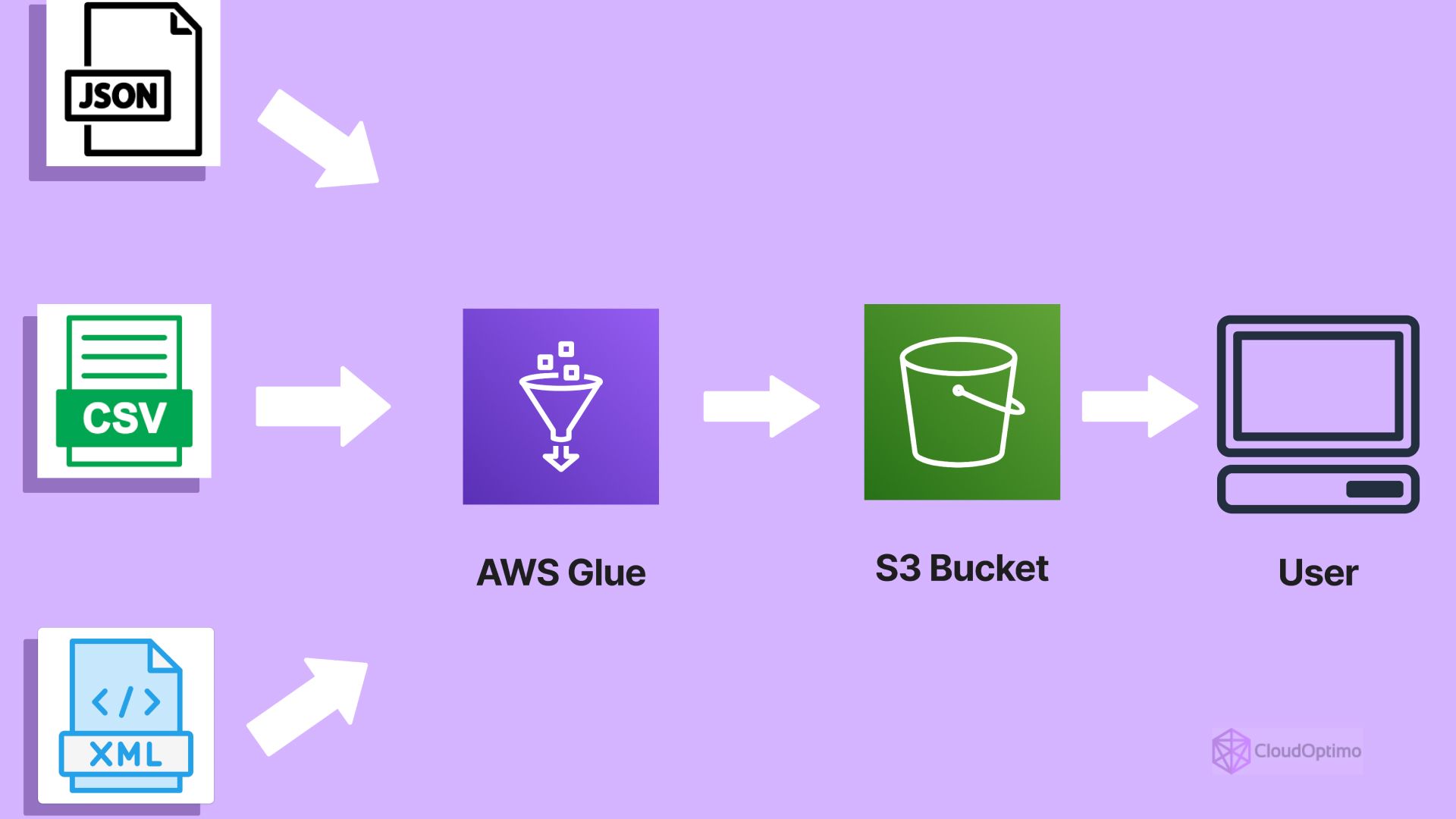

For years, S3 has functioned as a “bucket” for unstructured data—ideal for storing vast amounts of information but cumbersome for applications that need to modify small pieces of a file or maintain a strict hierarchy of permissions. By introducing native file system capabilities, AWS is attempting to remove the “glue code” that has historically slowed down the deployment of AI agents that need to read, write, and remember data in real time.

The shift addresses a critical bottleneck in AI development: the friction between how data is stored at scale and how an AI agent actually interacts with that data to perform tasks. By allowing agents to treat S3 as a native file system, developers can now build systems that store memory and share data without the latency or complexity of traditional object storage interfaces.

Solving the ‘Glue Code’ Problem in AI Infrastructure

In the current cloud landscape, developers often face a choice between the scalability of object storage and the functionality of a traditional file system. To get the best of both worlds, many have relied on FUSE-based (Filesystem in Userspace) tools to simulate a file system on top of S3. These include open-source utilities like s3fs or AWS’s own Mountpoint.

While these tools offered a workaround, they often came with significant trade-offs. According to Kaustubh, a technical lead involved in the effort, these simulations frequently lacked proper locking mechanisms, consistency guarantees, and efficient ways to handle incremental updates. For an AI agent, which may need to update a specific line of a log or a piece of its “memory” without rewriting an entire multi-gigabyte object, these limitations were a major hurdle.

The latest S3 Files approach integrates these operations natively. By supporting native file permissions, locking, and incremental updates, AWS is effectively turning a storage warehouse into a workspace. This means existing file-based tools can work with S3 without requiring developers to rewrite their entire application logic for object storage.
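To make the locking point concrete, here is a minimal sketch of two agents appending to a shared log through ordinary file calls. It assumes the S3-backed file system honors POSIX advisory locks (`flock`), which the article does not confirm; the temporary directory is only a stand-in for a hypothetical mount point, and `append_record` is an illustrative helper, not an AWS API.

```python
import fcntl
import os
import tempfile


def append_record(path: str, record: str) -> None:
    """Append one line to a shared file while holding an exclusive advisory lock."""
    with open(path, "a", encoding="utf-8") as f:
        # Block until no other process holds the lock on this file.
        fcntl.flock(f, fcntl.LOCK_EX)
        try:
            f.write(record.rstrip("\n") + "\n")
            f.flush()
            os.fsync(f.fileno())  # push the bytes to storage before releasing the lock
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)


# Stand-in for a hypothetical S3 Files mount point (assumption, not a real path).
workspace = tempfile.mkdtemp()
log_path = os.path.join(workspace, "agent.log")

append_record(log_path, "agent-1: started research")
append_record(log_path, "agent-2: drafted report")

with open(log_path, encoding="utf-8") as f:
    log_lines = f.read().splitlines()
```

Nothing in this code is specific to S3, which is the point the article is making: if the mount behaves like a file system, existing file-based tooling needs no object-storage adapter.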

“Agents also become easier to build, as they can directly read and write files, store memory, and share data. It reduces the need for extra glue code like sync jobs, caching layers, and file adapters,” Jain said.

Why This Matters for Autonomous Agents

To understand the impact, it helps to look at how an AI agent functions. Unlike a standard chatbot, an agent is designed to execute a sequence of tasks, such as researching a topic, writing a report, and then updating a database. This requires a form of “short-term memory” and “long-term storage” that is easily accessible.

When an agent is forced to use standard object storage, it must often download a file, modify it locally, and then re-upload the entire object back to the cloud. This process is not only slow but also creates data consistency risks if multiple agents are trying to access the same file. Native file system support allows for incremental, in-place updates, where only the changed portion of a file is written, which cuts latency and compute overhead. File locking, in turn, coordinates agents that write to the same file.
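The cost difference between the two update styles can be illustrated with a small simulation. This is a sketch under stated assumptions: the object store is modeled as an in-memory dictionary rather than real S3, the file lives in a temporary directory rather than on an S3-backed mount, and both helper functions are hypothetical names used only to count the bytes each approach moves.

```python
import os
import tempfile


def update_via_object_store(store: dict, key: str, new_line: bytes) -> int:
    """Object-style update: fetch the whole object, modify it, write it all back.

    Returns the number of bytes moved (download plus upload).
    """
    body = store[key]          # simulated GET: the entire object comes down
    updated = body + new_line  # modify locally
    store[key] = updated       # simulated PUT: the entire object goes back up
    return len(body) + len(updated)


def update_via_file(path: str, new_line: bytes) -> int:
    """File-style update: append only the new bytes. Returns bytes written."""
    with open(path, "ab") as f:
        return f.write(new_line)


existing = b"x" * 1_000_000      # roughly 1 MB of prior agent memory
line = b"step 42 complete\n"     # the small piece the agent wants to add

# Object-style path, with a dict standing in for a bucket.
store = {"memory.log": existing}
object_bytes = update_via_object_store(store, "memory.log", line)

# File-style path, with a temp directory standing in for a mounted file system.
path = os.path.join(tempfile.mkdtemp(), "memory.log")
with open(path, "wb") as f:
    f.write(existing)
file_bytes = update_via_file(path, line)

print(f"object-style bytes moved: {object_bytes}")
print(f"file-style bytes written: {file_bytes}")
```

In this toy model the object-style update moves about twice the full object size for a change of a few bytes, while the append writes only the new line. Real-world numbers depend on caching, network behavior, and how the service implements writes.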

Comparing Storage Paradigms

| Feature | Standard S3 (Object) | S3 Files (File System) |
|---|---|---|
| Update Method | Full object overwrite | Incremental updates |
| Concurrency | Limited locking | Native file locking |
| Integration | API-based (Put/Get) | Standard file-based tools |
| AI Agent Utility | Bulk data lakes | Active memory & state |

The Broader Implications for Developer Workflows

As a former software engineer, I’ve seen firsthand how “infrastructure friction” can kill a project’s momentum. The requirement to build custom caching layers or synchronization jobs just to make a cloud bucket behave like a hard drive is a classic example of technical debt. By removing this layer, AWS is lowering the barrier to entry for startups building agentic workflows.

This transition also aligns with the broader trend of “stateful” AI. For an agent to be truly useful, it needs to maintain a state—a record of what it has done and what it needs to do next. Providing a reliable, scalable file system allows that state to be persisted across different compute instances, meaning an agent can be “paused” on one server and “resumed” on another without losing its place in a complex task.
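The pause-and-resume idea can be sketched as a checkpoint file that any compute instance with access to the shared file system can read. This is a minimal illustration, not a description of how AWS or any agent framework implements it: the state layout, the `run_agent` loop, and the file name are all hypothetical, and the atomic-rename behavior of `os.replace` is assumed to carry over to the shared mount.

```python
import json
import os
import tempfile


def save_state(path: str, state: dict) -> None:
    """Write the checkpoint to a temp file, then swap it into place."""
    tmp = path + ".tmp"
    with open(tmp, "w", encoding="utf-8") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    # Atomic on local POSIX file systems; assumed (not confirmed) on the shared mount.
    os.replace(tmp, path)


def load_state(path: str) -> dict:
    """Load the last checkpoint, or start fresh if none exists."""
    try:
        with open(path, encoding="utf-8") as f:
            return json.load(f)
    except FileNotFoundError:
        return {"next_step": 0, "done": []}


def run_agent(path: str, steps: list, stop_after=None) -> dict:
    """Run remaining steps, checkpointing after each; optionally pause early."""
    state = load_state(path)
    executed = 0
    while state["next_step"] < len(steps):
        if stop_after is not None and executed >= stop_after:
            break  # simulate the agent being paused
        state["done"].append(steps[state["next_step"]])
        state["next_step"] += 1
        save_state(path, state)
        executed += 1
    return state


steps = ["research topic", "write report", "update database"]
state_path = os.path.join(tempfile.mkdtemp(), "agent_state.json")

first_run = run_agent(state_path, steps, stop_after=1)  # "server A" pauses after one step
second_run = run_agent(state_path, steps)               # "server B" picks up where A left off
```

The second call never repeats the first step because it reads the checkpoint rather than starting over, which is the behavior a shared, persistent file system makes straightforward.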

The move also simplifies the security model. Native support for permissions means that developers can apply granular access controls to specific files or directories within S3, rather than relying on broader bucket policies that can be cumbersome to manage as an application scales.
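As a rough illustration of per-file access control, the sketch below uses ordinary POSIX mode bits on a temporary directory. Whether S3 Files exposes permissions as POSIX mode bits is an assumption here; the article says only that permissions are supported natively. The helper names and file names are hypothetical.

```python
import os
import stat
import tempfile


def create_private_file(directory: str, name: str, content: str) -> str:
    """Create a file that only its owner can read or write (mode 0o600)."""
    path = os.path.join(directory, name)
    # O_EXCL makes creation fail if the file already exists.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        f.write(content)
    os.chmod(path, 0o600)  # set explicitly so the result does not depend on the umask
    return path


def share_read_only(path: str) -> None:
    """Let other users read the file while keeping write access with the owner."""
    os.chmod(path, 0o644)


# Stand-in for a directory on a hypothetical S3-backed mount.
workspace = tempfile.mkdtemp()
notes = create_private_file(workspace, "scratch_notes.txt", "draft reasoning\n")
report = create_private_file(workspace, "final_report.txt", "published summary\n")
share_read_only(report)

notes_mode = stat.S_IMODE(os.stat(notes).st_mode)
report_mode = stat.S_IMODE(os.stat(report).st_mode)
print(oct(notes_mode), oct(report_mode))
```

Here the agent's scratch notes stay private while the finished report is readable by others, a distinction that a single bucket-wide policy would express less directly.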

Next Steps and Implementation

The introduction of these capabilities is part of a larger push by AWS to optimize its ecosystem for the Amazon Bedrock and SageMaker environments. As more enterprises move from simple prompt-and-response AI to fully autonomous agents, the demand for high-performance, stateful storage will only increase.

Developers looking to implement these changes should monitor the official AWS documentation for the rollout of S3 Files and the specific API updates that enable native locking and incremental writes. The focus moving forward will likely be on how these file systems integrate with real-time data streams and vector databases to provide a comprehensive “brain” for AI agents.
