The global race to integrate artificial intelligence into the enterprise is no longer just a battle of algorithms and large language models; it has evolved into a massive capital expenditure campaign centered on the physical layers of computing. Whereas headlines often focus on the “magic” of generative AI, the actual engine of this transformation is a sprawling network of data centers, high-performance silicon, and edge computing nodes.

This shift is underscored by staggering financial projections. According to Gartner, worldwide spending on AI is forecast to reach $2.53 trillion in 2026. More tellingly, infrastructure is the primary driver of this investment, with spending in that sector alone expected to hit $1.37 trillion—representing more than 54 percent of all AI-related expenditures.

For those of us who spent years in software engineering before moving into reporting, this “hardware pivot” is a familiar cycle, but the scale here is unprecedented. We are seeing a convergence where traditional server and storage giants are being forced to reinvent themselves as “AI factories,” while scrappy software vendors are building the resilience layers necessary to keep these massive systems from collapsing under their own complexity.

The 2026 CRN AI 100 highlights 25 vanguards in this space, identifying the companies providing the CPUs, GPUs, and edge orchestration required to move AI from a pilot project to a production-grade reality. These companies are the architects of the “physical AI” era, bridging the gap between a cloud-based prompt and a real-world industrial application.

The Silicon Foundation and the GPU Hunger

At the center of the infrastructure surge is the relentless demand for compute. Nvidia remains the most visible player, providing the GPUs and cloud services that serve as the industry’s baseline. However, the ecosystem is diversifying as enterprises seek alternatives to avoid vendor lock-in and manage costs.

AMD has positioned itself as a primary challenger, offering a comprehensive stack of CPUs and GPUs supported by the ROCm libraries and Vitis AI inference software. Similarly, Intel is leveraging its Xeon processors and Gaudi accelerators to provide high-performance alternatives, utilizing the OpenVINO toolkit to optimize models across diverse hardware platforms.

This silicon layer is further extended by companies like Qualcomm, whose Snapdragon processors are pushing “agentic AI” toward the edge. By moving inference from the data center to the device—be it a smartphone, an automobile, or an IoT sensor—Qualcomm is reducing the latency and bandwidth costs that currently plague centralized AI models.

Redefining the Data Center as an AI Factory

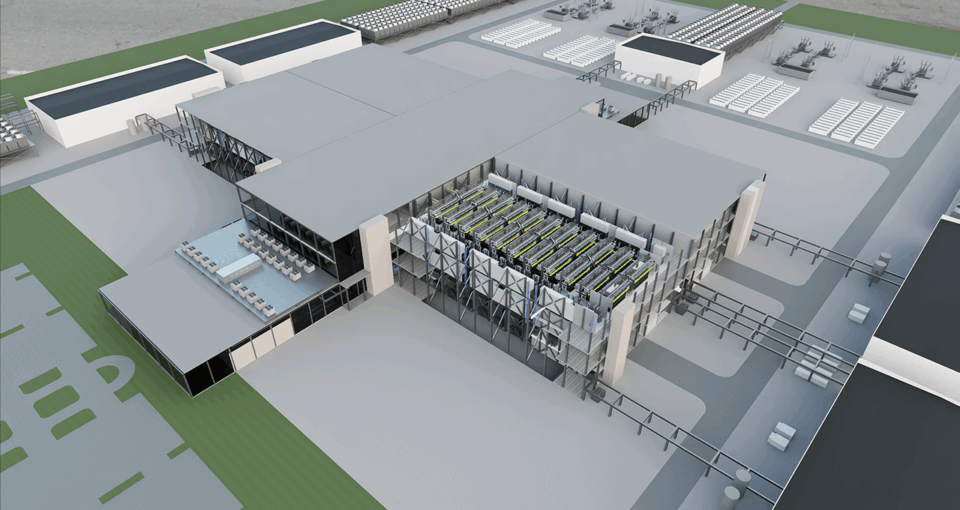

The traditional data center is being replaced by the “AI Factory,” a concept where compute, storage, and networking are tightly integrated to eliminate bottlenecks. When a GPU sits idle because it is waiting for data from a sluggish storage array, the ROI of the entire system plummets. This has created a massive opportunity for high-performance storage and networking specialists.

Dell Technologies and HPE are leading this transition. Dell’s AI Factory approach provides an end-to-end portfolio of infrastructure, while HPE has introduced the Private Cloud AI, which aims to deploy secure AI workbenches in a matter of hours. These giants are no longer just selling boxes; they are selling the orchestration of an entire AI lifecycle.

Storage is where the most critical bottlenecks occur. Companies like DDN are pushing GPU utilization toward 99 percent to ensure that training pipelines remain fluid. Meanwhile, Everpure (formerly known as Pure Storage) and NetApp are focusing on “AI-ready” data pipelines, ensuring that the massive volumes of unstructured data required for training are cleaned, indexed, and delivered at scale.
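To make the utilization argument concrete, here is a minimal sketch of how idle time inflates the effective price of compute. The hourly cost below is a made-up placeholder, not a vendor figure, and `cost_per_useful_gpu_hour` is a hypothetical helper written for this illustration.

```python
def cost_per_useful_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of one hour of actual GPU work.

    hourly_cost: what the GPU costs per wall-clock hour (assumed figure).
    utilization: fraction of that hour the GPU spends computing, in (0, 1].
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_cost / utilization


# Illustrative assumption only: a GPU that costs $4.00 per wall-clock hour.
HYPOTHETICAL_HOURLY_COST = 4.00

for utilization in (0.50, 0.80, 0.99):
    effective = cost_per_useful_gpu_hour(HYPOTHETICAL_HOURLY_COST, utilization)
    print(f"{utilization:.0%} utilized -> ${effective:.2f} per useful GPU-hour")
```

Under these assumed numbers, a GPU that is busy only half the time effectively costs twice as much per hour of real work, which is why storage vendors pitch utilization figures near 99 percent.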

| Metric | 2025 (Estimated) | 2026 (Forecast) | 2027 (Forecast) |
|---|---|---|---|
| Total AI Spending | – | $2.53 Trillion | – |
| Infrastructure Spending | ~$0.94 Trillion | $1.37 Trillion | ~$1.75 Trillion |
| % of Total AI Spend | – | 54% | – |
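The headline figures can be cross-checked with a few lines of arithmetic. This sketch uses only numbers quoted in this article: the $2.53 trillion total, the $1.37 trillion infrastructure figure, and the 28 percent infrastructure growth rate attributed to Gartner for 2027. The variable names are illustrative.

```python
# Figures quoted in the article, in trillions of US dollars.
total_ai_2026 = 2.53
infra_2026 = 1.37
infra_growth_2027 = 0.28  # growth rate the article attributes to Gartner

# Share of total 2026 AI spending that goes to infrastructure.
infra_share_2026 = infra_2026 / total_ai_2026

# Infrastructure spending implied for 2027 if that growth rate holds.
infra_2027 = infra_2026 * (1 + infra_growth_2027)

print(f"Infrastructure share of 2026 AI spend: {infra_share_2026:.1%}")
print(f"Implied 2027 infrastructure spend: ${infra_2027:.2f} trillion")
```

The share works out to just over 54 percent and the implied 2027 figure to roughly $1.75 trillion, so the quoted numbers are consistent with one another.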

The Rise of Edge Intelligence and Agentic Ops

The next frontier is the “edge,” the physical location where data is actually generated. Moving AI to the edge allows for real-time decision-making without the need to round-trip data to a central cloud. This is where the 2026 CRN AI 100 sees a surge in specialized orchestration and hardware.

Acer is emerging as a key supplier here, integrating Nvidia’s Grace Blackwell GB10 Superchips into its Veriton GN100 AI Mini workstations. This allows enterprises to run complex models in a small physical footprint. Similarly, Lenovo’s Hybrid AI portfolio, including ThinkEdge servers, allows for AI inferencing to happen locally, reducing the reliance on distant data centers.

Orchestrating these distributed environments requires a modern layer of software. Zededa is focusing on edge orchestration to manage the full AI stack across heterogeneous hardware, while StorMagic provides the hyperconverged infrastructure (HCI) necessary to run AI workloads at the edge. These tools are essential for companies managing thousands of remote nodes across different geographies.

The Resilience Layer: Security and Data Management

As AI models ingest more proprietary corporate data, the risk of data leakage and system failure grows. The “scrappy” software vendors mentioned in the AI 100 are filling a critical gap: AI resilience. If a data center hosting a primary LLM goes offline, the business impact can now be catastrophic.

Cohesity and Veeam Software are evolving from traditional backup providers into AI data management specialists. Cohesity’s Gaia platform allows AI agents to mine value from historical unstructured data, while Veeam is implementing context-aware LLM firewalls to sanitize data before it ever reaches a model. This ensures that “safe AI” is not just a policy, but a technical reality.

Networking also plays a defensive role. Cisco Systems is integrating AI into its security and observability portfolios, using purpose-built silicon and “agentic operations” to optimize workloads on a global scale. By employing Hybrid Mesh Firewalls, Cisco is helping enterprises implement zero-trust segmentation, ensuring that an AI-driven breach in one sector of the network doesn’t compromise the entire infrastructure.

The trajectory of AI infrastructure is moving toward a state of total convergence. We are moving away from a world of separate “servers,” “storage,” and “networks” and toward a unified “AI fabric” where the hardware is invisible and the data flows seamlessly from the edge to the core. The companies listed in the 2026 CRN AI 100 are not just vendors; they are the ones building the plumbing for the next decade of computing.

The next major checkpoint for the industry will be the 2027 spending cycle, where Gartner expects infrastructure growth to continue at a rate of 28 percent. As the first wave of “AI Factories” comes online, the focus will likely shift from raw capacity to operational efficiency and energy sustainability.

Do you think the current spending on AI infrastructure is sustainable, or are we seeing a “hardware bubble”?