Artificial intelligence can now generate lines of code faster than any human can possibly type. For a new generation of developers, the process of building software has shifted from meticulous manual architecture to something more intuitive—and chaotic—known as “vibe coding.” Using tools like Anthropic’s Claude Code and OpenAI’s Codex, developers are shipping products at a velocity that was unthinkable only a year ago.

The momentum is dizzying. Boris Cherny, the creator of Claude Code, has noted that the latest version of the tool was written entirely by Claude Code itself. This recursive loop of AI building AI suggests a future where the act of writing software is nearly instantaneous. However, as the barrier to entry drops and the speed of deployment spikes, a critical friction point has emerged: AI coding tools are accelerating software development—but trust is becoming the bottleneck.

From my time as a software engineer, I remember the anxiety of a production deployment—the double-checking of every semicolon and the rigorous peer reviews intended to catch a single catastrophic bug. In the “vibe coding” era, that rigor is often traded for speed. While the results can be impressive, the lack of a verification layer is introducing subtle vulnerabilities and “AI slop” into professional codebases, creating a precarious environment for the companies relying on this software.

The fragility of the “vibe”

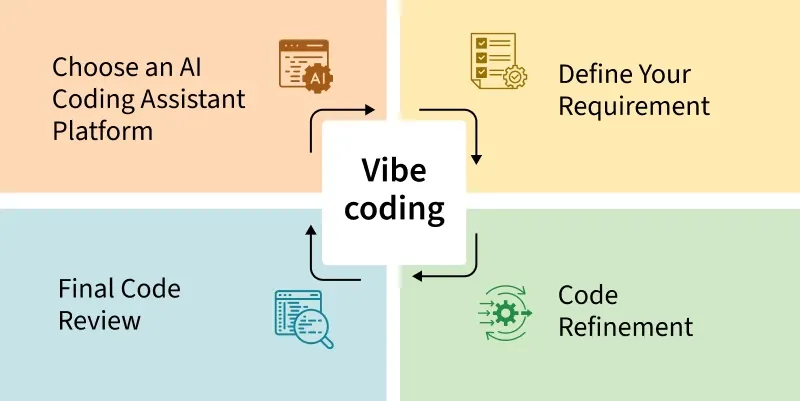

Vibe coding refers to a style of development where the programmer provides high-level prompts and “vibes” with the AI, iterating rapidly based on output rather than deep structural planning. This approach works remarkably well for prototypes and small-scale apps, but it struggles with the stability required for enterprise-grade systems. The risk is not only a total crash but also the introduction of “silent” bugs: errors that don’t break the system immediately but create security holes or performance drags that are difficult to trace.

Even the tools leading this charge are not immune to the pitfalls of rapid, AI-driven iteration. Claude Code recently came under scrutiny after its own source code was accidentally leaked due to a packaging mistake. For the developer community, this served as a stark reminder: when you automate the process of shipping code, you also automate the process of shipping mistakes.

This instability has already caught the attention of platform gatekeepers. Apple has recently escalated its crackdown on AI-powered app builders, removing the tool “Anything” from the App Store. The company cited rules against apps that execute unreviewed code, reflecting a growing concern that AI-generated software could bypass traditional review processes and flood the ecosystem with low-quality or dynamically changing applications.

The enterprise verification gap

For a solo founder, a bug in a prototype is a learning experience. For a Fortune 500 company, a minor vulnerability in a sprawling codebase can be a disaster. In large-scale environments, where millions of code changes flow through a system annually, the bottleneck has shifted. The challenge is no longer how to write the code, but how to verify that the code is correct, secure, and compliant with internal governance.

Itamar Friedman, cofounder and CEO of Qodo (formerly CodiumAI), argues that today’s large language models (LLMs) are designed to complete tasks, not to question them. This creates a “governance gap” in which the AI will happily provide a solution that works in isolation but violates a company’s specific security protocols or architectural standards.

To address this, Qodo recently raised $70 million in Series B funding to develop what Friedman calls “artificial wisdom.” The goal is to move beyond simple code generation and toward a “trust layer” that can enforce the “tribal knowledge” of an organization.

Bridging the gap: Generation vs. Governance

| Feature | Vibe Coding (Standard LLMs) | Governance-Led AI (e.g., Qodo) |
|---|---|---|
| Primary Goal | Task completion and speed | Code integrity and compliance |
| Context | General training data | Organization-specific “tribal knowledge” |
| Verification | Human manual review | Automated rules based on past PRs |
| Risk Profile | Higher chance of “AI slop” | Focus on reducing technical debt |

The quest for “artificial wisdom”

In a large organization, “good” code isn’t just about whether the program runs; it’s about whether it follows the specific way that company handles data, manages memory, or structures its APIs. This is the “tribal knowledge” that usually takes a new hire months to learn through mentorship and failed pull requests.

Friedman explains that Qodo attempts to automate this learning process by analyzing an organization’s historical data—including past pull requests, developer comments, and previous changes—to create a set of automated rules. By turning historical human judgment into an enforceable layer, the tool can flag AI-generated code that violates the company’s internal standards before it ever reaches production.
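The idea of an enforceable rule layer can be illustrated with a minimal sketch. This is not Qodo’s implementation, which the article does not describe in detail. It assumes a hypothetical setup in which review history has already been distilled into a few regular-expression rules, and it checks only the lines that a unified diff adds. The rule names, patterns, and the `check_added_lines` function are all invented for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """A hypothetical organizational rule, e.g. distilled from past review comments."""
    rule_id: str
    pattern: str   # regular expression matched against each added line
    message: str   # explanation shown to the author


@dataclass(frozen=True)
class Violation:
    rule_id: str
    line_number: int   # 1-based position within the diff text
    line: str
    message: str


# Illustrative rules only; a real system would derive these from PR history.
RULES = [
    Rule("no-eval", r"\beval\(",
         "Avoid eval(); past reviews rejected it for security reasons."),
    Rule("no-bare-except", r"^\s*except\s*:",
         "Catch specific exceptions instead of a bare except."),
    Rule("no-hardcoded-secret", r"(?i)(password|api_key)\s*=\s*['\"]",
         "Load secrets from configuration, not from source code."),
]


def check_added_lines(diff_text: str, rules=RULES) -> list[Violation]:
    """Return violations found on lines that a unified diff adds (prefix '+')."""
    violations = []
    for number, raw in enumerate(diff_text.splitlines(), start=1):
        # Skip file headers ('+++') and anything that is not an added line.
        if not raw.startswith("+") or raw.startswith("+++"):
            continue
        added = raw[1:]
        for rule in rules:
            if re.search(rule.pattern, added):
                violations.append(
                    Violation(rule.rule_id, number, added, rule.message)
                )
    return violations
```

Passing a diff that adds the line `api_key = "abc123"` to `check_added_lines` would yield a `no-hardcoded-secret` violation, while removed and context lines are ignored. Pattern matching of this kind is far cruder than learning from developer comments, but it shows the shape of the mechanism: human judgment is captured once and then applied automatically to every change.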

This approach is already in use at major companies, including Nvidia, Walmart, Ford, and Texas Instruments. These companies face a dual pressure: they want the competitive advantage of AI-driven speed, but they cannot afford the risk of compromising systems that support millions of customers or critical infrastructure.

The industry currently overestimates how much trust LLMs warrant in the short term and underestimates the need for a robust trust layer. Without a way to automatically verify that AI-generated code adheres to an organization’s specific constraints, the time saved in writing software will be lost to debugging and patching it.

As the industry moves forward, the focus is shifting toward “flow engineering”—systems where one model generates code and another, more critical model critiques it. The next major milestone for the sector will be the integration of these trust layers directly into the IDEs (Integrated Development Environments) used by millions, potentially turning the “vibe” into a verifiable science.
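The generate-then-critique pattern described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not any vendor’s pipeline: the two models are represented by plain Python callables, and `stub_generator` and `stub_critic` are hypothetical stand-ins for real model calls. All names here are invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Critique:
    approved: bool
    issues: list[str] = field(default_factory=list)


# Hypothetical interfaces standing in for two separate models.
Generator = Callable[[str, list[str]], str]   # (task, feedback) -> code
Critic = Callable[[str], Critique]            # code -> critique


def generate_with_review(task: str, generator: Generator, critic: Critic,
                         max_rounds: int = 3) -> tuple[str, int, bool]:
    """Alternate generation and critique until approval or max_rounds.

    Returns (last_code, rounds_used, approved).
    """
    feedback: list[str] = []
    code = ""
    for round_number in range(1, max_rounds + 1):
        code = generator(task, feedback)
        critique = critic(code)
        if critique.approved:
            return code, round_number, True
        # Feed the critic's objections into the next generation attempt.
        feedback = critique.issues
    return code, max_rounds, False


def stub_generator(task: str, feedback: list[str]) -> str:
    """Stand-in generator: adds input validation only once asked to."""
    if any("validate" in issue for issue in feedback):
        return ("def area(w, h):\n"
                "    if w < 0 or h < 0:\n"
                "        raise ValueError('negative size')\n"
                "    return w * h\n")
    return "def area(w, h):\n    return w * h\n"


def stub_critic(code: str) -> Critique:
    """Stand-in critic: rejects code that lacks input validation."""
    if "raise ValueError" not in code:
        return Critique(False, ["validate inputs: reject negative sizes"])
    return Critique(True)
```

With the stubs above, `generate_with_review("area", stub_generator, stub_critic)` is rejected on the first round and approved on the second. The round limit matters in practice: if the critic never approves, the loop returns the last attempt flagged as unapproved, so a human reviewer can step in.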

We are entering an era where the most valuable skill for a developer may no longer be the ability to write code, but the ability to audit it. The tools are here; the trust is still being built.
