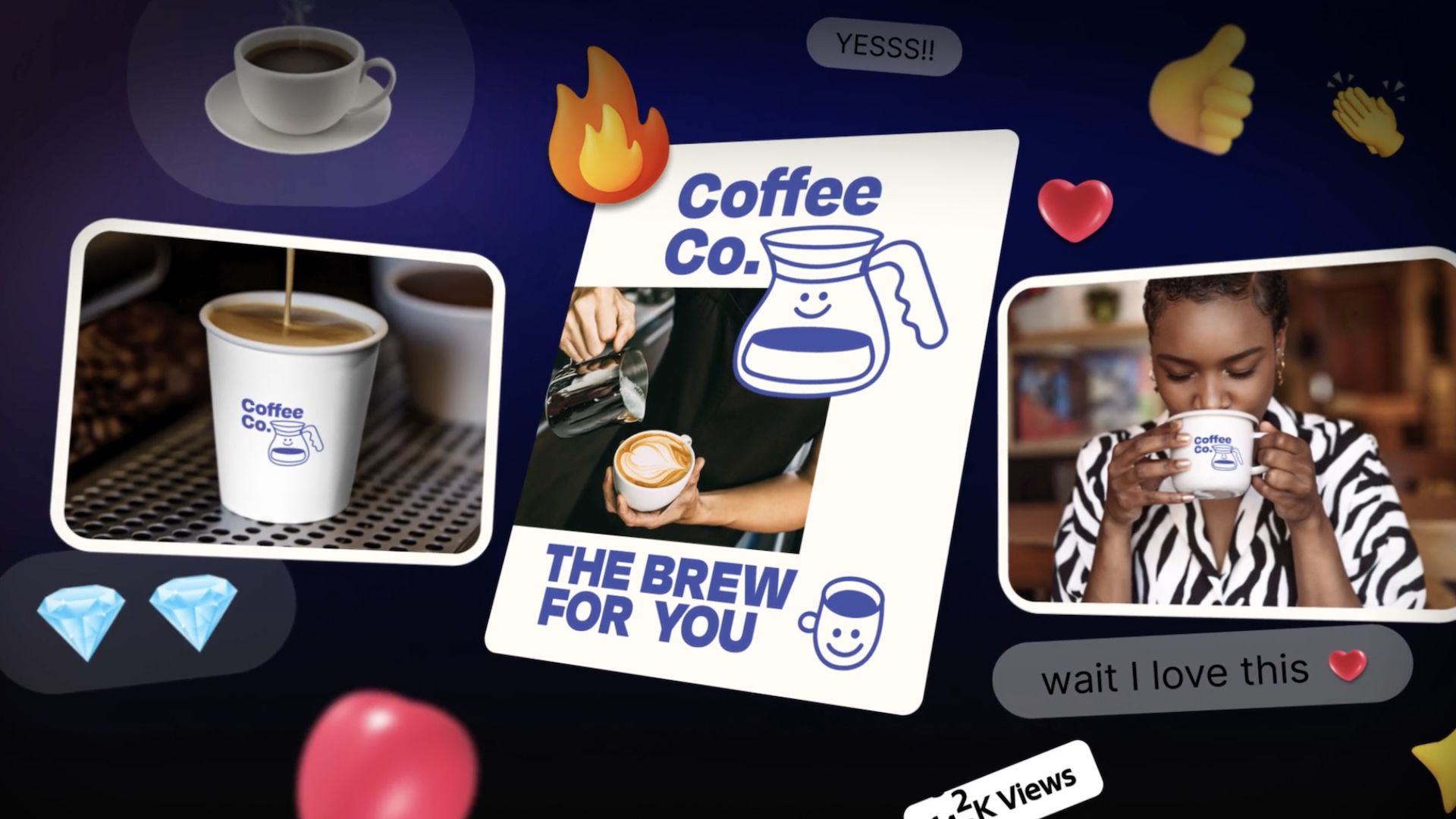

Adobe is positioning its latest AI evolution not as a simple set of tools, but as a fundamental shift in the creative process. With the introduction of the Adobe Firefly AI Assistant, the software giant is moving toward what it calls “agentic creativity”—a model where an AI agent doesn’t just execute a single command, but orchestrates complex workflows across the entire Creative Cloud suite.

For years, generative AI in design has largely functioned as a point solution: you use a prompt to generate an image in Photoshop or a text effect in Illustrator. The Firefly AI Assistant aims to change this dynamic by introducing a single conversational interface capable of coordinating tasks across Photoshop, Premiere, Lightroom, Express, and Illustrator. Instead of the user manually moving assets between apps and repeating instructions, the assistant can theoretically handle the “connective tissue” of a project, allowing creators to describe a desired outcome in plain language and letting the agent determine the best path to achieve it.

This move toward conversational editing reflects a broader industry trend where AI is evolving from a passive tool into an active collaborator. By integrating these capabilities into a unified interface, Adobe is attempting to reduce the technical friction that often slows down professional production, effectively turning the software suite into a responsive ecosystem rather than a collection of separate programs.

Defining the ‘Creative Director’ Role

The transition to agentic AI often sparks anxiety among professionals about the devaluation of technical skill. Adobe appears to be preempting this by reframing the user’s role. According to David Wadhwani, an executive at Adobe, the goal is to preserve “humans in the loop,” shifting the creator’s responsibility from manual execution to high-level curation.

“Our belief is that agentic AI should serve human creativity, making creation more accessible, expressive and powerful than ever before. We’re developing a new Adobe creative agent, a new way of working that empowers you to become the creative director of your own story. You set the vision, apply your taste and make the calls that only you can make,” Wadhwani stated.

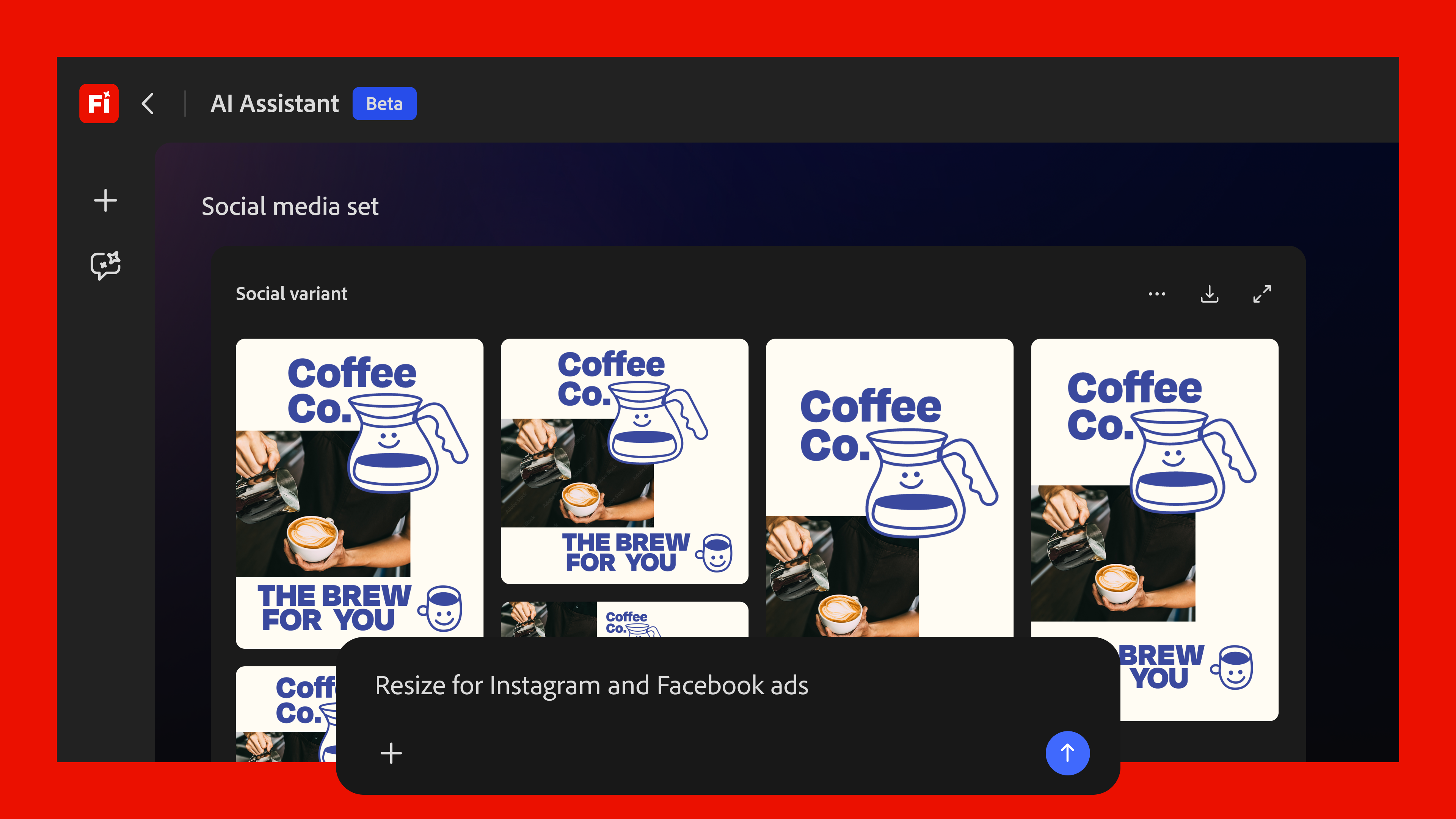

In this framework, the Adobe Firefly AI Assistant acts as a production house. The user provides the vision and the “taste,” while the agent manages the orchestration of various models, tools, and production processes. This division of labor is intended to eliminate the repetitive, time-consuming tasks that typically occupy a significant portion of a designer’s day, such as resizing assets for multiple platforms or coordinating color palettes across different file types.

Beyond the Prompt: Interactive Controls and Consistency

While text-to-image and text-to-video prompts are the most visible parts of generative AI, Adobe is integrating more granular controls to ensure the output is professional-grade. The AI Assistant will not rely solely on text; it will incorporate interactive elements like buttons and sliders that adapt based on the specific project. This hybrid approach allows users to guide the AI with precision, avoiding the “slot machine” effect where creators repeatedly prompt and hope for a lucky result.

To solve the problem of visual drift—where AI-generated images vary too much in style across a single project—Adobe is leaning into its Firefly Custom Models. These allow brands and individual artists to train the AI on their own specific aesthetic, ensuring that the assistant’s output adheres to a consistent visual identity. By combining custom models with the agent’s ability to orchestrate workflows, Adobe is attempting to create a professional pipeline that is both automated and highly controlled.

An Open Ecosystem of Models

Recognizing that no single AI model can dominate every creative niche, Adobe is expanding Firefly’s capabilities through partner integrations. The company is incorporating a variety of external models to enhance its video and image editing tools. This strategy allows Adobe to leverage the specialized strengths of different AI providers—such as those from Google, Runway, and Luma AI—while keeping them contained within the familiar Creative Cloud environment.

By acting as a hub for multiple generative models, the Firefly AI Assistant can potentially switch between a high-fidelity image model for a still and a specialized motion model for a video clip, all while maintaining the user’s overarching project settings. This “model-agnostic” approach suggests that Adobe is less interested in winning the “best model” war and more interested in winning the “best workflow” war.

The Firefly AI Assistant is scheduled to enter public beta in the coming weeks, providing the first opportunity for users to test whether “agentic creativity” can actually survive the rigors of professional production pipelines.

We invite readers to share their thoughts on the shift toward agentic AI in the comments below. Do you see this as a tool for empowerment or a risk to technical craft?