The race for artificial intelligence supremacy is becoming a war of attrition over compute power. In a move that signals both exploding demand for large language models and a strategic shift in the cloud infrastructure market, Anthropic has reportedly signed a $1.8 billion cloud computing deal with Akamai Technologies.

The deal, first reported by Bloomberg on Friday, comes as Anthropic struggles to keep pace with the rapid adoption of its Claude AI. While the company has deep ties with giants like Amazon and Google, this partnership suggests a diversification strategy aimed at securing the massive amounts of processing power required to maintain performance as its user base scales.

For Akamai, the agreement is a transformative win. Long known as the industry standard for content delivery networks (CDNs) and cybersecurity, Akamai has been aggressively pivoting toward “generalized cloud” services. The confirmation of a massive contract with a “frontier model provider”—which Bloomberg identifies as Anthropic—validates Akamai’s attempt to compete with the hyperscalers in the AI era.

The Scaling Crisis: Claude’s 80x Growth

The sheer scale of the compute requirement is driven by a surge in how developers are using Claude. Speaking at the “Code with Claude” developer conference in San Francisco, Anthropic CEO Dario Amodei revealed that the company has seen “80x growth” in annualized revenue and usage. This spike is largely attributed to the model’s increasing adoption for complex coding tasks and enterprise automation.

From a technical standpoint, meeting 80x growth is not as simple as adding more servers. It requires coordinating large GPU fleets, high-speed interconnects and power-dense data centers. When a model becomes a primary tool for coding, where latency and reliability are non-negotiable, the infrastructure has very little margin for error. By partnering with Akamai, Anthropic is likely seeking to use Akamai’s distributed edge architecture to reduce latency and increase the availability of Claude’s inference capabilities.

Akamai’s Pivot and Market Surge

The market reacted almost instantly to the news. On Thursday, Akamai announced its first-quarter financial results, mentioning a significant deal with a “leading frontier model provider” without naming the partner. Once the connection to Anthropic became clear, Akamai’s stock surged, closing the regular session up 26.58% at $147.71, with late trading pushing gains as high as 29.62%.

This deal provides a critical proof of concept for Akamai’s cloud strategy. By attracting a high-profile AI lab, Akamai is showing it can handle some of the most demanding workloads in the modern tech stack. The company’s quarterly results also showed strength, beating analyst expectations on both the top and bottom lines.

| Metric | Q1 Reported Value | Analyst Estimate Variance |
|---|---|---|
| Revenue | $1.074 billion | +0.17% |
| Earnings Per Share (EPS) | $1.61 | +8.78% |
| Year-to-Date Stock Gain | 73.57% | N/A |
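
For readers who want to see what those percentages imply, the short Python sketch below backs out the baseline figures from the reported numbers. It assumes each variance is a simple percentage over the analyst consensus and that the 26.58% gain is measured against the prior session's close; the function name `implied_baseline` is hypothetical, and the derived values are back-of-the-envelope estimates, not figures reported by Akamai or Bloomberg.

```python
def implied_baseline(reported: float, pct_change: float) -> float:
    """Return the baseline that `reported` exceeds by `pct_change` percent.

    Assumes reported = baseline * (1 + pct_change / 100).
    """
    return reported / (1 + pct_change / 100)


# Figures taken from the article; the outputs are derived, not reported.
revenue_estimate = implied_baseline(1.074, 0.17)   # billions of USD
eps_estimate = implied_baseline(1.61, 8.78)        # USD per share
prior_close = implied_baseline(147.71, 26.58)      # USD per share

print(f"Implied revenue estimate: ${revenue_estimate:.3f} billion")
print(f"Implied EPS estimate:     ${eps_estimate:.2f}")
print(f"Implied prior close:      ${prior_close:.2f}")
```

Under those assumptions, the consensus would have been about $1.072 billion in revenue and about $1.48 in EPS, and the stock would have closed near $116.69 before the surge.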

Strategic Implications for the AI Ecosystem

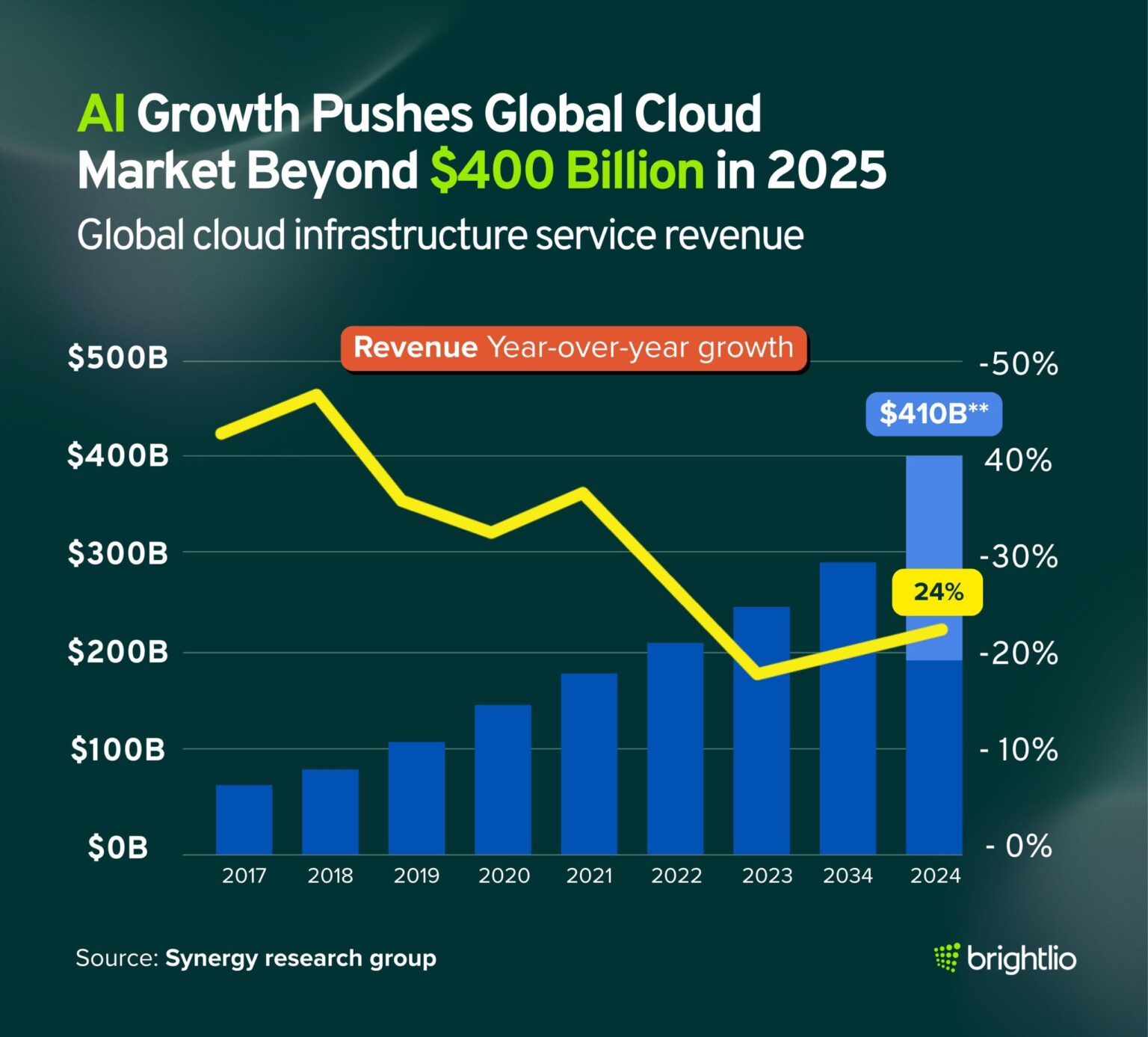

The $1.8 billion commitment highlights a broader trend: the “compute crunch.” As frontier models grow in size and capability, the demand for H100s and subsequent GPU generations has created a bottleneck that only the most well-capitalized firms can navigate.

This partnership creates a new dynamic in the cloud hierarchy. For years, the “Big Three” (AWS, Azure, and Google Cloud) have dominated the infrastructure required to train and deploy frontier models. Anthropic’s move toward Akamai suggests that AI labs are eager to avoid vendor lock-in and are willing to bet on alternative cloud providers that can offer competitive pricing or specialized edge capabilities.

However, some questions remain. It is currently unclear whether this deal is focused primarily on inference—the process of running the model for users—or if Akamai is providing the raw compute necessary for training new versions of Claude. Given Akamai’s strength in edge computing, a focus on inference would allow Anthropic to push the model’s “brain” closer to the end-user, significantly speeding up response times for developers worldwide.

Disclaimer: This article contains financial data and market analysis. It is intended for informational purposes only and does not constitute investment advice.

The industry now looks toward Akamai’s next quarterly filing and potential official confirmation from Anthropic regarding the specific technical scope of the partnership. As the “Code with Claude” momentum continues, the ability to secure stable, scalable compute will likely be the deciding factor in who leads the next generation of AI automation.
