The rush to integrate generative AI into corporate workflows has revealed a humbling reality for many executives: an AI is only as capable as the data it can access. While the promise of automated insights and hyper-efficiency is alluring, a significant gap remains between the desire to innovate and the technical readiness of the underlying corporate archives. For most organizations, the hurdle isn’t the choice of a Large Language Model, but rather the chaotic state of their internal data management.

To truly leverage artificial intelligence, companies must rethink how they manage their data and shift their focus from the “brain” of the AI to the “library” it reads from. Without a rigorous strategy for data curation, businesses risk “garbage in, garbage out,” where AI models produce hallucinations or inaccurate outputs based on fragmented, outdated, or contradictory internal documents. The transition from legacy data silos to an AI-ready infrastructure is becoming the primary differentiator between companies that achieve scalable ROI and those that remain stuck in the pilot phase.

This challenge is particularly acute in the enterprise sector, where decades of digital transformation have often resulted in “data graveyards”—vast repositories of PDFs, spreadsheets, and emails stored across disparate cloud services and local servers. For an AI to provide reliable business intelligence, it requires high-quality, structured, and labeled data. When a company lacks a centralized data governance framework, the AI cannot distinguish between a final contract from 2024 and a draft from 2018, leading to operational risks and strategic errors.
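
The contract example can be made concrete with a small sketch. It assumes each document carries metadata for its status and effective date; the `DocumentRecord` fields, paths, and contract id below are hypothetical and only illustrate how a governance layer might pick the authoritative version.

```python
from dataclasses import dataclass
from datetime import date


# Hypothetical metadata record; the field names are illustrative assumptions.
@dataclass(frozen=True)
class DocumentRecord:
    path: str
    contract_id: str
    status: str      # e.g. "draft" or "final"
    effective: date


def authoritative_version(records, contract_id):
    """Return the most recent record marked "final" for a contract, or None."""
    finals = [
        r for r in records
        if r.contract_id == contract_id and r.status == "final"
    ]
    return max(finals, key=lambda r: r.effective, default=None)


records = [
    DocumentRecord("archive/acme_draft.pdf", "ACME-001", "draft", date(2018, 3, 1)),
    DocumentRecord("contracts/acme_final.pdf", "ACME-001", "final", date(2024, 6, 15)),
]

best = authoritative_version(records, "ACME-001")
print(best.path)  # contracts/acme_final.pdf
```

Without the `status` and `effective` fields, both files are just PDFs with similar text, which is exactly the ambiguity described above.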

The Architecture of AI-Ready Data

The fundamental shift required is a move toward a “data fabric” or a unified data layer. In the past, data management was often viewed as a storage problem—how much space do we have and how do we back it up? In the age of AI, data management is a retrieval and quality problem. The goal is to create a single source of truth that is accessible, clean, and contextually rich.

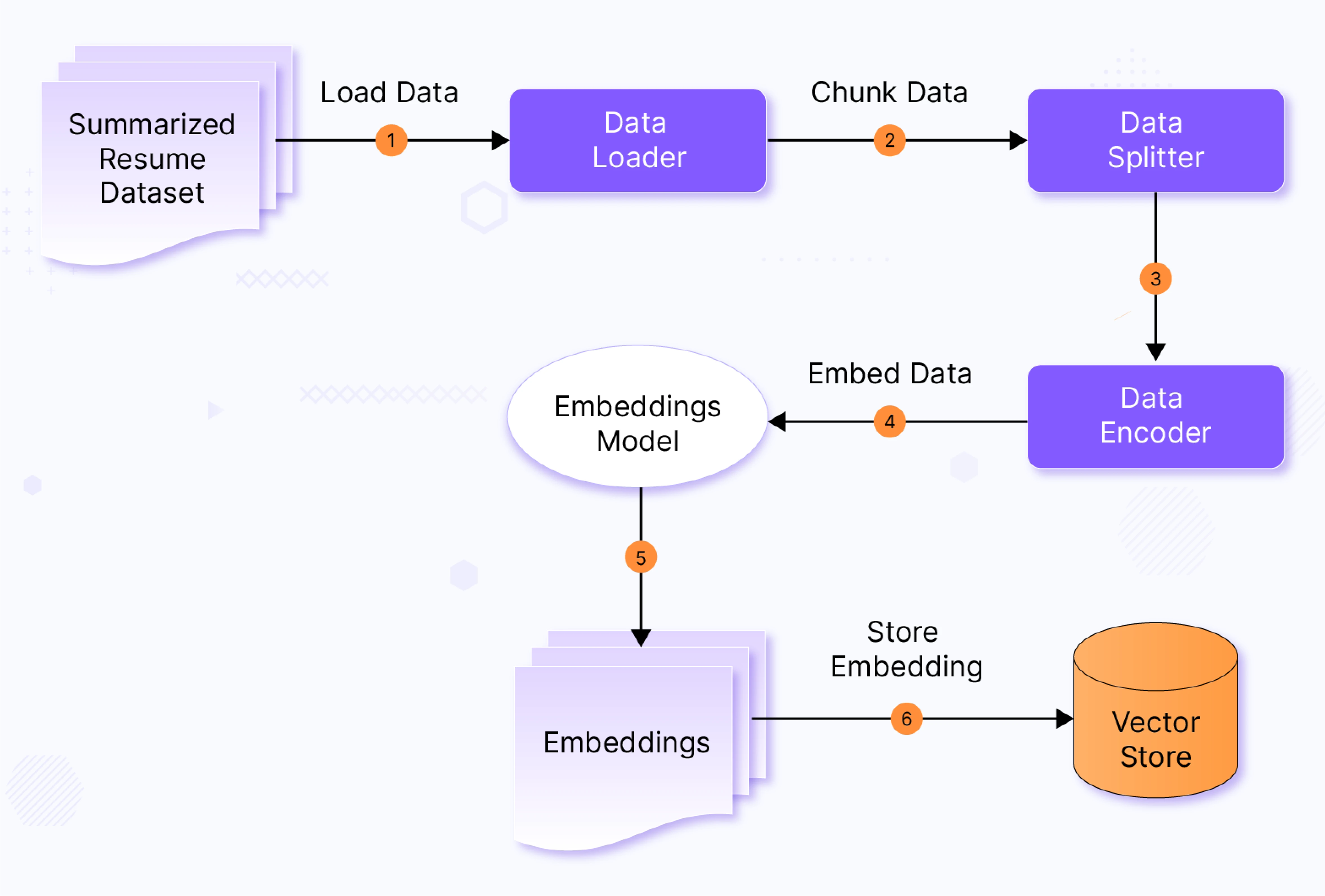

A critical component of this architecture is the implementation of Retrieval-Augmented Generation (RAG). Instead of relying solely on the general knowledge a model was trained on, RAG allows a company to “ground” the AI in its own private data. By retrieving relevant documents from a verified internal database before generating a response, the AI can cite its sources, drastically reducing the likelihood of hallucinations. Still, RAG only works if the data being retrieved is accurate and up-to-date.
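
As a rough illustration of the retrieval step, the sketch below ranks a few in-memory documents against a question and builds a prompt that asks the model to cite its sources. It is a toy under stated assumptions: word-count cosine similarity stands in for the embedding search a production system would typically use, the document ids and texts are invented, and the call to the language model itself is left out.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the ids of the top-k documents that share terms with the query."""
    q = Counter(tokenize(query))
    scored = [
        (cosine(q, Counter(tokenize(text))), doc_id)
        for doc_id, text in documents.items()
    ]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(query, documents, k=2):
    """Assemble a grounded prompt; sending it to a model is out of scope here."""
    sources = retrieve(query, documents, k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in sources)
    prompt = (
        "Answer using only the sources below and cite them by id.\n"
        f"{context}\n\nQuestion: {query}"
    )
    return prompt, sources


# Invented example documents.
documents = {
    "hr-policy-2024": "Employees accrue 25 vacation days per year under the 2024 policy.",
    "it-handbook": "Password resets are handled through the internal service desk.",
    "travel-rules": "Business travel must be approved by a line manager in advance.",
}

prompt, sources = build_prompt(
    "How many vacation days do employees get?", documents, k=1
)
print(sources)  # ['hr-policy-2024']
```

The sketch also shows the dependency noted above: if `hr-policy-2024` were outdated, the model would be grounded in the wrong number and would cite it with confidence.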

Effective data management for AI generally follows a specific hierarchy of needs:

- Data Auditing: Identifying where data lives, who owns it, and what is actually useful.

- Cleaning and Deduplication: Removing redundant files and correcting formatting errors that confuse machine learning models.

- Structuring: Converting unstructured text into formats that AI can navigate more efficiently, such as vector databases.

- Governance: Establishing strict permissions so the AI does not accidentally leak sensitive payroll data to a junior employee.
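
Two of these steps, deduplication and governance, are easy to sketch in a few lines. The example below is a minimal illustration rather than a recommended implementation: the document dictionaries, role names, and the choice to hash normalized text are all assumptions made for the example.

```python
import hashlib


def fingerprint(text):
    """Hash case- and whitespace-normalized text so trivial variants collapse."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def deduplicate(docs):
    """Keep the first document seen for each fingerprint."""
    seen, unique = set(), []
    for doc in docs:
        fp = fingerprint(doc["text"])
        if fp not in seen:
            seen.add(fp)
            unique.append(doc)
    return unique


def visible_to(docs, user_roles):
    """Return only documents whose allowed roles overlap the user's roles."""
    return [d for d in docs if d["allowed_roles"] & user_roles]


# Invented example records; "allowed_roles" is a hypothetical permission field.
docs = [
    {"id": "payroll-q1", "text": "Salary bands for Q1", "allowed_roles": {"hr"}},
    {"id": "payroll-q1-copy", "text": "salary  bands for q1 ", "allowed_roles": {"hr"}},
    {"id": "faq", "text": "How to book a meeting room", "allowed_roles": {"hr", "staff"}},
]

clean = deduplicate(docs)
junior_view = visible_to(clean, {"staff"})
print([d["id"] for d in clean])        # ['payroll-q1', 'faq']
print([d["id"] for d in junior_view])  # ['faq']
```

Applying the permission filter before retrieval, rather than after generation, means the model never sees the payroll document when answering a junior employee.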

The Cost of Data Neglect

Ignoring the “data hygiene” phase of AI adoption carries tangible costs. When an AI is fed poor-quality data, the resulting errors can lead to incorrect financial forecasting, flawed customer service interactions, or compliance failures. Noisy data also costs more to process, because models consume more tokens and compute to find the signal within the noise.

Many firms are discovering that the “quick win” of deploying a chatbot is often overshadowed by the long-term necessity of a data cleanup project. This process is rarely glamorous; it involves the tedious work of sorting through legacy folders and defining metadata standards. Yet this foundational work is what makes effective data governance possible, ensuring that information is not only available but trustworthy.

| Feature | Legacy Approach | AI-Ready Approach |
|---|---|---|
| Primary Goal | Storage and Archival | Retrieval and Utility |
| Structure | Siloed Folders/Databases | Unified Data Fabric/Vector Store |
| Quality Control | Manual/Periodic | Continuous/Automated Validation |
| Access | User-driven Search | AI-driven Semantic Retrieval |
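
To give a sense of what continuous, automated validation could look like, the sketch below checks each record for required metadata and for an overdue review. The schema, the one-year threshold, and the sample records are illustrative assumptions, not a standard.

```python
from datetime import date

REQUIRED_FIELDS = ("id", "owner", "last_reviewed", "text")  # hypothetical schema
MAX_AGE_DAYS = 365  # illustrative freshness threshold


def validate(record, today):
    """Return a list of problems found in one record (empty means it passed)."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if not record.get(field)]
    reviewed = record.get("last_reviewed")
    if reviewed and (today - reviewed).days > MAX_AGE_DAYS:
        problems.append("stale: review overdue")
    return problems


today = date(2025, 1, 1)
records = [
    {"id": "price-list", "owner": "sales",
     "last_reviewed": date(2024, 11, 1), "text": "Current list prices."},
    {"id": "old-manual", "owner": "",
     "last_reviewed": date(2019, 5, 1), "text": "Legacy product manual."},
]

report = {r["id"]: validate(r, today) for r in records}
print(report["old-manual"])  # ['missing owner', 'stale: review overdue']
```

Run on a schedule or on every ingest, a check like this could keep stale or ownerless documents out of the retrieval index until someone reviews them.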

Navigating the Implementation Gap

The gap between ambition and execution often stems from a cultural disconnect. IT departments understand the technical requirements of data cleaning, but business leaders often view these tasks as “plumbing” that slows down the deployment of flashy AI tools. To bridge this, companies are increasingly adopting a “data-first” mindset, where the success of an AI project is measured by the quality of the dataset it utilizes, rather than the complexity of the model.

Stakeholders affected by this transition include not just CTOs, but also legal teams and compliance officers. The EU AI Act and the GDPR place a high premium on data lineage and transparency. If a company cannot explain how its AI arrived at a specific conclusion because the underlying data is a chaotic mess, it may face significant regulatory hurdles. This makes the “sorting” of data a legal necessity as much as a technical one.
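
One way to make lineage tangible is to log, for every AI answer, which documents were used and a hash of their content at that moment. The sketch below is an illustration only: the record layout is an assumption and is not a statement of what the EU AI Act or the GDPR require.

```python
import hashlib
import json
from datetime import datetime, timezone


def lineage_record(question, answer, sources):
    """Build an audit entry; `sources` maps document id to the text that was used."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "answer": answer,
        "sources": [
            {"id": doc_id, "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest()}
            for doc_id, text in sorted(sources.items())
        ],
    }


# Invented example values.
record = lineage_record(
    question="What is the notice period?",
    answer="30 days [contract-2024]",
    sources={"contract-2024": "Notice period: 30 days."},
)
log_line = json.dumps(record)  # one line per answer, ready for an append-only log
```

Because the hash pins the exact content, a later reviewer can tell whether the source document has changed since the answer was produced.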

For those starting the process, the recommendation is to avoid the “boil the ocean” approach. Instead of trying to clean every byte of company data at once, firms should identify a high-value use case, such as automating customer support for a specific product line, and clean only the data relevant to that domain. This creates a “virtuous cycle” where the AI provides immediate value, justifying the investment in further data curation.

The Role of Human Oversight

Despite the automation capabilities of modern tools, human expertise remains indispensable. “Human-in-the-loop” systems are required to validate the accuracy of the AI’s outputs and to refine the data labeling process. Experts in the field emphasize that AI should not be trusted to clean its own data without supervision, as the model may inadvertently delete “outlier” data that is actually critical for edge-case accuracy.
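
The point about outliers can be sketched as a cleaning step that flags unusual values for a person to review instead of deleting them. The example below uses a modified z-score based on the median absolute deviation; the 3.5 threshold is a common rule of thumb, and the order values are invented for illustration.

```python
from statistics import median


def flag_outliers(values, threshold=3.5):
    """Split values into (kept, review_queue) without discarding anything.

    Uses the modified z-score: 0.6745 * |x - median| / MAD.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread to measure against, so nothing is flagged.
        return list(values), []
    kept, review_queue = [], []
    for v in values:
        score = 0.6745 * abs(v - med) / mad
        (review_queue if score > threshold else kept).append(v)
    return kept, review_queue


order_values = [102, 98, 101, 97, 100, 99, 5000]
kept, review_queue = flag_outliers(order_values)
print(review_queue)  # [5000]
```

The 5000 entry might be a data-entry error or a genuine enterprise order. The code cannot tell the difference, which is why the decision goes to a human reviewer.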

As companies move forward, the focus will likely shift toward autonomous data agents—AI tools designed specifically to categorize, tag, and clean data in the background. However, the strategic direction—deciding what data is “truth” and what is “noise”—remains a human prerogative. The ability to curate a high-quality corporate knowledge base is becoming a core competency for the modern executive.

The next critical milestone for many organizations will be the transition to the ISO/IEC 42001 standard, which provides a framework for the management of AI systems. Adopting such standards will likely require a comprehensive audit of data pipelines and the implementation of rigorous quality controls to ensure AI reliability.

Disclaimer: This article is intended for informational purposes and does not constitute professional technical or legal advice regarding data compliance.

If you are navigating the challenges of corporate AI integration, we want to hear from you. How is your organization handling the transition from legacy data to AI-ready systems? Share your experiences in the comments below or share this piece with your network.