The invisible architecture of the artificial intelligence boom is not made of code, but of copper, coolant, and immense amounts of electricity. As generative AI models grow in complexity, the hardware required to train and run them—specifically high-performance GPUs—is generating heat levels that traditional data center cooling systems simply cannot manage. The industry is hitting a “thermal wall,” where air-cooled servers are no longer sufficient to prevent hardware throttling or failure.

To address this bottleneck, Telehouse Canada is modernizing its infrastructure by integrating direct-to-chip liquid cooling technology. This shift represents a fundamental change in data center design, moving away from the traditional method of blowing chilled air through aisles and toward a precision-engineered system that removes heat directly from the hottest components of the server. For the Canadian tech ecosystem, this upgrade is more than a technical patch; it is a prerequisite for hosting the next generation of AI workloads.

As a former software engineer, I have seen how software often outpaces the hardware it runs on. We are now in a period where the demand for compute power, driven by Large Language Models (LLMs) and complex neural networks, exceeds the physical capacity of existing facilities. By adopting liquid cooling, Telehouse is positioning its Canadian footprint to support the high-density racks that NVIDIA’s H100 and B200 GPUs require. The H100 in its SXM form factor is rated for up to 700 W of thermal design power (TDP), and the B200 goes higher still. Packed eight to a server and several servers to a rack, these chips produce more heat than traditional air-cooled setups can remove.
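To make the TDP point concrete, the sketch below multiplies out GPU heat per rack. It rests on several assumptions:

- 700 W per GPU, the maximum rating of the H100 SXM module.
- Eight GPUs per server, as on an HGX-style baseboard.
- A hypothetical number of servers per rack.

It counts GPU power only and ignores CPUs, memory, networking and power-supply losses, so real racks run hotter.

```python
# Back-of-the-envelope GPU heat per rack.
# Assumptions: 700 W per GPU (H100 SXM maximum TDP), 8 GPUs per server,
# and a hypothetical server count per rack. GPU power only; CPUs, memory,
# networking and PSU losses are ignored, so real totals are higher.

H100_SXM_TDP_W = 700
GPUS_PER_SERVER = 8


def gpu_heat_per_rack_kw(servers_per_rack: int,
                         gpus_per_server: int = GPUS_PER_SERVER,
                         tdp_w: float = H100_SXM_TDP_W) -> float:
    """Heat (kW) from GPUs alone, assuming every GPU runs at its full TDP."""
    if servers_per_rack < 0 or gpus_per_server < 0:
        raise ValueError("counts must be non-negative")
    return servers_per_rack * gpus_per_server * tdp_w / 1000


if __name__ == "__main__":
    for servers in (2, 4, 8):
        heat = gpu_heat_per_rack_kw(servers)
        print(f"{servers} servers/rack -> {heat:.1f} kW of GPU heat")
```

Under these assumptions, four servers already land near the top of the 15-30 kW range that the comparison table attributes to air cooling. That is before any non-GPU load is counted.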

The Physics of the Thermal Wall

For decades, data centers relied on Computer Room Air Conditioners (CRACs) to maintain a steady temperature. This involved cooling the entire room to ensure that the servers remained within operational limits. However, AI chips are fundamentally different from the CPUs used in standard web hosting. They operate at much higher power densities, concentrating heat in a very small surface area.

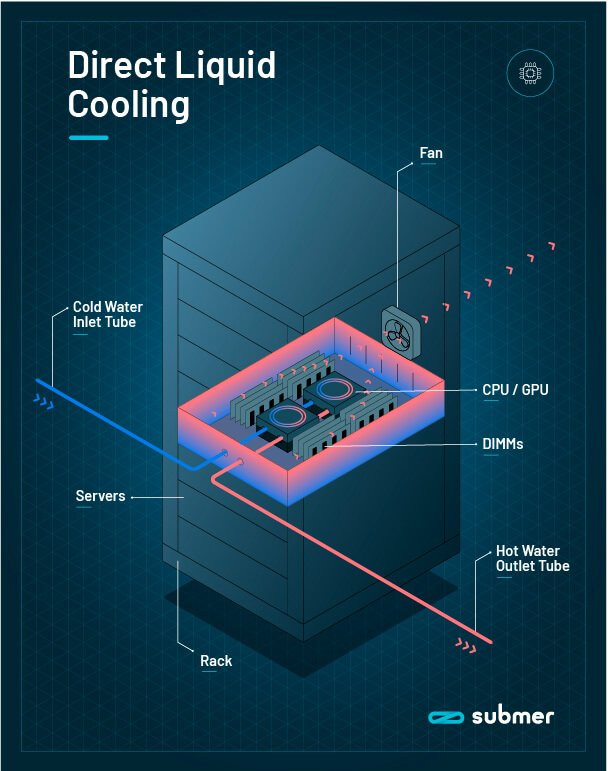

Direct-to-chip liquid cooling (DLC) solves this by circulating a coolant through a cold plate mounted directly on the processor. The coolant is usually water (often mixed with glycol) or a specialized dielectric fluid. Liquid absorbs heat far more efficiently than air. Air is a poor conductor of heat, while water conducts heat more than 20 times better and can carry roughly 3,500 times more heat per unit volume for the same temperature rise. That lets the coolant whisk heat away before it spreads into the rest of the chassis. The result is “high-density” deployment, where more compute power can be packed into a single rack without risking a thermal shutdown.
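The gap between air and liquid can be checked with the standard heat-balance relation Q = ṁ · c_p · ΔT. The sketch below is a rough idealization with these assumptions:

- Approximate room-temperature properties for air and water.
- A hypothetical 100 kW rack.
- A 10 K coolant temperature rise.

It ignores pumps, fans and heat-exchanger design entirely.

```python
# Idealized comparison of the coolant volume needed to carry away a heat
# load, using Q = m_dot * c_p * delta_T. Property values are approximate
# textbook figures near room temperature (assumptions, not site data).

AIR = {"cp": 1005.0, "density": 1.2}        # J/(kg*K), kg/m^3
WATER = {"cp": 4186.0, "density": 1000.0}   # J/(kg*K), kg/m^3


def volumetric_flow_m3_per_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Coolant volume per second needed to absorb heat_w watts
    while the coolant warms by delta_t_k kelvin."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    mass_flow_kg_s = heat_w / (fluid["cp"] * delta_t_k)
    return mass_flow_kg_s / fluid["density"]


if __name__ == "__main__":
    rack_heat_w = 100_000   # hypothetical 100 kW rack
    delta_t = 10.0          # assumed 10 K temperature rise

    air = volumetric_flow_m3_per_s(rack_heat_w, delta_t, AIR)
    water = volumetric_flow_m3_per_s(rack_heat_w, delta_t, WATER)

    print(f"air:   {air:.2f} m^3/s")
    print(f"water: {water * 1000:.2f} L/s")
    print(f"air needs ~{air / water:,.0f}x the volume of water")
```

The last line reflects the ratio of volumetric heat capacities. A few litres of water per second does the work of several cubic metres of air per second.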

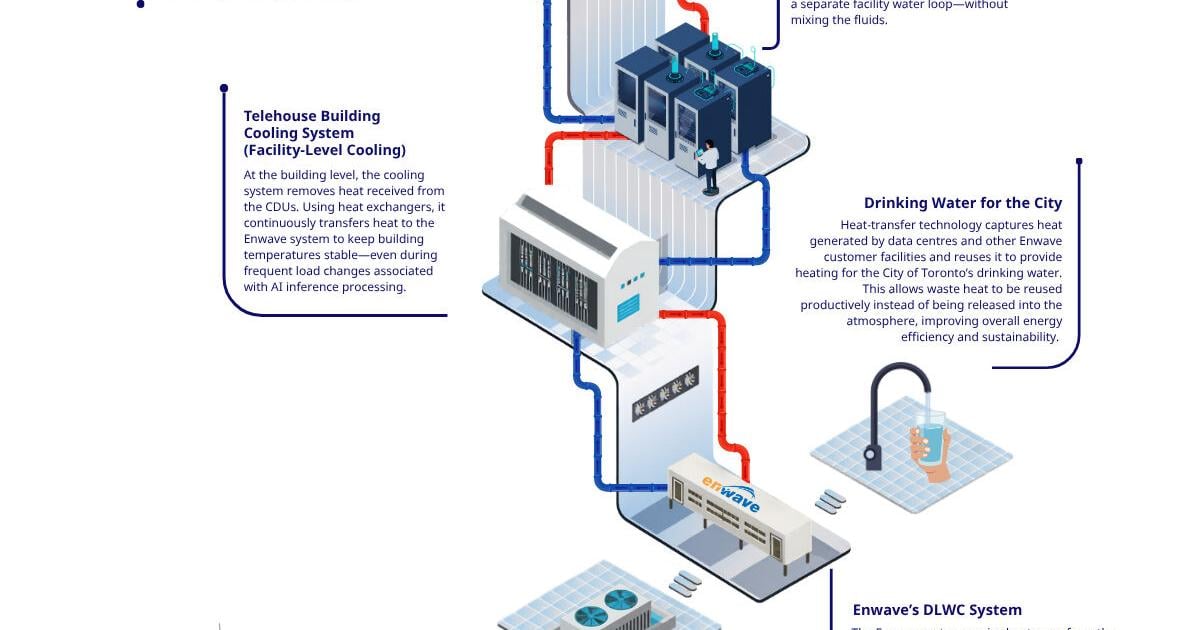

The transition to liquid cooling is not without its challenges. It requires a significant overhaul of a data center’s plumbing, including the installation of Coolant Distribution Units (CDUs) and leak-detection systems. For Telehouse Canada, this modernization means integrating these systems into existing facilities. The goal is infrastructure that can scale as more clients move their AI workloads into those facilities.

Comparing Cooling Architectures

The move toward liquid cooling is a response to the diminishing returns of air-based systems. As power density per rack increases, so does the volume of air needed to carry the heat away. The fan energy required to move that air grows much faster than the airflow itself, which makes high-density air cooling expensive and inefficient.
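One way to see these diminishing returns is the fan affinity laws. For a given fan in a given system, airflow scales with fan speed while fan power scales with the cube of speed, so power grows roughly with the cube of airflow.

The sketch below applies that idealized cube law. The 1 kW baseline is a hypothetical figure, and real deployments that add fans or change ducting will not follow the curve exactly.

```python
# Idealized fan affinity law: for the same fan and system,
# power scales with the cube of airflow (P2 = P1 * (Q2 / Q1) ** 3).


def fan_power_w(base_power_w: float, base_flow: float, new_flow: float) -> float:
    """Estimate fan power after scaling airflow from base_flow to new_flow."""
    if base_flow <= 0 or new_flow < 0:
        raise ValueError("base_flow must be positive and new_flow non-negative")
    return base_power_w * (new_flow / base_flow) ** 3


if __name__ == "__main__":
    baseline_w = 1_000.0  # hypothetical fan power at the current airflow
    for factor in (1.5, 2.0, 3.0):
        kw = fan_power_w(baseline_w, 1.0, factor) / 1000
        print(f"{factor:.1f}x airflow -> {kw:.2f} kW of fan power")
```

Required airflow rises with a rack’s heat output. Under this model, doubling a rack’s heat load costs about eight times the fan power.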

| Feature | Traditional Air Cooling | Direct-to-Chip Liquid Cooling (DLC) |
|---|---|---|
| Heat Removal Capacity | Low to Medium | Very High |
| Energy Efficiency | Lower (high fan power) | Higher (reduced fan usage) |
| Rack Density | Limited (approx. 15-30 kW) | High (100+ kW possible) |
| Infrastructure Cost | Standard/Lower | Higher initial CAPEX |

Impact on the Canadian AI Ecosystem

Canada has long been a global hub for AI research, anchored by institutions in Toronto and Montreal. However, there has historically been a gap between research and commercial deployment due to a lack of specialized “AI-ready” infrastructure. When a startup develops a cutting-edge model, they often have to rely on hyperscalers in the U.S., which can lead to data sovereignty concerns and increased latency.

By providing the physical infrastructure to support direct-to-chip cooling, Telehouse Canada enables local enterprises and AI startups to deploy their own high-density hardware in Canada. This is critical for industries with strict regulatory requirements, such as finance and healthcare, where some data must remain within Canadian borders. The ability to run high-performance computing (HPC) clusters within Canada reduces reliance on foreign cloud providers and fosters a more autonomous domestic tech economy.

The stakeholders benefiting from this move include:

- Enterprise Clients: Who can now deploy private AI clouds with GPU clusters that would have been too hot for previous data center specs.

- Cloud Service Providers: Who can offer higher-performance instances to their customers.

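- Sustainability Officers: Because liquid cooling typically lowers a facility’s Power Usage Effectiveness (PUE), meaning less energy is spent on cooling and other overhead for each kilowatt-hour delivered to IT equipment. This can reduce the facility’s overall carbon footprint.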
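PUE is defined as total facility energy divided by the energy delivered to IT equipment, so a value of 1.0 would mean zero overhead. The sketch below computes it for two hypothetical annual energy breakdowns. The figures are illustrative only: they assume liquid cooling halves the cooling overhead, which is not a measured Telehouse result.

```python
from dataclasses import dataclass


@dataclass
class EnergyBreakdown:
    """Annual facility energy in kWh (the example figures are hypothetical)."""
    it_kwh: float        # servers, storage, networking
    cooling_kwh: float   # chillers, CRAC units, CDUs, pumps, facility fans
    other_kwh: float     # lighting, power-distribution losses, etc.

    @property
    def total_kwh(self) -> float:
        return self.it_kwh + self.cooling_kwh + self.other_kwh


def pue(e: EnergyBreakdown) -> float:
    """Power Usage Effectiveness = total facility energy / IT energy."""
    if e.it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return e.total_kwh / e.it_kwh


if __name__ == "__main__":
    air_cooled = EnergyBreakdown(it_kwh=1_000_000, cooling_kwh=400_000, other_kwh=100_000)
    liquid_cooled = EnergyBreakdown(it_kwh=1_000_000, cooling_kwh=200_000, other_kwh=100_000)
    print(f"air-cooled PUE:    {pue(air_cooled):.2f}")
    print(f"liquid-cooled PUE: {pue(liquid_cooled):.2f}")
```

PUE is a ratio, which brings two caveats:

- Removing server fans lowers IT energy as well as overhead.
- The metric says nothing about the carbon intensity of the grid supplying the site.

A lower PUE therefore shrinks the carbon footprint only when total energy and its sources are considered too.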

The Sustainability Paradox

While liquid cooling is more energy-efficient in terms of electricity used for fans and air conditioning, it introduces a new variable: water consumption. The industry is currently grappling with the sustainability of “water-cooled” AI. Many direct-to-chip systems use closed-loop cycles to minimize water loss, but the heat eventually has to be rejected into the environment, often via cooling towers that evaporate water.

Telehouse and other global operators are under increasing pressure to report their Water Usage Effectiveness (WUE) alongside their PUE. The goal is to move toward “waterless” or “closed-loop” cooling where the heat is exchanged with the outside air or recycled for district heating, though these implementations are more complex and costly. As Telehouse modernizes, the balance between compute density and environmental stewardship will remain a central point of scrutiny for regulators and the public.
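WUE, as defined by The Green Grid, is annual site water usage in litres divided by IT equipment energy in kWh. The sketch below compares two hypothetical heat-rejection designs serving the same IT load. The water volumes are illustrative placeholders, not data from Telehouse or any specific site. They are chosen only to show that evaporative towers and closed-loop dry coolers land at opposite ends of the scale.

```python
def wue_l_per_kwh(site_water_liters: float, it_energy_kwh: float) -> float:
    """Water Usage Effectiveness: litres of site water per kWh of IT energy."""
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if site_water_liters < 0:
        raise ValueError("water usage cannot be negative")
    return site_water_liters / it_energy_kwh


if __name__ == "__main__":
    it_energy_kwh = 5_000_000  # hypothetical annual IT load
    # Hypothetical annual water use for two heat-rejection designs.
    scenarios = {
        "evaporative cooling tower": 9_000_000,
        "closed-loop dry cooler": 500_000,
    }
    for name, liters in scenarios.items():
        print(f"{name}: WUE = {wue_l_per_kwh(liters, it_energy_kwh):.2f} L/kWh")
```

The trade-off appears when WUE is read alongside PUE. A dry cooler that cuts water use generally needs more fan energy on hot days, which can push PUE upward.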

The broader trend suggests that we are moving toward a hybrid model. Legacy servers will continue to use air, while the “AI zones” of the data center will be liquid-cooled. This zoning lets operators maximize efficiency without having to retrofit plumbing to every rack in a facility.
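The zoning idea can be sketched as a simple placement rule. Racks whose expected load exceeds what air can handle go to the liquid-cooled zone, and everything else stays on air.

The 30 kW cutoff below is an assumption taken from the upper end of the air-cooling range in the comparison table. The rack names and loads are hypothetical. A real placement decision would also weigh floor space, CDU capacity and redundancy.

```python
from dataclasses import dataclass
from typing import Dict, List

# Assumed cutoff: upper end of the 15-30 kW air-cooling range in the table.
AIR_COOLING_LIMIT_KW = 30.0


@dataclass(frozen=True)
class Rack:
    name: str        # hypothetical rack identifier
    power_kw: float  # expected sustained load for the rack


def assign_zones(racks: List[Rack],
                 limit_kw: float = AIR_COOLING_LIMIT_KW) -> Dict[str, List[str]]:
    """Place each rack in the 'air' or 'liquid' zone based on its power draw."""
    zones: Dict[str, List[str]] = {"air": [], "liquid": []}
    for rack in racks:
        zone = "liquid" if rack.power_kw > limit_kw else "air"
        zones[zone].append(rack.name)
    return zones


if __name__ == "__main__":
    # Hypothetical mix of legacy and AI racks.
    fleet = [
        Rack("web-01", 8.0),
        Rack("storage-01", 12.0),
        Rack("gpu-train-01", 80.0),
        Rack("gpu-train-02", 120.0),
    ]
    print(assign_zones(fleet))
```

Because only racks above the cutoff need plumbing, the liquid-cooled footprint grows with the AI fleet rather than with the whole facility.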

The next phase of this infrastructure rollout will likely involve the integration of more advanced liquid cooling standards as the industry converges on a unified specification for “AI-ready” racks. Further updates on Telehouse Canada’s capacity expansions and specific site certifications are expected in their upcoming quarterly infrastructure reports.

Do you think local AI infrastructure is the key to data sovereignty, or will the hyperscalers always win on scale? Share your thoughts in the comments below.