For the better part of a decade, our interaction with artificial intelligence has been defined by the “box”—the chat window, the search bar, the rigid boundary between the user’s input and the machine’s output. Even as LLMs became more sophisticated, the interface remained a transactional exchange of text and prompts. But Google is currently attempting to dismantle that boundary, shifting Gemini from a tool you use to a presence you interact with.

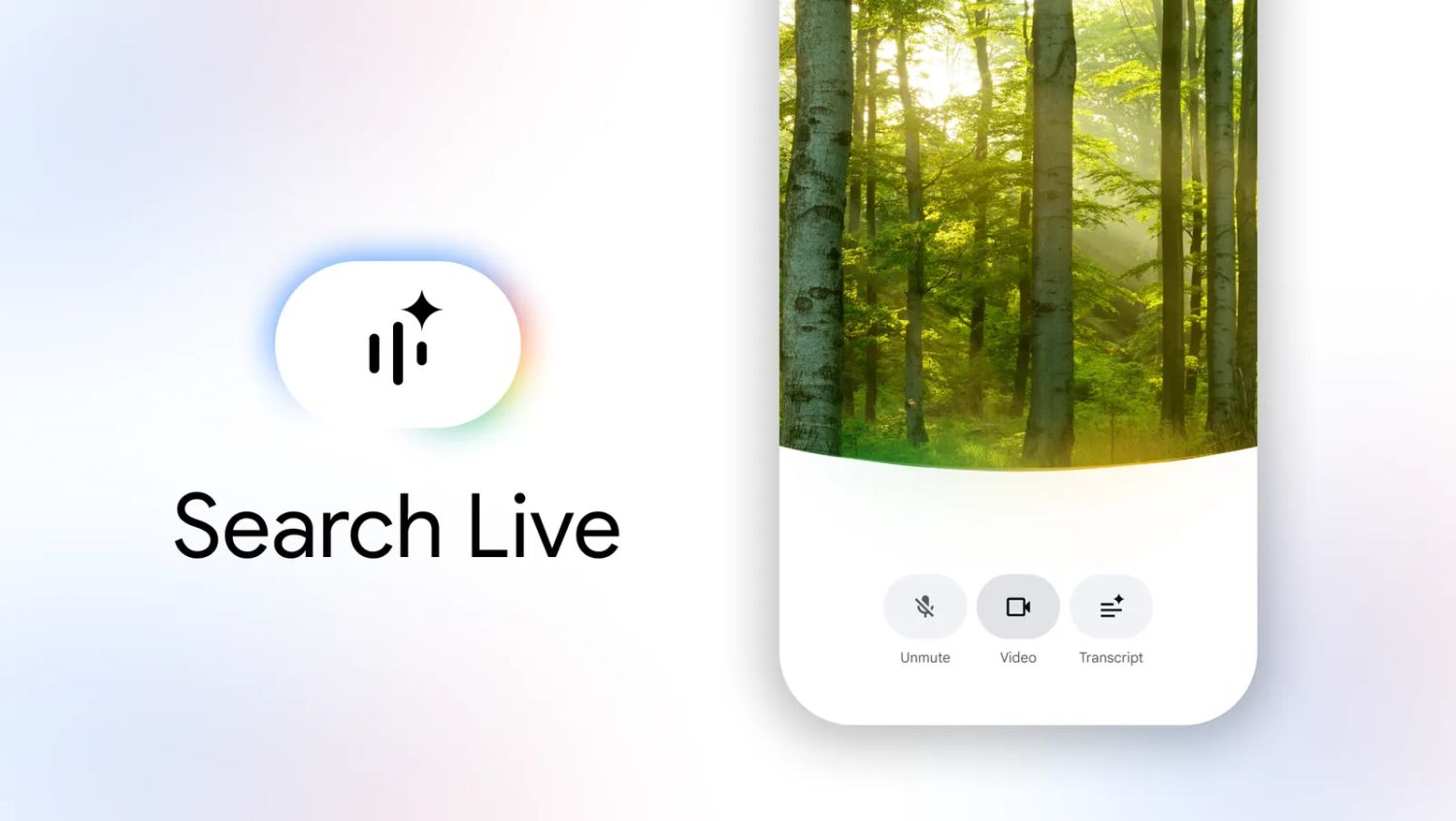

The latest evolution in this shift is a significant UI overhaul for Gemini Live on Android. While the core functionality of the multimodal AI remains the same, the way it occupies the screen has changed. Google is rolling out a new interface that prioritizes visual space and removes the structural friction of previous versions, signaling a move toward a more immersive, “ambient” AI experience.

This update, appearing in version 17.20 of the Google app, isn’t just a cosmetic refresh. By expanding the real estate available for camera streams and conversation logs, Google is leaning into the “Live” aspect of the product. The goal is to make the AI feel less like a software application and more like a lens through which the user perceives and interacts with the world in real time.

Breaking the Digital Divide: From Split-Screen to Full-Canvas

Previously, Gemini Live used a design language that clearly separated the “human” space from the “AI” space. Users will remember a distinct curved line that divided the functional controls from the content area, splitting the screen into two zones. While visually polished, this design was a constant reminder of the interface’s limitations: it was a partitioned experience.

The new design effectively erases this boundary. The curved separator is gone, allowing both the camera feed and the conversation history to expand and occupy the majority of the display. When a user shares their camera—a key feature of Gemini’s multimodal capabilities—the video stream can now stretch to fill the screen, providing a much clearer view of the environment the AI is analyzing.

This shift mirrors a broader trend in mobile design toward “edge-to-edge” utility. By removing the structural clutter, Google is reducing the cognitive load on the user. The interface now recedes into the background, leaving the focus on the multimodal input, whether that is a live video of a broken appliance the AI is helping you fix or a complex document being discussed in real time.

A New Visual Vocabulary of Color and Motion

Because the rigid structural lines have been removed, Google has introduced a more fluid system of visual cues to tell the user exactly what the AI is doing. Rather than relying on text labels or static icons, the interface now uses a dynamic animation at the bottom of the display that changes in color and scale based on the state of the conversation.

This “pulse” serves as the heartbeat of the interaction:

- Blue: When the user is speaking, the animation glows blue, confirming that the system is actively listening.

- Yellow and Green: When Gemini responds, the animation transitions into a pulsating blend of yellow and green, signaling the AI’s processing and output phase.

- Blue Frame: When the camera is activated and sharing a stream, a blue border frames the entire display, providing a persistent visual reminder that the AI can “see” the environment.
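
The cue system described above amounts to a small mapping from conversation state to visual output. The following is a minimal sketch in Python, for illustration only. The names `Turn`, `Cues`, and `cues_for` are hypothetical and do not come from Google's code, and the idle state is an assumption, since the article does not say what the animation shows when nobody is speaking.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Turn(Enum):
    """Who currently holds the conversational turn (hypothetical names)."""
    IDLE = auto()               # assumption: not described in the article
    USER_SPEAKING = auto()
    GEMINI_RESPONDING = auto()


@dataclass(frozen=True)
class Cues:
    pulse_colors: tuple   # colors of the animation at the bottom of the display
    camera_frame: bool    # True when the blue border frames the display


def cues_for(turn: Turn, camera_sharing: bool) -> Cues:
    """Map the conversation state to the visual cues the article describes."""
    if turn is Turn.USER_SPEAKING:
        colors = ("blue",)             # the system is listening
    elif turn is Turn.GEMINI_RESPONDING:
        colors = ("yellow", "green")   # Gemini is responding
    else:
        colors = ()                    # idle appearance is unknown, left empty
    # The blue frame depends only on camera sharing, not on who is speaking.
    return Cues(pulse_colors=colors, camera_frame=camera_sharing)
```

Modeling the camera frame as a separate flag reflects the description above: the border persists for as long as the stream is shared, while the bottom animation changes with each turn.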

The control buttons have also been updated to a “floating” design, ensuring they remain accessible without obstructing the primary content. This combination of ambient light and floating elements creates a sense of depth, moving away from the flat, layered look of traditional Android apps.

The Strategic Pivot Toward AI Agents

To understand why a UI change matters, one has to look at the trajectory of the “AI Agent.” For the last year, the industry has been obsessed with the “chatbot”—a system that answers questions. The next frontier is the “agent”—a system that perceives, reasons and acts. For an AI to act as an agent, it must be able to see what the user sees without the user feeling like they are managing a complex piece of software.

By maximizing the camera stream’s visibility and simplifying the interaction cues, Google is preparing users for a future where Gemini isn’t just a voice in a phone, but a visual collaborator. This update aligns with the broader goals seen in Project Astra, Google’s vision for a universal AI assistant that can remember where you left your keys or explain a piece of code by simply looking at a monitor.

| Feature | Previous Gemini Live UI | New Gemini Live UI (v17.20) |
|---|---|---|
| Screen Layout | Split by a curved separator line | Unified, expansive canvas |
| Camera Integration | Confined to a specific zone | Can occupy the full display |
| Status Indicators | Functional area separation | Color-coded bottom animations |
| Control Elements | Fixed functional areas | Floating action buttons |

How to Access the Update

The new interface is currently being rolled out in stages to Android users. Because Google typically uses server-side switches for these updates, some users may see the changes immediately after updating their app, while others may have to wait.

To check for the update, users should ensure their Google app is updated to version 17.20 or higher via the Google Play Store. Once the app is updated and the staged rollout has reached the user, the new layout should appear automatically upon entering Gemini Live mode.
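
The two conditions above (a minimum app version and a server-side switch) can be sketched as a simple check. This is an illustrative Python sketch, not Google's rollout logic: `parse_version`, `new_layout_expected`, and the `server_flag_enabled` parameter are hypothetical names, and the assumption that version strings are dot-separated with leading numeric components is ours.

```python
def parse_version(text: str) -> tuple:
    """Return the leading numeric components of a dotted version string.

    Parsing stops at the first non-numeric component, so any trailing
    build suffix (an assumption about the format) is ignored.
    """
    parts = []
    for part in text.split("."):
        if not part.isdigit():
            break
        parts.append(int(part))
    return tuple(parts)


# Minimum Google app version named in the article.
MIN_VERSION = parse_version("17.20")


def new_layout_expected(installed_version: str, server_flag_enabled: bool) -> bool:
    """True only if the app is new enough AND the staged rollout has reached the user.

    `server_flag_enabled` stands in for the server-side switch, which users
    cannot inspect directly; it is a hypothetical input.
    """
    return parse_version(installed_version) >= MIN_VERSION and server_flag_enabled
```

Comparing the components as integers matters here: "17.9" is older than "17.20" numerically, even though a plain string comparison would rank it higher.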

As Google continues to refine the synergy between its Gemini models and the Android OS, the focus is clearly shifting toward invisibility. The ultimate goal is an interface that disappears entirely, leaving only the conversation and the shared visual experience.

The next major milestone for the Gemini ecosystem will likely be the deeper integration of “Remy,” the rumored AI model designed to transform Gemini from a conversationalist into a proactive agent capable of executing complex tasks across multiple apps. We expect more details on these agentic capabilities in upcoming official Google developer briefings.
