Google’s Threat Intelligence Group (GTIG) recently intercepted a sophisticated effort by hackers to orchestrate a “mass vulnerability exploitation operation,” signaling a dangerous escalation in the use of artificial intelligence to automate cyberattacks. According to a report released Monday, the company has “high confidence” that the threat actors used an AI model to identify and exploit a zero-day vulnerability (a software flaw unknown to its developers) in order to bypass two-factor authentication (2FA).

The discovery is a sobering reminder that the tools intended to revolutionize productivity are being mirrored by a shadow industry of AI-driven offense. While Google believes its own Gemini model was not used in the attack, the incident underscores a systemic vulnerability: the democratization of high-level coding and analysis tools allows criminal actors to find “holes” in software at a speed and scale that human security teams struggle to match.

For years, discovering a zero-day vulnerability required painstaking manual work by elite researchers or state-sponsored hackers. By integrating AI into this process, attackers can now scan vast codebases in a fraction of the time, surfacing patterns of weakness that could take a human analyst weeks to uncover. In this instance, the target was 2FA, a key line of defense for millions of corporate and government accounts worldwide.

The Mechanics of an AI-Driven Breach

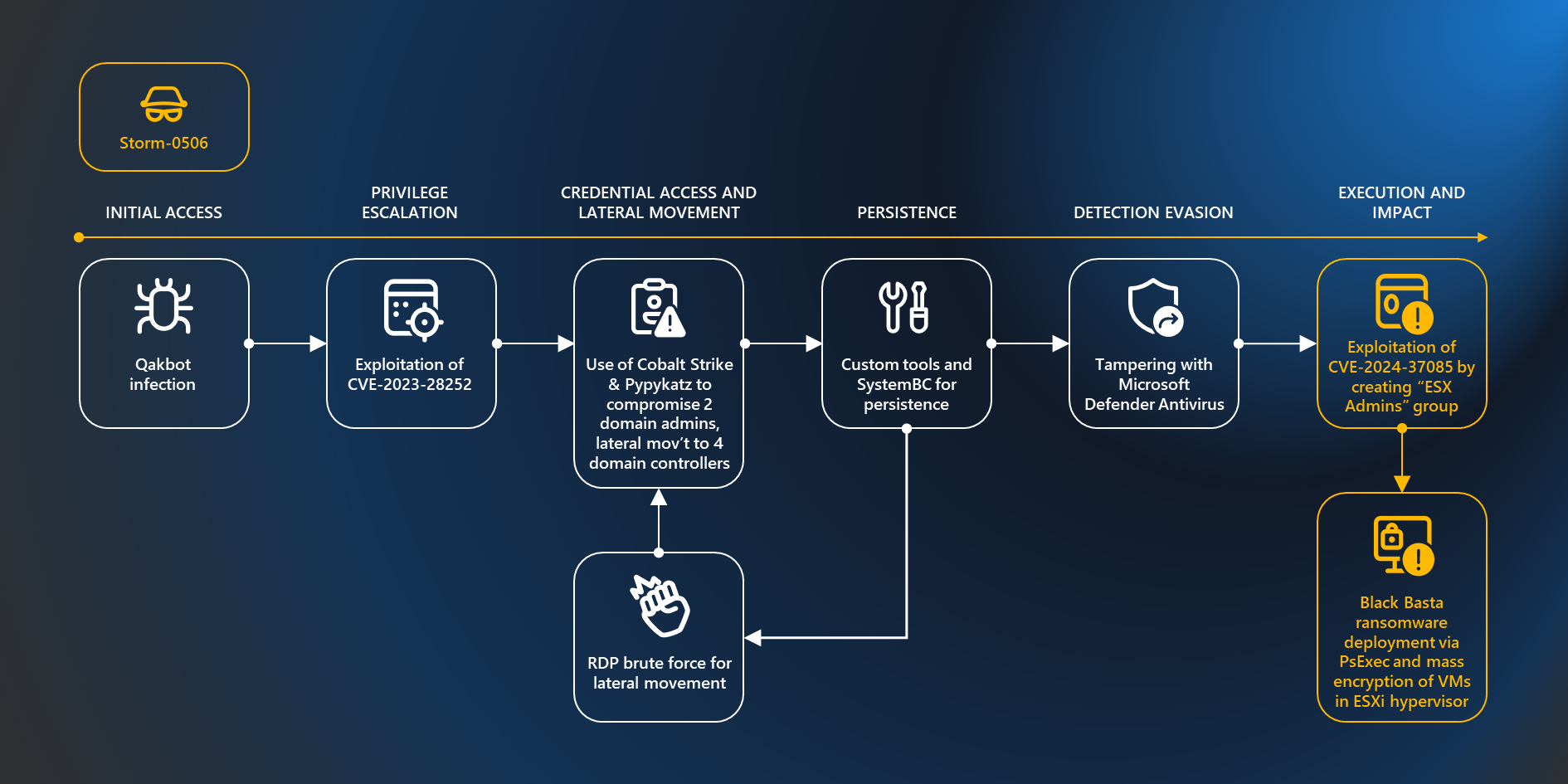

The core of the threat lies in the ability of AI to not only find a flaw but to help “weaponize” it. In the case thwarted by Google, the hackers weren’t just looking for a bug; they were using AI to plan a “mass exploitation event.” This suggests a shift from targeted espionage—where a hacker hits one high-value target—to a “spray and pray” model, where a single AI-discovered flaw is used to compromise thousands of systems simultaneously.

Bypassing two-factor authentication is particularly serious. 2FA was designed to ensure that even if a password is stolen, the account remains secure. A zero-day that renders this secondary check useless would effectively unlock the front door of any organization using the affected software. Google’s “proactive counter discovery” stopped the operation before the exploit could be deployed, but the report suggests the infrastructure for such an attack is already being built.
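2FA comes in several forms, and the report does not say which mechanism the zero-day targeted. One widely used form is the time-based one-time password (TOTP, RFC 6238) produced by authenticator apps. The minimal Python sketch below, which assumes TOTP purely for illustration, shows how a server checks that second factor: both sides derive a short code from a shared secret and the current 30-second time window, so a stolen password alone is not enough. The function names `hotp`, `totp`, and `verify_totp` are hypothetical. A flaw that lets an attacker skip a check like `verify_totp` on the server would defeat it no matter how strong the codes themselves are.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    # The moving counter is encoded as an 8-byte big-endian integer.
    msg = struct.pack(">Q", counter)
    digest = hmac.new(secret, msg, hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % (10 ** digits)).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over floor(time / step)."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify_totp(secret: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, digits: int = 6, window: int = 1) -> bool:
    """Hypothetical server-side check: accept codes from adjacent time steps
    to tolerate small clock drift between the server and the user's device."""
    now = time.time() if at is None else at
    counter = int(now // step)
    for drift in range(-window, window + 1):
        candidate_counter = counter + drift
        if candidate_counter < 0:
            continue  # counters are unsigned; skip steps before the epoch
        candidate = hotp(secret, candidate_counter, digits)
        # Constant-time comparison avoids leaking digits through timing.
        if hmac.compare_digest(candidate, submitted):
            return True
    return False
```

With the RFC 4226 test secret `b"12345678901234567890"`, `hotp(secret, 1)` returns `"287082"`, and `verify_totp(secret, "287082", at=59)` accepts that code because time 59 falls in step 1.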

The speed of this evolution has created a volatile environment for cybersecurity firms. Despite billions of dollars in investment toward AI-driven defenses, the offensive side of the equation is moving faster. When an AI can generate exploit code in real time, the traditional “patch-and-update” cycle becomes a race the defenders are often losing.

A Geopolitical Arms Race in Silicon

The report specifically points to a heightened interest in AI-enabled vulnerability discovery among groups linked to China and North Korea. This is not surprising given the strategic goals of these nations, which have long used cyber operations as a primary tool for intelligence gathering and financial gain. For these state-sponsored actors, AI is a force multiplier, allowing them to conduct operations with a smaller footprint and greater efficiency.

This trend has already triggered alarms at the highest levels of government. The threat of AI-driven cyber warfare has led to a series of closed-door meetings at the White House involving technology executives and national security advisors. The central tension is one of “dual use”: the same AI capabilities that help a developer find and fix a bug can be turned toward finding and exploiting one.

Industry leaders are now grappling with the ethics of model release. Some companies have delayed the rollout of advanced models or restricted access to vetted partners, including firms such as Microsoft, Apple, and CrowdStrike, to keep the tools out of the hands of adversaries who could use them to attack decades-old legacy software still running in critical infrastructure.

| Feature | Traditional Exploitation | AI-Enabled Exploitation |
|---|---|---|
| Discovery Speed | Weeks to months of manual auditing | Near-instantaneous pattern scanning |
| Scale | Targeted, high-value attacks | Mass, automated exploitation events |
| Skill Requirement | Elite, specialized human expertise | Lowered barrier via AI-assisted coding |
| Detection | Based on known signatures/patterns | Polymorphic code that evades signatures |

The Defensive Pivot: AI vs. AI

As the offensive landscape shifts, the industry is moving toward a “defensive AI” posture. This involves using AI not just to find bugs, but to predict where they will occur before a human or a malicious bot even thinks to look. Google’s success in thwarting this specific attack suggests that its internal threat intelligence may already be putting these predictive capabilities to work.

However, the risk remains that the “AI gap” will widen. Small and mid-sized organizations, which lack the resources of a Google or a Microsoft, are increasingly vulnerable. They rely on third-party software that may contain vulnerabilities that AI-equipped attackers can find but that the software vendors, lacking advanced AI auditing tools, cannot see.

The current strategy among top-tier cybersecurity teams is to implement “Zero Trust” architectures, in which no user or device is trusted by default, even after passing a 2FA check. By requiring continuous verification, organizations can limit the damage even if a zero-day vulnerability is exploited.

The next critical checkpoint for the industry will be the upcoming review of AI safety guidelines by international regulatory bodies, as governments seek to establish “guardrails” that prevent AI models from generating malicious code. Whether these regulations can keep pace with the rapid deployment of open-source models remains the central question for global security.
