The boundary between human conversation and machine response has shifted. With the introduction of GPT-4o, OpenAI has moved beyond the era of the chatbot and into the era of the digital companion, creating a system that can see, hear, and speak in real time with emotional nuance.

Unlike its predecessors, which relied on a chain of separate models to process different types of data, GPT-4o (the “o” stands for “omni”) is a single neural network trained across text, vision, and audio. This architectural shift reduces the lag and loss of information that previously plagued AI voice interactions, allowing the model to perceive tone, background noise, and visual cues simultaneously.

The result is a tool that doesn’t just process commands but interprets the environment. Whether it is helping a student solve a math problem by looking through a smartphone camera or translating a conversation between two people in different languages with very little delay, GPT-4o’s capabilities represent a fundamental change in how humans interact with artificial intelligence.

The End of the AI Pipeline

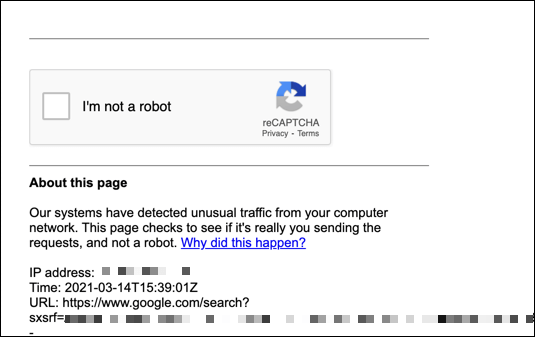

To understand why GPT-4o feels more human, one must understand the “pipeline” it replaced. Previously, when a user spoke to an AI, the system performed three distinct steps: it converted speech to text, processed that text to generate a response, and then converted that text back into synthetic speech. This process stripped away the emotional context of the user’s voice and introduced a noticeable delay.
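The three-step pipeline described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not OpenAI’s implementation: the `Utterance` type and the three stage functions are hypothetical stand-ins, and each stage is a stub rather than a real model. The point is only to show where the speaker’s tone drops out.

```python
from dataclasses import dataclass


@dataclass
class Utterance:
    """A spoken message: what was said and how it was said (hypothetical type)."""
    words: str
    tone: str  # e.g. "frustrated", "excited", "neutral"


def speech_to_text(utterance: Utterance) -> str:
    # Stage 1 (stub): transcription keeps the words and discards the tone.
    return utterance.words


def generate_reply(text: str) -> str:
    # Stage 2 (stub): a text-only model sees just the transcript.
    return f"I heard: {text}"


def text_to_speech(reply: str) -> Utterance:
    # Stage 3 (stub): synthesis speaks the reply in a fixed, neutral tone.
    return Utterance(words=reply, tone="neutral")


def cascaded_pipeline(utterance: Utterance) -> Utterance:
    # The three stages run one after another, so their delays add up
    # and anything dropped in stage 1 is unavailable to later stages.
    transcript = speech_to_text(utterance)
    reply_text = generate_reply(transcript)
    return text_to_speech(reply_text)


if __name__ == "__main__":
    spoken = Utterance(words="my printer is broken again", tone="frustrated")
    reply = cascaded_pipeline(spoken)
    print(reply.words)  # I heard: my printer is broken again
    print(reply.tone)   # neutral
```

In this sketch the reply comes back in a neutral tone no matter how the user sounded, because the tone never passes the first stage. A single model that takes audio in and produces audio out has no such hand-off where that information can be lost.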

GPT-4o operates as a native multimodal model. By processing audio and vision directly, it can detect whether a user sounds frustrated, excited, or hesitant. According to OpenAI’s official announcement, the model can respond to audio inputs in as little as 232 milliseconds, with an average of 320 milliseconds, which is similar to human response times in a natural conversation.

This capability allows for “interruptibility,” a key feature of human speech. Users no longer have to wait for the AI to finish its entire sentence before correcting it or asking a follow-up question. The AI can hear the interruption in real time and pivot its response instantly, mirroring the fluid dynamics of a face-to-face meeting.

Expanding the Senses: Vision and Real-Time Analysis

The integration of vision into the Omni model transforms the AI from a digital assistant into a visual observer. By utilizing a device’s camera, GPT-4o can “see” the world and provide context-aware assistance. This has immediate implications for accessibility, education, and technical support.

In practical terms, a user can point their camera at a piece of broken equipment, and the AI can identify the part and talk the user through the repair process. In an educational setting, the model can look at a handwritten physics equation and, rather than simply providing the answer, guide the student through the logic of the problem step by step, noticing where the student is pausing or struggling.

Beyond utility, the model exhibits a level of emotional intelligence previously unseen in large language models. It can adjust its own voice to be more empathetic, playful, or professional depending on the context of the interaction. This suggests a move toward AI that provides not just information, but a tailored social experience.

Democratizing High-End Intelligence

One of the most significant shifts accompanying the launch of GPT-4o is the change in accessibility. OpenAI has expanded the availability of its most advanced reasoning capabilities to users on the free tier, though with lower message limits than those paying for a subscription.

This move effectively brings GPT-4-level intelligence to millions of users who previously relied on the more limited GPT-3.5. By offering the Omni model’s speed and multimodal features for free, OpenAI is accelerating the integration of AI into daily workflows, from drafting emails to real-time language translation.

| Feature | GPT-4 (Previous) | GPT-4o (Omni) |
|---|---|---|
| Processing Method | Multi-step Pipeline | Single Neural Network |
| Response Latency | Noticeable Lag | As low as 232 ms (Human-like) |
| Input Types | Text/Image (Separate) | Text/Audio/Vision (Unified) |
| Voice Nuance | Synthetic/Robotic | Emotional/Dynamic |

The Implications of a “Her”-like Interface

The capabilities of GPT-4o inevitably draw comparisons to the film *Her*, where an AI becomes an emotionally resonant partner. While the utility is clear, the shift toward a more “human” AI raises critical questions about privacy and psychological dependency. The ability of a model to analyze a user’s emotional state through their voice and facial expressions creates a new frontier of data collection.

The seamlessness of the interaction also increases the risk of “anthropomorphism,” where users attribute human consciousness or intent to a mathematical model. As AI becomes more convincing in its empathy, the distinction between a tool and a companion continues to blur.

Industry experts and regulators are now focusing on how these multimodal systems handle sensitive data, especially when the AI has constant access to a user’s camera and microphone. The challenge for developers will be balancing the “magic” of real-time interaction with rigorous safety guardrails to prevent manipulation or privacy breaches.

For those seeking the latest official documentation on safety and deployment, the OpenAI Safety page provides updates on how the company is addressing the risks associated with multimodal models.

The rollout of the full Voice Mode is expected to occur in stages, with Plus users receiving the update first before it expands to the broader public. This phased approach allows for monitoring of the model’s behavior in real-world, high-stakes conversational environments.
