The first few seconds of OpenAI’s latest demonstration are designed to unsettle the viewer. A woman walks through a neon-lit Tokyo street, the reflections of rain-slicked pavement shimmering beneath her feet with a precision that feels tactile. There is no jitter, no obvious “AI hallucination” warping the edges of the frame, and no sudden jump in logic. It is a seamless, hyper-realistic sequence that suggests the boundary between generated imagery and captured reality has finally collapsed.

This is Sora, OpenAI’s new text-to-video model, and its debut has sent a shockwave through the creative industries. Unlike previous iterations of generative video, which often resembled fever dreams full of melting limbs and shifting backgrounds, Sora produces videos up to a minute long that maintain a surprising level of temporal consistency. For those of us who have tracked the trajectory of AI from text generation to DALL-E’s images, Sora represents a leap in scale and sophistication that transforms the tool from a novelty into a potential industry disruptor.

But beneath the breathtaking visuals lies a complex set of technical achievements and glaring limitations. While the model can simulate complex camera movements and intricate character expressions, it still struggles with the fundamental laws of physics. In some demos, a person takes a bite of a cookie, but the cookie remains whole. In others, glass shatters without a clear cause. These “glitches” are the current frontier of AI research: the struggle to move from visual mimicry to a genuine understanding of how the physical world operates.

The Architecture of Motion: How Sora Works

To understand why Sora feels different from its predecessors, one must look at how it handles data. Most previous video AI models treated video as a sequence of images, essentially “guessing” the next frame. Sora takes a different approach: it uses a transformer architecture, the same foundational technology behind GPT-4, and represents video as a collection of “patches.”

OpenAI describes these patches as the visual equivalent of tokens in a text model. By breaking a video down into these small, spatio-temporal cubes, Sora can analyze and generate motion across a wider window of time. This allows the model to maintain “object permanence,” meaning a character can move out of the frame and return without their clothes changing color or their face morphing into someone else. This consistency is what allows for the creation of complex scenes with multiple characters and specific types of motion.
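To make the idea of spatio-temporal patches concrete, here is a minimal sketch of how a video array can be cut into small cubes and flattened into a token-like sequence. It is an illustration only, not OpenAI’s implementation: the function name `patchify`, the patch sizes, and the toy video dimensions are all assumptions, and it works on raw pixel arrays, whereas OpenAI’s technical report describes extracting patches from a compressed latent representation of the video.

```python
import numpy as np


def patchify(video, pt, ph, pw):
    """Split a video of shape (T, H, W, C) into flattened spacetime patches.

    Hypothetical helper for illustration. Returns an array of shape
    (num_patches, pt * ph * pw * C), one row per patch.
    """
    T, H, W, C = video.shape
    if T % pt or H % ph or W % pw:
        raise ValueError("video dimensions must be divisible by the patch size")
    # Split each axis into (number of patches, extent of one patch).
    x = video.reshape(T // pt, pt, H // ph, ph, W // pw, pw, C)
    # Move the three patch-index axes to the front, patch contents to the back.
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)
    # Flatten: one row per spacetime cube.
    return x.reshape(-1, pt * ph * pw * C)


# Toy "video": 8 frames of 32x32 RGB (sizes chosen only for the example).
video = np.arange(8 * 32 * 32 * 3, dtype=np.float32).reshape(8, 32, 32, 3)

# Each patch spans 2 frames and an 8x8 pixel region.
tokens = patchify(video, pt=2, ph=8, pw=8)
print(tokens.shape)  # (64, 384)
```

Because each patch covers several frames as well as a region of the image, a sequence model that attends over these rows sees motion and appearance together rather than one frame at a time.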

The training process involves a massive dataset of captioned videos, allowing the model to learn the relationship between descriptive language and visual movement. However, the computing power required to render these 60-second clips is immense, which is likely one reason the tool has not yet been released to the general public.

The Creative Collision: Impact and Stakeholders

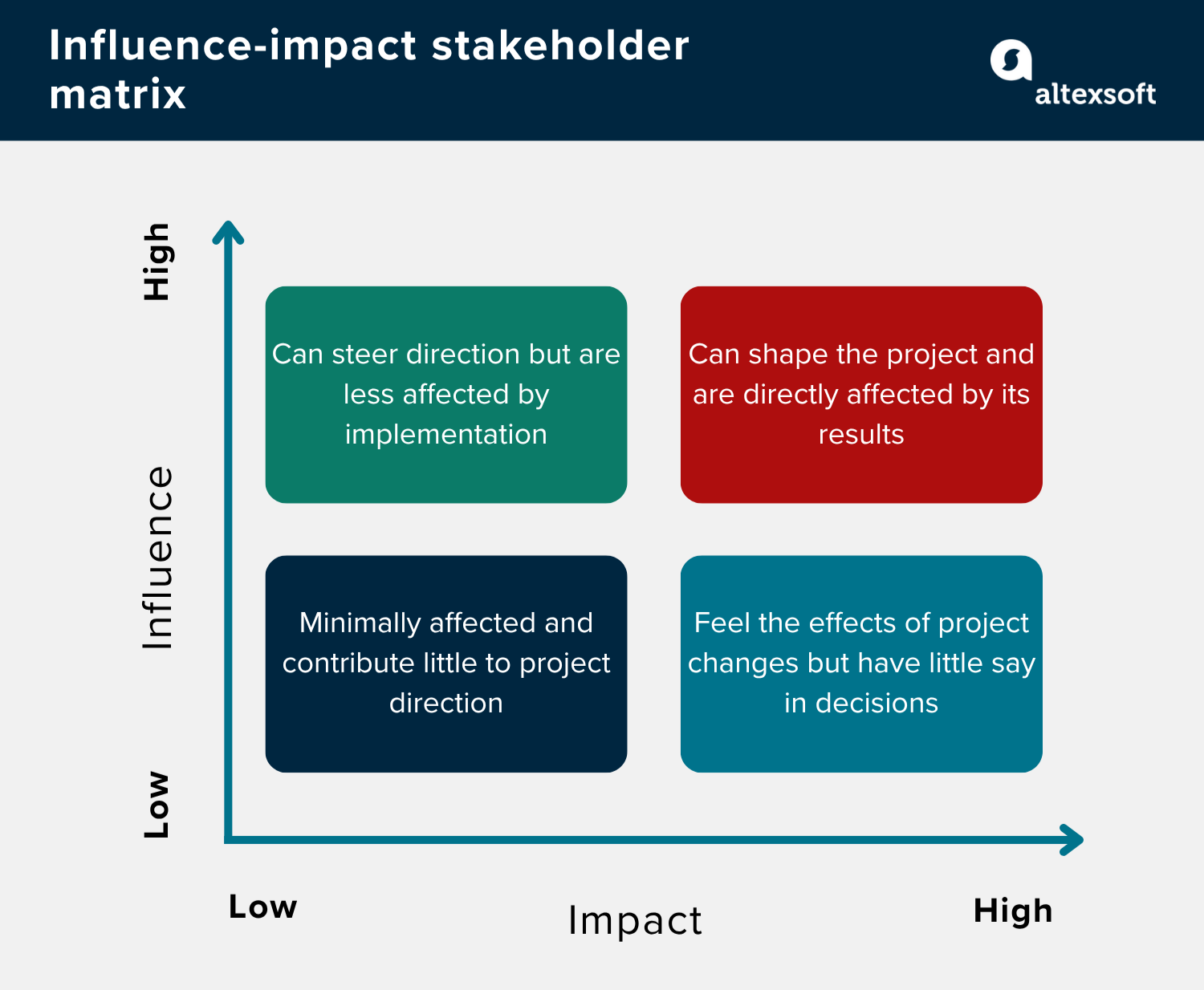

The arrival of Sora creates an immediate tension between technological efficiency and artistic labor. The stakeholders in this shift are diverse, ranging from high-budget Hollywood studios to independent content creators and stock footage agencies.

- Filmmakers and Animators: While some see Sora as a tool for rapid prototyping and storyboarding, others fear the devaluation of cinematography and visual effects (VFX) artistry. The ability to generate a “perfect” B-roll shot without a crew or a location could eliminate thousands of entry-level production jobs.

- Advertising Agencies: The potential for hyper-personalized video ads, where the background and product change in real time based on the viewer’s demographic, is a massive commercial opportunity.

- Digital Forensics and Journalism: As the “cost” of creating a convincing fake video drops to near zero, the burden of verification increases. The risk of sophisticated deepfakes used for political disinformation is a primary concern for election monitors and newsrooms.

Capabilities vs. Constraints

To provide a clearer picture of where Sora stands today, it is helpful to contrast its strengths against its current failures. The model is not a “world simulator” in the literal sense, but a visual predictor.

| Capability | Current Limitation |
|---|---|
| High temporal consistency (stable characters) | Failure to simulate complex physics (e.g., cause-and-effect) |
| Complex camera movement (pans, tilts, zooms) | Occasional spatial confusion (left vs. right) |
| Hyper-realistic textures and lighting | Difficulty with precise descriptions of event sequences |
| Videos up to 60 seconds in length | High computational cost and slow rendering times |

The Safety Guardrails and the ‘Red Teaming’ Phase

OpenAI has been careful to note that Sora is not yet available for public use. The model is currently undergoing “red teaming”—a process where external experts attempt to break the system, trigger biased outputs, or generate harmful content. This phase is critical given the potential for Sora to create non-consensual imagery or deceptive political content.

The company is collaborating with visual artists, designers, and filmmakers to understand how the tool can be integrated into professional workflows without erasing the human element. OpenAI has said it plans to include C2PA metadata, signed provenance information that can identify a piece of content as AI-generated, to help platforms and users distinguish between synthetic and organic media.

Despite these efforts, the gap between a “safe” release and the inevitable leak of similar technology from competitors remains a point of anxiety for regulators. The race for generative video is no longer just about who has the best pixels, but who can implement the most robust safety framework without stifling the tool’s utility.

Disclaimer: This article discusses emerging AI technology. The capabilities described are based on OpenAI’s technical reports and demonstration videos; actual user experience may vary upon public release.

The next major milestone for Sora will be the conclusion of its red teaming phase and the potential rollout of a limited beta for a select group of creative professionals. Until then, the industry remains in a state of suspended animation, watching a series of 60-second clips that signal the beginning of a new era in digital storytelling.
