For decades, our relationship with technology has been defined by the glass rectangle. From the first bulky monitors to the iPhones that now live in our pockets, the digital world has been something we look at. With the introduction of the Apple Vision Pro, that boundary is designed to dissolve, shifting the paradigm from traditional screens to what the company calls spatial computing.

The Apple Vision Pro is not merely a headset; it is a high-stakes bet on the future of human-computer interaction. By blending digital content with the physical world, Apple is attempting to move the user interface off the device and into the room. While the hardware is an undeniable feat of engineering, the true ambition lies in how it integrates into the existing Apple ecosystem, promising a world where your laptop screen can float in mid-air while you remain present in your living room.

Launched in the U.S. on February 2, 2024, the device entered the market at a premium starting price of $3,499. This positioning makes it clear that the Vision Pro is not aimed at the casual consumer, at least not yet, but rather at “pro” users, developers, and early adopters willing to pay a steep entry fee to experience the first iteration of a new computing era.

Redefining the Interface: Eyes, Hands, and Voice

The most immediate departure from previous wearable tech is the total absence of handheld controllers. Instead, the Apple Vision Pro uses an array of cameras and sensors to track eye movements and hand gestures. In this system, your eyes act as the cursor: simply looking at an icon highlights it, and gently tapping your thumb and index finger together, even with your hand resting in your lap, acts as the click.

This intuitive approach aims to reduce the friction often associated with augmented reality (AR) and virtual reality (VR) headsets. With high-resolution displays that pack more pixels than a 4K TV for each eye, the device minimizes the “screen door effect” that plagued earlier mixed reality hardware. The result is a visual experience where digital windows feel anchored to the physical environment, allowing users to organize their workspace across their entire field of vision.

To solve the isolation problem inherent in headsets, Apple introduced EyeSight. This external display projects a version of the user’s eyes to people nearby, signaling whether the wearer is fully immersed in a virtual environment or available for interaction. While the execution has received mixed reviews regarding its realism, the intent is to keep the user socially connected to their surroundings.

The visionOS Ecosystem and Spatial Productivity

Powering the hardware is visionOS, a spatial operating system designed to feel familiar to anyone who has used an iPad or Mac. The OS allows users to open multiple apps—such as Safari, Messages, and Notes—and arrange them in three-dimensional space. This capability transforms a physical room into an infinite canvas, potentially replacing the need for multiple physical monitors in a professional setting.

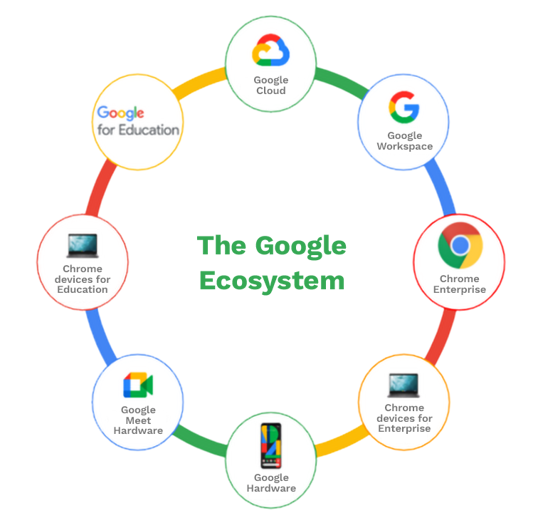

The integration with the broader Apple ecosystem is where the device finds its strongest utility. Users can glance at their MacBook to instantly bring a virtual display into their vision, or sync their calendars and photos seamlessly. However, the success of the platform depends heavily on developer adoption. While many existing iPad apps work on the Vision Pro, the industry is still waiting for a “killer app”—a piece of software that makes the device an essential tool rather than a luxury novelty.

Core Specifications at a Glance

| Feature | Detail |
|---|---|
| Starting Price | $3,499 |
| Operating System | visionOS |
| Primary Inputs | Eye tracking, Hand gestures, Voice |
| Launch Date | February 2, 2024 (U.S.) |
| Key Hardware | Dual-chip design (M2 and R1) |

The Challenges of the “Pro” Label

Despite the technical brilliance, the Apple Vision Pro faces significant hurdles. The most prominent is the price point, which places it far beyond the reach of the average user. The physical design—while premium, using aluminum and glass—has led to reports of the device being heavy during extended use. The reliance on an external battery pack, connected by a cable, also serves as a reminder that battery density has not yet caught up to the processing demands of spatial computing.

There is also the question of utility. While the device excels at immersive cinema and focused productivity, the “spatial” aspect of computing is still in its infancy. For many, the leap from a lightweight laptop to a head-mounted display is a psychological and physical hurdle that requires a clear, daily-use justification that goes beyond the novelty of floating windows.

As reported by Reuters, the trajectory of such high-end hardware typically follows a pattern: a costly “Pro” version establishes the technology’s viability, followed by a more affordable, streamlined version for the mass market. The Vision Pro is the vanguard, absorbing the early critiques and technical growing pains to pave the way for future generations.

The next critical milestone for the device will be its expansion into international markets and the rollout of further visionOS updates, which are expected to refine the user interface and expand the library of native spatial applications. Whether the Apple Vision Pro becomes a ubiquitous tool or remains a niche luxury depends on how effectively Apple can transition the world from looking at screens to living within them.
