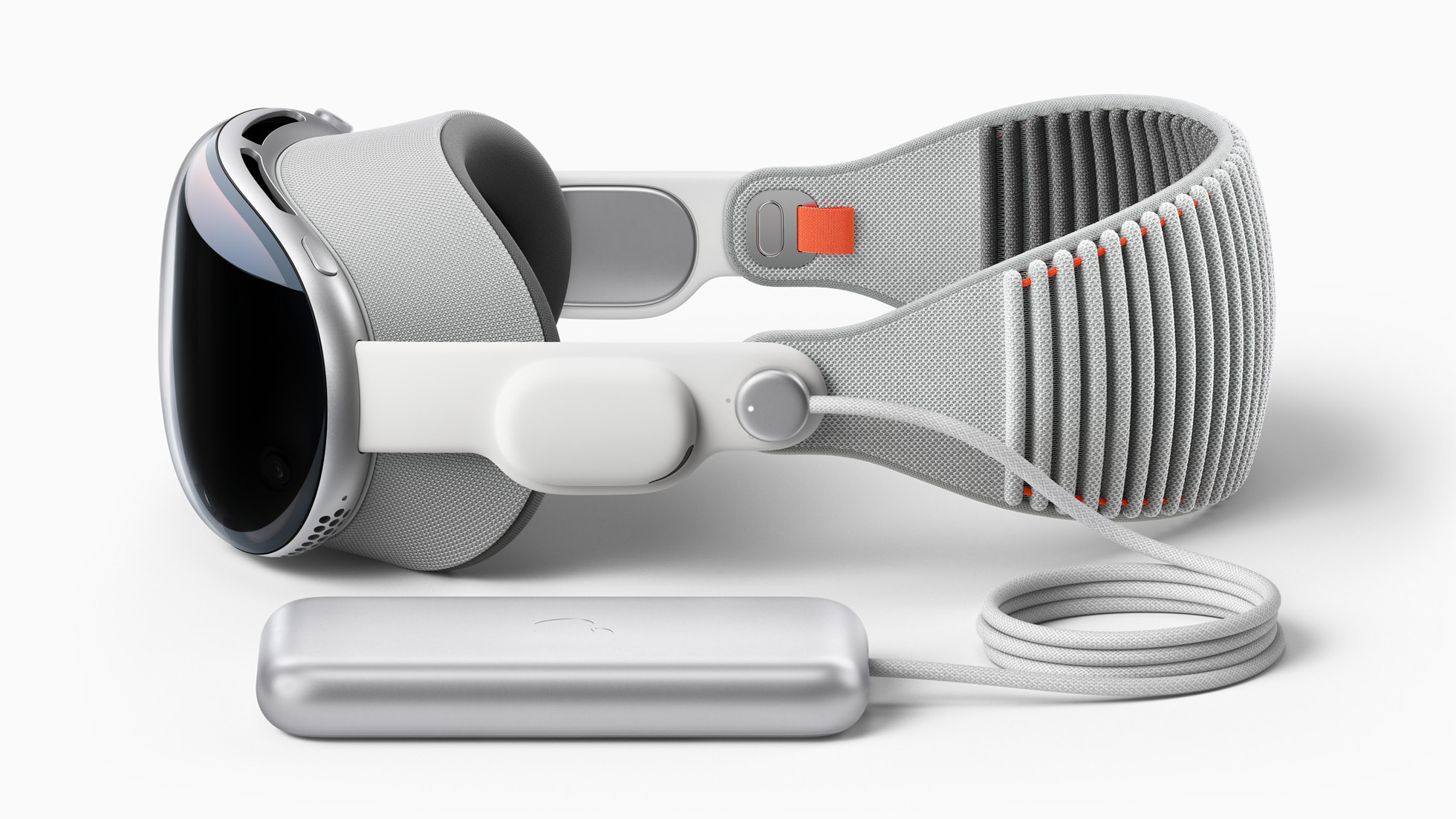

The first few hours with the Apple Vision Pro feel less like using a gadget and more like stepping into a science-fiction film. The resolution is startling, the eye-tracking is intuitive, and the promise of “spatial computing”—the idea that your digital workspace can float effortlessly around your physical living room—feels like a genuine leap forward in human-computer interaction.

But as the initial adrenaline fades, a different reality sets in. For those who have moved past the honeymoon phase and integrated the $3,499 headset into their daily routines, the experience has shifted from one of pure wonder to a complex calculation of utility versus friction. The device represents a masterclass in engineering, yet it struggles to answer the most fundamental question of any consumer electronics product: Why do I need to wear this every day?

After months of real-world testing, the consensus among early adopters and tech analysts suggests that the Vision Pro is not yet a product for the masses, but rather an expensive, polished prototype for a future that hasn’t quite arrived. While the hardware is nearly flawless, the software ecosystem and the physical toll of the device create a gap between the vision Apple sold and the experience users actually have.

The Friction of Spatial Computing

The primary hurdle for the Vision Pro isn’t a lack of power, but the physical reality of wearing it. Despite the premium materials, the device is heavy. Users frequently report a “front-heavy” sensation that leads to facial fatigue after an hour or two of continuous use. This physical constraint fundamentally alters how the device is used: it is rarely a primary workstation and more often a high-end cinema for short bursts of entertainment.

Then there is the issue of isolation. While Apple introduced “EyeSight”—the external display that shows a digital version of the user’s eyes to people nearby—it fails to bridge the social gap. To the outside world, the user is still wearing a bulky visor, and the digital eyes often feel like a sterile approximation of human connection rather than a replacement for it.

This isolation extends to the “Personas” used during FaceTime calls. These AI-generated 3D avatars are a technical marvel, capturing the user’s likeness with startling accuracy. However, they frequently fall into the “uncanny valley,” where the likeness is close enough to be recognizable but off enough to feel eerie. For many, the digital avatar is a distraction that detracts from the intimacy of a conversation.

The Quest for the “Killer App”

Apple’s strategy has always been to build the hardware and the ecosystem simultaneously. With the Vision Pro, the hardware is ready, but the ecosystem is still catching up. Much of the current experience relies on ported iPad apps—windows that float in space but don’t truly leverage the three-dimensional environment.

The “wow” factor remains strongest in media consumption. Watching a 4K movie on a virtual 100-foot screen is an unmatched experience, and the “Immersive Video” capabilities provide a sense of presence that traditional screens cannot replicate. However, productivity remains a mixed bag. While having multiple virtual monitors is a boon for some, the lack of a physical keyboard (unless paired via Bluetooth) and the occasional latency in gaze-and-pinch navigation make it less efficient than a traditional MacBook setup for deep work.

The current state of the Vision Pro can be summarized as a struggle between potential and practicality:

| Feature | Initial Promise | 3-Month Reality |
|---|---|---|
| Daily Utility | Primary computer replacement | Occasional entertainment hub |
| Social Interaction | Seamlessly integrated | Socially isolating / “Uncanny” Personas |
| Comfort | Ergonomic for long sessions | Noticeable weight and facial fatigue |
| App Ecosystem | Revolutionary spatial apps | Mostly adapted 2D iPad applications |

The High Cost of Being First

At $3,499, the Vision Pro is positioned as a Pro device, but in practice, it functions as a developer kit for the general public. The price point excludes nearly everyone except the most affluent tech enthusiasts and corporate developers. This creates a “chicken and egg” problem: developers are hesitant to build complex, spatial-first apps for a tiny user base, and users are hesitant to buy the hardware without a library of essential apps.

Despite these critiques, the device proves that the technology works. The integration of the R1 chip for near-zero latency and the precision of the micro-OLED displays set a benchmark that the rest of the industry will spend years trying to match. The Vision Pro isn’t a failure; it is a successful demonstration of what is possible, provided the hardware can become lighter and the price can become accessible.

For the average consumer, the lesson of the first few months is clear: the future of computing is spatial, but it isn’t here yet. The Vision Pro is a glimpse into a world where screens disappear and digital content blends with our physical environment, but that world currently requires a heavy headset and a significant financial investment.

The next critical milestone for the platform will be the rollout of visionOS 2 and the anticipated announcement of a more affordable, consumer-grade version of the headset. These updates will determine if spatial computing becomes a staple of modern life or remains a high-priced curiosity for the few.

Do you think spatial computing will replace the laptop, or is it destined to remain a niche tool for entertainment? Share your thoughts in the comments below.