The boundary between simulated imagery and captured reality has shifted. With the unveiling of Sora, OpenAI’s new text-to-video model, the ability to generate high-fidelity, cinematic footage from a simple written prompt has moved from the realm of experimental research into a tangible, if currently restricted, capability.

Sora represents a significant leap in generative AI, moving beyond the flickering, surrealist loops that characterized previous iterations of AI video. The model can produce videos up to a minute long, featuring complex camera motion, multiple characters, and a level of temporal consistency that allows objects to persist even when they move out of the frame. For those of us who have tracked the rapid ascent of Large Language Models (LLMs) and image generators like DALL-E, Sora is the logical, albeit jarring, next step in the quest to create a “world simulator.”

While the visual demonstrations are compelling—ranging from a stylized Tokyo street shimmering with neon lights to a whimsical animation of a fluffy monster—the technology arrives at a precarious moment. As the global community grapples with the proliferation of deepfakes and the erosion of digital trust, the arrival of a tool capable of creating photorealistic video raises urgent questions about verification and the future of the creative economy.

The Mechanics of a World Simulator

Unlike previous video AI tools that often functioned as “animated images,” Sora uses a diffusion transformer architecture. This approach combines the strengths of diffusion models, which generate high-quality imagery by progressively removing noise from a random starting point, with the transformer architecture that powers GPT-4, allowing the model to handle sequences of data over time more effectively.
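How does a transformer "read" a video? OpenAI's technical report describes breaking videos into "spacetime patches" that serve as the model's tokens, much as words serve as tokens for GPT-4. The sketch below is a simplified, hypothetical illustration of that idea, not OpenAI's code: it works on raw pixel values rather than a compressed latent representation, ignores color channels, and the function name `to_spacetime_patches` and the patch sizes are our own assumptions.

```python
from typing import List

# A toy "video": frames x height x width grid of pixel intensities.
# Real systems compress video into a latent space and keep color channels;
# this sketch skips both (simplifying assumption for illustration).
Video = List[List[List[float]]]


def to_spacetime_patches(video: Video, pt: int, ph: int, pw: int) -> List[List[float]]:
    """Split a video into non-overlapping pt x ph x pw spacetime patches and
    flatten each patch into one token, ordered by time, then row, then column."""
    t_len, h_len, w_len = len(video), len(video[0]), len(video[0][0])
    if t_len % pt or h_len % ph or w_len % pw:
        raise ValueError("video dimensions must be divisible by the patch size")
    tokens = []
    for t0 in range(0, t_len, pt):          # step through time
        for y0 in range(0, h_len, ph):      # then down the frame
            for x0 in range(0, w_len, pw):  # then across the frame
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                tokens.append(patch)
    return tokens


# 4 frames of 4x4 pixels; each value encodes its own (t, y, x) position.
video = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)] for t in range(4)]
tokens = to_spacetime_patches(video, pt=2, ph=2, pw=2)
print(len(tokens), len(tokens[0]))  # 8 tokens, each holding 2*2*2 = 8 values
```

Each token spans a small window of both space and time, so when the transformer attends across tokens it is relating regions within a frame and across frames at once.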

OpenAI describes the model as being trained on a vast array of data, enabling it to understand not just the visual appearance of an object, but the physics of how it should move. However, the “simulation” is not perfect. In several of the released samples, the model struggles with complex cause-and-effect physics. For instance, a character might take a bite out of a cookie, but the cookie remains whole, or a glass may shatter without the expected trajectory of shards.

These “hallucinations” in motion highlight the gap between visual mimicry and true physical understanding. Sora does not “know” physics the way a game engine like Unreal Engine does, by computing motion from explicit rules; instead, it generates footage that statistically resembles the patterns it learned from vast amounts of training video. This distinction is critical for VFX and cinematography professionals, who require precision rather than approximation.

Safety, Red Teaming, and the Trust Gap

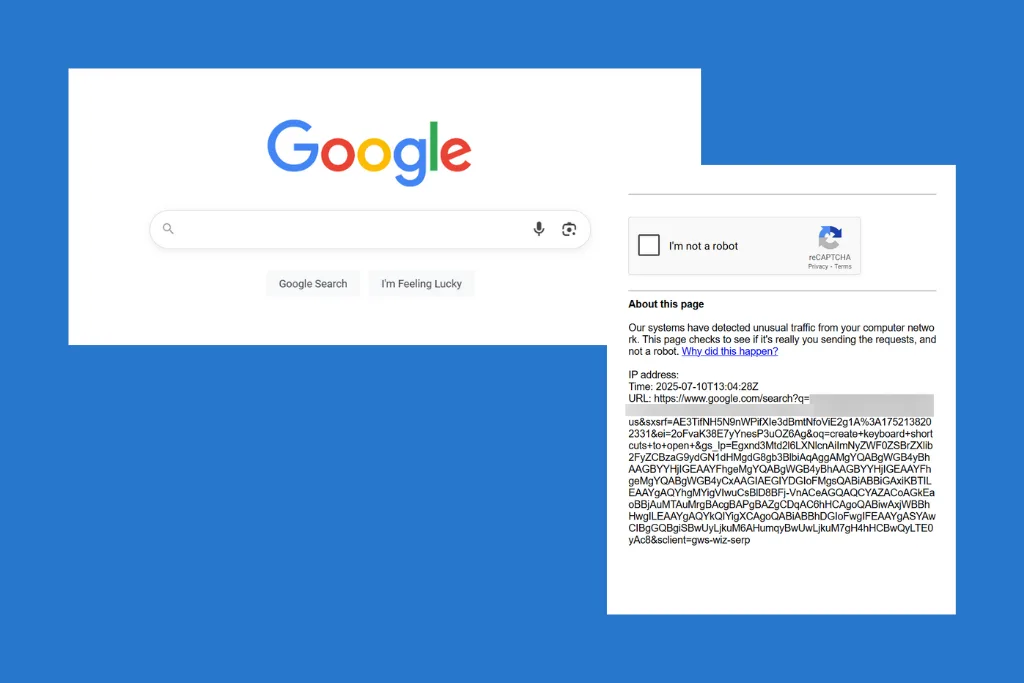

Given the potential for misuse, OpenAI has not yet released Sora to the general public. The model is currently undergoing “red teaming”—a process where expert testers intentionally try to provoke the AI into generating harmful, biased, or deceptive content to identify vulnerabilities before a wide release.

The risks are twofold: the creation of non-consensual intimate imagery and the generation of hyper-realistic misinformation. In a year when major elections are being held in many countries, the ability to create a convincing video of a political figure saying something they never said is a systemic risk. To counter this, OpenAI is working with the Coalition for Content Provenance and Authenticity (C2PA) to embed metadata in the files, which would allow users to verify whether a video was AI-generated.

Critics argue that metadata is a fragile defense, as it can be stripped away by simple re-encoding or screen-recording. The company is also developing “classifiers”—AI tools designed to detect Sora-generated content—though the history of AI detection suggests a perpetual arms race where the generator eventually outpaces the detector.
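The fragility critics point to can be shown with a toy model. The sketch below is not the C2PA format or any real SDK: `MediaFile`, `attach_provenance`, `read_provenance`, `reencode` and `screen_record` are hypothetical names, and a bare SHA-256 digest stands in for a cryptographically signed manifest. It binds a provenance claim to the exact bytes of a file, then shows what happens after transcoding and after re-capturing the picture.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class MediaFile:
    """Hypothetical container: encoded video bytes plus optional metadata."""
    data: bytes
    metadata: Dict[str, str] = field(default_factory=dict)


def attach_provenance(f: MediaFile, generator: str) -> MediaFile:
    # Bind the claim to these exact bytes (toy stand-in for a signed manifest).
    digest = hashlib.sha256(f.data).hexdigest()
    return MediaFile(f.data, {"generator": generator, "sha256": digest})


def read_provenance(f: MediaFile) -> Optional[str]:
    # Return the claimed generator only if a claim exists and still matches the bytes.
    claimed = f.metadata.get("sha256")
    if claimed is None or claimed != hashlib.sha256(f.data).hexdigest():
        return None
    return f.metadata.get("generator")


def reencode(f: MediaFile) -> MediaFile:
    # Stand-in for transcoding: the bytes change (reversed here, purely for
    # illustration) while the old metadata is copied over unchanged.
    return MediaFile(f.data[::-1], dict(f.metadata))


def screen_record(f: MediaFile) -> MediaFile:
    # Re-capturing the picture yields new bytes and carries no metadata across.
    return MediaFile(b"recaptured:" + f.data)


original = attach_provenance(MediaFile(b"frame-data"), "ai-video-model")
print(read_provenance(original))                 # ai-video-model
print(read_provenance(reencode(original)))       # None: digest no longer matches
print(read_provenance(screen_record(original)))  # None: metadata is gone
```

The last two checks fail for different reasons, but the result is the same: the file no longer carries a claim anyone can verify. That is why the absence of provenance data cannot, on its own, show that a video is authentic.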

Capabilities and Constraints of Sora

| Feature | Demonstrated Capability | Known Limitation |
|---|---|---|
| Duration | Up to 60 seconds of continuous video | Potential for degradation in long-form coherence |
| Consistency | Maintains character/object identity across cuts | Occasional “morphing” of background elements |
| Physics | Realistic fluid and fabric movement | Struggles with complex cause-and-effect (e.g., eating) |
| Camera Work | Complex panning and tracking shots | Occasional “impossible” camera angles/glitches |

Impact on the Creative Economy

The arrival of Sora sends a shockwave through the creative industries. For independent filmmakers and advertisers, the tool offers a way to prototype scenes or create B-roll without the overhead of a full production crew. Once it is widely available, the cost of “visualizing” an idea could fall to near zero.

However, this efficiency comes at a cost to human labor. Concept artists, storyboarders, and stock footage creators face an existential threat as the need for manual asset creation diminishes. The conversation is shifting from “how can this help us” to “how do we protect the intellectual property of the artists whose work likely trained these models?”

The stakeholders in this transition include:

- Visual Artists: Who must navigate a landscape where their style can be replicated in seconds.

- News Organizations: Who must implement rigorous verification protocols to prevent the broadcast of AI-generated falsehoods.

- Tech Regulators: Who are tasked with creating frameworks for AI transparency and copyright.

Sora is not just a tool for making videos; it is a tool for synthesizing reality. The challenge for society will be maintaining a shared sense of truth in an era where seeing is no longer believing.

Disclaimer: This article discusses emerging AI technology. The capabilities described are based on OpenAI’s public demonstrations and technical reports; actual performance may vary upon general release.

The next significant milestone for Sora will be the conclusion of its red teaming phase and the potential release of a limited API for developers. OpenAI has indicated that feedback from visual artists and designers is currently being used to refine the model’s utility and safety guardrails before any broader deployment.

Do you believe AI-generated video will enhance human creativity or replace it? Share your thoughts in the comments below or join the conversation on our social channels.