The intersection of global diplomacy and emerging technology has reached a critical juncture as nations grapple with the rapid proliferation of artificial intelligence. A recent analysis of the geopolitical landscape suggests that the role of AI in shaping international relations has shifted from a theoretical concern to a primary driver of strategic competition, particularly between the United States and China.

This strategic competition, often framed as an AI arms race, extends beyond mere software development. It encompasses the control of hardware—specifically the high-end semiconductors required to train large-scale models—and the securing of data pipelines. As these technologies integrate into military and administrative infrastructure, the risk of systemic instability increases, necessitating a new framework for digital diplomacy.

The current trajectory suggests that AI will not only automate labor but will fundamentally alter how states project power and maintain sovereignty. For those of us who have reported from conflict zones and diplomatic summits across 30 countries, the pattern is familiar: technology often outpaces the treaties designed to contain it. The challenge now is to establish “guardrails” that prevent accidental escalation while allowing for legitimate innovation.

The Semiconductor Stranglehold and Hardware Diplomacy

At the heart of the AI struggle is the physical infrastructure. The global supply chain for advanced chips is remarkably concentrated, with TSMC in Taiwan producing the vast majority of the world’s most sophisticated semiconductors. This geographic concentration creates a singular point of vulnerability that has turned silicon into a primary instrument of national security.

The United States has implemented rigorous export controls to limit the access of strategic competitors to high-end AI chips, such as those produced by Nvidia. These measures are designed to slow the development of advanced military AI capabilities, yet they have simultaneously spurred a drive for domestic chip production in both the U.S. and China. This “de-risking” strategy is creating a fragmented global tech ecosystem, potentially splitting the internet and AI standards into two distinct, incompatible spheres.

The implications for global trade are profound. As nations prioritize “secure” supply chains over “efficient” ones, the cost of technology is likely to rise, and the speed of collaborative scientific discovery may slow. The shift from globalization to “friend-shoring” reflects a broader trend where economic interdependence is no longer seen as a guarantee of peace, but as a potential liability.

Algorithmic Governance and the Erosion of Truth

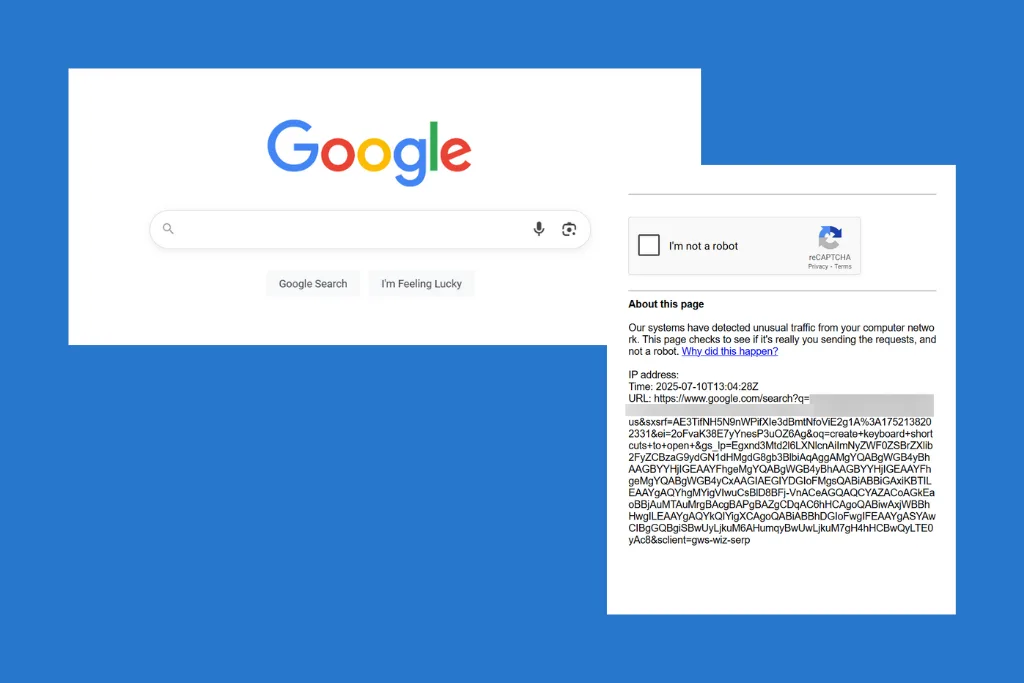

Beyond the hardware, the deployment of AI in the information space is reshaping the nature of truth and public trust. The rise of generative AI has made the creation of hyper-realistic deepfakes trivial, complicating the work of journalists and diplomats who rely on verified evidence to hold power to account.

In regions already plagued by instability, AI-driven disinformation campaigns can act as force multipliers for existing tensions. The ability to automate the production of tailored propaganda allows state actors to influence foreign elections and domestic sentiment with unprecedented precision. This is not merely a technical problem but a sociological one, as the “liar’s dividend” allows bad actors to dismiss genuine evidence as AI-generated.

To counter this, several international bodies are proposing digital watermarking and provenance standards. However, the effectiveness of these tools depends on universal adoption—a difficult prospect given the current climate of mutual suspicion between major powers. The struggle to define “truth” in the age of AI is becoming as central to national security as the defense of physical borders.

Key Dimensions of the AI Geopolitical Struggle

| Domain | Primary Objective | Key Risk Factor |
|---|---|---|
| Hardware | Compute Sovereignty | Supply chain disruption |
| Software | Model Superiority | Algorithmic bias/instability |
| Information | Narrative Control | Erosion of public trust |
| Diplomacy | Norm Setting | Lack of universal treaty |

The Path Toward International AI Norms

Despite the competition, there is a growing recognition that certain risks, such as the autonomous launch of nuclear weapons or the accidental collapse of financial markets, are existential and transcend national interests. This has led to a series of tentative efforts at “summit diplomacy” aimed at establishing basic safety standards.

The goal is to move toward a multilateral agreement similar to the non-proliferation treaties of the Cold War. Such a framework would require transparency regarding “frontier models” and a commitment to keep humans “in the loop” for critical decision-making processes. However, verifying compliance in the digital realm is far more difficult than counting missile silos, as AI development often happens in the cloud, hidden from satellite imagery.

Stakeholders affected by these shifts include not only governments but also the massive private corporations that own the compute power. These entities now wield a form of “sovereign power,” negotiating with heads of state as equals. The challenge for future governance is how to regulate these entities without stifling the innovation that could solve climate change or cure diseases.

What Lies Ahead for Global Stability

The immediate future will likely be defined by a series of “small-circle” agreements—mini-lateral pacts between trusted allies to share AI research and secure hardware. This fragmented approach may provide short-term security but leaves the broader global community without a unified safety net.

The next critical checkpoint will be the upcoming cycle of international AI safety summits, where delegates will attempt to codify the definition of “catastrophic risk.” Whether these meetings result in binding treaties or mere expressions of intent will determine whether the AI era is characterized by cooperation or by a descent into digital volatility.
