For the first few years of the generative AI boom, spotting a fake image was almost a game of “Where’s Waldo,” but with anatomy. We looked for the telltale signs of a model struggling with the complexities of the human body: a sixth finger emerging from a palm, teeth that looked like a continuous picket fence, or earrings that melted into a neck. It was an era of obvious glitches, where the “uncanny valley” was a wide chasm that was hard to miss.

But the gap is closing. With the release of newer iterations of Midjourney, DALL-E 3 and Stable Diffusion, the anatomical errors have largely vanished. Skin textures are pore-perfect, and hands are increasingly believable. As these models ingest more data and refine their weights, they have become masters of the appearance of reality. However, a recent study published in the journal Science suggests that while AI can mimic the look of a photograph, it still cannot mimic the laws of the universe.

The core issue is a fundamental ignorance of physics. While an AI model can predict that a shiny surface should have a bright spot on it, it doesn’t actually understand how a photon travels from a light source, bounces off a surface, and hits a lens. It is making a sophisticated statistical guess based on patterns, not simulating the geometry of light. For those who know where to look, the laws of physics are providing a reliable “fingerprint” that separates the synthetic from the organic.

The difference between pattern and physics

To understand why AI fails at physics, it helps to look at how these images are built. As a former software engineer, I think of it as the difference between a rendering engine and a diffusion model. A traditional 3D render (like those used in Pixar films or high-end architectural visualization) uses “ray tracing.” This is a mathematical simulation of light: the software calculates the paths of light rays to determine shadows and reflections. It is physics-based.
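For readers who want to see what “physics-based” means in practice, the sketch below performs two of the calculations a ray tracer makes for shadows: projecting an object’s shadow onto the ground along the sun’s rays, and casting a “shadow ray” toward the light to test whether anything blocks it. It is a minimal illustration under simplifying assumptions (a single distant sun described by hypothetical elevation and azimuth angles, a flat ground plane at z = 0, and one spherical occluder), not how any particular renderer is implemented.

```python
import math

def sun_direction(elevation_deg, azimuth_deg):
    """Unit vector pointing from the scene toward a distant sun."""
    el = math.radians(elevation_deg)
    az = math.radians(azimuth_deg)
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))

def shadow_on_ground(point, to_sun):
    """Where the sunlight grazing `point` lands on the ground plane z = 0."""
    x, y, z = point
    dx, dy, dz = to_sun
    if dz <= 0:
        raise ValueError("the sun must be above the horizon")
    t = z / dz  # distance to travel away from the sun until reaching z = 0
    return (x - t * dx, y - t * dy, 0.0)

def in_shadow(point, to_sun, center, radius):
    """Shadow ray test: does a ray from `point` toward the sun hit a sphere?"""
    # Solve |point + t * to_sun - center|^2 = radius^2 for t (to_sun has unit length).
    ox, oy, oz = (point[i] - center[i] for i in range(3))
    b = 2 * (ox * to_sun[0] + oy * to_sun[1] + oz * to_sun[2])
    c = ox * ox + oy * oy + oz * oz - radius * radius
    disc = b * b - 4 * c
    if disc < 0:
        return False  # the ray misses the sphere entirely
    t_far = (-b + math.sqrt(disc)) / 2
    return t_far > 1e-9  # the sphere lies (at least partly) between point and sun

sun = sun_direction(45, 0)
tip = shadow_on_ground((0.0, 0.0, 2.0), sun)  # top of a 2 m pole at the origin
print(tuple(round(v, 6) for v in tip))        # (-2.0, 0.0, 0.0): shadow as long as the pole
print(in_shadow((0.0, 0.0, 0.0), sun, (1.0, 0.0, 1.0), 0.5))  # True: the ball blocks the sun
```

Every output follows from the positions of the light and the objects; nothing is inferred from what shadows “usually” look like.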

Generative AI does not use ray tracing. It uses diffusion, a process of removing noise from an image to reveal a pattern that matches a prompt. The AI isn’t thinking, “The sun is at a 45-degree angle, so the shadow should fall here.” Instead, it is thinking, “In millions of photos of people standing in the sun, there is usually a dark patch on the ground near their feet.”
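The contrast shows up clearly in code. Below is a toy version of a diffusion model’s reverse (denoising) loop, using the deterministic update from the DDIM family of samplers and a simple linear noise schedule; the schedule values and function names are illustrative assumptions. The only input the loop consults is a noise estimate from `predict_noise`. In a real model that is a trained neural network conditioned on the prompt; here it is an “oracle” that already knows the clean pixels, purely so the loop can be checked. Nowhere does the loop reason about light sources, surfaces, or angles.

```python
import math
import random

def linear_alpha_bars(steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative fraction of the original signal left after each noising step."""
    alpha_bars, keep = [], 1.0
    for i in range(steps):
        beta = beta_start + (beta_end - beta_start) * i / max(steps - 1, 1)
        keep *= 1.0 - beta
        alpha_bars.append(keep)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Forward process: blend clean pixel values with Gaussian noise."""
    return [math.sqrt(alpha_bar) * v + math.sqrt(1.0 - alpha_bar) * rng.gauss(0.0, 1.0)
            for v in x0]

def denoise(x_t, alpha_bars, predict_noise):
    """Deterministic (DDIM-style) reverse loop: estimate the noise, strip it, repeat."""
    x = list(x_t)
    for t in range(len(alpha_bars) - 1, -1, -1):
        a_t = alpha_bars[t]
        a_prev = alpha_bars[t - 1] if t > 0 else 1.0
        eps = predict_noise(x, t)  # a statistical guess; no light rays or geometry
        x0_hat = [(xi - math.sqrt(1.0 - a_t) * e) / math.sqrt(a_t) for xi, e in zip(x, eps)]
        x = [math.sqrt(a_prev) * c + math.sqrt(1.0 - a_prev) * e for c, e in zip(x0_hat, eps)]
    return x

x0 = [0.2, -0.5, 0.9]  # three "pixels" of a clean image
bars = linear_alpha_bars(1000)
x_T = add_noise(x0, bars[-1], random.Random(0))  # almost pure noise

def oracle_noise(x, t):
    """Stand-in for a trained network: recovers the exact noise by cheating with x0."""
    a = bars[t]
    return [(xi - math.sqrt(a) * c) / math.sqrt(1.0 - a) for xi, c in zip(x, x0)]

print(denoise(x_T, bars, oracle_noise))  # values very close to x0
```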

This distinction creates “physical hallucinations.” The image looks correct at a glance, but the geometry is often impossible. A reflection in a mirror might show a version of the person that doesn’t match their actual pose, or a shadow might stretch in a direction that contradicts the primary light source in the frame.
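Mirror geometry, by contrast, follows a rule simple enough to check by hand: a flat mirror places each reflected point the same distance behind the glass as the real point sits in front of it, measured along the mirror’s normal. The sketch below applies that rule and reports how far observed reflections stray from where they should be. It assumes the 3D positions of the points and the mirror plane are already known; recovering that geometry from a single photo is a harder problem this example does not attempt.

```python
import math

def reflect_point(p, plane_point, normal):
    """Mirror image of 3D point p across a flat mirror (plane through plane_point)."""
    length = math.sqrt(sum(c * c for c in normal))
    n = [c / length for c in normal]  # unit normal of the mirror
    # Signed distance from p to the mirror plane.
    dist = sum((p[i] - plane_point[i]) * n[i] for i in range(3))
    # The reflection sits the same distance on the other side, along the normal.
    return tuple(p[i] - 2 * dist * n[i] for i in range(3))

def reflection_error(object_points, reflected_points, plane_point, normal):
    """Largest distance between predicted and observed reflections."""
    return max(
        math.dist(reflect_point(p, plane_point, normal), r)
        for p, r in zip(object_points, reflected_points)
    )

# Example: a mirror on the plane x = 0, facing +x.
obj = [(2.0, 1.0, 3.0)]
seen = [(-2.0, 1.5, 3.0)]  # reflection appears 0.5 units too high
print(reflection_error(obj, seen, (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)))  # 0.5
```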

| Feature | Generative AI Approach | Physical Reality / Ray Tracing |
|---|---|---|
| Light Sources | Predicts brightness based on pixel proximity. | Calculates photon travel and intensity. |
| Reflections | Mimics the “look” of a reflection. | Calculates angle of incidence and reflection. |
| Shadows | Places dark areas where they “usually” are. | Determines occlusion based on object geometry. |
| Geometry | Maintains visual consistency. | Maintains mathematical proportionality. |

The forensic toolkit for the AI era

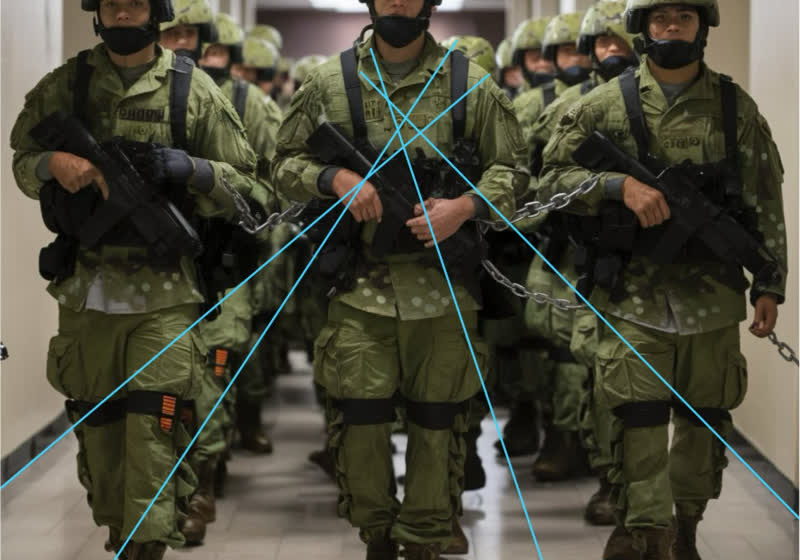

As we move away from counting fingers, digital forensic experts and journalists are shifting their focus toward “geometric consistency.” This involves a more rigorous interrogation of the image’s internal logic. If you suspect an image is AI-generated, there are three primary physical markers to examine:

- Light Source Convergence: Identify the primary light source (the sun, a lamp, a window). Trace the shadows of multiple objects in the scene. In a real photo, lines drawn from each shadow back through the object that casts it should all meet at that single source. AI often creates “floating” light sources, where different objects cast shadows in slightly inconsistent directions.

- Reflection Accuracy: Look at mirrors, puddles, or polished chrome. AI frequently fails to maintain the correct spatial relationship between the object and its reflection. The reflected object may be slightly shifted, or a detail present in the real object may be missing in the reflection.

- Contact Shadows: Pay close attention to where an object touches a surface. Where two surfaces meet, they block most of the surrounding light, producing the darkest part of the shadow; computer graphics models this effect as “ambient occlusion.” AI often creates a “halo” effect or a slight gap, making objects look as if they are hovering a few millimeters above the ground.
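The light-source check can be framed as a small geometry problem. In the image, a line drawn from a point on a cast shadow through the matching point on the object that casts it should pass through the light source (or, for the sun, its projected position, which may lie outside the frame). The sketch below finds the point that best fits several such lines in a least-squares sense and reports how far the lines miss it on average. It assumes an analyst has already marked matching shadow/object pairs in pixel coordinates, and it is a simplified illustration of the idea rather than any specific forensic tool.

```python
import math

def fit_light_position(pairs):
    """Least-squares point closest to every shadow-to-object line in an image.

    pairs: list of ((sx, sy), (ox, oy)) giving a point on a cast shadow and
    the matching point on the object that casts it, in pixel coordinates.
    Returns the fitted point and the RMS distance from it to the lines.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    lines = []
    for (sx, sy), (ox, oy) in pairs:
        dx, dy = ox - sx, oy - sy
        length = math.hypot(dx, dy)
        nx, ny = -dy / length, dx / length  # unit normal to the line
        c = nx * sx + ny * sy               # line: nx*x + ny*y = c
        lines.append((nx, ny, c))
        # Accumulate the normal equations for minimizing sum((n . p - c)^2).
        a11 += nx * nx
        a12 += nx * ny
        a22 += ny * ny
        b1 += nx * c
        b2 += ny * c
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("lines are (nearly) parallel; no finite convergence point")
    x = (a22 * b1 - a12 * b2) / det
    y = (a11 * b2 - a12 * b1) / det
    rms = math.sqrt(sum((nx * x + ny * y - c) ** 2 for nx, ny, c in lines) / len(lines))
    return (x, y), rms

# Three shadows consistent with one light near (100, 50)...
consistent = [((0, 0), (50, 25)), ((200, 0), (150, 25)), ((100, 150), (100, 100))]
# ...and the same scene with the third shadow shifted off that shared light.
inconsistent = [((0, 0), (50, 25)), ((200, 0), (150, 25)), ((130, 150), (130, 100))]

print(fit_light_position(consistent))    # point close to (100, 50), RMS near 0
print(fit_light_position(inconsistent))  # noticeably larger RMS (about 9 pixels)
```

A small RMS value means the shadows agree on one light source; a large one is a reason to look more closely, not proof of fakery, since lens distortion, uneven ground, or imprecise point marking also inflate it. When the sun’s rays run nearly parallel to the image plane, the lines become parallel and the function reports that no finite convergence point exists.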

Why this gap matters for truth and trust

The stakes for this technical nuance are incredibly high. We are entering a period of “epistemic instability,” where the ability to verify visual evidence is critical for democratic processes, legal proceedings, and journalism. When a fake image of a political event or a corporate disaster can look 99% real, that remaining 1% (the physics) becomes the last line of defense for the truth.

However, the stakeholders in this battle are not evenly matched. While forensic experts can use software to analyze light angles, the average social media user scrolling through a feed is unlikely to perform a geometric audit of a photo. This creates a vulnerability where “believability” outweighs “verifiability.”

The challenge for AI developers is that “solving” physics requires a paradigm shift. To move beyond pattern recognition, models may need to integrate “World Models”: AI systems that learn not just from 2D images but from 3D environments or video, understanding how objects move and interact in a physical space.

The road toward ‘World Models’

The industry is already moving in this direction. The next frontier isn’t just better prompts, but the integration of spatial intelligence. We are seeing early examples in video generation models such as OpenAI’s Sora, which aim to simulate a persistent 3D world. These models still produce “physics fails” (a person takes a bite of a cookie, yet the cookie stays whole), but they represent a move from 2D pattern matching toward 3D world simulation.

The next major checkpoint in this evolution may be the wider adoption of “physics-informed neural networks” (PINNs). These are models with physical laws built into their loss functions, so the AI is penalized not just for looking “wrong,” but for violating the laws of gravity or optics. Until then, the most reliable way to spot a fake remains the oldest method: looking closely at how the light hits the floor.
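To make “physics in the loss function” concrete, here is a toy loss for a model that predicts the height of a falling object over time. It adds two terms: how far the prediction is from observed data, and how badly it violates constant gravitational acceleration (the second derivative of height should equal -g). Real PINNs differentiate a neural network with automatic differentiation; this sketch swaps in finite differences on evenly spaced samples, and the `physics_weight` parameter is an illustrative assumption.

```python
G = 9.81  # gravitational acceleration in m/s^2

def physics_informed_loss(times, predicted, observed, physics_weight=1.0):
    """Data-fit error plus a penalty for breaking the law y''(t) = -G.

    times: evenly spaced sample times in seconds (at least three samples).
    predicted, observed: heights in meters at those times.
    """
    n = len(times)
    data_loss = sum((p - o) ** 2 for p, o in zip(predicted, observed)) / n
    dt = times[1] - times[0]
    # Central finite difference approximates the second derivative (acceleration)
    # at each interior sample; the residual is zero when it equals -G.
    residuals = [
        (predicted[i + 1] - 2 * predicted[i] + predicted[i - 1]) / dt ** 2 + G
        for i in range(1, n - 1)
    ]
    physics_loss = sum(r * r for r in residuals) / len(residuals)
    return data_loss + physics_weight * physics_loss

times = [i * 0.1 for i in range(11)]
falling = [10.0 - 0.5 * G * t ** 2 for t in times]  # obeys gravity
hovering = [10.0] * len(times)                       # ignores gravity

# Both predictions match their own "observations" exactly, so any loss
# comes from the physics term alone.
print(physics_informed_loss(times, falling, falling))             # essentially 0
print(round(physics_informed_loss(times, hovering, hovering), 2))  # 96.24, i.e. G**2
```

The hovering prediction fits its data perfectly and is still penalized, which is the behavior the paragraph above describes: looking right is not enough if the motion breaks a physical law.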

Do you have a “tell” you use to spot AI images? Share your tips in the comments or join the conversation on our social channels.