Apple has spent years playing a cautious game with generative AI, watching from the sidelines while competitors rushed to release standalone chatbots and experimental plugins. With the introduction of Apple Intelligence, the company is finally stepping into the ring—not by launching a separate app, but by weaving artificial intelligence into the very fabric of iOS, iPadOS, and macOS.

For the average user, this isn’t about chatting with a bot for fun; it is about “personal intelligence.” By leveraging deep integration with the operating system, Apple aims to create a system that understands a user’s personal context (who they are emailing, what meeting is coming up, and where they saved that specific PDF) in order to automate tasks that previously required manual searching and switching between apps.

As a former software engineer, I find the most compelling part of this rollout to be the architectural split. Apple is attempting a high-wire act: delivering the power of large language models (LLMs) while maintaining a strict privacy posture. This is achieved through a hybrid approach that processes as much data as possible on-device, using the Neural Engine, and offloads more complex requests to a new server-side architecture known as Private Cloud Compute.

The Core Pillars of Apple Intelligence

Apple Intelligence is not a single feature, but a suite of capabilities designed to reduce the cognitive load of daily digital maintenance. The most immediate impact is felt through “Writing Tools,” which are integrated system-wide. Whether in Mail, Notes, or a third-party app, users can now rewrite text for different tones, proofread for grammar, or summarize long threads into concise bullet points.

Beyond text, the company is leaning into generative visuals with Image Playground and Genmoji. Image Playground allows users to create stylized images based on prompts or photos of their friends, while Genmoji enables the creation of entirely new emojis on the fly. While these features lean toward the playful, they signal Apple’s intent to make generative AI a standard tool for communication rather than a niche productivity hack.

The most significant shift, however, is the overhaul of Siri. The virtual assistant is moving away from a rigid command-and-control structure toward a more fluid, context-aware experience. Siri can now maintain the thread of a conversation even if the user stumbles over their words and, more importantly, it possesses “onscreen awareness.” This means Siri can understand what the user is looking at and take action based on that specific context, such as adding a flight detail from a text message directly into a calendar event.

Privacy and the Private Cloud Compute Model

The primary friction point for AI adoption has always been data privacy. To solve this, Apple is introducing Private Cloud Compute (PCC). When a request is too complex for the device’s local hardware, it is sent to Apple-silicon-powered servers. Unlike traditional cloud AI, where data might be stored or used to train future models, PCC is designed so that data is never stored and is inaccessible even to Apple.

To ensure transparency, Apple has committed to allowing independent experts to verify the code running on these servers. This move attempts to transform privacy from a marketing promise into a verifiable technical specification, a critical distinction for enterprise users and privacy advocates.

Hardware Requirements and Compatibility

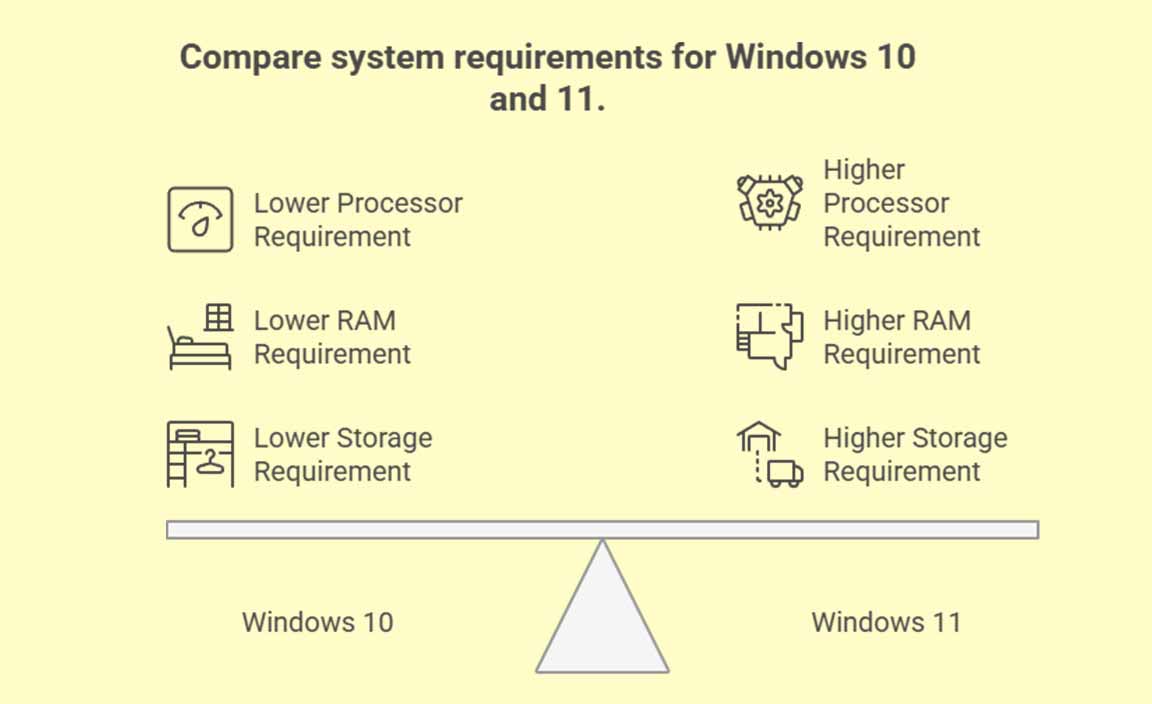

Because Apple Intelligence relies heavily on local processing power and memory (RAM), it is not available on all legacy devices. The requirement for a powerful Neural Engine means that on iPhone only the most recent high-end chips support the full suite of features, while iPad and Mac support extends back to the M1.

| Device Category | Minimum Requirement | Support Level |
|---|---|---|
| iPhone | iPhone 15 Pro / Pro Max | Full Support |
| iPad | M1 Chip or newer | Full Support |
| Mac | M1 Chip or newer | Full Support |
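For readers who think in code, the table can be read as a simple eligibility check. The following is a minimal sketch that assumes only the minimums listed above; the function name `is_supported`, the category labels, and the chip-name format (for example "M2 Max") are hypothetical choices for illustration, not an Apple API.

```python
import re

# Minimum iPhone models taken from the table above. Newer models are not
# enumerated here, so this set is illustrative rather than exhaustive.
SUPPORTED_IPHONE_MODELS = {"iPhone 15 Pro", "iPhone 15 Pro Max"}


def is_supported(category: str, identifier: str) -> bool:
    """Return True if the device meets the minimums listed in the table.

    `category` is "iPhone", "iPad", or "Mac" (hypothetical labels).
    `identifier` is a model name for iPhone, or a chip name for iPad and Mac.
    """
    if category == "iPhone":
        return identifier in SUPPORTED_IPHONE_MODELS
    if category in ("iPad", "Mac"):
        # Accept "M1", "M2 Pro", "M3 Max", and so on: any M-series generation >= 1.
        match = re.fullmatch(r"M(\d+)( (Pro|Max|Ultra))?", identifier)
        return match is not None and int(match.group(1)) >= 1
    return False
```

Under these assumptions, `is_supported("Mac", "M2 Max")` passes while `is_supported("iPhone", "iPhone 14 Pro")` does not, which matches the cutoff described above.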

The OpenAI Partnership and Ecosystem Reach

Recognizing that no single model can answer every query, Apple has formed a strategic partnership with OpenAI to integrate ChatGPT, powered by GPT-4o, into Siri and Writing Tools. When a user asks a question that falls outside the scope of Apple’s personal intelligence, such as “Plan a five-day itinerary for Tokyo,” Siri will ask for permission to share the query with ChatGPT.

This creates a tiered intelligence system: Apple handles personal, private data on-device or through Private Cloud Compute, while OpenAI handles the broad, world-knowledge queries in the cloud. This partnership allows Apple to offer state-of-the-art generative capabilities without having to build a massive, general-purpose LLM from scratch, which would likely have delayed the rollout by years.
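The tiering can be pictured as a small routing decision. The sketch below is a simplified illustration, not Apple's actual logic: the criteria Apple uses to choose a tier are not described here, so the `needs_world_knowledge` flag, the `complexity` score, and the `ON_DEVICE_COMPLEXITY_LIMIT` threshold are all hypothetical stand-ins.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ON_DEVICE = "on-device"
    PRIVATE_CLOUD_COMPUTE = "private-cloud-compute"
    CHATGPT = "chatgpt"
    DECLINED = "declined"  # user refused to share the query with ChatGPT


@dataclass
class Request:
    text: str
    needs_world_knowledge: bool  # hypothetical flag: outside personal context
    complexity: int              # hypothetical 0-10 score of model demand


# Hypothetical cutoff above which local hardware is assumed insufficient.
ON_DEVICE_COMPLEXITY_LIMIT = 5


def route(request: Request, user_consents: bool) -> Tier:
    """Pick a tier for a request under the assumptions stated above."""
    if request.needs_world_knowledge:
        # World-knowledge queries leave Apple's stack only with permission.
        return Tier.CHATGPT if user_consents else Tier.DECLINED
    if request.complexity <= ON_DEVICE_COMPLEXITY_LIMIT:
        return Tier.ON_DEVICE
    return Tier.PRIVATE_CLOUD_COMPUTE
```

In this sketch, a request such as "Plan a five-day itinerary for Tokyo" would be marked as needing world knowledge and would reach ChatGPT only if the user agrees, while a personal request stays on-device or goes to Private Cloud Compute depending on how demanding it is.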

However, this integration is not exclusive to OpenAI. Apple has indicated that it intends to support other third-party models in the future, suggesting a modular approach where users might eventually choose which “brain” powers their general-knowledge queries.

The rollout of these features is staggered. Initial betas began in late 2024, with a phased release of capabilities continuing through 2025. Users can check which features are available and update their devices via Settings in iOS 18 and iPadOS 18, or System Settings in macOS Sequoia.

The next major milestone will be the full public release of the second wave of Apple Intelligence features, expected in the coming months, which will further expand Siri’s ability to take actions across different apps.

Do you think on-device AI is the right move for privacy, or is the hardware requirement too restrictive? Share your thoughts in the comments below.