For years, interacting with a large language model has felt like a digital game of tennis: you hit a prompt over the net, wait for the machine to process, and then receive a response. There was always a perceptible gap—a “thinking” pause—that reminded the user they were speaking to a server in a data center, not a person. With the introduction of GPT-4o, OpenAI has effectively deleted that gap.

The “o” in GPT-4o stands for “Omni,” and the shift is more than just a branding exercise. As a former software engineer, I find the technical leap here fascinating. Earlier voice features relied on a “pipeline” approach: a speech-to-text model transcribed your words, a text-based LLM processed them, and a text-to-speech engine voiced the answer. GPT-4o, by contrast, is natively multimodal. It processes text, audio, and images within a single neural network. Because the audio never has to be flattened into a transcript, the model can pick up on nuance, tone, and emotion that the pipeline discarded along the way.
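The difference between the two designs can be sketched in a few lines of Python. This is a toy illustration, not OpenAI's implementation: the `Utterance` type and the functions `transcribe`, `chained_reply`, and `native_reply` are hypothetical names invented here, and the "models" are stand-ins that only report what information reached them.

```python
from dataclasses import dataclass


@dataclass
class Utterance:
    """Hypothetical stand-in for a spoken input."""
    words: str
    tone: str  # carried by the audio signal, not by the words themselves


def transcribe(utterance: Utterance) -> str:
    # Speech-to-text step of a chained pipeline: only the words survive.
    return utterance.words


def chained_reply(utterance: Utterance) -> str:
    # The text-only model receives the transcript, so it never sees the tone.
    text = transcribe(utterance)
    return f"heard words={text!r}, tone=unknown"


def native_reply(utterance: Utterance) -> str:
    # A natively multimodal model receives the audio itself, tone included.
    return f"heard words={utterance.words!r}, tone={utterance.tone!r}"


remark = Utterance(words="great job", tone="sarcastic")
print(chained_reply(remark))
print(native_reply(remark))
```

In the chained version the sarcasm is lost at the transcription step, which is the information loss a single end-to-end model is meant to avoid.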

The result is latency close to that of human conversation: OpenAI reports audio responses in as little as 232 milliseconds, averaging around 320 milliseconds. This isn’t just a speed upgrade; it is a fundamental shift in the user experience. For the first time, an AI can be interrupted mid-sentence, can sense the sarcasm in a user’s voice, and can respond with emotional inflection that feels startlingly organic. It moves the AI from being a tool we use to a presence we interact with.

The End of the Processing Pause

The most immediate impact of GPT-4o is the removal of the “uncanny valley” associated with AI voice assistants. In the official demonstrations, the model doesn’t just answer questions; it sings, whispers, and laughs. Because it processes audio directly, it can hear the emotional state of the user. If a user sounds stressed, the AI can adjust its tone to be more soothing; if the user is excited, the AI can mirror that energy.

This capability transforms the AI’s utility in real-world scenarios. Imagine a language learner practicing conversation with a tutor that can correct their pronunciation in real time, without the awkward delay of a transcription service. Or a professional using the AI to brainstorm a presentation, where the flow of ideas isn’t stunted by the need to wait for a text block to generate. By integrating the audio and text modalities, OpenAI has created a loop that feels intuitive rather than transactional.

Seeing the World Through the Lens

Beyond voice, GPT-4o’s vision capabilities introduce a new layer of practical utility. Using a device’s camera, the model can “see” and reason about the physical world in real time. In one demonstration, the AI helped a student solve a math problem by looking at their handwritten work on a piece of paper, guiding them through the logic rather than simply providing the answer.

This visual reasoning has profound implications for accessibility. For the visually impaired, GPT-4o could act as a sophisticated set of eyes, describing surroundings, reading menus, or identifying objects with a level of detail and context that current tools lack. The ability to synthesize visual input with immediate voice output allows the AI to act as a real-time narrator of the physical world.

However, this “always-on” vision capability also raises significant privacy concerns. The transition from a chatbot to a multimodal agent means the AI has the potential to ingest far more personal data—including the layout of a user’s home or the contents of their private documents—simply by being active in the background.

A New Standard for Global Accessibility

One of the most overlooked aspects of the GPT-4o rollout is its improved efficiency in non-English languages. By updating the tokenizer (the component that breaks text into chunks called tokens), OpenAI has reduced the number of tokens needed to represent text in many languages, which makes the model faster and cheaper to use for a broader range of global users. This reduces the “English-centric” bias that plagued earlier LLMs.
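To see why the tokenizer matters, consider a deliberately simplified sketch. Production tokenizers, including OpenAI's, are based on byte pair encoding; the greedy longest-match scheme below is a simpler stand-in chosen for readability, and both vocabularies are made up for the example. The point it illustrates is only that a vocabulary with better coverage of a language produces fewer tokens for the same sentence, and token count is what drives cost and speed.

```python
def tokenize(text: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match tokenizer (toy stand-in, not byte pair encoding).

    At each position, take the longest vocabulary entry that matches;
    fall back to a single character when nothing matches.
    """
    max_len = max((len(entry) for entry in vocab), default=1)
    tokens = []
    i = 0
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in vocab:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical vocabularies: one with poor coverage of Indonesian, one with good coverage.
sparse_vocab = {"se", "la", "pa"}
rich_vocab = {"selamat", " pagi"}

sentence = "selamat pagi"  # "good morning" in Indonesian
print(len(tokenize(sentence, sparse_vocab)))  # 9
print(len(tokenize(sentence, rich_vocab)))    # 2
```

The same twelve characters cost nine tokens under the sparse vocabulary and two under the rich one, which is the kind of saving a better-fitting tokenizer delivers for languages the old vocabulary covered poorly.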

OpenAI has made GPT-4o available to free-tier users, democratizing access to GPT-4-level capabilities that previously required a monthly subscription. While there are usage limits for free users, the move signals a strategic shift: OpenAI is no longer just selling a premium product, but is attempting to embed its “Omni” intelligence into the daily habits of as many people as possible.

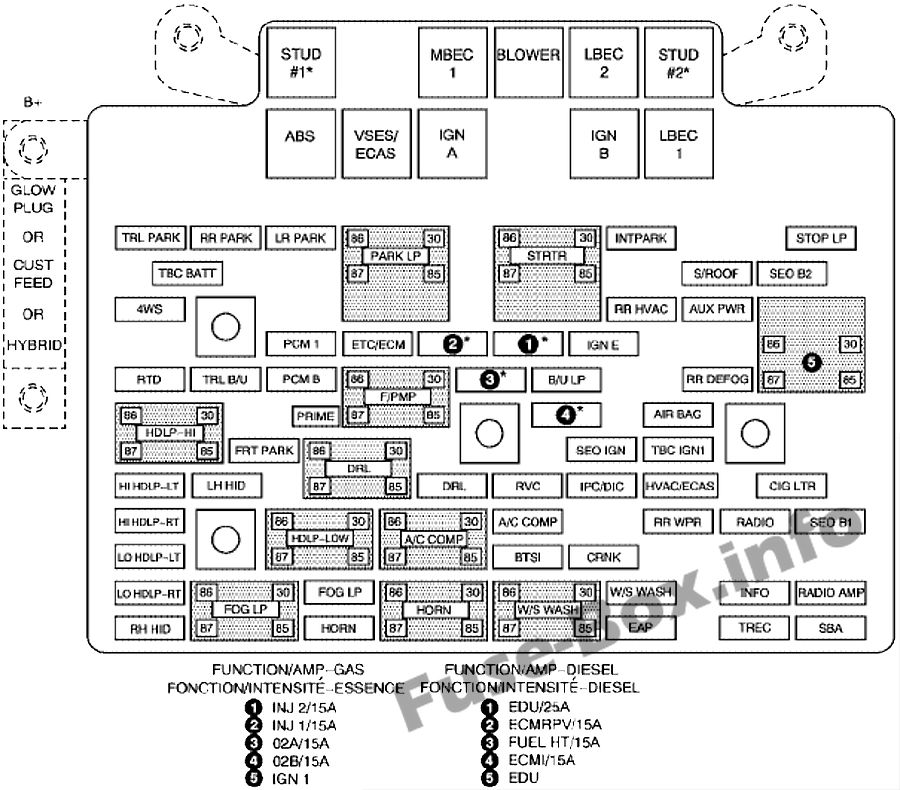

| Feature | GPT-4 Turbo | GPT-4o (Omni) |
|---|---|---|
| Architecture | Chained Modalities | Native Multimodal |
| Audio Latency | High (Multi-step) | Low (as little as ~232 ms) |
| Input Types | Text, Image | Text, Audio, Image |
| Availability | Paid / API | Free / Paid / API |
| Emotional Tone | Synthetic/Flat | Dynamic/Inflected |

The ‘Her’ Effect and the Ethics of Empathy

The fluidity of GPT-4o inevitably brings to mind the film *Her*, where a man develops a relationship with an emotionally intelligent OS. This is where the technology moves from engineering to ethics. When an AI can sound empathetic and respond with human-like warmth, the risk of emotional over-reliance increases. The “Sky” voice controversy, in which the voice was widely compared to that of actress Scarlett Johansson, highlighted the tension between creating a “human” experience and respecting human identity and consent.

The danger isn’t necessarily that the AI is sentient—it isn’t—but that humans are biologically wired to anthropomorphize things that sound and act like us. As we move toward AI agents that can act as companions, tutors, and assistants, the industry must grapple with the psychological impact of these “synthetic relationships.”

The next major milestone for GPT-4o will be the full rollout of the “Advanced Voice Mode” to all Plus users, which will allow for the seamless, low-latency interactions showcased in the initial reveal. As OpenAI continues to refine the model’s ability to handle complex visual and auditory tasks, the industry will be watching closely to see how these capabilities are balanced with user privacy and safety guardrails.
