Apple has spent the last year watching the generative AI gold rush from the sidelines, but the company is now stepping into the fray with a strategy that prioritizes personal context over raw data processing. Rather than launching a standalone chatbot to compete with the likes of Google Gemini or OpenAI’s ChatGPT, the company is introducing “Apple Intelligence,” a systemic integration of AI designed to weave directly into the fabric of iOS, iPadOS, and macOS.

The pivot marks a fundamental shift in how the company views the relationship between a user and their device. By leveraging on-device processing and a new architectural approach to the cloud, Apple is attempting to solve the primary tension of the AI era: the trade-off between the utility of large language models and the sanctity of user privacy. For the millions of users embedded in the Apple ecosystem, this isn’t just a new feature set; it is a redesign of the interface through which they interact with their digital lives.

At its core, Apple Intelligence focuses on “personal intelligence.” While traditional LLMs are trained on the broad expanse of the internet, Apple’s system is designed to understand the user’s specific world—their emails, calendar events, messages, and files. This allows the system to perform complex tasks, such as summarizing a long thread of messages or finding a specific flight detail buried in a confirmation email, without the user having to manually search for the data.

A New Brain for Siri

The most visible beneficiary of this overhaul is Siri. For years, the virtual assistant has been criticized for its rigidity and lack of contextual awareness. Apple Intelligence aims to fix this by giving Siri “onscreen awareness,” allowing it to understand what the user is currently looking at and take action based on that context. For example, if a friend texts an address, a user can simply tell Siri to “add this to my contact card,” and the assistant will identify the address on the screen and execute the command.

The updated Siri also features improved linguistic capabilities, allowing it to handle stutters or mid-sentence corrections more naturally. However, Apple acknowledges that its own models cannot answer every query. To fill this gap, the company has established a partnership with OpenAI to integrate ChatGPT (specifically GPT-4o). When Siri determines a request requires broad world knowledge—such as writing a complex meal plan or a detailed travel itinerary—it will ask the user for permission to share the query with ChatGPT. This opt-in model ensures that the third-party AI is used as a tool rather than a default replacement for the system’s internal logic.

Creative Tools and Generative Utility

Beyond the assistant, Apple is introducing a suite of generative tools designed to reduce the friction of daily digital communication. “Writing Tools” are being integrated system-wide, allowing users to rewrite text for different tones—professional, concise, or friendly—and proofread documents across Mail, Notes, and third-party apps. The system can also generate summaries of long articles or highlight the most essential points in a notification stack.

On the visual side, the company is introducing “Image Playground,” an app and system tool that allows users to create images in three distinct styles: Sketch, Illustration, and Animation. This is complemented by “Genmoji,” which allows users to generate entirely custom emojis based on a text prompt, filling the gap when a standard Unicode emoji cannot express a specific sentiment or person.

The Privacy Architecture: Private Cloud Compute

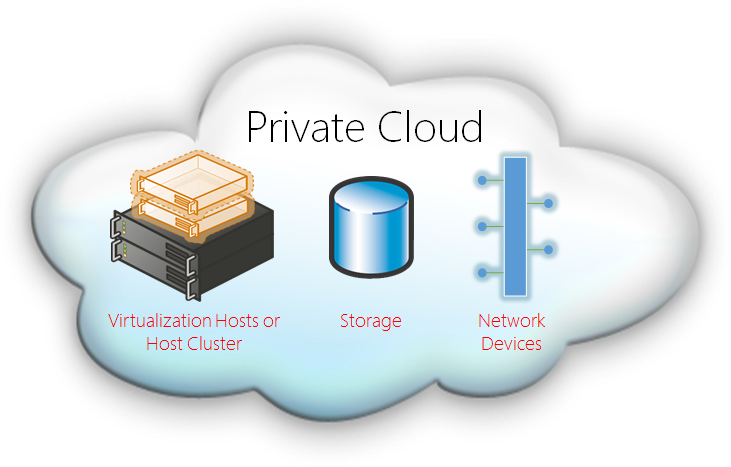

The most significant technical hurdle for any AI integration is privacy. To address this, Apple is deploying a hybrid model. Most tasks are handled on-device, meaning the data never leaves the hardware. For more complex requests that require more compute power than a phone or laptop can provide, Apple is introducing “Private Cloud Compute” (PCC).

Unlike traditional cloud AI, where data is often stored or used to train future models, PCC utilizes Apple-silicon-powered servers designed to ensure that user data is never stored or accessible to Apple. The company has committed to allowing independent security researchers to verify the code running on these servers, aiming to create a system that is open to outside inspection while providing the power of the cloud with the privacy of an on-device chip.
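To make the tiers concrete, the routing described in this article can be sketched as a small decision function. This is only an illustration of the reported behavior, not Apple's implementation: the `Request` fields, the `ON_DEVICE_BUDGET` threshold, and the `NOT_SHARED` outcome are all hypothetical names invented here, and the article does not say how the real system estimates complexity or what happens when a user declines.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on_device"
    PRIVATE_CLOUD_COMPUTE = "private_cloud_compute"
    CHATGPT = "chatgpt"
    NOT_SHARED = "not_shared"  # hypothetical outcome when the user declines


@dataclass
class Request:
    text: str
    needs_world_knowledge: bool  # hypothetical flag: broad, non-personal query
    compute_cost: int            # hypothetical unit-less estimate of work


# Hypothetical threshold; the article gives no real figure.
ON_DEVICE_BUDGET = 10


def route(request: Request, user_consents_to_chatgpt: bool) -> Route:
    """Pick a processing tier for a request, following the article's description."""
    # Broad world-knowledge queries go to ChatGPT only with explicit permission.
    if request.needs_world_knowledge:
        return Route.CHATGPT if user_consents_to_chatgpt else Route.NOT_SHARED
    # Most personal-context tasks stay on the device.
    if request.compute_cost <= ON_DEVICE_BUDGET:
        return Route.ON_DEVICE
    # Heavier personal-context tasks fall back to Private Cloud Compute.
    return Route.PRIVATE_CLOUD_COMPUTE


if __name__ == "__main__":
    print(route(Request("Summarize this message thread", False, 3), False))
    print(route(Request("Plan a detailed travel itinerary", True, 50), True))
```

The point of the ordering is that the third-party path is gated on consent before anything else is considered, while the on-device path remains the default for personal data.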

However, this sophisticated architecture comes with a high hardware cost. The requirements for Apple Intelligence are stringent, effectively cutting off older devices that lack the necessary neural engine capabilities and RAM.

| Device Category | Minimum Requirement | Supported Models |
|---|---|---|
| iPhone | A17 Pro Chip / 8GB RAM | iPhone 15 Pro, 15 Pro Max, and later |
| iPad | M1 Chip or later | iPad Air (M1+), iPad Pro (M1+) |
| Mac | M1 Chip or later | All M-series MacBook Air, Pro, iMac, Mac mini, Mac Studio |

The Implementation Gap

While the vision is expansive, the rollout is gradual. Apple Intelligence is not a single “flip of the switch” update but a phased release. Initially launching in U.S. English, the features are rolling out via developer and public betas (starting with iOS 18.1). This staggered approach allows Apple to monitor the stability of Private Cloud Compute and refine the integration of GPT-4o.

The primary constraint remains the hardware. By limiting these features to the most recent Pro-tier iPhones and M-series chips, Apple is creating a stark divide in the user experience. Users with an iPhone 15 (base model) or older will not have access to the core Apple Intelligence features, potentially accelerating a hardware upgrade cycle.

As Apple moves toward a wider global release, the next major checkpoint will be the expansion of language support beyond U.S. English and the full public release of iOS 18.1, which will bring the first wave of these tools to the general consumer market.
