For the better part of two years, the artificial intelligence race has been defined by a quest for raw intelligence—the ability of a model to reason, code, and converse with human-like fluency. But with the unveiling of Gemini 1.5 Pro, Google is shifting the goalposts. The focus is no longer just on how a model thinks, but on how much it can “remember” in a single session.

The breakthrough at the center of Google’s latest release is a massive expansion of the “context window,” the amount of data a model can process and hold in its active memory at one time. While leading rivals such as GPT-4 Turbo and Claude 3 Opus offer windows of 128,000 to 200,000 tokens, Gemini 1.5 Pro introduces a window of up to 1 million tokens, with some internal tests pushing toward 2 million. In practical terms, this means the AI can ingest and analyze an entire codebase, an hour of video, or thousands of pages of text in a single prompt.

This evolution represents a fundamental change in how users interact with Large Language Models (LLMs). Rather than relying on Retrieval-Augmented Generation (RAG), a process in which a system searches a database for relevant snippets to feed the AI, Gemini 1.5 Pro can effectively “read” the entire library. This reduces the risk of the AI missing critical context that a retrieval step failed to surface, and it allows for a level of complex, cross-document synthesis that snippet-based pipelines struggle to support.
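To make the trade-off concrete, here is a toy sketch of how the two approaches assemble a prompt. It is not how any production RAG system or Gemini itself works: real pipelines typically rank chunks with embeddings, whereas this example uses a crude word-overlap score, and the function names (`score`, `rag_prompt`, `long_context_prompt`) and sample documents are invented purely for illustration.

```python
def score(chunk, query):
    """Toy relevance score: number of distinct query words found in the chunk."""
    query_words = set(query.lower().split())
    chunk_words = set(chunk.lower().split())
    return len(query_words & chunk_words)

def rag_prompt(chunks, query, top_k=2):
    """Retrieval-style prompt: keep only the top_k highest-scoring chunks."""
    ranked = sorted(chunks, key=lambda c: score(c, query), reverse=True)
    return "\n".join(ranked[:top_k]) + "\n\nQuestion: " + query

def long_context_prompt(chunks, query):
    """Long-context prompt: send every chunk and let the model sort it out."""
    return "\n".join(chunks) + "\n\nQuestion: " + query

# Hypothetical documents: the decisive fact shares no words with the query.
chunks = [
    "The payment service retries failed charges three times.",
    "Retries are scheduled by the job runner described in the ops guide.",
    "The job runner is disabled at weekends.",
    "Office plants are watered on Fridays.",
]
query = "Why do failed charges not retry on Saturday?"

print(rag_prompt(chunks, query))
print("---")
print(long_context_prompt(chunks, query))
```

In this contrived case the retrieval prompt drops the sentence about the job runner being disabled at weekends, because it has no words in common with the question, while the long-context prompt keeps it. A better retriever would narrow that gap, which is part of why the RAG-versus-long-context question remains open.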

The Architecture: Moving to Mixture-of-Experts

To achieve this scale without requiring an impossible amount of computing power for every single query, Google has transitioned Gemini 1.5 Pro to a “Mixture-of-Experts” (MoE) architecture. In traditional dense models, every part of the neural network is activated for every request. MoE changes this by dividing the model into smaller, specialized sub-networks.

As the model processes a prompt, a routing mechanism activates only the most relevant “experts” for each piece of input rather than the whole network. This efficiency allows the model to maintain a level of performance that Google describes as comparable to the larger Gemini 1.0 Ultra, while being significantly faster and more cost-effective to run. It is the difference between asking a general practitioner to perform a complex surgery and bringing in a curated team of specialists for a specific operation.
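Google has not published the routing details of Gemini 1.5 Pro, so the following is only a minimal sketch of generic top-k expert routing as it is commonly described for MoE models. The names `top_k_route` and `moe_layer`, the four toy experts, and the gate weights are all assumptions made for illustration.

```python
import math

def softmax(scores):
    """Convert raw gate scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_scores, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_layer(x, gate_weights, experts, k=2):
    """Run input vector x through only the k experts chosen by the gate."""
    # One gate score per expert: dot product of that expert's gate row with x.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routes = top_k_route(scores, k)
    out = [0.0] * len(x)
    for idx, weight in routes:
        y = experts[idx](x)  # only the selected experts are ever evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, [idx for idx, _ in routes]

# Toy setup (illustrative): four "experts" that simply scale their input.
experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]
gate_weights = [[1, 0], [0, 1], [-1, 0], [0, -1]]

output, chosen = moe_layer([2.0, 1.0], gate_weights, experts, k=2)
print("experts used:", chosen)
print("output:", output)
```

The point of the sketch is the cost profile: with `k=2`, two of the four experts never run for this input, so compute per input scales with `k` rather than with the total number of experts.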

Breaking the ‘Needle in a Haystack’ Barrier

One of the primary challenges with large context windows is the “lost in the middle” phenomenon, where AI models overlook information buried in the center of a massive dataset. Google claims Gemini 1.5 Pro largely avoids this problem, pointing to its results on “needle in a haystack” tests. In these evaluations, a specific, random piece of information is placed inside a massive block of text; the model is then asked to retrieve it.

According to Google’s data, Gemini 1.5 Pro maintains near-perfect retrieval accuracy across the entire 1-million-token range. This capability is critical for professional applications, such as legal discovery or medical research, where a single missed sentence in a 500-page document can change the entire outcome of an analysis.
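The shape of such an evaluation can be sketched in a few lines. This is not Google's test harness: `build_haystack` and `run_needle_test` are hypothetical helper names, and `toy_model` is a deliberately weak stand-in for a real LLM call that only "sees" the start and end of its prompt, which mimics the lost-in-the-middle failure.

```python
import re

def build_haystack(filler_sentences, needle, depth):
    """Insert `needle` at a fractional depth (0.0 = start, 1.0 = end)."""
    position = round(depth * len(filler_sentences))
    return filler_sentences[:position] + [needle] + filler_sentences[position:]

def run_needle_test(ask_model, filler_sentences, needle, question, expected, depths):
    """Return {depth: True/False} for whether the model recovered the needle."""
    results = {}
    for depth in depths:
        context = " ".join(build_haystack(filler_sentences, needle, depth))
        answer = ask_model(context + "\n\n" + question)
        results[depth] = expected.lower() in answer.lower()
    return results

def toy_model(prompt, window=400):
    """Stand-in for an LLM: reads only the first and last `window` characters."""
    visible = prompt[:window] + prompt[-window:]
    match = re.search(r"magic number is (\d+)", visible)
    return match.group(1) if match else "I don't know"

filler = ["The sky was grey that morning."] * 200
results = run_needle_test(
    ask_model=toy_model,
    filler_sentences=filler,
    needle="The magic number is 7421.",
    question="What is the magic number?",
    expected="7421",
    depths=[0.0, 0.5, 1.0],
)
print(results)
```

The toy model finds the needle at the start and end of the haystack but misses it at depth 0.5. Swapping `toy_model` for a call to a real model, and sweeping both depth and haystack length, gives the kind of grid that vendors report for these tests.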

Practical Applications and Multimodal Reasoning

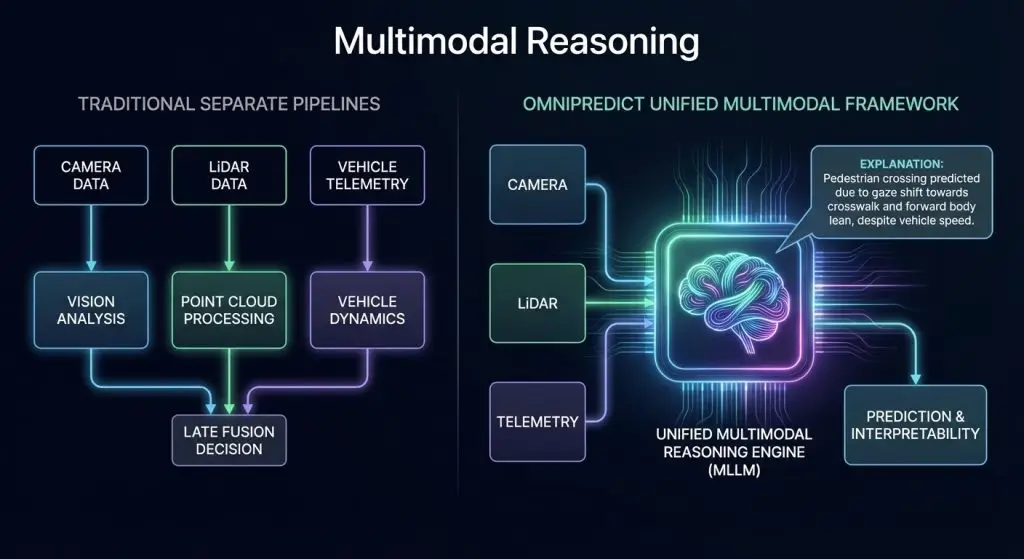

The implications of a million-token window extend far beyond text. Because Gemini 1.5 Pro is natively multimodal, it treats video and audio as first-class citizens. A user can upload a one-hour video file, and the model can reason across the entire timeline, identifying specific visual cues or summarizing a complex narrative without needing a pre-written transcript.

For software engineers, the impact is even more immediate. The ability to ingest an entire codebase—thousands of lines of logic across dozens of files—allows the AI to understand the global architecture of a project. This enables the model to suggest bug fixes that account for dependencies in distant parts of the code, a task that previously required the developer to manually feed the AI small, fragmented snippets of the project.

| Model | Context Window (Tokens) | Equivalent Data Capacity |
|---|---|---|
| GPT-4 Turbo | 128,000 | ~300 pages of text |
| Claude 3 (Opus) | 200,000 | ~500 pages of text |
| Gemini 1.5 Pro | 1,000,000+ | ~700,000 words / 1 hour of video |
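The page and word figures in the table are rough conversions, and the arithmetic behind them can be sketched as follows. Both constants are assumptions: 0.7 words per token is implied by the table's own "1,000,000 tokens ≈ 700,000 words" row, and 300 words per page is a common rule of thumb for a page of prose. Actual ratios vary by tokenizer, language, and layout.

```python
WORDS_PER_TOKEN = 0.7  # assumption: implied by 1,000,000 tokens ~ 700,000 words
WORDS_PER_PAGE = 300   # assumption: rule-of-thumb page of English prose

def estimate_capacity(tokens):
    """Return (approx_words, approx_pages) for a given token budget."""
    words = tokens * WORDS_PER_TOKEN
    pages = words / WORDS_PER_PAGE
    return round(words), round(pages)

for name, tokens in [
    ("GPT-4 Turbo", 128_000),
    ("Claude 3 (Opus)", 200_000),
    ("Gemini 1.5 Pro", 1_000_000),
]:
    words, pages = estimate_capacity(tokens)
    print(f"{name}: ~{words:,} words, ~{pages:,} pages")
```

Under these assumptions the three windows come out to roughly 299, 467, and 2,333 pages, which lines up with the rounded figures in the table and with the "thousands of pages" claim for Gemini 1.5 Pro.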

The Strategic Stakes in the AI Arms Race

Google’s move is a direct challenge to OpenAI and Anthropic. While GPT-4 and Claude 3 have focused heavily on nuance and “steerability,” Gemini 1.5 Pro bets on scale. By expanding the context window, Google is attempting to make the AI an indispensable tool for enterprise-level data analysis, where the volume of information is the primary bottleneck.

However, the rollout comes at a time of intense scrutiny. The industry is grappling with the environmental cost of training these massive models and the ongoing legal battles over the data used to feed them. While MoE architecture improves efficiency, the sheer scale of data processing required for million-token prompts still places a significant load on data centers.

What Remains Unknown

Despite the technical milestones, several questions remain. First is the latency associated with processing 1 million tokens; while the model can *handle* the data, the time it takes to generate a response for a massive prompt can still be substantial. Second is the cost for API users, as processing up to a million tokens per request could prove prohibitively expensive for smaller developers if not priced aggressively.
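The cost concern is easy to quantify once prices are known. The sketch below shows the arithmetic only; the per-million-token prices are hypothetical placeholders, not Google's pricing, and `estimate_request_cost` is an illustrative helper rather than part of any SDK.

```python
def estimate_request_cost(input_tokens, output_tokens,
                          input_price_per_million, output_price_per_million):
    """Estimate the cost of one API request from per-million-token prices."""
    input_cost = (input_tokens / 1_000_000) * input_price_per_million
    output_cost = (output_tokens / 1_000_000) * output_price_per_million
    return input_cost + output_cost

# Hypothetical prices in dollars per million tokens (placeholders only).
HYPOTHETICAL_INPUT_PRICE = 5.00
HYPOTHETICAL_OUTPUT_PRICE = 15.00

# One request that fills the full 1-million-token window and returns
# a 1,000-token answer.
per_request = estimate_request_cost(
    1_000_000, 1_000, HYPOTHETICAL_INPUT_PRICE, HYPOTHETICAL_OUTPUT_PRICE
)
per_day = per_request * 200  # assumption: 200 such requests per day

print(f"per request: ${per_request:.3f}")
print(f"per day at 200 requests: ${per_day:,.2f}")
```

Whatever the real prices turn out to be, the structure of the calculation shows why full-window prompts matter for budgeting: the input side dominates, and it is paid again on every request that resends the same large context.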

For those looking to track the rollout, official updates and API documentation are available from Google DeepMind and in Google AI Studio.

The next critical checkpoint for the Gemini ecosystem will be the wider public release of the 1.5 Pro model via the API and its deeper integration into Google Workspace. As the model moves from a limited preview to a general-availability tool, the industry will see whether the “million-token” advantage translates into a dominant market position or if competitors can quickly bridge the gap in memory capacity.

We want to hear from the developers and analysts in our community. Does a larger context window replace your need for RAG pipelines, or is it just a luxury? Share your thoughts in the comments below.