Instagram is expanding its most stringent safety measures to a global audience, automatically placing users under the age of 18 into specialized “Teen Accounts.” This systemic overhaul moves beyond optional settings, implementing a baseline of protections designed to limit exposure to sensitive content and restrict who can contact minors on the platform.

The rollout centers on a new “13+” content standard, which serves as a default filter to shield teenagers from inappropriate material. By integrating these restrictions into the core account architecture, Meta is attempting to standardize child safety across different jurisdictions, responding to mounting pressure from regulators and parents worldwide regarding the impact of social media on adolescent mental health.

For those of us who have spent years in software engineering, this shift represents a significant move toward “safety by design.” Rather than relying on users, or their parents, to navigate complex privacy menus, the platform now shifts the burden of protection onto the system itself. As a result, for millions of teenagers the Instagram experience differs fundamentally from that of an adult user from the moment the account is created.

The “13+” Standard and Content Filtering

At the heart of this update is the automatic application of the most restrictive sensitive content settings. Under the new 13+ standards, Instagram’s algorithms are tuned to filter out content that may be inappropriate for minors, including suggestive imagery and graphic violence, even if the content does not explicitly violate the platform’s general community guidelines.

This filtering system applies to the “Explore” page, Reels, and suggested posts. By restricting the visibility of “borderline” content (material that does not violate the rules outright but is unsuitable for teens), Meta aims to create a more sanitized environment. While the platform has long had content controls, applying these restrictions globally is intended to give a teenager in France or Brazil the same baseline protection as one in the United States.

Default Privacy and Messaging Constraints

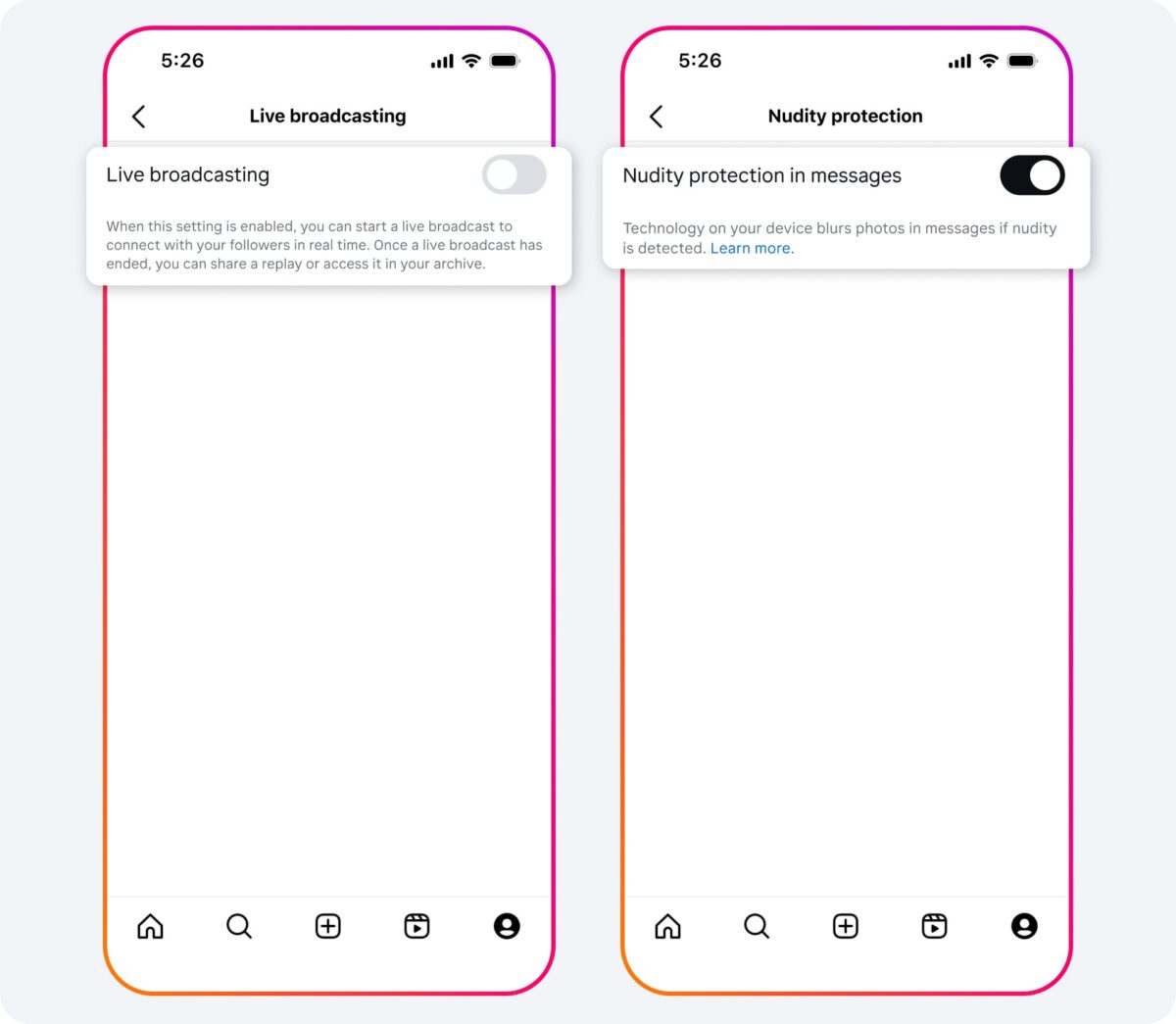

The transition to Teen Accounts introduces a “private by default” mandate. When a user under 18 creates an account, it is automatically set to private, meaning only approved followers can see their posts or stories. A teen’s profile is therefore no longer visible to the entire internet by default.

Messaging has also seen a critical update to prevent unsolicited contact from strangers. Teen accounts are now restricted to messaging people they already follow. This effectively blocks “cold” direct messages from adult accounts that the teenager has not explicitly opted to follow, closing a common vector for online grooming and harassment.

To provide a clear picture of how these changes alter the user experience, the following table outlines the primary differences between a standard account and the new Teen Account configuration:

| Feature | Standard Account (18+) | Teen Account (Under 18) |
|---|---|---|
| Account Privacy | User Choice (Public/Private) | Private by Default |
| Messaging | Open to Requests | Only People the Teen Follows |
| Content Filtering | Standard Guidelines | Strict “13+” Filtering |
| Account Setup | Manual Configuration | Automatic Enrollment |
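For readers who think in code, the defaults in the table above can be sketched as a small age-based configuration function. This is a minimal illustration, not Meta's implementation: `AccountSettings`, `default_settings_for`, `may_message`, and the string values are all hypothetical names, and the sketch assumes an adult account starts out public until the user chooses otherwise.

```python
from dataclasses import dataclass

ADULT_AGE = 18  # age threshold described in the article


@dataclass(frozen=True)
class AccountSettings:
    """Hypothetical settings record; field names are illustrative only."""
    is_private: bool
    messaging: str       # "open_to_requests" or "followed_only"
    content_filter: str  # "standard" or "13+"
    teen_account: bool


def default_settings_for(age: int) -> AccountSettings:
    """Return the defaults applied at account creation for a given age."""
    if age < ADULT_AGE:
        # Teen Account: private, restricted messaging, strict filtering.
        return AccountSettings(True, "followed_only", "13+", True)
    # Assumption: adults start public and can switch to private themselves.
    return AccountSettings(False, "open_to_requests", "standard", False)


def may_message(sender: str, recipient: AccountSettings,
                recipient_follows: set[str]) -> bool:
    """Allow a message unless the recipient only accepts accounts they follow."""
    if recipient.messaging == "followed_only":
        return sender in recipient_follows
    return True
```

The point of the sketch is that the protective state is derived from the user's age at creation time rather than from a menu the user has to find, which is what “safety by design” amounts to in practice.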

Parental Supervision and Oversight Tools

While the system automates many protections, Meta has introduced a suite of supervision tools that allow parents to maintain a level of oversight. These tools are not automatic; they require a request from the parent and acceptance from the teen, maintaining a balance between safety and adolescent autonomy.

Parents can now set daily time limits and schedule “sleep mode” hours, during which notifications are silenced and the app encourages the user to log off. Supervising parents can also see which accounts their teen follows and which accounts follow their teen, providing a window into the teen’s digital social circle without requiring the teen’s password.
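A scheduled quiet period such as “sleep mode” usually spans midnight, which is the one detail that makes the check non-trivial. The sketch below shows one way such a window and a daily limit could be evaluated; the function names and the example hours are assumptions made for illustration and do not come from Instagram.

```python
from datetime import time


def in_sleep_mode(now: time, start: time, end: time) -> bool:
    """Return True if `now` falls inside the window [start, end)."""
    if start <= end:
        # Window within a single day, e.g. 13:00 to 15:00.
        return start <= now < end
    # Window that wraps past midnight, e.g. 22:00 to 07:00.
    return now >= start or now < end


def over_daily_limit(minutes_used: int, limit_minutes: int) -> bool:
    """Return True once the configured daily allowance is used up."""
    return minutes_used >= limit_minutes
```

With a hypothetical window of 22:00 to 07:00, a check at 23:30 falls inside the window and a check at noon does not.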

For teenagers who wish to opt out of these restrictions, the process is intentionally rigorous. To revert to a standard account, a teen must obtain parental permission, which is verified through the supervision tools. This prevents users from simply toggling off the safety features to regain access to unrestricted content.
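The opt-out rule described above is essentially a gated state change: the protections stay on unless a linked supervisor approves. A minimal sketch of that logic follows, assuming a hypothetical `TeenAccount` record and `request_opt_out` function; how Meta actually verifies parental permission is not described in detail here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TeenAccount:
    """Hypothetical account record used only for this illustration."""
    username: str
    supervisor: Optional[str] = None  # linked parent account, if any
    protections_on: bool = True


def request_opt_out(account: TeenAccount, approved_by: Optional[str]) -> bool:
    """Disable protections only if the linked supervisor approved the request."""
    if account.supervisor is None or approved_by != account.supervisor:
        # No linked parent, or approval came from someone else: deny.
        return False
    account.protections_on = False
    return True
```

Note that in this sketch an account with no linked supervisor can never opt out, which mirrors the article's point that the teen cannot simply toggle the protections off alone.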

The Regulatory Push for Digital Safety

This pivot is not happening in a vacuum: Meta is facing unprecedented scrutiny from governments worldwide. In the European Union, the Digital Services Act (DSA) has placed strict requirements on platforms to mitigate systemic risks to minors. Similarly, in the U.S., multiple state-level bills and federal inquiries have targeted the “addictive” nature of algorithms and the lack of age-appropriate safeguards.

From a technical perspective, the biggest challenge remains age verification. While Instagram uses AI to estimate age and encourages users to upload IDs or use “video selfies” to verify their birth dates, the system is not foolproof. Many users still bypass age gates by entering false birth years. The effectiveness of the “Teen Accounts” rollout depends heavily on Meta’s ability to accurately identify who is actually under 18.

The industry is now watching to see if these “hard-coded” restrictions will actually reduce the prevalence of harmful content among minors or if users will find new workarounds. As platforms move toward more prescriptive safety models, the tension between user privacy and child protection will only increase.

The next significant milestone for these policies will be the upcoming regulatory reviews in the EU and the U.S., where officials will evaluate whether these automatic restrictions sufficiently meet the legal requirements for child safety. Further updates on age verification accuracy and the impact of these filters are expected in Meta’s next quarterly transparency report.
