For years, the act of “Googling” something was a linear transaction: you typed a query, you received a list of blue links, and you did the heavy lifting of synthesizing the answer. But the latest wave of announcements from Google signals a fundamental pivot in the relationship between humans and information. We are moving away from the era of the search engine and entering the era of the AI agent.

The overarching theme of these updates, framed by the evocative call to “press start” on a new technological epoch, is the deep integration of the Gemini ecosystem into every facet of the digital experience. From the way we navigate our smartphones to the way we conceptualize video production, Google is no longer just organizing the world’s information; it is attempting to interpret and generate it in real time.

As a culture critic who has watched the trajectory of tech-integrated art and media for a decade, I see these as more than incremental software updates. They are architectural changes to the internet. By shifting from a retrieval-based system to a generative one, Google is redefining the “start” button for the modern web, placing an AI collaborator between the user and the source material.

The Death of the Blue Link: AI Overviews

The most visible shift for the average user is the rollout of AI Overviews. Rather than forcing users to click through multiple websites to compile a comprehensive answer, Google now provides a synthesized summary at the top of the search results page. This “snapshot” uses Gemini to pull information from across the web, offering a cohesive narrative answer to complex queries.

While this increases efficiency for the user, it creates a precarious moment for the open web. Publishers and creators, who rely on click-through traffic to sustain their businesses, are now facing a “zero-click” reality. The tension here is palpable: Google provides the convenience of an immediate answer, but in doing so, it risks starving the very sources that provide the data the AI needs to learn.

To mitigate this, Google has integrated citations directly into the AI Overviews, though the efficacy of these links in driving meaningful traffic remains a subject of intense debate among digital strategists and journalists.

Project Astra: The Universal Assistant

Beyond the search bar, the most ambitious reveal is Project Astra. This is Google’s vision for a “universal AI agent” that can see, hear, and remember. Unlike previous voice assistants that felt like glorified timers or weather reports, Astra is multimodal. In demonstrations, the AI could identify a piece of code on a computer screen, find a misplaced pair of glasses in a room via a phone camera, and engage in fluid, natural conversation without the jarring latency of previous iterations.

The implications for accessibility and productivity are staggering. Astra transforms the smartphone from a tool we consult into a companion that observes. For the visually impaired, this could mean a real-time narrator of the physical world; for the professional, it means an assistant that remembers where a specific detail was mentioned in a three-hour meeting.

The New Creative Suite: Veo and Imagen 3

Google is also making a concerted push into the generative media space to compete with the likes of OpenAI’s Sora and Midjourney. The introduction of Veo, a high-definition video generation model, and Imagen 3, its most advanced text-to-image tool, marks a shift toward professional-grade creative tools.

Veo can produce 1080p video and responds to cinematic terms in a prompt, such as “timelapse” or “aerial shot,” allowing creators to iterate on visual ideas without the need for an immediate production budget. Imagen 3 focuses on photorealism and on a long-standing weakness of AI image models: rendering accurate text within images.

However, these tools bring renewed scrutiny regarding copyright and the authenticity of digital media. As the line between captured reality and generated imagery blurs, Google’s commitment to SynthID—a tool for watermarking AI-generated content—will be the litmus test for the company’s ethical framework.

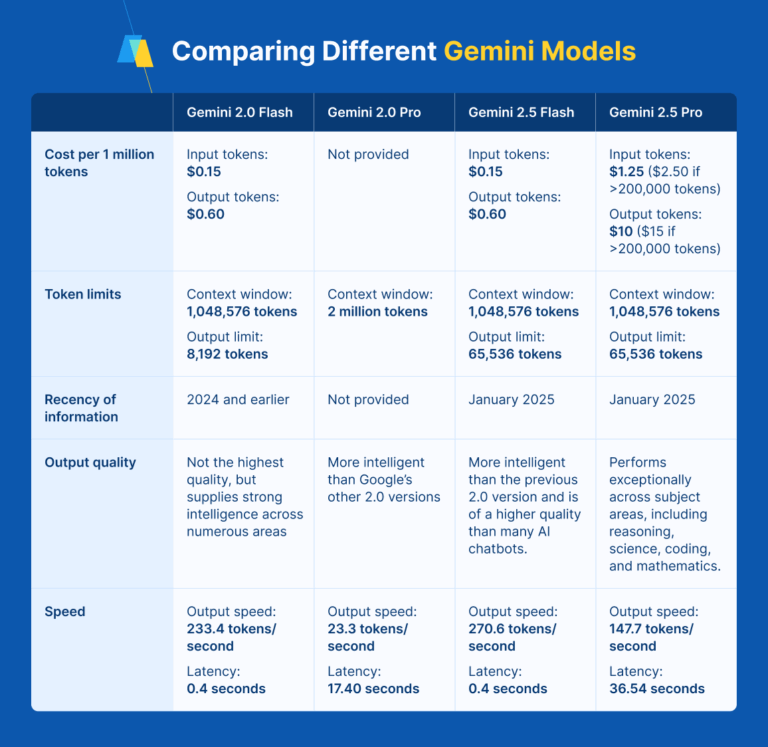

Comparing the Gemini Model Hierarchy

To power these diverse experiences, Google has streamlined its AI offerings into a tiered system, ensuring that the right amount of computing power is applied to the right task.

| Model | Primary Strength | Ideal Use Case |
|---|---|---|
| Gemini Ultra | Highly complex reasoning | Scientific research, advanced coding |
| Gemini Pro | Versatility & Scale | General purpose assistance, long-context analysis |
| Gemini Flash | Speed & Efficiency | Real-time chat, high-frequency API tasks |
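
For readers who think in code, the tiering idea can be sketched as a simple lookup. This is only an illustration of "the right amount of computing power for the right task," not how Google routes requests: the `Tier` enum, the `USE_CASES` mapping, and the `pick_tier` function are hypothetical names, and the task labels are taken from the "Ideal Use Case" column above.

```python
from enum import Enum


class Tier(Enum):
    """The three tiers named in the table (labels only, not API model IDs)."""
    ULTRA = "Gemini Ultra"
    PRO = "Gemini Pro"
    FLASH = "Gemini Flash"


# Hypothetical mapping built from the "Ideal Use Case" column of the table.
USE_CASES = {
    "scientific research": Tier.ULTRA,
    "advanced coding": Tier.ULTRA,
    "general purpose assistance": Tier.PRO,
    "long-context analysis": Tier.PRO,
    "real-time chat": Tier.FLASH,
    "high-frequency api tasks": Tier.FLASH,
}


def pick_tier(task: str, default: Tier = Tier.PRO) -> Tier:
    """Return the tier listed for a task, falling back to the general-purpose tier."""
    return USE_CASES.get(task.strip().lower(), default)


if __name__ == "__main__":
    for task in ("Real-time chat", "Advanced coding", "Writing a poem"):
        print(f"{task}: {pick_tier(task).value}")
```

Defaulting unknown tasks to the middle tier is an assumption made for the sketch; it mirrors the table's description of Pro as the general-purpose option.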

The Android Integration: AI as the OS

The final piece of the puzzle is the hardware integration. Google is effectively replacing the traditional Google Assistant with Gemini across the Android ecosystem. Features like “Circle to Search”—which allows users to highlight anything on their screen to trigger a search—demonstrate a move toward a more intuitive, gesture-based interface.

The goal is “contextual awareness.” By allowing Gemini to access the data across different apps—your emails, your calendar, your documents—the AI can perform complex tasks, such as “Find the flight details in my Gmail and add a reminder to pack my passport two days before.” This removes the friction of app-switching, making the operating system itself the primary interface.
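
To make the passport example concrete, here is a minimal sketch of the one step that is pure arithmetic: turning a flight date into a reminder date. Everything here is hypothetical. The `FlightDetails` class and `packing_reminder` function are invented for illustration, and the hard part (finding and parsing the flight email) is assumed to have already happened; this is not Gemini's or Android's actual interface.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class FlightDetails:
    """Hypothetical result of extracting a booking from an email."""
    flight_number: str
    departure: date


def packing_reminder(flight: FlightDetails, days_before: int = 2) -> date:
    """Return the date on which the 'pack my passport' reminder should fire."""
    if days_before < 0:
        raise ValueError("days_before must not be negative")
    return flight.departure - timedelta(days=days_before)


if __name__ == "__main__":
    # Made-up booking used purely as sample input.
    flight = FlightDetails(flight_number="XX123", departure=date(2024, 7, 10))
    print(packing_reminder(flight))
```

The point of the sketch is how little of the task is calculation: the value of an assistant like the one described lies in reading the email and writing to the calendar, the two steps left out here.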

For the stakeholders—developers, advertisers, and users—this represents a massive redistribution of power. The “entry point” to the internet is no longer a URL or an app icon; it is a conversation with an AI that has a holistic view of the user’s digital life.

The next confirmed checkpoint for this rollout is the continued expansion of AI Overviews to more global markets and the official public release of Project Astra’s capabilities into the Gemini app, expected in phases throughout the coming months.

Do you think AI-synthesized search results help or hurt the future of independent journalism?