Microsoft is fundamentally shifting the role of artificial intelligence in the workplace, moving away from the traditional “chatbot” model toward a system of autonomous agents capable of executing complex tasks 24/7 in the background. This evolution marks a transition from reactive AI—where a user asks a question and receives an answer—to proactive AI, where agents monitor data streams and trigger actions without constant human intervention.

The strategy centers on deploying Microsoft Copilot agents that run background tasks and are designed to operate independently within the Microsoft 365 ecosystem. Unlike the standard Copilot interface, these agents can be programmed to watch for specific triggers, such as a new lead entering a CRM or a change in a supply chain spreadsheet, and then execute a predefined series of steps to resolve the issue or update the relevant stakeholders.
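To make the "trigger, then predefined steps" pattern concrete, here is a minimal Python sketch. It is not Copilot Studio's API: the `Event`, `TriggerRouter`, and step names, along with the `"crm.new_lead"` event type, are all hypothetical. The sketch only shows the shape of the idea: an event type maps to an ordered list of steps, and each step passes its updated state to the next.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Event:
    kind: str      # hypothetical event type, e.g. "crm.new_lead"
    payload: dict

# A step takes the working state and returns the updated state.
Step = Callable[[dict], dict]

@dataclass
class TriggerRouter:
    routes: dict[str, list[Step]] = field(default_factory=dict)

    def on(self, kind: str, *steps: Step) -> None:
        """Register an ordered series of steps for one trigger type."""
        self.routes.setdefault(kind, []).extend(steps)

    def dispatch(self, event: Event) -> dict | None:
        """Run the steps for a matching trigger; ignore unrelated events."""
        steps = self.routes.get(event.kind)
        if not steps:
            return None
        state = dict(event.payload)  # copy so the original event is untouched
        for step in steps:
            state = step(state)
        return state

# Illustrative steps for a new CRM lead (made-up scoring rule).
def score_lead(state: dict) -> dict:
    state["score"] = 80 if state.get("company_size", 0) > 100 else 30
    return state

def notify_owner(state: dict) -> dict:
    state["notified"] = state["score"] >= 50
    return state

router = TriggerRouter()
router.on("crm.new_lead", score_lead, notify_owner)
result = router.dispatch(Event("crm.new_lead", {"company_size": 250}))
print(result)  # {'company_size': 250, 'score': 80, 'notified': True}
```

Events with no registered steps return `None`. A background agent can therefore listen to a broad event stream and act only on the triggers it was configured for.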

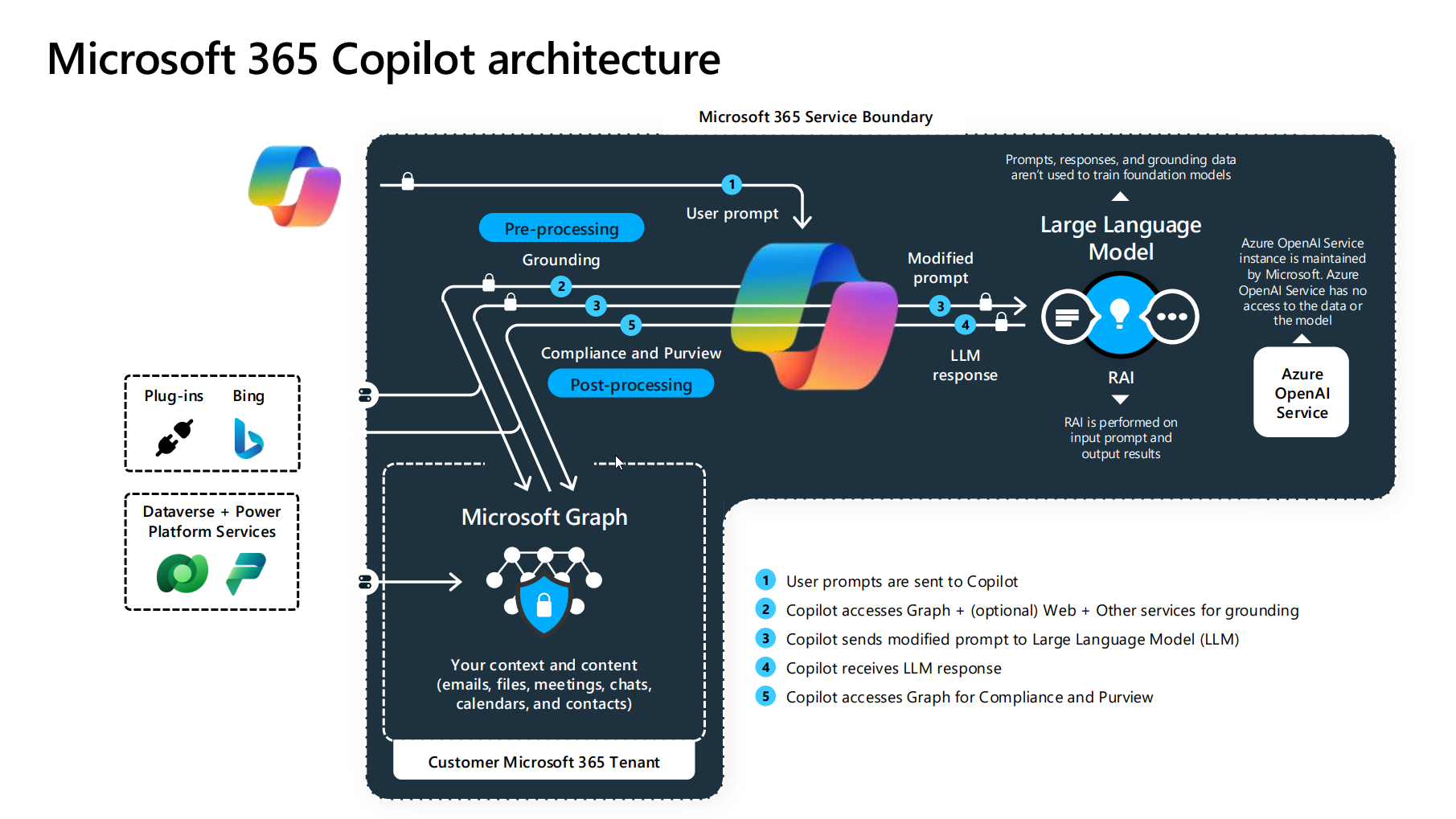

For those of us who spent years in software engineering before moving into reporting, this shift represents a move toward “agentic workflows.” In a standard LLM interaction, the process is linear: prompt, process, response. An agent, however, operates in a loop: it perceives the environment, reasons about the necessary steps, uses a tool to execute a task, and then evaluates the result before moving to the next step. By allowing these loops to run in the background, Microsoft is attempting to turn the AI from a digital assistant into a digital employee.
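The perceive, reason, act, evaluate loop can be sketched in a few lines of Python. This is a toy illustration and not Microsoft's implementation. The `reason` function is a simple rule standing in for what would be a model call in a real agent, and the `process_item` tool and queue-based environment are hypothetical.

```python
from __future__ import annotations
from typing import Callable

# A "tool" is any function the agent may call to change its environment.
Tools = dict[str, Callable[[dict], None]]

def perceive(env: dict) -> dict:
    """Observe: take a snapshot of the current state."""
    return dict(env)

def reason(observation: dict, goal: int) -> str | None:
    """Reason: choose the next tool, or None once the goal is met.
    Stand-in for what would be a model call in a real agent."""
    if observation["processed"] >= goal:
        return None
    return "process_item"

def run_agent(env: dict, tools: Tools, goal: int, max_steps: int = 10) -> list[str]:
    log = []
    for _ in range(max_steps):  # hard cap so an unattended loop cannot spin forever
        before = perceive(env)
        action = reason(before, goal)
        if action is None:
            log.append("done")
            break
        tools[action](env)  # act: call the chosen tool
        after = perceive(env)
        # evaluate: did the action actually move us toward the goal?
        progressed = after["processed"] > before["processed"]
        log.append(f"{action}:{'ok' if progressed else 'retry'}")
    return log

def process_item(env: dict) -> None:
    """Example tool: take one item off the queue, if any."""
    if env["queue"]:
        env["queue"].pop()
        env["processed"] += 1

env = {"queue": ["a", "b", "c"], "processed": 0}
print(run_agent(env, {"process_item": process_item}, goal=2))
# ['process_item:ok', 'process_item:ok', 'done']
```

The `max_steps` cap matters most when the loop runs unattended. If the tool stops making progress (for example, the queue is empty), the agent logs retries and then halts rather than looping indefinitely.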

The Architecture of Autonomy via Copilot Studio

The engine driving this transition is Microsoft Copilot Studio, a low-code tool that allows organizations to build and orchestrate these autonomous agents. These agents are not merely wrappers for a chat interface; they are integrated into the organization’s data layer, allowing them to interact with SharePoint, Outlook, and third-party APIs.

The ability to run 24/7 means these agents can handle asynchronous workloads. For example, an agent tasked with procurement can monitor inventory levels across multiple warehouses and automatically draft purchase orders when stock hits a critical threshold, notifying a human manager only for final approval. This removes the “human-in-the-loop” requirement for every single micro-step, significantly reducing the cognitive load on employees.
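Here is a rough Python sketch of that procurement example. The agent drafts orders autonomously, but every order starts in a pending state and the human manager sees a single summary. The data layout (stock keyed by warehouse and SKU), the `reorder_to` target level, and all function names are assumptions made for illustration. The article does not specify how such an agent sizes its orders.

```python
from dataclasses import dataclass

@dataclass
class PurchaseOrder:
    warehouse: str
    sku: str
    quantity: int
    status: str = "pending_approval"  # nothing is sent until a human approves

def draft_orders(levels: dict[tuple[str, str], int],
                 threshold: int, reorder_to: int) -> list[PurchaseOrder]:
    """Draft a PO for each (warehouse, sku) whose stock is at or below threshold."""
    drafts = []
    for (warehouse, sku), on_hand in sorted(levels.items()):
        if on_hand <= threshold:
            # Assumed policy: order enough to bring stock back up to reorder_to.
            drafts.append(PurchaseOrder(warehouse, sku, reorder_to - on_hand))
    return drafts

def approval_request(drafts: list[PurchaseOrder]) -> str:
    """The single message a human manager sees: one line per drafted order."""
    return "\n".join(f"{po.warehouse}/{po.sku}: order {po.quantity}" for po in drafts)

levels = {("east", "widget"): 12, ("west", "widget"): 85, ("west", "gadget"): 4}
drafts = draft_orders(levels, threshold=15, reorder_to=100)
print(approval_request(drafts))
# east/widget: order 88
# west/gadget: order 96
```

The design keeps the human checkpoint at the end of the chain. Monitoring and drafting happen without intervention, and only the final commitment waits for sign-off.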

Microsoft is also testing advanced capabilities that allow Copilot to navigate user interfaces much as a human would. This “UI-based automation” allows the AI to interact with legacy software that lacks a modern API, effectively bridging the gap between cutting-edge AI and aging enterprise infrastructure. By simulating clicks and reading what appears on the screen, these agents can automate workflows that were previously considered “un-automatable.”

A Strategic Pivot in User Interface Design

While Microsoft is expanding the backend power of its AI, it is simultaneously scaling back the visible presence of Copilot in the Windows 11 user interface. Recent updates have removed dedicated Copilot buttons and references from several native applications, including Notepad. However, this is not a retreat from AI but a refinement of how it is delivered.

The removal of “Copilot” branding from specific app buttons suggests a shift toward “invisible AI.” Instead of requiring users to click a specific AI button to trigger a feature, Microsoft is integrating the underlying AI functionality directly into the app’s existing tools. This reduces UI clutter and moves the experience toward a more organic integration where the AI is a feature of the tool, rather than a separate entity the user must “summon.”

This design philosophy aligns with the move toward background agents. If the AI is performing tasks autonomously in the background, a prominent “Ask Copilot” button becomes less relevant. The value shifts from the interaction (the chat) to the outcome (the completed task).

Comparison of AI Interaction Models

| Feature | Reactive Copilot (Chat) | Autonomous Agents (Background) |
|---|---|---|
| Trigger | User Prompt | Event-based or Scheduled |
| Operation | Foreground/Active | Background/Asynchronous |
| User Role | Driver/Operator | Supervisor/Approver |
| Workflow | Single-turn response | Multi-step iterative loops |

Enterprise Implications and the “Supervisor” Role

The deployment of 24/7 background agents fundamentally changes the nature of white-collar work. The primary skill set for employees is shifting from “execution” to “orchestration.” When AI handles the data entry, monitoring, and initial drafting, the human worker becomes a supervisor who audits the AI’s work and manages the exceptions that the agent cannot handle.

This transition introduces new challenges in governance and security. Allowing an AI agent to operate autonomously in the background requires strict permissioning. If an agent has the authority to send emails or modify database records, the risk of “hallucinations” leading to real-world errors increases. Microsoft has addressed this through “guardrails” within Copilot Studio, allowing admins to set hard limits on what an agent can do without explicit human sign-off.
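The idea of hard limits plus explicit sign-off can be sketched as a small permission gate in Python. This is a hypothetical illustration and not how Copilot Studio's guardrails are actually configured. The action names and the two policy sets are invented. The key choice shown here is default-deny: anything the admin has not explicitly listed is blocked.

```python
from __future__ import annotations
from dataclasses import dataclass, field

# Illustrative policy only: which actions an agent may take on its own.
AUTONOMOUS = {"read_record", "draft_email"}
NEEDS_SIGNOFF = {"send_email", "update_record"}

@dataclass
class Guardrail:
    pending: list[tuple[str, dict]] = field(default_factory=list)

    def request(self, action: str, args: dict) -> str:
        """Decide what happens to an action the agent wants to take."""
        if action in AUTONOMOUS:
            return "allowed"             # low-risk: the agent may proceed
        if action in NEEDS_SIGNOFF:
            self.pending.append((action, args))  # park until a human signs off
            return "awaiting_approval"
        return "blocked"                 # default-deny anything not listed

    def approve_next(self) -> tuple[str, dict] | None:
        """A human approves the oldest pending action (first in, first out)."""
        return self.pending.pop(0) if self.pending else None

guard = Guardrail()
print(guard.request("draft_email", {"to": "cfo@example.com"}))  # allowed
print(guard.request("send_email", {"to": "cfo@example.com"}))   # awaiting_approval
print(guard.request("drop_table", {"name": "orders"}))          # blocked
```

Separating "may draft" from "may send" reflects the risk described above. A hallucinated draft costs a reviewer a few seconds, whereas a hallucinated email that has already been sent cannot be recalled.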

Who is most affected by this change? The impact is most immediate in roles characterized by repetitive data orchestration:

- Sales Operations: Agents can qualify leads and schedule meetings based on calendar availability without manual back-and-forth.

- Human Resources: Agents can handle initial employee onboarding checklists and document verification in the background.

- Supply Chain Management: Agents can track shipments in real-time and automatically trigger alerts or reroute orders based on weather or geopolitical delays.
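Take the sales operations case. Scheduling "based on calendar availability" comes down to a classic interval problem: merge everyone's busy blocks and find the earliest gap long enough for the meeting. The Python sketch below assumes times are expressed as minutes since midnight and that busy blocks are already known. It does not show how an agent would read real calendars, and the function name is hypothetical.

```python
from __future__ import annotations

def first_common_slot(busy_by_person: list[list[tuple[int, int]]],
                      day_start: int, day_end: int,
                      duration: int) -> tuple[int, int] | None:
    """Return the earliest (start, end) window free for everyone, in minutes."""
    # Pool everyone's busy intervals and sort them by start time.
    busy = sorted(iv for person in busy_by_person for iv in person)
    cursor = day_start  # earliest moment not yet known to be busy
    for start, end in busy:
        if start - cursor >= duration:
            break  # the gap before this meeting is long enough
        cursor = max(cursor, end)
    if cursor + duration <= day_end:
        return (cursor, cursor + duration)
    return None  # no window fits inside working hours

alice = [(540, 600), (720, 780)]  # 9:00-10:00, 12:00-13:00
bob = [(600, 660), (800, 900)]    # 10:00-11:00, 13:20-15:00
print(first_common_slot([alice, bob], day_start=540, day_end=1020, duration=60))
# (660, 720), i.e. 11:00-12:00
```

Sorting once and sweeping with a single cursor keeps the check linear after the sort. Overlapping or nested busy blocks are handled by taking the later of the current cursor and each block's end.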

The Path Toward General Agency

The current trajectory suggests that Microsoft is aiming for a state of “general agency” within the enterprise. By combining the 24/7 background execution of Copilot agents with the deeper integration into Windows 11 and Microsoft 365, the goal is to create a seamless layer of intelligence that exists between the user and their data.

This evolution is part of a broader industry trend. As LLMs become more reliable, the focus is shifting from the quality of the text they generate to the quality of the actions they can take. The “agentic” era is defined by the ability to execute a goal, such as “Organize the quarterly board meeting and prepare all briefing docs,” rather than simply answering a question about how to do it.

The next confirmed checkpoint for this technology will be the continued rollout of updated Copilot Studio capabilities and the integration of more autonomous triggers into the standard Microsoft 365 license tiers. As these tools move from preview to general availability, the industry will be watching closely to see how organizations manage the balance between autonomous efficiency and human oversight.

Do you think autonomous AI agents will make your workday easier, or do they add too much risk to the workflow? Share your thoughts in the comments below.