For years, the conversation around artificial intelligence has centered almost exclusively on the “brains” of the operation: the GPUs. We talk about TFLOPS, parameter counts, and the raw compute power of the Blackwell architecture. But as AI models scale from billions to trillions of parameters, the industry is hitting a physical wall. The problem isn’t just how fast a chip can think, but how fast those chips can talk to one another.

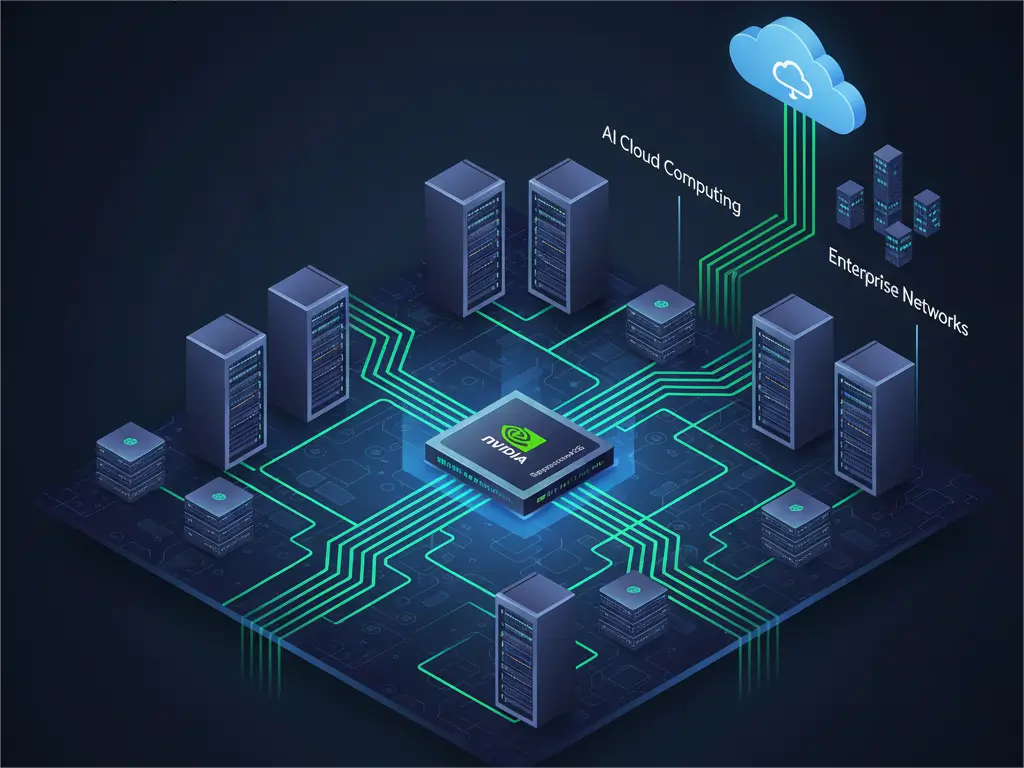

In the world of gigascale AI, the network is the bottleneck. When thousands of GPUs must act as a single, synchronized machine to train a frontier Large Language Model (LLM), a single network hiccup or a congested data path can bring the entire operation to a grinding halt. This is the challenge NVIDIA is addressing with Spectrum-X, an AI-native Ethernet fabric designed specifically to handle the erratic, high-burst traffic patterns of AI workloads.

The latest evolution in this infrastructure is the introduction of Multipath Reliable Connection (MRC), a new RDMA transport protocol. Developed in collaboration with industry heavyweights including Microsoft, OpenAI, AMD, Broadcom, and Intel, MRC is designed to move AI networking away from fragile, single-path connections toward a resilient, grid-like architecture. By allowing data to flow across multiple paths simultaneously, NVIDIA and its partners are attempting to standardize the “plumbing” of the world’s largest AI factories.

For those of us who spent years in software engineering, this shift is familiar. It is the transition from a fragile, linear system to a distributed one. In a traditional setup, if a network path fails or becomes congested, the system often stalls while it searches for a workaround. MRC eliminates this hesitation, ensuring that the massive capital investment in GPU clusters isn’t wasted on “idle time” while the network catches up.

Beyond the Single Lane: How MRC Solves Congestion

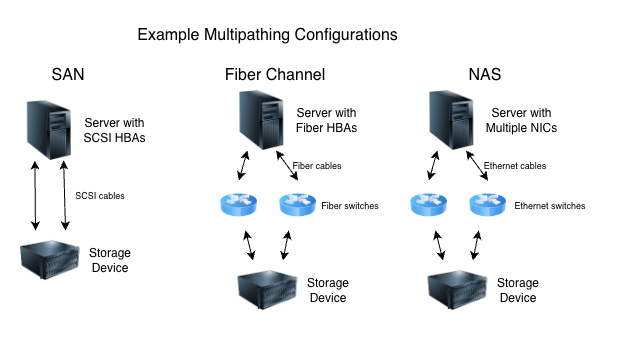

To understand why MRC is necessary, it helps to use a traffic analogy. Traditional networking often resembles a single-lane highway connecting two cities. If there is an accident or a road closure, every car behind it stops, regardless of how fast the engines are. In an AI training cluster, this “accident” is network congestion or a failed link, and the “cars” are the data packets moving between GPUs.

MRC replaces that single highway with a sophisticated street grid. It enables a single RDMA (Remote Direct Memory Access) connection to distribute traffic across multiple available network paths. If one path becomes overloaded, the system reroutes data in real time, keeping bandwidth consistent. This load balancing is critical because AI training is a synchronous process: the entire cluster can only move as fast as its slowest link.
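To make the street-grid idea concrete, here is a minimal toy model in Python. It is not MRC's actual algorithm, which the article does not describe in detail. The function names, the greedy "earliest finish" rule, and the bandwidth figures are all assumptions chosen for illustration.

```python
def spray_packets(num_packets, packet_bytes, path_gbps):
    """Greedy per-packet spraying over several paths (illustrative only).

    Each packet goes to the path that would finish sending it earliest.
    A bandwidth of 0 marks a failed path, which is simply skipped.
    Returns (completion_time_us, packets_per_path).
    """
    usable = [i for i, gbps in enumerate(path_gbps) if gbps > 0]
    if not usable:
        raise ValueError("no usable path")

    packet_bits = packet_bytes * 8
    # 1 Gbps carries 1,000 bits per microsecond.
    send_time_us = {i: packet_bits / (path_gbps[i] * 1_000) for i in usable}
    busy_until_us = {i: 0.0 for i in usable}
    counts = [0] * len(path_gbps)

    for _ in range(num_packets):
        # Pick the path whose queue would drain this packet soonest.
        best = min(usable, key=lambda i: busy_until_us[i] + send_time_us[i])
        busy_until_us[best] += send_time_us[best]
        counts[best] += 1

    return max(busy_until_us.values()), counts


def single_path_time_us(num_packets, packet_bytes, gbps):
    """Time to push every packet down one path of the given bandwidth."""
    return num_packets * packet_bytes * 8 / (gbps * 1_000)


# Hypothetical fabric: four paths, the third one congested down to 100 Gbps.
paths_gbps = [400, 400, 100, 400]
multipath_us, per_path = spray_packets(1000, 4096, paths_gbps)
print("multipath completion (us):", round(multipath_us, 2))
print("packets per path:", per_path)
print("stuck on congested path (us):", single_path_time_us(1000, 4096, 100))
```

In this sketch the congested path still carries some traffic, just less of it, so the transfer finishes close to the time the combined bandwidth allows instead of being pinned to whichever single path the connection happened to land on.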

The technical impact is most visible in GPU utilization. By avoiding “hot spots” in the network, MRC helps every GPU in a cluster receive the data it needs without waiting. The protocol also introduces intelligent retransmission. When data loss occurs, the system recovers the specific missing packets rapidly and precisely, rather than triggering a massive, inefficient reset of the connection.
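The difference between recovering only the missing packets and replaying a large chunk of the stream can be sketched in a few lines of Python. The go-back-N baseline below is picked here as a familiar comparison point, not something the article specifies, and all function names are hypothetical.

```python
def missing_sequences(received, total_packets):
    """Receiver side: which sequence numbers never arrived?

    Arrival order does not matter, which is useful when packets
    travel over different paths and show up out of order.
    """
    seen = set(received)
    return [seq for seq in range(total_packets) if seq not in seen]


def selective_resend(total_packets, lost):
    """Selective recovery: resend only the packets that went missing."""
    return sorted(seq for seq in set(lost) if 0 <= seq < total_packets)


def go_back_n_resend(total_packets, lost):
    """Baseline: resend the first lost packet and everything sent after it."""
    valid = {seq for seq in lost if 0 <= seq < total_packets}
    if not valid:
        return []
    return list(range(min(valid), total_packets))


# Hypothetical transfer: 1,000 packets, two of them dropped.
lost = {10, 500}
print("selective resends:", len(selective_resend(1000, lost)))
print("go-back-N resends:", len(go_back_n_resend(1000, lost)))
```

With two drops out of a thousand packets, the selective approach resends two packets while the baseline replays nearly the whole transfer, which is the kind of waste the article's "intelligent retransmission" is meant to avoid.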

| Feature | Traditional RDMA | MRC (Spectrum-X) |
|---|---|---|
| Traffic Routing | Single-path (Linear) | Multipath (Grid-based) |
| Congestion Handling | Reactive/Stalling | Dynamic Load Balancing |
| Failure Recovery | Software-level timeout | Hardware-speed bypass (Microseconds) |
| GPU Efficiency | Prone to idle time | High utilization via consistent bandwidth |

The Architecture of the AI Factory

This technology isn’t just theoretical; it is already powering some of the most ambitious compute projects on the planet. Microsoft’s “Fairwater” and Oracle Cloud Infrastructure’s (OCI) “Abilene” data centers are prime examples of “AI factories”—facilities purpose-built for the training of frontier LLMs. Both rely on MRC and Spectrum-X to maintain the stability required for gigascale operations.

OpenAI has also integrated these systems into its newest deployments. Sachin Katti, head of industrial compute at OpenAI, noted that deploying MRC within the Blackwell generation was a success, allowing the team to avoid typical network-related slowdowns that often plague frontier training runs. For OpenAI, the goal is “efficiency at scale,” meaning the ability to add more GPUs to a project without seeing a diminishing return in performance due to network overhead.
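One rough way to reason about “efficiency at scale” is to treat each training step as compute time plus the time spent waiting on the slowest network link. The Python sketch below is a back-of-the-envelope model, not a figure from OpenAI or NVIDIA. It assumes compute and communication do not overlap, and every number in it is hypothetical.

```python
def step_time_ms(compute_ms, per_link_network_ms):
    """A synchronous step finishes only after the slowest link is done."""
    return compute_ms + max(per_link_network_ms)


def step_efficiency(compute_ms, per_link_network_ms):
    """Fraction of the step that GPUs spend on useful compute."""
    return compute_ms / step_time_ms(compute_ms, per_link_network_ms)


# Hypothetical step: 100 ms of compute, three links with differing delays.
balanced = [5, 5, 5]       # traffic spread evenly
one_hot_spot = [5, 5, 40]  # a single congested link

print("balanced efficiency:", round(step_efficiency(100, balanced), 3))
print("hot-spot efficiency:", round(step_efficiency(100, one_hot_spot), 3))
```

Under these assumptions a single slow link drags down every GPU in the step, which is why smoothing out hot spots matters more as the cluster grows.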

A key part of this stability is the use of multiplanar network designs. Instead of one giant network, OpenAI and others deploy multiple independent “planes” of fabric. The NVIDIA Spectrum-X Multiplane capability allows for hardware-accelerated load balancing across these planes. This architecture ensures that even if an entire section of the network fails, alternate communication paths exist, keeping latencies predictably low across hundreds of thousands of GPUs.
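The multiplane idea can be illustrated with a small Python model. Real Spectrum-X hardware performs this balancing in silicon, and the article does not say how it chooses a plane. The `MultiplaneFabric` class and its round-robin policy are assumptions made purely for illustration.

```python
class MultiplaneFabric:
    """Toy model of several independent network planes (hypothetical)."""

    def __init__(self, num_planes):
        if num_planes < 1:
            raise ValueError("need at least one plane")
        self.healthy = [True] * num_planes
        self.sent = [0] * num_planes  # packets sent per plane
        self._next = 0                # where the round-robin scan starts

    def fail_plane(self, plane):
        self.healthy[plane] = False

    def restore_plane(self, plane):
        self.healthy[plane] = True

    def send(self):
        """Send one packet on the next healthy plane and return its index."""
        n = len(self.healthy)
        for offset in range(n):
            plane = (self._next + offset) % n
            if self.healthy[plane]:
                self._next = (plane + 1) % n
                self.sent[plane] += 1
                return plane
        raise RuntimeError("all planes are down")


fabric = MultiplaneFabric(4)
for _ in range(8):
    fabric.send()
fabric.fail_plane(1)  # simulate losing an entire plane
after_failure = [fabric.send() for _ in range(9)]
print("planes used after failure:", after_failure)
print("packets per plane:", fabric.sent)
```

Traffic keeps flowing over the surviving planes without any special recovery step, which mirrors the article's point that alternate communication paths exist even when a whole section of the network fails.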

An Open Standard for a Competitive Ecosystem

Perhaps the most significant move in this announcement is NVIDIA’s decision to release MRC as an open specification through the Open Compute Project (OCP). In an industry often criticized for “vendor lock-in,” moving toward an open standard is a strategic play to ensure that Spectrum-X becomes the industry default for AI Ethernet.

By collaborating with competitors like Intel, AMD, and Broadcom, NVIDIA is acknowledging that the scale of AI factories has surpassed the capacity of any single company’s proprietary stack. An open protocol allows for a more composable infrastructure, where different hardware components can interoperate as long as they follow the same “rules of the road.”

Spectrum-X provides the flexibility to run multiple transport models—including Adaptive RDMA and MRC—natively across NVIDIA ConnectX SuperNICs and Spectrum-X switches. This allows data center architects to choose the specific transport protocol that best fits their particular workload, whether they are focusing on massive training runs or high-throughput inference.

The move toward an AI-native Ethernet fabric signals a broader shift in the data center. For years, InfiniBand was the gold standard for high-performance computing (HPC) due to its low latency. However, Ethernet’s ubiquity and scalability, combined with the intelligence MRC now provides, make it the more viable path for the “gigascale” era of AI.

The next critical milestone for the industry will be the widespread adoption of these OCP specifications across other cloud service providers, which will determine whether MRC becomes the universal language of AI networking. Official technical whitepapers and datasheets for Spectrum-X are available via NVIDIA’s enterprise portal for engineers seeking implementation details.
