For decades, the mantra of mission-critical software development has been “measure twice, cut once.” In environments where software governs complex hardware—think aerospace, medical devices, or industrial automation—the cost of a bug isn’t just a crashed app; it is a potential systemic failure. This inherent caution creates a grueling development lifecycle, where the distance between a design concept and a tested, deployable product is measured in months of manual coding and exhaustive verification.

Simplex is attempting to collapse that timeline. By integrating ChatGPT Enterprise and OpenAI’s Codex, the engineering firm is fundamentally rethinking how it approaches the software development lifecycle (SDLC). The goal isn’t to replace the engineer, but to automate the “boilerplate” of innovation—the repetitive, time-consuming tasks of drafting initial code, documenting architecture, and writing test scripts that often stall momentum.

As a former software engineer, I’ve seen firsthand how the “build” phase of a project often becomes a bottleneck. Developers spend a disproportionate amount of time wrestling with syntax or hunting through legacy documentation rather than solving the actual engineering problem. Simplex’s shift toward AI-driven workflows suggests a move toward a “copilot” model of engineering, where the AI handles the first draft and the human expert provides the critical verification.

Accelerating the Design-Build-Test Cycle

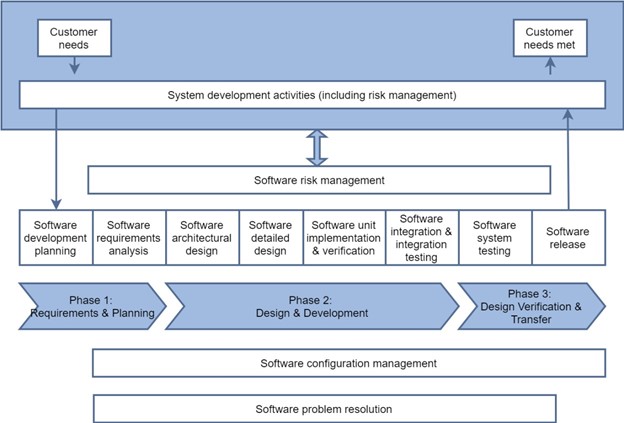

The integration of OpenAI’s tools at Simplex targets three specific pressure points in the development pipeline: design, construction, and testing. In the design phase, ChatGPT Enterprise serves as a high-level architect. Engineers can use the tool to brainstorm system requirements, draft technical specifications, and map out logic flows before a single line of code is written. This reduces the “blank page” syndrome that often slows the early stages of a project.

Once the design is solidified, Codex takes over. Codex is OpenAI's coding-focused model line, an early version of which originally powered GitHub Copilot, and it specializes in translating natural language into code. For Simplex, this means the ability to generate skeletal code structures or translate complex mathematical formulas into executable functions. This is particularly valuable when dealing with legacy systems, where AI can help interpret older codebases and suggest modernizations without requiring an engineer to manually reverse-engineer every line.
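To make the formula-to-function step concrete, here is a minimal sketch of the kind of translation described above. The formula (a first-order low-pass filter, `y[n] = alpha * x[n] + (1 - alpha) * y[n-1]`) and the name `low_pass` are illustrative assumptions, not something taken from Simplex's codebase.

```python
def low_pass(samples, alpha):
    """Apply y[n] = alpha * x[n] + (1 - alpha) * y[n-1].

    The first output is seeded with the first input sample.
    Both the formula and this function name are hypothetical examples.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in the interval (0, 1]")

    filtered = []
    previous = None
    for x in samples:
        # Seed with the first sample, then blend each new sample with history.
        previous = x if previous is None else alpha * x + (1.0 - alpha) * previous
        filtered.append(previous)
    return filtered
```

Even in a draft this small, the reviewer still has to confirm the seeding choice and the valid range of `alpha` against the actual specification, which is exactly the verification work the engineer keeps.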

The most significant gains, however, are appearing in the testing phase. Writing test cases has traditionally been among the most tedious parts of the process, yet it is also among the most vital for safety-critical systems. By leveraging AI to generate comprehensive test suites based on the initial design requirements, Simplex can identify edge cases and vulnerabilities far earlier in the cycle than was previously possible.
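As a rough illustration of requirement-driven test generation, the sketch below starts from a hypothetical requirement ("a valve command is clamped to 0 to 100 percent, and non-finite inputs are rejected") and lists the boundary cases an AI-drafted suite might propose. The requirement, `clamp_valve_command`, and the case tables are all assumptions made for the example.

```python
import math


def clamp_valve_command(percent):
    """Hypothetical requirement: clamp to [0, 100]; reject NaN and infinities."""
    if not math.isfinite(percent):
        raise ValueError("command must be a finite number")
    return min(100.0, max(0.0, float(percent)))


# (input, expected output) pairs sitting on and just outside each boundary.
EDGE_CASES = [
    (-0.001, 0.0),
    (0.0, 0.0),
    (50.0, 50.0),
    (100.0, 100.0),
    (100.001, 100.0),
]

# Inputs the requirement says must be rejected outright.
REJECTED_INPUTS = [float("nan"), float("inf"), float("-inf")]


def run_edge_case_suite():
    """Return a list of failure descriptions; an empty list means all passed."""
    failures = []
    for value, expected in EDGE_CASES:
        actual = clamp_valve_command(value)
        if actual != expected:
            failures.append(f"{value!r}: expected {expected!r}, got {actual!r}")
    for value in REJECTED_INPUTS:
        try:
            clamp_valve_command(value)
        except ValueError:
            continue
        failures.append(f"{value!r}: expected ValueError")
    return failures
```

The value of the generated table is coverage of the boundaries; whether those boundaries match the real requirement remains a human judgment.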

| Phase | Traditional Approach | AI-Augmented Approach |
|---|---|---|
| Design | Manual drafting of specs and logic maps. | Rapid prototyping via LLM-assisted architecture. |
| Build | Manual coding and legacy code translation. | AI-generated boilerplate and syntax assistance. |
| Testing | Hand-written test scripts and manual QA. | Automated test case generation and edge-case detection. |

The Tension Between Speed and Safety

Scaling AI-driven workflows in a high-stakes engineering environment introduces a primary conflict: the trade-off between velocity and veracity. Large Language Models (LLMs) are probabilistic, not deterministic. They predict the next likely token in a sequence, which means they can “hallucinate” code that looks correct but contains subtle, dangerous logic errors.

To mitigate this, Simplex employs a “human-in-the-loop” framework. The AI provides the acceleration, but the human engineer remains the sole authority for validation. This creates a new role for the senior developer: moving from a “writer” of code to an “editor” and “auditor” of code. The expertise shifts from knowing exactly how to write a specific function to knowing exactly how to verify that the AI-generated function meets rigorous safety standards.
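One way to picture a human-in-the-loop gate is as a merge rule that AI-drafted changes cannot satisfy on their own. The sketch below is an assumption about how such a policy could be encoded, not a description of Simplex's actual process; `ChangeRequest`, `may_merge`, and the reviewer names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    """An AI-drafted change awaiting human validation (hypothetical model)."""
    requested_by: str
    tests_passed: bool
    approvals: set = field(default_factory=set)


def may_merge(change, qualified_reviewers):
    """Allow a merge only if tests pass AND an independent, qualified human approved.

    Approvals from the requester or from unqualified accounts do not count.
    """
    independent = (change.approvals & qualified_reviewers) - {change.requested_by}
    return change.tests_passed and len(independent) > 0
```

For example, with `qualified_reviewers = {"alice", "bob"}`, a change requested by `alice` and approved only by `alice` is blocked, while the same change approved by `bob` with passing tests is allowed.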

The use of ChatGPT Enterprise also provides a critical layer of security that consumer-grade AI lacks. Enterprise-grade deployments ensure that the proprietary code and sensitive intellectual property Simplex develops are not used to train OpenAI's public models. In the world of industrial engineering, where trade secrets are the primary currency, this data isolation is a non-negotiable requirement.

The Broader Shift in Engineering Culture

The adoption of Codex and ChatGPT at Simplex is a microcosm of a larger shift occurring across the tech industry. We are moving away from “manual labor” coding toward “intent-based” development. In this new paradigm, the value of an engineer is no longer measured by their fluency in a specific programming language, but by their ability to clearly define a problem and rigorously verify the solution.

This transition affects stakeholders across the organization. Project managers can now provide more accurate timelines because the "build" phase is less volatile. Junior engineers can onboard faster by using AI to explain complex sections of the codebase. Most importantly, the company can take on more complex projects without a proportional increase in headcount or overhead.

Despite these efficiencies, the “unknowns” remain. The industry is still grappling with the long-term implications of AI-generated code—specifically regarding technical debt. If AI makes it easier to write code, there is a risk that companies will produce *more* code than they can realistically maintain, leading to a future “maintenance crisis” where the volume of AI-generated legacy code becomes overwhelming.

For now, the focus remains on the immediate utility of these tools. Simplex has demonstrated that when AI is treated as a sophisticated power tool rather than a replacement for the craftsman, the result is a leaner, faster, and more agile development process.

As OpenAI continues to refine its models and integrate more specialized coding capabilities, the next major checkpoint for Simplex and similar firms will be the integration of autonomous agentic workflows—systems that can not only write code but also run it, find the errors, and fix them independently before the human auditor ever sees the first draft.
